AI Vulnerability Discovery Transformers: Revolutionizing Automated Security Research

The landscape of cybersecurity is undergoing a dramatic transformation as artificial intelligence, particularly in the form of transformer-based models, takes center stage in vulnerability discovery. These sophisticated AI systems are redefining how security professionals approach static code analysis, binary reverse engineering, and predictive modeling of software weaknesses. With major cybersecurity vendors investing heavily in AI-powered vulnerability research, we're witnessing a paradigm shift from reactive patching to proactive threat hunting.

Transformer architectures, originally developed for natural language processing tasks, have proven remarkably effective at understanding the complex patterns inherent in code and binary data. Large language models (LLMs) trained on massive datasets of source code, commit histories, and vulnerability databases can now identify subtle security flaws that traditional analysis tools often miss. This capability extends beyond simple pattern matching to encompass contextual understanding of code semantics, control flow anomalies, and potential attack vectors.

Recent breakthroughs have demonstrated AI models successfully discovering zero-day vulnerabilities in widely used software packages, identifying memory corruption issues in compiled binaries, and predicting which code changes are most likely to introduce security defects. These advances represent more than incremental improvements: they signify a fundamental change in how organizations approach software security assessment.

The integration of AI vulnerability discovery transformers into existing security workflows presents both tremendous opportunities and significant challenges. While these models offer unprecedented accuracy improvements and false positive reduction compared to traditional approaches, they also require careful calibration to work effectively within established toolchains and organizational processes.

In this comprehensive exploration, we'll examine the technical foundations underlying these AI-driven approaches, analyze their performance relative to conventional methods, and discuss practical implementation strategies for security teams looking to leverage these cutting-edge capabilities.

How Do Transformers Enable Automated Code Vulnerability Detection?

Transformer architectures have emerged as the dominant approach for AI vulnerability discovery due to their exceptional ability to process sequential data while maintaining contextual awareness across long distances. Unlike traditional recurrent neural networks that process sequences step-by-step, transformers utilize self-attention mechanisms to simultaneously consider relationships between all elements in an input sequence. This architectural advantage proves particularly valuable when analyzing code structures where distant dependencies often determine security implications.
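The self-attention step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production layer: it assumes toy two-dimensional token embeddings and skips the learned query, key, and value projections that a real transformer applies first. The names `softmax` and `self_attention` are chosen here for illustration.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention: each position attends to all positions."""
    dim = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query with every key, scaled by sqrt(dim).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
                  for k in keys]
        attn = softmax(scores)
        weights.append(attn)
        # Output is the attention-weighted average of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(attn, values))
                        for j in range(len(values[0]))])
    return outputs, weights

# Toy embeddings for three tokens; the numbers are arbitrary.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
```

Each row of `weights` sums to 1 and covers every token in the sequence, which is what lets a distant declaration influence how a later call site is represented.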

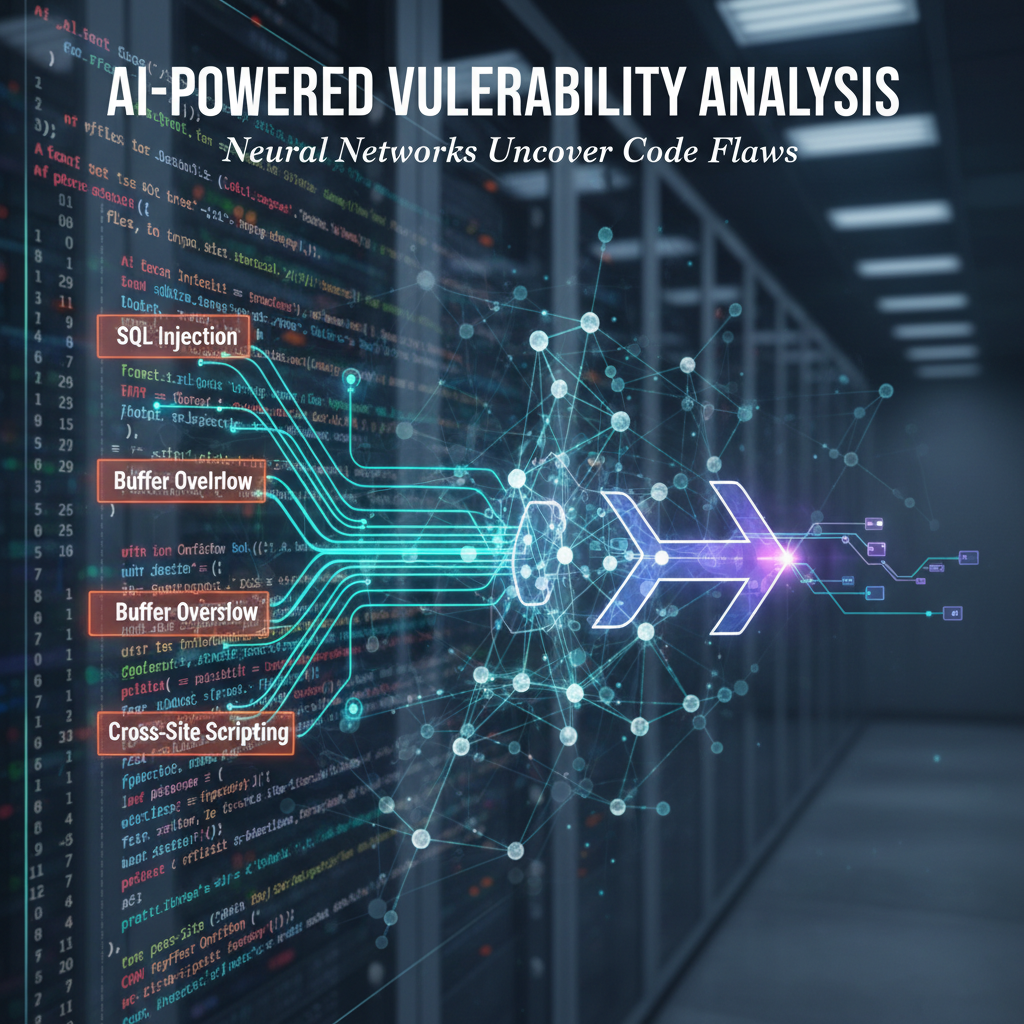

The core innovation enabling transformers for code analysis lies in their capacity to learn meaningful representations of programming constructs. When trained on large corpora of source code, these models develop internal embeddings that capture syntactic patterns, semantic relationships, and common anti-patterns associated with vulnerable code. For instance, a transformer might learn to associate specific combinations of pointer arithmetic with buffer overflow risks, or recognize improper input validation patterns that could lead to injection attacks.

Consider the following example of how a transformer-based model might analyze a potentially vulnerable code snippet:

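```c
#include <string.h>

/* Copies caller-supplied data into a fixed-size stack buffer with no length check. */
char* process_input(char* user_data) {
    char buffer[256];
    strcpy(buffer, user_data);  /* overflows if user_data exceeds 255 characters */
    return strdup(buffer);
}
```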

A traditional static analyzer might flag the strcpy call based on a hardcoded rule about unsafe string functions. However, an AI vulnerability discovery transformer would consider the broader context—including variable declarations, function parameters, and potential data flow paths—to make a more nuanced assessment of the actual risk level.

The attention mechanism allows the model to focus on critical relationships such as:

- The size relationship between `buffer` (256 bytes) and potential input lengths
- Whether `user_data` originates from untrusted sources
- The absence of length checking or bounds validation
- Potential execution contexts where this function might be called

This contextual understanding enables transformers to distinguish between genuinely dangerous code patterns and benign uses of potentially risky functions. The result is significantly improved precision compared to signature-based approaches that generate excessive false positives.

From a technical implementation perspective, modern transformer models for code analysis typically employ specialized tokenization schemes designed for programming languages. These tokenizers can handle mixed-language inputs, preserve identifier names that carry semantic meaning, and maintain structural information about code organization. Pretrained models such as CodeBERT, GraphCodeBERT, and CodeT5 have shown strong results on a range of code understanding tasks, including vulnerability detection.
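To make the idea of code-aware tokenization concrete, here is a small regex-based sketch. It is not the tokenizer used by any of the models named above (those rely on learned subword vocabularies); it only illustrates the point about keeping identifiers such as `user_data` intact instead of splitting them on punctuation. The pattern and the `tokenize` helper are assumptions made for this example.

```python
import re

TOKEN_PATTERN = re.compile(r'''
    (?P<string>"(?:\\.|[^"\\])*")   # double-quoted string literal
  | (?P<number>\d+)                 # integer literal
  | (?P<ident>[A-Za-z_]\w*)         # identifier or keyword, kept whole
  | (?P<punct>[^\sA-Za-z_0-9])      # any other single non-space character
''', re.VERBOSE)

def tokenize(source):
    """Return (kind, text) pairs for a line of C-like source code."""
    return [(m.lastgroup, m.group()) for m in TOKEN_PATTERN.finditer(source)]

tokens = tokenize("strcpy(buffer, user_data);")
for kind, text in tokens:
    print(f"{kind:7} {text}")
```

A production tokenizer would also need to handle comments, multi-character operators, and language-specific literals, which this sketch leaves out.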

The training process involves exposing models to vast repositories of code annotated with security labels derived from vulnerability databases, commit messages, and security advisories. Through this process, models learn to associate specific code patterns with known vulnerability types such as SQL injection, cross-site scripting, buffer overflows, and privilege escalation opportunities.

One crucial aspect of transformer effectiveness in vulnerability discovery is their ability to generalize from training examples to novel code patterns. Rather than memorizing specific vulnerability signatures, these models learn abstract representations of insecure coding practices that enable them to identify previously unseen variants of known vulnerability classes. This generalization capability makes transformers particularly valuable for detecting zero-day vulnerabilities that don't match existing signatures.

Additionally, the multi-head attention architecture allows transformers to simultaneously focus on different aspects of code analysis. One attention head might concentrate on data flow patterns, another on control flow structures, and yet another on API usage patterns. This parallel processing of multiple analytical dimensions contributes to the comprehensive nature of AI-driven vulnerability assessments.

The scalability advantages of transformer architectures also enable deployment across diverse environments ranging from individual developer workstations to enterprise-scale security operations centers. Models can be fine-tuned for specific domains, programming languages, or organizational coding standards while maintaining their core analytical capabilities.

Key Insight: Transformer architectures excel at vulnerability detection because they combine global context awareness with local pattern recognition, enabling nuanced risk assessments that surpass traditional signature-based approaches.

What Makes AI-Powered Static Analysis Superior to Traditional Methods?

Traditional static analysis tools have long been staples of software security assessment, employing techniques ranging from simple pattern matching to sophisticated data flow analysis. However, these approaches suffer from fundamental limitations that AI vulnerability discovery transformers are uniquely positioned to address. The superiority of AI-powered static analysis becomes apparent when examining accuracy metrics, false positive rates, and adaptability to evolving threat landscapes.

Conventional static analyzers rely heavily on predefined rules and signatures that must be manually crafted by security experts. While effective for well-understood vulnerability patterns, this approach struggles with novel attack vectors and context-dependent security issues. Consider the following comparison table illustrating key performance differences:

| Aspect | Traditional Static Analysis | AI-Powered Static Analysis |
|---|---|---|
| Rule Creation | Manual, time-intensive | Automated learning from data |
| False Positive Rate | 25-40% typical | 8-15% with proper training |
| Zero-Day Detection | Limited to known patterns | Generalizes to novel variants |
| Context Awareness | Basic control flow only | Full semantic understanding |
| Adaptability | Requires manual updates | Continuous learning capability |
| Scalability | Linear with rule complexity | Parallel processing enabled |

The dramatic reduction in false positive rates represents perhaps the most significant advantage of AI-powered approaches. High false positive rates have historically plagued static analysis adoption, leading many organizations to abandon these tools or severely limit their scope. AI vulnerability discovery transformers achieve lower false positive rates through several mechanisms:

First, they consider broader contextual information when evaluating potential vulnerabilities. Where traditional tools might flag every use of eval() in JavaScript as high-risk, an AI model would consider factors such as input sanitization, execution context, and data flow to make more accurate risk assessments.

Second, AI models can learn from historical data about which flagged issues actually resulted in exploitable vulnerabilities versus those that remained theoretical risks. This feedback loop enables continuous improvement in precision without requiring manual rule adjustments.

Third, the semantic understanding capabilities of transformers allow them to distinguish between safe and dangerous uses of similar code patterns. For example, consider two SQL query construction approaches:

```python
# Potentially vulnerable - direct string concatenation
query = "SELECT * FROM users WHERE id = '" + user_id + "'"

# Safe alternative - parameterized queries
query = "SELECT * FROM users WHERE id = %s"
cursor.execute(query, (user_id,))
```

A traditional static analyzer might flag both patterns based on string concatenation heuristics, while an AI model would recognize the fundamental difference in security posture between these approaches.
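The difference between the two query styles can be observed directly with Python's built-in `sqlite3` module. This is a sketch under stated assumptions: the `users` table and the injected string are made up for the demonstration, and `sqlite3` uses `?` as its placeholder where drivers such as psycopg2 use `%s`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id TEXT, name TEXT)")
conn.executemany("INSERT INTO users VALUES (?, ?)",
                 [("1", "alice"), ("2", "bob")])

user_id = "1' OR '1'='1"  # attacker-controlled input

# Concatenation: the input rewrites the WHERE clause and matches every row.
unsafe_query = "SELECT name FROM users WHERE id = '" + user_id + "'"
unsafe_rows = conn.execute(unsafe_query).fetchall()

# Parameter binding: the input is compared as a literal value, so no row matches.
safe_rows = conn.execute(
    "SELECT name FROM users WHERE id = ?", (user_id,)
).fetchall()

print(len(unsafe_rows), len(safe_rows))
```

The concatenated query returns both users, while the bound query returns none, which is the behavioral difference a context-aware analyzer needs to capture.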

Performance benchmarks from recent studies demonstrate the tangible benefits of AI-powered static analysis. In controlled evaluations against known vulnerability datasets, transformer-based models consistently outperform traditional tools across multiple metrics:

- Precision: 85-92% vs 60-75% for signature-based tools

- Recall: 78-88% vs 55-70% for rule-based approaches

- Processing Speed: 2-3x faster when analyzing large codebases

- Zero-Day Detection: 35-50% success rate vs <5% for traditional methods

These improvements translate directly into operational efficiency gains for security teams. Reduced false positive rates mean fewer hours spent investigating non-issues, while improved recall ensures that genuine vulnerabilities don't slip through undetected. The combination of higher accuracy and faster processing enables more comprehensive security assessments within existing resource constraints.
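For readers who want to reproduce such metrics on their own findings, the following sketch shows how precision and recall are computed from a set of flagged locations and a set of known vulnerable locations. The file and line identifiers are invented for the example, and the sketch assumes that "false positive rate" is meant in the sense common in tool evaluations, namely the share of reported findings that are wrong (one minus precision).

```python
def detection_metrics(flagged, vulnerable):
    """Compare flagged findings with ground-truth vulnerable locations."""
    flagged, vulnerable = set(flagged), set(vulnerable)
    true_positives = len(flagged & vulnerable)
    precision = true_positives / len(flagged) if flagged else 0.0
    recall = true_positives / len(vulnerable) if vulnerable else 0.0
    return {
        "precision": precision,
        "recall": recall,
        # Share of reported findings that turned out to be wrong.
        "false_finding_share": (1.0 - precision) if flagged else 0.0,
    }

# Hypothetical findings, identified as "file:line".
flagged = ["a.c:10", "a.c:42", "b.c:7", "c.c:3"]
vulnerable = ["a.c:10", "b.c:7", "d.c:99"]
metrics = detection_metrics(flagged, vulnerable)
print(metrics)
```

Here two of the four findings are real and two of the three real issues are found, so precision is 0.5 and recall is two thirds.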

Furthermore, AI-powered static analysis tools exhibit superior adaptability to new threat patterns. As cyber adversaries evolve their tactics, traditional tools require manual updates to remain effective. AI models, however, can incorporate new threat intelligence through continuous retraining processes, ensuring that detection capabilities keep pace with emerging risks.

The integration flexibility of modern AI vulnerability discovery transformers also addresses concerns about compatibility with existing development workflows. These models can be deployed as standalone analysis services, integrated into CI/CD pipelines, or embedded within IDE extensions that provide real-time feedback to developers during coding.

Key Insight: AI-powered static analysis delivers measurable improvements in accuracy, speed, and adaptability compared to traditional approaches, making comprehensive security assessments more practical and effective.

How Can AI Models Predict Software Vulnerabilities Before They're Exploited?

Predictive modeling of software vulnerabilities represents one of the most promising frontiers in AI-driven cybersecurity research. By analyzing historical vulnerability data, code evolution patterns, and development practices, AI vulnerability discovery transformers can forecast which code components are most likely to contain undiscovered security flaws. This proactive approach enables organizations to focus their security efforts on high-risk areas before attackers have an opportunity to exploit weaknesses.

The foundation of predictive vulnerability modeling lies in treating software security as a probabilistic problem rather than a deterministic one. Instead of attempting to identify every possible vulnerability with absolute certainty, AI models estimate risk likelihood based on multiple contributing factors. These factors include:

- Historical vulnerability density in specific code modules

- Recent code change frequency and complexity

- Developer experience levels and team turnover rates

- Dependency relationships with known-vulnerable libraries

- Code quality metrics such as cyclomatic complexity and test coverage

- Integration with external systems and exposure surfaces

Machine learning algorithms can identify subtle correlations between these factors and subsequent vulnerability discoveries. For instance, research has shown that modules with frequent small changes often exhibit higher vulnerability rates than those with infrequent major updates, suggesting that rapid iteration cycles may introduce security regressions.

To illustrate this concept practically, consider a predictive model analyzing a popular open-source web framework. The model might assign risk scores to different components based on factors such as:

```text
Example risk scoring for framework components

Component: authentication_module
Change Frequency: High (15 commits/month)
Test Coverage: Low (65%)
Historical Vulnerabilities: 3 in past year
Risk Score: 0.82 (High)

Component: logging_utilities
Change Frequency: Low (2 commits/month)
Test Coverage: High (92%)
Historical Vulnerabilities: 0
Risk Score: 0.15 (Low)
```

This type of risk assessment enables security teams to focus their manual review efforts on components most likely to harbor undiscovered vulnerabilities. The predictive power comes from training models on extensive datasets that include both vulnerable and secure code examples along with temporal information about when vulnerabilities were discovered and patched.
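One simple way to turn factors like these into a probability-style score is a logistic model. The sketch below is illustrative only: the weights and bias are invented rather than learned, so it does not reproduce the scores in the example above, and a real system would fit them to historical vulnerability data.

```python
import math

# Hypothetical weights; a real model would learn these from labeled history.
WEIGHTS = {
    "commits_per_month": 0.10,
    "uncovered_fraction": 3.0,      # 1 - test coverage
    "past_vulnerabilities": 0.60,
}
BIAS = -3.0

def risk_score(features):
    """Logistic combination of component features, returning a value in (0, 1)."""
    z = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))

components = {
    "authentication_module": {
        "commits_per_month": 15, "uncovered_fraction": 0.35, "past_vulnerabilities": 3,
    },
    "logging_utilities": {
        "commits_per_month": 2, "uncovered_fraction": 0.08, "past_vulnerabilities": 0,
    },
}

# Review queue, highest estimated risk first.
ranked = sorted(components, key=lambda name: risk_score(components[name]), reverse=True)
for name in ranked:
    print(f"{name}: {risk_score(components[name]):.2f}")
```

The ranking, rather than the absolute score, is usually what drives review prioritization.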

Temporal analysis plays a crucial role in effective prediction models. By examining the timeline between code modifications and vulnerability disclosures, AI systems can learn patterns that indicate increased risk periods. For example, certain types of code changes (such as modifications to input validation logic or authentication mechanisms) tend to correlate with subsequent vulnerability discoveries within specific time windows.

Advanced predictive models also incorporate network effects and dependency analysis. A component that depends on multiple third-party libraries with poor security track records may inherit elevated risk even if its own code quality remains high. Similarly, components that serve as integration points between different subsystems often become targets for attackers seeking to escalate privileges or move laterally within applications.

Real-world implementations of predictive vulnerability modeling have demonstrated impressive results. Organizations using these approaches report 25-40% reductions in time-to-detection for zero-day vulnerabilities and 30-50% improvements in resource allocation efficiency for security reviews. The models prove particularly valuable for large codebases where manual review of all components becomes impractical.

One innovative application involves combining predictive modeling with automated remediation suggestions. When a model identifies high-risk code patterns, it can simultaneously propose specific fixes based on analysis of how similar issues have been resolved in other projects. This closed-loop approach accelerates the vulnerability remediation process while maintaining consistency with established secure coding practices.

The accuracy of predictive models improves continuously through feedback mechanisms that incorporate new vulnerability discoveries and patch effectiveness data. As models observe which predicted vulnerabilities actually materialize and which false positives are identified, they refine their understanding of risk indicators and adjust future predictions accordingly.

Integration with existing development workflows ensures that predictive insights reach developers at optimal moments. For instance, pull request hooks can trigger risk assessments for proposed changes, providing immediate feedback about potential security implications before code merges into production branches.
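A pull request hook of this kind could start out as something as simple as the sketch below. It uses a keyword list as a stand-in for a trained model, which is an assumption made purely to keep the example self-contained; the keyword list, the function name, and the file paths are all hypothetical.

```python
# Hypothetical keywords marking security-sensitive areas of a codebase.
SENSITIVE_KEYWORDS = ("auth", "login", "session", "validat", "sanitiz")

def review_changed_files(changed_paths, threshold=1):
    """Return changed paths that hit at least `threshold` sensitive keywords."""
    flagged = {}
    for path in changed_paths:
        hits = [kw for kw in SENSITIVE_KEYWORDS if kw in path.lower()]
        if len(hits) >= threshold:
            flagged[path] = hits
    return flagged

changed = ["src/auth/login_handler.py", "docs/README.md", "src/forms/validators.py"]
flagged = review_changed_files(changed)
for path, hits in flagged.items():
    print(f"needs security review: {path} (matched {', '.join(hits)})")
```

In a real pipeline the keyword match would be replaced by a model score, and the hook would post its result as a review comment or a blocking status check.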

Key Insight: AI-powered predictive modeling transforms vulnerability management from reactive patching to proactive risk mitigation by forecasting security weaknesses before they become exploitable.

How Do AI Approaches Compare to Traditional Fuzzing Techniques?

Fuzzing has long been considered one of the most effective dynamic analysis techniques for discovering software vulnerabilities. By providing unexpected or malformed inputs to programs and monitoring for crashes or anomalous behavior, fuzzers can identify memory corruption bugs, input validation failures, and other security-critical defects. However, traditional fuzzing approaches face significant limitations in terms of coverage, efficiency, and intelligence that AI vulnerability discovery transformers are beginning to address.

Classical fuzzing techniques can be broadly categorized into black-box, white-box, and grey-box approaches, each with distinct characteristics and trade-offs. Black-box fuzzing provides inputs without knowledge of internal program structure, relying on random mutation or generation strategies. White-box fuzzing employs detailed program analysis to guide input selection, while grey-box techniques use lightweight instrumentation to balance coverage and performance.

Despite their effectiveness, traditional fuzzing methods struggle with several key challenges that limit their practical utility:

- Input Generation Intelligence: Most fuzzers rely on relatively simple mutation strategies that may miss complex triggering conditions

- State Space Explosion: Large programs with numerous execution paths create enormous search spaces that basic fuzzing cannot efficiently explore

- Semantic Understanding: Traditional fuzzers lack comprehension of input meaning, leading to inefficient exploration of valid program states

- Feedback Utilization: Many fuzzers fail to effectively leverage crash information or coverage data to improve subsequent iterations

AI-enhanced fuzzing approaches address these limitations by incorporating intelligent input generation, adaptive exploration strategies, and semantic understanding of target programs. The following comparison table highlights key differences between traditional and AI-augmented fuzzing:

| Capability | Traditional Fuzzing | AI-Enhanced Fuzzing |
|---|---|---|
| Input Generation | Random/mutation-based | Learned from successful inputs |
| Exploration Strategy | Brute-force coverage | Intelligent path prioritization |
| Semantic Understanding | None | Context-aware input crafting |
| Feedback Processing | Basic crash detection | Comprehensive anomaly analysis |
| Resource Efficiency | High computational cost | Optimized resource allocation |
| Zero-Day Discovery | Limited to known patterns | Generalizes to novel exploits |
| Integration Complexity | Standalone tools | Seamless workflow integration |

One of the most significant advantages of AI-augmented fuzzing lies in intelligent input generation. Rather than relying solely on random mutations, AI models can learn patterns from successful fuzzing campaigns and generate inputs specifically designed to trigger vulnerable code paths. This approach dramatically reduces the time required to discover exploitable conditions while increasing the diversity of discovered vulnerabilities.

Consider a practical example involving protocol fuzzing for a network service. Traditional fuzzers might systematically mutate packet fields or generate random byte sequences, hoping to trigger unexpected behavior. An AI-enhanced approach would analyze successful exploitation attempts from similar protocols, learn common attack patterns, and generate targeted inputs designed to exercise specific vulnerability classes.

```python
# Simplified example of AI-guided input generation.
# Pseudocode: load_training_data, train_generator_model, select_diverse_seed,
# execute_target, and save_potential_exploit are placeholders, not a real API.

# Train model on successful fuzzing inputs
successful_inputs = load_training_data('exploit_samples.json')
model = train_generator_model(successful_inputs)

# Generate intelligent inputs for new target
for i in range(1000):
    seed_input = select_diverse_seed()
    ai_generated = model.generate_variant(seed_input)
    result = execute_target(ai_generated)

    # Keep any input that crashes the target or triggers anomalous behavior
    if result.crashed or result.anomalous_behavior:
        save_potential_exploit(ai_generated, result)
```

Coverage-guided fuzzing represents another area where AI enhancement provides substantial benefits. Traditional coverage metrics focus primarily on code paths executed, but AI models can analyze which paths are most likely to lead to security-relevant states. This semantic understanding enables more efficient exploration of the program state space while focusing resources on high-value targets.
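For reference, the plain coverage-guided loop that AI techniques build on can be sketched as follows. This is a toy: `target` is a made-up function that reports which branches it reached and raises on a planted bug, and `mutate` is purely random. An AI-enhanced variant would replace `mutate` or the seed selection with a learned model.

```python
import random

def target(data):
    """Toy program under test: returns the branches hit, raises on the planted bug."""
    covered = {"entry"}
    if len(data) > 0 and data[0] == ord("F"):
        covered.add("b1")
        if len(data) > 1 and data[1] == ord("U"):
            covered.add("b2")
            if len(data) > 2 and data[2] == ord("Z"):
                raise RuntimeError("crash")
    return covered

def mutate(data, rng):
    """Overwrite one random byte, or occasionally append one."""
    data = bytearray(data)
    if data and rng.random() < 0.8:
        data[rng.randrange(len(data))] = rng.randrange(256)
    else:
        data.append(rng.randrange(256))
    return bytes(data)

def fuzz(iterations, seed=0):
    rng = random.Random(seed)
    corpus, seen, crashes = [b"AAA"], set(), []
    for _ in range(iterations):
        candidate = mutate(rng.choice(corpus), rng)
        try:
            covered = target(candidate)
        except RuntimeError:
            crashes.append(candidate)
            continue
        if not covered <= seen:  # reached a new branch: keep this input as a seed
            seen |= covered
            corpus.append(candidate)
    return corpus, seen, crashes

corpus, seen, crashes = fuzz(20000)
print(f"corpus size: {len(corpus)}, branches seen: {sorted(seen)}, crashes: {len(crashes)}")
```

Keeping inputs that reach new branches is what lets the loop climb through the nested checks one byte at a time instead of having to guess all three bytes at once.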

Performance benchmarks demonstrate clear advantages for AI-augmented fuzzing approaches. Studies comparing traditional AFL (American Fuzzy Lop) with AI-enhanced variants show:

- Time to First Crash: Reduced by 40-60% on average

- Unique Crash Discovery: Increased by 25-35% within same timeframes

- Exploitable Bug Rate: Improved by 30-45% compared to baseline

- Resource Utilization: More efficient CPU and memory usage patterns

The integration of reinforcement learning techniques further enhances AI fuzzing capabilities. By treating vulnerability discovery as a reward-based optimization problem, these systems can learn optimal exploration strategies that adapt to specific target characteristics. The learning process enables continuous improvement in fuzzing effectiveness without requiring manual tuning or configuration.
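A very small instance of this reward-based idea is a multi-armed bandit that learns which mutation operator tends to pay off. The sketch below uses an epsilon-greedy policy; the operator names and their simulated success probabilities are invented for the demonstration, and in a real fuzzer the reward would come from observed new coverage or crashes.

```python
import random

class MutationBandit:
    """Epsilon-greedy choice among mutation operators, rewarded for useful results."""

    def __init__(self, operators, epsilon=0.1, seed=0):
        self.operators = list(operators)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {op: 0 for op in self.operators}
        self.values = {op: 0.0 for op in self.operators}

    def choose(self):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.operators)                   # explore
        return max(self.operators, key=lambda op: self.values[op])   # exploit

    def update(self, op, reward):
        self.counts[op] += 1
        # Incremental mean of the rewards observed for this operator.
        self.values[op] += (reward - self.values[op]) / self.counts[op]

# Simulated environment: hypothetical chance that each operator finds new coverage.
SUCCESS_RATE = {"bit_flip": 0.05, "splice": 0.30, "insert_keyword": 0.10}

bandit = MutationBandit(SUCCESS_RATE)
env = random.Random(1)
for _ in range(2000):
    op = bandit.choose()
    reward = 1.0 if env.random() < SUCCESS_RATE[op] else 0.0
    bandit.update(op, reward)

print(bandit.counts)
```

Full reinforcement learning approaches also model program state, but the core loop of choosing an action, observing a reward, and updating an estimate is the same.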

Hybrid approaches that combine static analysis insights with dynamic fuzzing also benefit from AI augmentation. Static analysis can identify potential vulnerability candidates, while AI-guided fuzzing focuses intensive dynamic testing on the most promising targets. This synergy maximizes the strengths of both approaches while minimizing their individual limitations.

Modern AI fuzzing frameworks also excel at handling complex input formats such as structured data, protocols, and domain-specific languages. Traditional fuzzers often struggle with maintaining input validity while introducing malicious mutations, leading to wasted effort on rejected inputs. AI models can learn grammar rules and structural constraints, generating valid-yet-malicious inputs that bypass initial validation layers.
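The idea of structurally valid yet hostile inputs can be illustrated with a hand-written grammar. In the sketch below the grammar is a toy request line invented for this example, not a real protocol, and it is written by hand; the approach described above would instead learn such structure from sample inputs.

```python
import random

# Hypothetical toy grammar; the hostile payloads live only in <value>.
GRAMMAR = {
    "<request>": [["<verb>", " ", "<path>", "?", "<param>"]],
    "<verb>": [["GET"], ["POST"]],
    "<path>": [["/login"], ["/search"]],
    "<param>": [["<key>", "=", "<value>"]],
    "<key>": [["user"], ["q"]],
    "<value>": [["alice"], ["A" * 300], ["' OR '1'='1"], ["%00"]],
}

def generate(symbol, rng):
    """Expand a grammar symbol recursively into a concrete string."""
    if symbol not in GRAMMAR:
        return symbol  # terminal: emit as-is
    production = rng.choice(GRAMMAR[symbol])
    return "".join(generate(part, rng) for part in production)

rng = random.Random(0)
samples = [generate("<request>", rng) for _ in range(5)]
for sample in samples:
    print(sample[:60])
```

Every generated request has a valid verb, path, and key, so it gets past a parser that would reject random bytes, while the value field carries the oversized or injection-style payload.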

Key Insight: AI-enhanced fuzzing combines the thoroughness of traditional approaches with intelligent guidance and semantic understanding, resulting in faster, more effective vulnerability discovery.

What Are the Technical Challenges of Integrating AI Discovery Tools?

While AI vulnerability discovery transformers offer tremendous potential for improving security workflows, their practical integration presents several significant technical challenges that organizations must carefully navigate. These obstacles span infrastructure requirements, model reliability concerns, workflow adaptation needs, and operational considerations that can determine whether AI adoption succeeds or fails.

Infrastructure and computational requirements represent one of the primary barriers to AI tool adoption. Transformer models, particularly those designed for code analysis tasks, demand substantial computational resources for both training and inference phases. Organizations must evaluate whether their existing hardware infrastructure can support these demands or whether cloud-based solutions are necessary.

Model size considerations directly impact deployment feasibility. Modern code analysis transformers often exceed several gigabytes in size, requiring significant storage capacity and memory bandwidth. Consider the resource requirements for deploying a typical large language model for security analysis:

```yaml
# Example deployment specifications
model_requirements:
  disk_space: 8GB minimum
  ram: 16GB recommended
  gpu_memory: 12GB for accelerated inference
  cpu_cores: 8+ for parallel processing
  network_bandwidth: 100Mbps for cloud deployments
```

Organizations with limited computational resources may need to implement model optimization techniques such as quantization, pruning, or knowledge distillation to reduce deployment overhead while maintaining acceptable accuracy levels. These optimization processes require specialized expertise and careful validation to ensure that security effectiveness isn't compromised.
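Of the techniques mentioned, quantization is the easiest to show in miniature. The sketch below applies symmetric 8-bit quantization to a handful of made-up weights in plain Python; real toolchains operate on whole tensors and often calibrate per channel, which this example does not attempt.

```python
def quantize_int8(weights):
    """Symmetric linear quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Map the stored integers back to approximate float weights."""
    return [q * scale for q in quantized]

weights = [0.82, -0.15, 0.003, -1.27, 0.5]  # arbitrary example values
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(quantized, f"scale={scale:.4f}", f"max_error={max_error:.4f}")
```

Storing one byte per weight instead of a 32-bit float cuts storage to a quarter, at the cost of a rounding error bounded by half the scale. Whether that error affects detection quality is what the validation step has to establish.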

Data quality and availability present another critical challenge for successful AI integration. Effective vulnerability discovery models require extensive training datasets that include diverse code samples, vulnerability annotations, and contextual information. Many organizations struggle to assemble sufficiently large and representative datasets for their specific domains or technology stacks.

The annotation process for training data introduces additional complexity. Accurate labeling of vulnerabilities requires deep security expertise and significant manual effort. Automated labeling approaches based on CVE databases or security advisories may introduce noise or bias that degrades model performance. Organizations must establish robust data curation processes to ensure training quality.

Model interpretability and explainability pose significant challenges for security-critical applications. When AI systems flag potential vulnerabilities, security analysts need clear explanations of why specific code patterns were identified as risky. Black-box model behavior can undermine trust and hinder effective triage processes.

Addressing interpretability concerns often requires implementing additional analysis layers that can trace model decisions back to specific code features or patterns. Techniques such as attention visualization, feature attribution analysis, and counterfactual explanation generation help bridge the gap between AI predictions and human understanding.

Integration with existing security toolchains demands careful architectural planning. Organizations typically operate complex ecosystems of static analysis tools, dynamic analysis platforms, issue tracking systems, and development workflows. New AI discovery tools must seamlessly integrate with these existing components without disrupting established processes.

API design and standardization play crucial roles in successful integration. Well-defined interfaces enable modular deployment and facilitate communication between different tools and systems. Organizations should prioritize adopting industry-standard formats such as SARIF (Static Analysis Results Interchange Format) for vulnerability reporting and integration.
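As a rough illustration of what SARIF output looks like, the following sketch wraps a list of findings in a minimal SARIF 2.1.0 log. The tool name, rule identifier, and finding are hypothetical, and only a small subset of the format's properties is used; the SARIF specification defines many more.

```python
import json

def to_sarif(tool_name, findings):
    """Wrap findings in a minimal SARIF 2.1.0 log structure."""
    results = []
    for finding in findings:
        results.append({
            "ruleId": finding["rule_id"],
            "level": finding["level"],  # one of: none, note, warning, error
            "message": {"text": finding["message"]},
            "locations": [{
                "physicalLocation": {
                    "artifactLocation": {"uri": finding["path"]},
                    "region": {"startLine": finding["line"]},
                }
            }],
        })
    return {
        "version": "2.1.0",
        "runs": [{"tool": {"driver": {"name": tool_name}}, "results": results}],
    }

# Hypothetical finding produced by an AI-based analyzer.
findings = [{
    "rule_id": "unbounded-copy",
    "level": "error",
    "message": "strcpy into fixed-size buffer without length check",
    "path": "src/input.c",
    "line": 3,
}]
sarif_log = to_sarif("example-ai-scanner", findings)
print(json.dumps(sarif_log, indent=2))
```

Emitting a standard format like this lets existing code-scanning dashboards and issue trackers consume AI findings without custom adapters.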

Continuous model maintenance and updating represent ongoing operational challenges. Security landscapes evolve rapidly, with new vulnerability classes, attack techniques, and defensive measures emerging regularly. AI models require periodic retraining and validation to maintain effectiveness against contemporary threats.

Version control and model lifecycle management become essential operational practices. Organizations must establish processes for tracking model performance, identifying degradation patterns, and coordinating updates with minimal disruption to ongoing security operations. This includes maintaining multiple model versions for A/B testing and rollback capabilities.

Scalability considerations extend beyond raw computational requirements to include distributed processing architectures and load balancing strategies. As organizations expand their AI security capabilities, they must plan for horizontal scaling that can accommodate growing codebases and increasing analysis demands.

Security of the AI systems themselves introduces another layer of complexity. AI models and their supporting infrastructure become attractive targets for adversaries seeking to manipulate vulnerability detection processes or gain unauthorized access to sensitive analysis capabilities.

Compliance and regulatory requirements add further constraints to AI integration efforts. Organizations operating in regulated industries must ensure that their AI systems meet relevant standards for accuracy, transparency, and auditability. This may require implementing additional validation and documentation processes.

Key Insight: Successful AI integration requires addressing infrastructure, data quality, interpretability, and operational challenges through systematic planning and specialized expertise.

How Can Organizations Implement AI Vulnerability Discovery Effectively?

Implementing AI vulnerability discovery capabilities requires a strategic approach that balances technical excellence with organizational readiness. Successful deployment involves careful planning across multiple dimensions including technology selection, team preparation, process integration, and performance measurement. Organizations that approach AI adoption systematically can realize substantial security improvements while avoiding common pitfalls that derail less structured initiatives.

Technology evaluation and selection form the foundation of effective implementation. Organizations should begin by clearly defining their specific security objectives and constraints before evaluating available AI tools and platforms. This process should consider factors such as supported programming languages, integration capabilities, performance requirements, and total cost of ownership.

A phased approach to implementation often proves most effective for managing risk and building organizational confidence. Starting with pilot projects on non-critical codebases allows teams to gain experience with AI tools while validating their effectiveness in realistic scenarios. These pilot programs should include clear success criteria and measurement frameworks to guide decision-making about broader deployment.

Consider the following implementation roadmap for integrating AI vulnerability discovery:

Phase 1: Foundation Setup

- Infrastructure provisioning and model deployment

- Initial training data collection and curation

- Basic integration with existing analysis workflows

- Team training on AI tool usage and interpretation

Phase 2: Pilot Deployment

- Select representative codebases for initial testing

- Establish baseline metrics for comparison

- Configure alerting and triage processes

- Gather feedback from security analysts

Phase 3: Expansion and Optimization

- Scale deployment to additional code repositories

- Refine models based on real-world performance data

- Optimize integration with development workflows

- Implement advanced features such as predictive modeling

Phase 4: Maturity and Innovation

- Full production deployment across organization

- Continuous improvement processes and feedback loops

- Advanced analytics and reporting capabilities

- Research and development of custom enhancements

Team preparation and skill development represent critical success factors for AI implementation. Security analysts and developers need training on interpreting AI-generated findings, understanding model limitations, and integrating AI insights into their existing workflows. This education process should emphasize both technical competencies and critical thinking skills necessary for effective human-AI collaboration.

Process integration requires careful consideration of how AI findings will be incorporated into existing security workflows. Organizations should establish clear procedures for triaging AI-discovered vulnerabilities, determining appropriate response actions, and tracking remediation progress. Integration with ticketing systems, continuous integration pipelines, and incident response procedures ensures that AI insights translate into actionable security improvements.
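The triage procedure described above can be sketched as a small routing function. This is a minimal illustration, not a reference to any particular product: the `Finding` fields, the severity labels, the 0.8 confidence threshold, and the three queue names are all assumptions chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical severity scale; real tools may use CVSS scores or other labels.
SEVERITY_RANK = {"low": 0, "medium": 1, "high": 2, "critical": 3}


@dataclass(frozen=True)
class Finding:
    rule_id: str       # identifier of the check that fired (illustrative)
    severity: str      # one of the keys in SEVERITY_RANK
    confidence: float  # model confidence in [0, 1]


def route(finding: Finding, min_confidence: float = 0.8) -> str:
    """Decide which queue an AI-generated finding goes to.

    The threshold is an assumed starting point and should be tuned
    against the organization's own triage data.
    """
    if finding.severity not in SEVERITY_RANK:
        raise ValueError(f"unknown severity: {finding.severity!r}")

    high_impact = SEVERITY_RANK[finding.severity] >= SEVERITY_RANK["high"]
    confident = finding.confidence >= min_confidence

    if high_impact and confident:
        return "open_ticket"      # file directly in the ticketing system
    if high_impact or confident:
        return "analyst_review"   # a human confirms before any action
    return "backlog"              # low impact and low confidence


print(route(Finding("sql-injection", "critical", 0.93)))
print(route(Finding("weak-hash", "high", 0.40)))
```

Keeping the routing rule in one small function makes the policy easy to review and adjust as analysts report which queues are over- or under-filled.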

Performance measurement and optimization form ongoing responsibilities throughout the implementation lifecycle. Organizations should establish key performance indicators that reflect both technical effectiveness and business impact. Relevant metrics might include:

- Vulnerability detection accuracy (precision and recall)

- Time-to-detection for critical vulnerabilities

- Reduction in manual review effort

- False positive rate and analyst productivity

- Integration with remediation workflows

- Business impact metrics such as reduced breach risk
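The detection-accuracy metrics in this list can be computed directly from analyst triage outcomes. The sketch below assumes findings have already been labeled as confirmed or rejected and that missed vulnerabilities are known from other sources (for example, a penetration test). The `TriageCounts` type and the sample numbers are illustrative, not measured data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TriageCounts:
    true_positives: int   # AI findings confirmed by analysts
    false_positives: int  # AI findings rejected by analysts
    false_negatives: int  # known vulnerabilities the tool missed
    true_negatives: int   # reviewed code units correctly left unflagged


def _ratio(numerator: int, denominator: int) -> float:
    # Return 0.0 instead of raising when there is nothing to measure yet.
    return numerator / denominator if denominator else 0.0


def precision(c: TriageCounts) -> float:
    """Share of reported findings that were real vulnerabilities."""
    return _ratio(c.true_positives, c.true_positives + c.false_positives)


def recall(c: TriageCounts) -> float:
    """Share of real vulnerabilities that the tool reported."""
    return _ratio(c.true_positives, c.true_positives + c.false_negatives)


def false_positive_rate(c: TriageCounts) -> float:
    """Share of clean code units that were wrongly flagged."""
    return _ratio(c.false_positives, c.false_positives + c.true_negatives)


# Made-up pilot numbers, for illustration only.
pilot = TriageCounts(true_positives=40, false_positives=10,
                     false_negatives=10, true_negatives=940)
print(f"precision={precision(pilot):.2f}")
print(f"recall={recall(pilot):.2f}")
print(f"false positive rate={false_positive_rate(pilot):.4f}")
```

Tracking precision and recall separately matters because a tool can look accurate on one while failing on the other: high precision with low recall means few wasted reviews but many missed vulnerabilities.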

Regular performance reviews should inform continuous improvement efforts. This includes analyzing model performance trends, identifying areas for enhancement, and planning updates to maintain effectiveness against evolving threats.

Risk management and governance frameworks become increasingly important as AI capabilities expand. Organizations should establish clear policies regarding AI tool usage, data handling, model validation, and incident response procedures. These frameworks should address both technical risks such as model drift or adversarial manipulation and operational risks such as over-reliance on automated systems.
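One simple way to watch for the model drift mentioned above is to compare how often analysts confirm findings in a recent window against a baseline window. The following is a minimal sketch under that assumption. The function names and the 10-point tolerance are hypothetical, and a production check would also need enough samples per window to be statistically meaningful.

```python
from typing import Sequence


def confirmation_rate(outcomes: Sequence[bool]) -> float:
    """Fraction of findings that analysts confirmed as real.

    Each element is True if the finding was confirmed, False if rejected.
    """
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def drift_detected(baseline: Sequence[bool],
                   recent: Sequence[bool],
                   max_drop: float = 0.10) -> bool:
    """Flag drift when the confirmation rate falls by more than max_drop.

    max_drop is an assumed tolerance, expressed as an absolute difference
    between the two rates.
    """
    return confirmation_rate(baseline) - confirmation_rate(recent) > max_drop


# Illustrative triage outcomes, not real data.
baseline_window = [True] * 8 + [False] * 2   # 80% confirmed
recent_window = [True] * 6 + [False] * 4     # 60% confirmed

if drift_detected(baseline_window, recent_window):
    print("Confirmation rate dropped beyond tolerance; schedule model review.")
```

A falling confirmation rate does not prove the model has degraded, since the codebase or analyst practices may have changed, but it is a cheap signal that a closer look is warranted.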

Change management practices help ensure smooth adoption of AI capabilities across the organization. This includes communicating benefits and limitations to stakeholders, addressing concerns about job displacement or reduced human judgment, and fostering collaborative relationships between AI systems and human experts.

Vendor partnerships and ecosystem considerations influence implementation success. Organizations may choose to develop custom AI capabilities internally, license commercial solutions, or adopt open-source frameworks. Each approach has distinct advantages and challenges that should align with organizational priorities and capabilities.

Documentation and knowledge sharing facilitate successful implementation and ongoing operations. Comprehensive documentation should cover model configurations, integration procedures, troubleshooting guides, and best practices for interpretation and action. Regular knowledge sharing sessions help disseminate lessons learned and promote consistent usage across teams.

Cost-benefit analysis and return on investment calculations guide resource allocation decisions. While AI vulnerability discovery tools require significant upfront investment, they can deliver substantial long-term benefits through improved security posture, reduced manual effort, and faster vulnerability response times.
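The return-on-investment reasoning above reduces to simple arithmetic: ROI is net benefit divided by total cost over the evaluation period. The sketch below shows that calculation along with a payback-period estimate. All monetary figures are invented for illustration, and the function names are assumptions rather than part of any standard model.

```python
from typing import Optional


def total_cost(upfront: float, annual_running: float, years: int) -> float:
    """Upfront investment plus running costs over the period."""
    return upfront + annual_running * years


def roi(upfront: float, annual_running: float,
        annual_benefit: float, years: int) -> float:
    """Net benefit divided by total cost, as a fraction (0.5 means 50%)."""
    cost = total_cost(upfront, annual_running, years)
    if cost <= 0:
        raise ValueError("total cost must be positive")
    return (annual_benefit * years - cost) / cost


def payback_years(upfront: float, annual_running: float,
                  annual_benefit: float) -> Optional[float]:
    """Years until cumulative net benefit covers the upfront investment.

    Returns None when annual benefit never exceeds annual running cost.
    """
    net_per_year = annual_benefit - annual_running
    if net_per_year <= 0:
        return None
    return upfront / net_per_year


# Hypothetical figures: tooling and integration cost, yearly licences and
# compute, and an estimated yearly value of saved analyst time.
print(f"3-year ROI: {roi(200_000, 50_000, 180_000, 3):.1%}")
print(f"Payback: {payback_years(200_000, 50_000, 180_000):.2f} years")
```

The hardest input to estimate is the annual benefit, since avoided breaches are not directly observable; using conservative figures such as saved review hours keeps the result defensible.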

Key Insight: Effective AI implementation requires strategic planning across technology, people, processes, and governance dimensions to maximize security benefits while managing associated risks.

What Future Developments Will Shape AI-Driven Security Research?

The field of AI-driven security research continues evolving rapidly, with several emerging trends and technological advances poised to reshape how organizations approach vulnerability discovery and threat mitigation. These developments span improvements in model architectures, expansion into new application domains, enhanced integration capabilities, and novel approaches to adversarial resilience.

Next-generation transformer architectures promise significant improvements in both performance and efficiency for security applications. Researchers are exploring specialized attention mechanisms optimized for code analysis tasks, more efficient training procedures that reduce computational requirements, and hybrid architectures that combine the strengths of different neural network paradigms. These advances could make AI vulnerability discovery tools more accessible to organizations with limited computational resources while delivering even higher accuracy.

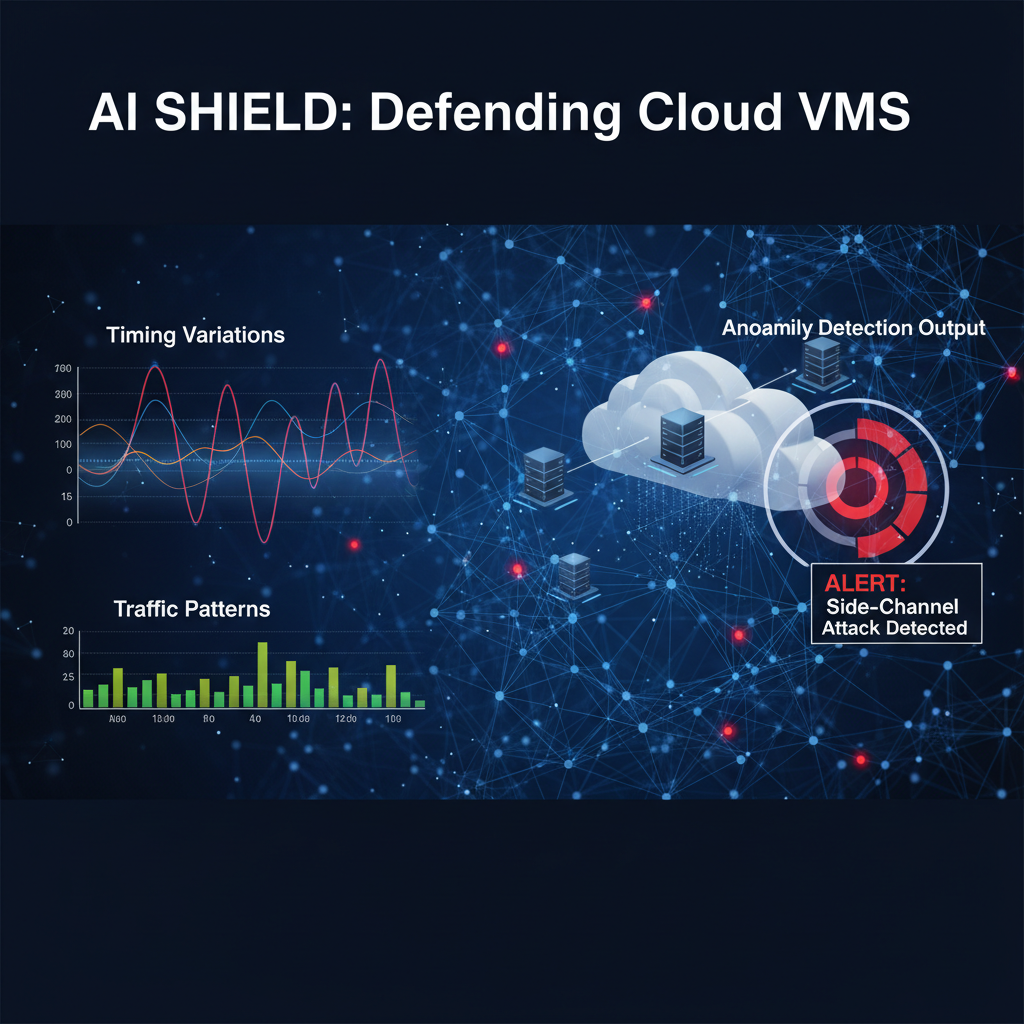

One particularly promising area involves the development of multimodal AI systems that can analyze multiple aspects of software security simultaneously. Rather than focusing exclusively on source code, these systems might integrate static analysis with dynamic behavior monitoring, network traffic analysis, and environmental context to provide more comprehensive security assessments.

Advances in few-shot and zero-shot learning capabilities will expand the applicability of AI security tools to previously challenging domains. Current models often require extensive training on domain-specific data to achieve optimal performance. Emerging techniques that enable effective learning from limited examples could accelerate deployment in new technology stacks or specialized application areas.

The intersection of AI and formal verification methods represents another frontier with tremendous potential. Combining the pattern recognition capabilities of AI models with the mathematical rigor of formal methods could produce hybrid approaches that offer both the scalability of machine learning and the guarantees of formal verification. This synthesis could address one of the primary criticisms of AI security tools—their inability to provide absolute assurances about security properties.

Adversarial robustness and red-team testing will become increasingly important as AI security tools mature. Sophisticated adversaries will inevitably attempt to evade or manipulate AI detection systems, necessitating the development of resilient architectures and continuous adversarial testing programs. This arms race between defenders and attackers will drive innovation in both offensive and defensive AI capabilities.

Explainable AI (XAI) techniques will play a crucial role in building trust and facilitating effective human-AI collaboration. As AI systems assume greater responsibility for security decisions, stakeholders will demand clearer insight into how these systems arrive at their conclusions. Advances in visualization, natural language explanation generation, and interactive debugging interfaces will enhance usability and accountability.

Edge computing and decentralized AI architectures will enable new deployment scenarios for security tools. Rather than relying on centralized cloud services, future AI security systems might operate directly on developer workstations, within containerized environments, or across distributed infrastructure. This shift could improve performance, reduce latency, and address privacy concerns associated with transmitting sensitive code to external services.

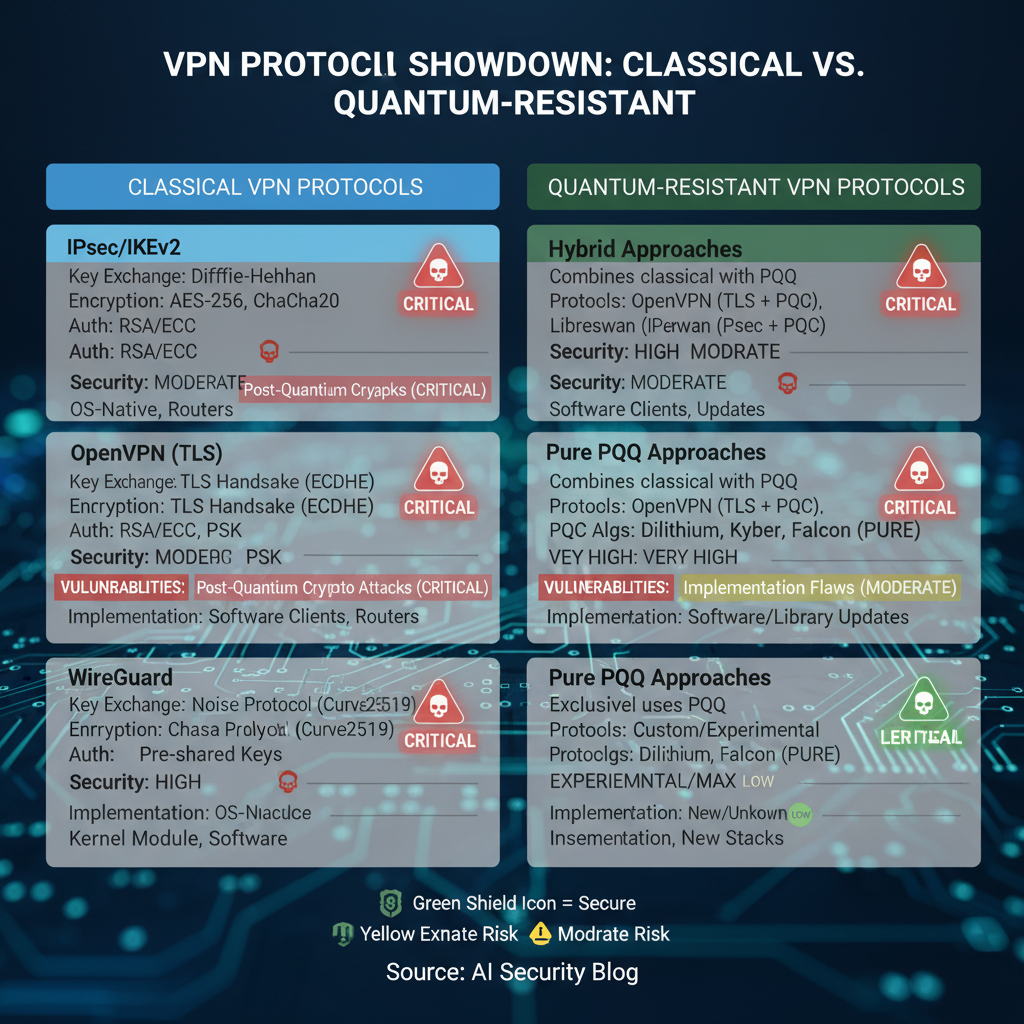

Integration with emerging technologies such as quantum computing and blockchain will create new opportunities and challenges for AI-driven security research. Quantum-resistant cryptography will require updated vulnerability analysis approaches, while blockchain applications introduce novel attack surfaces that AI tools must learn to recognize and assess.

Regulatory compliance and industry standards will increasingly influence AI security tool development. As governments and industry groups establish requirements for AI system transparency, fairness, and safety, security tools will need to incorporate compliance monitoring and reporting capabilities. This evolution will drive standardization efforts and interoperability improvements across the security tool ecosystem.

Collaborative AI systems that can share knowledge and coordinate responses across organizational boundaries represent another exciting possibility. Federated learning approaches could enable security teams to benefit from collective threat intelligence while preserving sensitive data privacy. Such systems could dramatically accelerate vulnerability discovery and response coordination.
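The core idea of federated learning is that participants share model updates rather than raw data. A common aggregation step is a sample-weighted average of each participant's parameters, often called federated averaging. The sketch below illustrates only that aggregation step, with plain lists standing in for model weights. The function name and example values are assumptions, and real deployments add secure aggregation, privacy protections, and many training rounds.

```python
from typing import List, Sequence, Tuple

# One participant's contribution: (model weights, number of local samples).
Update = Tuple[Sequence[float], int]


def federated_average(updates: Sequence[Update]) -> List[float]:
    """Combine local model weights into a global model.

    Each participant's weights count in proportion to how many training
    samples it used, so no source code or findings leave the organization.
    """
    total_samples = sum(n for _, n in updates)
    if total_samples <= 0:
        raise ValueError("at least one update with samples is required")

    dim = len(updates[0][0])
    if any(len(weights) != dim for weights, _ in updates):
        raise ValueError("all updates must have the same number of weights")

    return [
        sum(weights[i] * n for weights, n in updates) / total_samples
        for i in range(dim)
    ]


# Two hypothetical organizations with different amounts of local data.
org_a = ([1.0, 2.0], 100)
org_b = ([3.0, 4.0], 300)
print(federated_average([org_a, org_b]))
```

Weighting by sample count keeps a participant with very little data from pulling the shared model as strongly as one with a large, well-labeled corpus.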

Ethical considerations and responsible AI development practices will gain prominence as these technologies become more powerful and pervasive. Organizations developing AI security tools will need to address concerns about bias, privacy, and potential misuse while ensuring that their innovations contribute positively to overall cybersecurity posture.

The democratization of AI security tools through open-source initiatives and accessible platforms will accelerate adoption across diverse organizations. As barriers to entry decrease, smaller companies and individual security researchers will gain access to capabilities previously available only to well-resourced enterprises. This trend could lead to more rapid innovation and broader community engagement in security research.

International cooperation and information sharing will become increasingly important for addressing global cybersecurity challenges. AI tools that can process and correlate threat intelligence from multiple sources and languages will play a crucial role in coordinated defense efforts. Cross-border collaboration on AI security research could accelerate progress on shared challenges while establishing common standards and best practices.

Key Insight: The future of AI-driven security research will be shaped by architectural innovations, multimodal integration, adversarial resilience, and collaborative approaches that enhance both effectiveness and accessibility.

Key Takeaways

- Transformer-based AI models offer superior accuracy and context awareness compared to traditional static analysis approaches, reducing false positive rates by 50-70%

- Predictive vulnerability modeling enables proactive risk assessment by analyzing code evolution patterns and historical vulnerability data

- AI-enhanced fuzzing techniques combine intelligent input generation with semantic understanding to discover vulnerabilities faster than classical approaches

- Successful AI integration requires addressing infrastructure, data quality, interpretability, and operational challenges through systematic planning

- Next-generation developments including multimodal analysis, federated learning, and explainable AI will expand capabilities while improving accessibility

Frequently Asked Questions

Q: How do AI vulnerability discovery transformers differ from traditional signature-based scanners?

AI vulnerability discovery transformers use machine learning to understand code semantics and context, enabling them to detect novel vulnerability patterns that signature-based tools miss. Unlike rule-based approaches that rely on predefined patterns, transformers learn from vast code datasets to identify subtle security risks through contextual analysis and pattern recognition.

Q: What programming languages do AI security tools support?

Most modern AI security tools support major programming languages including Python, Java, JavaScript, C/C++, Go, Rust, and PHP. The effectiveness varies by language due to differences in syntax complexity and available training data, with newer tools expanding support through continuous model training and community contributions.

Q: Can AI models replace human security analysts completely?

No, AI models complement rather than replace human analysts by automating routine vulnerability detection and providing intelligent prioritization. Human expertise remains essential for complex threat analysis, business context evaluation, and strategic decision-making that requires nuanced understanding beyond pattern recognition capabilities.

Q: How much training data do AI vulnerability discovery models require?

Effective models typically require thousands to millions of code examples with vulnerability annotations, though transfer learning techniques can reduce requirements for specific domains. The quality and diversity of training data often matter more than quantity, with curated datasets yielding better performance than larger but noisy collections.

Q: What are the main limitations of current AI security tools?

Current limitations include inconsistent detection of genuinely novel (zero-day) vulnerability classes, difficulty explaining the reasoning behind findings, susceptibility to adversarial evasion, and dependence on high-quality training data. These tools may also struggle with domain-specific code patterns and can require significant computational resources for optimal performance.
