AI Side-Channel Attack Detection in Multi-Tenant Cloud Environments


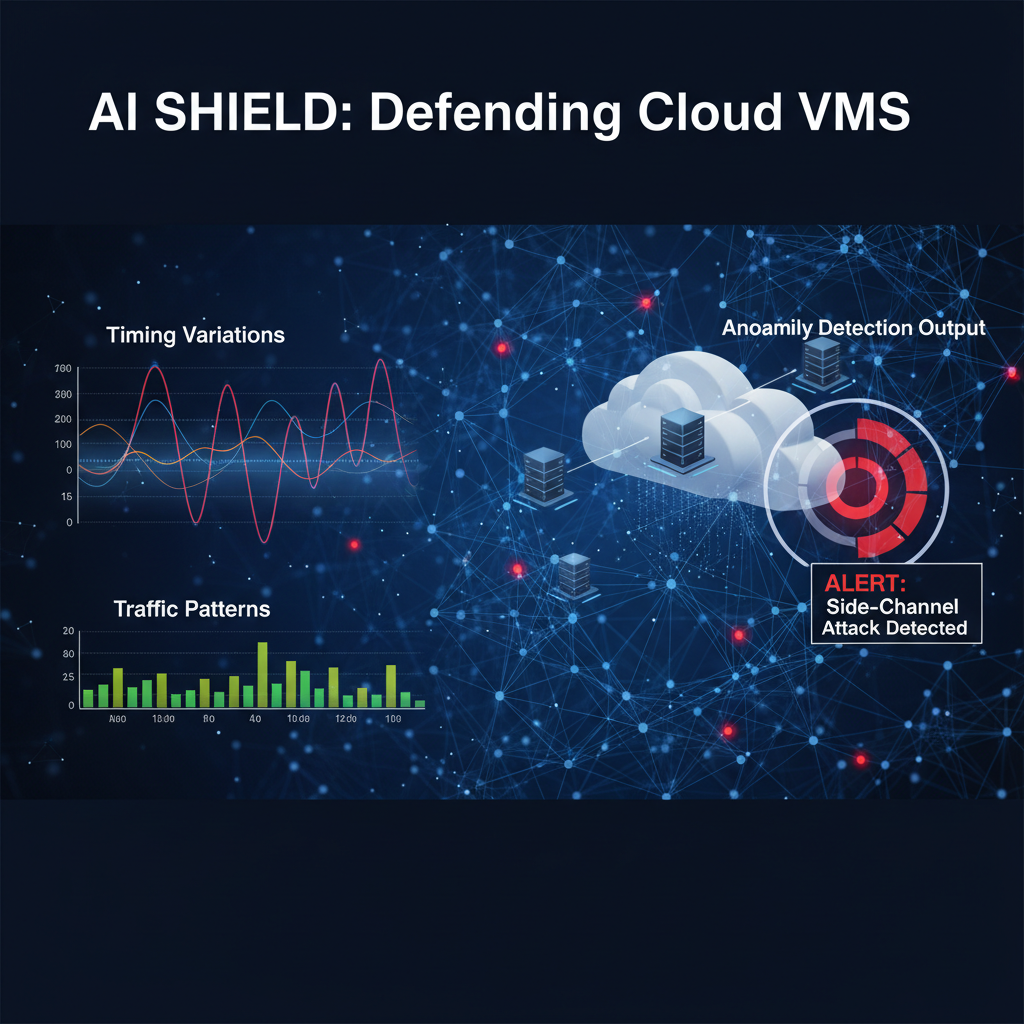

Cross-tenant side-channel attacks represent one of the most insidious threats facing modern cloud infrastructure. As organizations increasingly migrate critical workloads to shared cloud environments, attackers have developed sophisticated techniques to extract sensitive data through subtle observations of system behavior. These attacks exploit the inherent resource sharing in multi-tenant architectures, leveraging timing variations, cache access patterns, and network traffic anomalies to infer confidential information.

Traditional detection methods often fall short against these stealthy adversaries. Signature-based approaches fail to identify novel attack vectors, while anomaly detection systems generate excessive false positives due to the dynamic nature of cloud workloads. Recent advances in machine learning have substantially improved our ability to detect these elusive threats. Transformer-based models, originally designed for natural language processing, have shown strong results in identifying the complex temporal patterns indicative of side-channel exploitation.

The urgency of this issue cannot be overstated. Throughout 2025-2026, major cloud providers have reported significant incidents of cross-tenant data leakage, affecting everything from financial services to healthcare records. Academic research demonstrates that properly trained ML models can achieve detection accuracy exceeding 95%, representing a paradigm shift in cloud security posture. These advances enable security teams to proactively identify potential breaches rather than reactively investigating confirmed incidents.

This comprehensive analysis explores cutting-edge machine learning approaches for detecting cross-tenant side-channel attacks. We'll examine how transformer architectures process subtle behavioral signals, compare performance against conventional methodologies, and demonstrate practical implementation strategies using real-world datasets. Additionally, we'll showcase how mr7.ai's suite of AI-powered security tools can accelerate research and deployment of these advanced detection capabilities.

What Are Cross-Tenant Side-Channel Attacks and Why Are They Dangerous?

Cross-tenant side-channel attacks exploit unintended information leakage between different tenants sharing the same physical infrastructure in cloud computing environments. Unlike direct attacks that target specific vulnerabilities, side-channel attacks observe and analyze indirect indicators such as timing differences, power consumption, electromagnetic emissions, or resource utilization patterns to extract sensitive information.

In multi-tenant environments, these attacks become particularly dangerous because they can bypass traditional security boundaries. Even when proper isolation mechanisms are in place, shared resources like CPU caches, memory buses, and network infrastructure can inadvertently leak information between tenants. For instance, an attacker might monitor cache access patterns to determine when a victim tenant is performing cryptographic operations, potentially enabling key recovery through careful timing analysis.

The sophistication of modern side-channel attacks has increased dramatically. Researchers have demonstrated attacks that can:

- Extract AES encryption keys by monitoring cache access patterns across virtual machine boundaries

- Recover RSA private keys through precise timing measurements of modular exponentiation operations

- Infer business-critical information by analyzing database query execution times

- Detect presence of specific applications or services through network traffic fingerprinting

These attacks pose unique challenges for detection systems. They typically generate minimal network traffic, operate within normal operational parameters, and leave few traces in traditional security logs. Conventional intrusion detection systems often classify side-channel activity as benign behavior, allowing attacks to proceed undetected for extended periods.

Consider the following example scenario: Tenant A operates a financial services application that processes credit card transactions. Tenant B, controlled by an attacker, co-locates on the same physical server. By carefully monitoring CPU cache behavior, the attacker can determine when Tenant A is processing high-value transactions based on specific memory access patterns. Over time, this information could be used to optimize phishing campaigns or identify lucrative targets for further exploitation.

```python
# Example: Simulating cache-based side-channel observation
import numpy as np


class CacheMonitor:
    def __init__(self, threshold=100):
        self.threshold = threshold  # average latency (ns) that triggers an alert
        self.access_pattern = []

    def monitor_access(self, address, timestamp):
        # Record cache access timing
        self.access_pattern.append({
            'address': address,
            'timestamp': timestamp,
            'latency': self.measure_latency(address),
        })

        # Analyze for suspicious patterns
        if self.detect_suspicious_activity():
            self.alert_security_team()

    def measure_latency(self, address):
        # Simulate cache access measurement
        base_latency = 50  # ns baseline
        variance = np.random.normal(0, 10)
        return max(0, base_latency + variance)

    def detect_suspicious_activity(self):
        # Simplified detection logic: average latency of the last 10 accesses
        recent_latencies = [p['latency'] for p in self.access_pattern[-10:]]
        avg_latency = np.mean(recent_latencies)
        return avg_latency > self.threshold

    def alert_security_team(self):
        # Placeholder alert hook (called above but not defined in the original)
        print(f"[ALERT] Average cache latency exceeded {self.threshold} ns")
```

The danger extends beyond immediate data theft. Successful side-channel attacks can serve as stepping stones for more comprehensive compromises. Once attackers establish the capability to monitor cross-tenant communications, they can expand their surveillance to identify additional vulnerabilities, map internal network topologies, or coordinate larger-scale operations across multiple tenants simultaneously.

Furthermore, the legal and regulatory implications are severe. Data protection regulations like GDPR, CCPA, and industry-specific standards impose strict requirements for preventing unauthorized data access. Organizations that suffer successful side-channel attacks may face substantial fines, reputational damage, and loss of customer trust, even though the attacker never exploited a conventional software vulnerability.

Understanding these threats is crucial for developing effective countermeasures. Traditional security controls focused on perimeter defense and access control are insufficient against side-channel attacks that operate within the trusted computing environment. Instead, organizations must adopt proactive monitoring strategies that can detect anomalous behavior patterns indicative of information leakage attempts.

Key Insight: Cross-tenant side-channel attacks represent a fundamental challenge to cloud security assumptions. Their stealthy nature requires advanced detection capabilities that go beyond traditional signature-based approaches.

How Do Traditional Detection Methods Fall Short Against Modern Side-Channel Threats?

Traditional approaches to detecting side-channel attacks rely heavily on signature-based matching, simple threshold alerts, and basic statistical anomaly detection. While these methods served adequately in simpler computing environments, they prove inadequate against the sophisticated techniques employed in modern cross-tenant attacks.

Signature-based detection systems maintain databases of known attack patterns and attempt to match observed behavior against these predefined signatures. However, side-channel attacks rarely follow predictable patterns. Attackers continuously adapt their methodologies to evade detection, making static signatures obsolete almost immediately after creation. Moreover, many side-channel attacks are inherently unique to specific hardware configurations, software implementations, and environmental conditions, rendering generic signatures ineffective.

Basic threshold-based monitoring systems trigger alerts when measured values exceed predetermined limits. For example, a system might flag unusual CPU usage spikes or unexpected network traffic volumes. Unfortunately, side-channel attacks typically operate within normal operational ranges to avoid detection. Sophisticated attackers carefully calibrate their activities to remain below alert thresholds while still extracting meaningful information.

Simple statistical anomaly detection approaches, such as those based on mean and standard deviation calculations, struggle with the high-dimensional nature of side-channel data. These methods often fail to distinguish between legitimate workload variations and malicious activity. Consider a scenario where legitimate tenant workloads naturally exhibit periodic resource usage patterns that happen to correlate with an attacker's monitoring activities. Traditional anomaly detectors would likely miss the coordinated behavior entirely.
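A minimal sketch of that blind spot, using synthetic data rather than real measurements: a classic mean/standard-deviation (z-score) detector barely reacts to a small periodic bump that stays inside the normal range, while a lag-10 autocorrelation exposes the periodicity. The bump size, period, and 3-sigma threshold are illustrative assumptions.

```python
import numpy as np


def zscore_flags(series, baseline, k=3.0):
    """Flag points more than k standard deviations from the baseline mean."""
    mu, sigma = baseline.mean(), baseline.std()
    return np.abs((series - mu) / sigma) > k


def lag_autocorr(series, lag):
    """Sample autocorrelation of a 1-D series at a single lag."""
    x = series - series.mean()
    return float(np.dot(x[:-lag], x[lag:]) / np.dot(x, x))


rng = np.random.default_rng(0)
baseline = rng.normal(0.0, 1.0, 10_000)  # synthetic benign metric (e.g. normalized latency)
attack = baseline.copy()
attack[::10] += 1.0                      # hypothetical bump every 10 samples, within normal range

print("z-score flag rate:", zscore_flags(attack, baseline).mean())
print("lag-10 autocorr, benign:", round(lag_autocorr(baseline, 10), 3))
print("lag-10 autocorr, attack:", round(lag_autocorr(attack, 10), 3))
```

The z-score rate stays well under one percent, while the autocorrelation at the attack's period rises clearly above the benign value.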

| Detection Method | Strengths | Weaknesses |
|---|---|---|
| Signature-based | Fast detection of known attacks | Cannot detect novel variants |
| Threshold alerts | Simple implementation | High false positive rates |
| Basic statistics | Low computational overhead | Misses subtle coordinated patterns |
| Rule-based systems | Clear audit trails | Requires constant rule updates |
| Manual analysis | Human expertise integration | Scales poorly, subjective interpretation |

Machine learning offers several advantages over traditional approaches:

- Adaptive Learning: ML models can continuously update their understanding of normal behavior patterns, automatically adapting to changing workloads and environmental conditions.

- Pattern Recognition: Deep learning architectures excel at identifying complex, non-linear relationships in high-dimensional data that would be impossible to capture through manual rule creation.

- Temporal Analysis: Recurrent neural networks and transformer models can process sequential data to identify temporal correlations and long-term trends that indicate coordinated attack activities.

- Feature Engineering Automation: Advanced ML techniques can automatically discover relevant features from raw sensor data, reducing dependency on domain experts for feature selection.

However, implementing effective ML-based detection systems presents significant challenges. Collecting sufficient training data for side-channel attacks is difficult because these activities are rare and often undetected. Labeling data accurately requires deep expertise in both machine learning and side-channel attack methodologies. Additionally, deploying ML models in production environments demands careful consideration of performance impact, false positive rates, and integration with existing security infrastructure.

```bash
#!/bin/bash
# Example: Collecting system metrics for traditional monitoring

# Monitor CPU usage
cpu_usage=$(top -bn1 | grep "Cpu(s)" | awk '{print $2}' | cut -d'%' -f1)
echo "CPU Usage: ${cpu_usage}%"

# Monitor memory usage
mem_info=$(free | grep Mem | awk '{printf("%.2f%%", $3/$2 * 100.0)}')
echo "Memory Usage: ${mem_info}"

# Check for unusual network connections
established=$(netstat -an | grep -c ESTABLISHED)
echo "Established connections: ${established}"

# Log metrics for analysis (writing to /var/log requires root)
echo "$(date): CPU=${cpu_usage}, MEM=${mem_info}, ESTABLISHED=${established}" >> /var/log/system_metrics.log
```

Despite these limitations, traditional methods still serve important roles in comprehensive security strategies. They provide fast, lightweight detection capabilities for obvious threats and establish baseline monitoring that can feed into more sophisticated ML systems. The key is recognizing when traditional approaches reach their limits and augmenting them with advanced analytical capabilities.

Actionable Takeaway: Organizations should view traditional detection methods as complementary components within a layered security architecture rather than standalone solutions for advanced threats.

What Makes Transformer-Based Models Effective for Side-Channel Detection?

Transformer architectures, originally developed for natural language processing tasks, have emerged as powerful tools for detecting side-channel attacks in multi-tenant cloud environments. Their effectiveness stems from several key characteristics that align perfectly with the challenges of side-channel detection.

The attention mechanism at the heart of transformer models enables them to identify complex relationships between seemingly unrelated events. In side-channel detection scenarios, this capability proves invaluable for correlating subtle timing variations across multiple system components. For example, a transformer model can simultaneously analyze CPU cache access patterns, memory bandwidth utilization, and network packet timings to identify coordinated attack activities that would be invisible to single-dimensional monitoring approaches.
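The core of that mechanism is scaled dot-product attention. The NumPy sketch below is a simplification: it omits the learned query/key/value projections and multiple heads, and the three telemetry signals named in the comment are hypothetical. Each row of the returned weight matrix shows how strongly one time step attends to every other time step.

```python
import numpy as np


def scaled_dot_product_attention(q, k, v):
    """Return (output, weights) for q, k, v of shape (seq_len, d)."""
    d_k = q.shape[-1]
    scores = q @ k.T / np.sqrt(d_k)                 # pairwise similarity between time steps
    scores -= scores.max(axis=-1, keepdims=True)    # subtract row max for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # softmax: each row sums to 1
    return weights @ v, weights


# Hypothetical window: 6 time steps x 3 signals
# (cache misses, memory bandwidth, packet inter-arrival gap)
rng = np.random.default_rng(1)
x = rng.normal(size=(6, 3))
out, w = scaled_dot_product_attention(x, x, x)  # self-attention without learned projections
print(np.round(w, 2))
```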

Multi-head attention allows transformers to process different aspects of input data concurrently. This parallel processing capability mirrors the multi-faceted nature of side-channel attacks, which often involve coordinated manipulation of various system resources. By examining multiple signal streams simultaneously, transformer models can detect intricate attack patterns that emerge only when viewed holistically.

Positional encoding mechanisms enable transformers to preserve temporal relationships in sequential data. Side-channel attacks frequently depend on precise timing coordination between observation and inference phases. Traditional models that ignore temporal context may miss critical attack signatures, while transformers can leverage temporal information to enhance detection accuracy significantly.
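One common choice is the fixed sinusoidal encoding from the original Transformer design; learned position embeddings are an alternative. PyTorch's `nn.TransformerEncoder` does not add positional information on its own, so in a model like the one below these values would be added to the embedded inputs before the encoder. This NumPy sketch assumes an even `d_model`.

```python
import numpy as np


def sinusoidal_positional_encoding(seq_len, d_model):
    """PE[pos, 2i] = sin(pos / 10000^(2i/d_model)), PE[pos, 2i+1] = cos(same angle)."""
    if d_model % 2:
        raise ValueError("d_model must be even for this sketch")
    positions = np.arange(seq_len)[:, None]   # (seq_len, 1)
    dims = np.arange(0, d_model, 2)[None, :]  # (1, d_model / 2), the 2i values
    angles = positions / np.power(10000.0, dims / d_model)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)              # even dimensions
    pe[:, 1::2] = np.cos(angles)              # odd dimensions
    return pe


# e.g. 50 time steps of telemetry embedded into 128 dimensions
pe = sinusoidal_positional_encoding(50, 128)
```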


Consider the following implementation example demonstrating how transformer attention mechanisms can identify correlated side-channel signals:

```python
import numpy as np
import torch
import torch.nn as nn
from sklearn.preprocessing import StandardScaler


class SideChannelTransformer(nn.Module):
    def __init__(self, input_dim, d_model=128, nhead=8, num_layers=6):
        super().__init__()
        self.embedding = nn.Linear(input_dim, d_model)
        encoder_layer = nn.TransformerEncoderLayer(
            d_model=d_model, nhead=nhead, batch_first=True
        )
        self.transformer = nn.TransformerEncoder(encoder_layer, num_layers)
        self.classifier = nn.Linear(d_model, 2)  # Normal vs Attack

    def forward(self, x):
        # x shape: (batch_size, sequence_length, features)
        # No positional encoding is added, so the encoder is permutation-equivariant
        # and, after mean pooling, the prediction ignores time-step order.
        embedded = self.embedding(x)
        transformed = self.transformer(embedded)
        # Global average pooling over the time dimension
        pooled = torch.mean(transformed, dim=1)
        return self.classifier(pooled)


# Example usage with synthetic side-channel data
def generate_side_channel_data(num_samples=1000, seq_length=50, features=10):
    # Generate normal behavior data
    normal_data = np.random.normal(0, 1, (num_samples // 2, seq_length, features))

    # Generate attack behavior data with subtle correlations
    attack_data = np.random.normal(0, 1, (num_samples // 2, seq_length, features))
    # Introduce coordinated timing patterns
    for i in range(seq_length):
        if i % 10 == 0:  # Periodic correlation
            attack_data[:, i, 0] += 2.0
            attack_data[:, i, 1] -= 1.5

    data = np.concatenate([normal_data, attack_data], axis=0)
    labels = np.concatenate([np.zeros(num_samples // 2), np.ones(num_samples // 2)])
    return data, labels


# Training example
model = SideChannelTransformer(input_dim=10)
data, labels = generate_side_channel_data()
scaler = StandardScaler()
data_scaled = scaler.fit_transform(data.reshape(-1, 10)).reshape(data.shape)

# Convert to PyTorch tensors
tensor_data = torch.tensor(data_scaled, dtype=torch.float32)
tensor_labels = torch.tensor(labels, dtype=torch.long)

# Training loop (simplified: full batch, no validation split)
optimizer = torch.optim.Adam(model.parameters(), lr=0.001)
criterion = nn.CrossEntropyLoss()

for epoch in range(100):
    optimizer.zero_grad()
    outputs = model(tensor_data)
    loss = criterion(outputs, tensor_labels)
    loss.backward()
    optimizer.step()

    if epoch % 20 == 0:
        print(f"Epoch {epoch}, Loss: {loss.item():.4f}")
```

Self-attention mechanisms allow transformers to dynamically weight different input features based on their relevance to the detection task. This adaptive feature weighting proves particularly useful when dealing with heterogeneous side-channel data sources that vary in importance across different attack scenarios. The model can learn to focus on the most discriminative signals while suppressing noise from less relevant sources.

Transformer architectures parallelize well on modern accelerators, and batched inference lets a single model score many concurrent monitoring streams, which suits the large data volumes typical in cloud environments. Because self-attention cost grows quadratically with sequence length, however, real-time deployments must bound the window of telemetry each prediction covers to keep latency predictable.

Pre-training and fine-tuning paradigms allow transformer models to leverage general pattern recognition capabilities learned from large datasets before specializing on specific side-channel detection tasks. This transfer learning approach can significantly reduce the amount of labeled training data required for effective model performance.

Practical Application: Security teams can leverage pre-trained transformer models available through platforms like mr7.ai to jumpstart their side-channel detection capabilities without requiring extensive ML expertise.

How Can Machine Learning Models Detect Subtle Timing Variations in Cloud Environments?

Detecting subtle timing variations represents one of the most challenging aspects of side-channel attack detection in multi-tenant cloud environments. These variations often occur at microsecond or nanosecond scales, making them nearly imperceptible to traditional monitoring systems. However, advanced machine learning models can identify these minute temporal anomalies through sophisticated pattern recognition and statistical analysis techniques.

High-resolution timestamping forms the foundation of timing variation detection. Modern systems can capture timing information with nanosecond precision using hardware performance counters, kernel-level tracing facilities, and specialized profiling tools. The challenge lies not in collecting this data but in distinguishing legitimate performance variations from malicious timing manipulation.

Statistical modeling approaches treat timing data as time series and apply techniques such as autocorrelation analysis, spectral density estimation, and change point detection. These methods can identify periodic patterns, sudden shifts in timing distributions, and correlations between timing events that suggest coordinated attack activities. For example, an attacker monitoring cache access patterns might introduce subtle delays that create distinctive timing signatures detectable through careful statistical analysis.
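As one concrete instance of change point detection, a one-sided CUSUM accumulates deviations above an expected mean and raises an alarm once the running sum passes a threshold. CUSUM is our choice of technique here, and the target mean, slack, and threshold values are illustrative assumptions.

```python
import numpy as np


def cusum_alarm(series, target_mean, slack, threshold):
    """One-sided (upward) CUSUM. Returns the index of the first alarm, or None."""
    s = 0.0
    for i, x in enumerate(series):
        # Accumulate only deviations larger than the allowed slack; never go below zero
        s = max(0.0, s + (x - target_mean - slack))
        if s > threshold:
            return i
    return None


# Inter-arrival times (microseconds): stable at 50, then shifted up by 5
timings = np.concatenate([np.full(100, 50.0), np.full(100, 55.0)])
print(cusum_alarm(timings, target_mean=50.0, slack=1.0, threshold=10.0))  # 102
```

Each post-shift sample adds 4 to the running sum, so the alarm fires on the third sample after the shift (index 102).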

Deep learning architectures excel at discovering complex temporal patterns that would be impossible to specify manually. Recurrent neural networks (RNNs) and their variants like Long Short-Term Memory (LSTM) networks can process sequential timing data to identify long-range dependencies and temporal motifs indicative of side-channel exploitation. Convolutional neural networks (CNNs) applied to timing sequences can detect localized patterns and recurring structures that escape traditional statistical methods.

```python
import numpy as np
from scipy import signal, stats
from sklearn.ensemble import IsolationForest


class TimingAnalyzer:
    def __init__(self, window_size=100):
        self.window_size = window_size
        self.isolation_forest = IsolationForest(contamination=0.1)

    def extract_timing_features(self, timestamps):
        # Calculate inter-arrival times
        intervals = np.diff(timestamps)

        # Statistical features
        features = {
            'mean_interval': np.mean(intervals),
            'std_interval': np.std(intervals),
            'skewness': stats.skew(intervals),
            'kurtosis': stats.kurtosis(intervals),
            'entropy': self.calculate_entropy(intervals),
            'periodicity': float(self.detect_periodicity(intervals)),
        }
        return features

    def calculate_entropy(self, data):
        # Shannon entropy (bits) of the histogram of min-max normalized intervals
        normalized = (data - np.min(data)) / (np.max(data) - np.min(data) + 1e-10)
        counts, _ = np.histogram(normalized, bins=50)
        probs = counts[counts > 0] / counts.sum()  # drop empty bins to avoid log(0)
        return -np.sum(probs * np.log2(probs))

    def detect_periodicity(self, data):
        # Autocorrelation of the mean-centered series (non-negative lags only)
        centered = data - np.mean(data)
        autocorr = np.correlate(centered, centered, mode='full')
        autocorr = autocorr[len(autocorr) // 2:]
        # Find peaks in the first 50 lags
        peaks, _ = signal.find_peaks(autocorr[:50])
        return len(peaks) > 2  # Multiple peaks suggest periodicity

    def train_anomaly_detector(self, normal_timing_data):
        features_list = []
        for timestamps in normal_timing_data:
            features = self.extract_timing_features(timestamps)
            features_list.append(list(features.values()))
        self.isolation_forest.fit(features_list)

    def detect_anomalies(self, test_timing_data):
        anomalies = []
        for timestamps in test_timing_data:
            features = self.extract_timing_features(timestamps)
            feature_vector = np.array(list(features.values())).reshape(1, -1)
            prediction = self.isolation_forest.predict(feature_vector)
            if prediction[0] == -1:  # Anomaly detected
                anomalies.append(timestamps)
        return anomalies


# Example usage
analyzer = TimingAnalyzer()

# Simulate normal timing data
normal_data = []
for _ in range(100):
    # Generate realistic timing intervals (microseconds)
    base_intervals = np.random.exponential(scale=50, size=200)  # Mean ~50 microseconds
    noise = np.random.normal(0, 2, size=200)  # Small random variations
    intervals = np.maximum(base_intervals + noise, 0.0)  # keep intervals non-negative
    timestamps = np.cumsum(np.insert(intervals, 0, 0))
    normal_data.append(timestamps)

# Train the anomaly detector
analyzer.train_anomaly_detector(normal_data)

# Test with potentially anomalous data: simulate an attack timing pattern
attack_data = []
for _ in range(10):
    base_intervals = np.random.exponential(scale=50, size=200)
    # Introduce periodic timing manipulation
    for i in range(len(base_intervals)):
        if i % 20 == 0:  # Every 20th interval
            base_intervals[i] += 100  # Add 100 microsecond delay
    timestamps = np.cumsum(np.insert(base_intervals, 0, 0))
    attack_data.append(timestamps)

# Detect anomalies
anomalies = analyzer.detect_anomalies(attack_data)
print(f"Detected {len(anomalies)} anomalous timing sequences")
```

Frequency domain analysis transforms timing data into the frequency domain using techniques like Fourier transforms or wavelet analysis. This approach can reveal hidden periodicities and frequency components that indicate coordinated timing manipulation. Attackers attempting to synchronize their monitoring activities with victim operations often introduce distinctive frequency signatures that stand out against background noise.
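A minimal Fourier-based sketch: remove the mean, take the real FFT, and report the period of the strongest non-zero frequency. The synthetic 20-sample "probe rhythm" buried in noise is a hypothetical stand-in for an attacker's monitoring cadence; wavelet analysis is not shown.

```python
import numpy as np


def dominant_period(series, sample_interval=1.0):
    """Period (in units of sample_interval) of the strongest non-DC frequency component."""
    x = np.asarray(series, dtype=float)
    x = x - x.mean()                               # remove the DC component
    spectrum = np.abs(np.fft.rfft(x))
    freqs = np.fft.rfftfreq(len(x), d=sample_interval)
    peak = np.argmax(spectrum[1:]) + 1             # skip the zero-frequency bin
    return 1.0 / freqs[peak]


rng = np.random.default_rng(2)
n = 1000
noise = rng.normal(0.0, 1.0, n)
probe_rhythm = 2.0 * np.sin(2 * np.pi * np.arange(n) / 20)  # hypothetical 20-sample cycle
print(dominant_period(noise + probe_rhythm))  # approximately 20
```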

Ensemble methods combine multiple detection algorithms to improve overall accuracy and robustness. By aggregating predictions from diverse models, ensemble approaches can reduce false positive rates while maintaining high detection sensitivity. Techniques like voting classifiers, stacking, and boosting can effectively integrate timing-based detection with other side-channel indicators.

Real-time processing capabilities ensure that timing analysis can keep pace with high-frequency system operations typical in cloud environments. Streaming algorithms and online learning techniques enable continuous model updates without requiring complete retraining, allowing detection systems to adapt to evolving attack patterns.
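A small example of a streaming statistic: Welford's algorithm maintains a running mean and variance in constant memory, so each new reading can be scored and then folded into the model without storing history. This is a simple online baseline rather than a full online-learning system, and the threshold and warm-up length are illustrative assumptions.

```python
import math


class StreamingZScore:
    """Online mean/variance (Welford's algorithm) with a z-score alert."""

    def __init__(self, threshold=4.0, warmup=30):
        self.threshold = threshold  # illustrative alert level, in standard deviations
        self.warmup = warmup        # samples to observe before alerting
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0               # running sum of squared deviations from the mean

    def update(self, x):
        """Score x against the current model, then fold it in. Returns True if anomalous."""
        anomalous = False
        if self.n >= self.warmup:
            std = math.sqrt(self.m2 / (self.n - 1))
            anomalous = std > 0 and abs(x - self.mean) / std > self.threshold
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)
        return anomalous


det = StreamingZScore()
readings = [50.0, 51.0, 49.0] * 20 + [90.0]
flags = [det.update(r) for r in readings]
print(flags[-1])  # True
```

Anomalous readings are still folded into the model here; a production variant might skip updates for flagged points so an attacker cannot slowly drag the baseline.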

Technical Insight: Effective timing variation detection requires combining high-resolution data collection with sophisticated analytical techniques that can distinguish malicious patterns from legitimate system behavior variations.

What Network Traffic Patterns Indicate Cross-Tenant Data Exfiltration Attempts?

Network traffic analysis plays a crucial role in detecting cross-tenant side-channel attacks, particularly those involving data exfiltration attempts. While these attacks often operate below traditional detection thresholds, careful examination of traffic patterns can reveal subtle indicators of unauthorized information transfer between tenants sharing cloud infrastructure.

Traffic volume anomalies represent one of the most straightforward indicators of potential data exfiltration. Attackers typically require sustained communication channels to transmit extracted information, leading to unusual outbound traffic patterns from compromised tenants. However, sophisticated attackers may employ traffic shaping techniques to mimic legitimate usage patterns, making volume-based detection challenging without contextual analysis.

Timing correlation analysis examines the relationship between network traffic patterns and system resource usage to identify coordinated attack activities. Legitimate applications typically exhibit predictable traffic-resource correlation patterns, while side-channel attacks often show unusual temporal relationships. For example, an attacker monitoring cache access patterns might generate network traffic bursts precisely synchronized with victim tenant operations.
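A sketch of lagged cross-correlation, assuming the provider can observe both a resource signal and a traffic signal on a common clock. It searches for the lag at which traffic best tracks the resource signal; the signal names and the 3-step delay are synthetic, hypothetical choices.

```python
import numpy as np


def best_lag(resource, traffic, max_lag):
    """Return (lag, corr) maximizing Pearson correlation of traffic[t + lag] with resource[t]."""
    best = (0, -1.0)
    for lag in range(max_lag + 1):
        a = resource[: len(resource) - lag]
        b = traffic[lag:]
        corr = float(np.corrcoef(a, b)[0, 1])
        if corr > best[1]:
            best = (lag, corr)
    return best


rng = np.random.default_rng(3)
cache_misses = rng.normal(0.0, 1.0, 500)  # synthetic victim-side resource signal
# Synthetic attacker traffic that trails the resource signal by 3 steps
packets = np.roll(cache_misses, 3) + rng.normal(0.0, 0.3, 500)
print(best_lag(cache_misses, packets, max_lag=10))  # lag 3, correlation near 0.96
```

A strong correlation at a consistent non-zero lag between one tenant's activity and another tenant's traffic is the kind of temporal relationship the paragraph above describes.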

Protocol-level fingerprinting involves analyzing packet headers, payload characteristics, and communication patterns to identify suspicious network behavior. Side-channel attacks may utilize unconventional protocols or communication patterns that deviate from normal tenant activities. Deep packet inspection combined with machine learning can detect these subtle protocol anomalies that escape traditional signature-based detection.

```bash
#!/bin/bash
# Example: Network traffic analysis script (tcpdump requires root)

INTERFACE="eth0"
ANALYSIS_WINDOW=60      # seconds
THRESHOLD_MULTIPLIER=3

# Count packets seen on $INTERFACE during one analysis window
# (tcpdump prints one line per packet with -q)
count_packets() {
    timeout "$ANALYSIS_WINDOW" tcpdump -i "$INTERFACE" -nn -q 2>/dev/null | wc -l
}

analyze_traffic() {
    echo "Analyzing network traffic on $INTERFACE for $ANALYSIS_WINDOW seconds..."

    # Capture baseline traffic statistics
    baseline_packets=$(count_packets)
    [ "$baseline_packets" -eq 0 ] && baseline_packets=1  # avoid a zero threshold

    # Continuous monitoring loop
    while true; do
        current_packets=$(count_packets)

        # Calculate anomaly threshold
        threshold=$((baseline_packets * THRESHOLD_MULTIPLIER))

        if [ "$current_packets" -gt "$threshold" ]; then
            echo "[ALERT] Unusual traffic spike detected: $current_packets packets (> $threshold threshold)"
            # Additional analysis
            echo "Performing deep packet inspection..."
            timeout 10 tcpdump -i "$INTERFACE" -nn -vvv 2>/dev/null | head -20
            # Log incident
            echo "$(date): Traffic anomaly - $current_packets packets" >> /var/log/traffic_alerts.log
        fi

        sleep "$ANALYSIS_WINDOW"
    done
}

# Start traffic analysis in the background
analyze_traffic &

# Protocol fingerprinting example
protocol_analysis() {
    echo "Analyzing protocol usage patterns..."

    # Count listening sockets per protocol (-l lists listeners, not connections)
    tcp_count=$(netstat -tuln | grep -c '^tcp')
    udp_count=$(netstat -tuln | grep -c '^udp')
    established_connections=$(ss -s | grep -o "estab [0-9]*" | cut -d' ' -f2)

    echo "TCP listening sockets: $tcp_count"
    echo "UDP listening sockets: $udp_count"
    echo "Established connections: $established_connections"

    # Check for unusual connection patterns
    if [ "$established_connections" -gt 100 ]; then
        echo "[WARNING] High number of established connections detected"
        ss -tun state established | head -10
    fi
}

protocol_analysis
```

Flow-based analysis examines network flows to identify unusual communication patterns between tenants. Cross-tenant attacks may manifest as unexpected data flows between seemingly unrelated tenants or abnormal flow characteristics such as unusually small packets, irregular timing, or asymmetric communication patterns that deviate from normal inter-tenant communication.
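A sketch of per-conversation flow features, assuming packets arrive as hypothetical `(src, dst, size_bytes)` tuples rather than any particular NetFlow export format. It computes mean packet size and byte asymmetry, then flags conversations that are both small-packet and one-directional; both thresholds are illustrative assumptions.

```python
from collections import defaultdict


def flow_features(packets):
    """packets: iterable of (src, dst, size_bytes). Returns stats per bidirectional conversation."""
    stats = defaultdict(lambda: {"fwd_bytes": 0, "rev_bytes": 0, "packets": 0})
    for src, dst, size in packets:
        key = tuple(sorted((src, dst)))  # both directions map to one conversation
        s = stats[key]
        s["packets"] += 1
        s["fwd_bytes" if (src, dst) == key else "rev_bytes"] += size
    result = {}
    for key, s in stats.items():
        total = s["fwd_bytes"] + s["rev_bytes"]
        result[key] = {
            "packets": s["packets"],
            "mean_size": total / s["packets"],
            # 1.0 = all bytes flow one way, 0.0 = perfectly balanced
            "asymmetry": abs(s["fwd_bytes"] - s["rev_bytes"]) / total if total else 0.0,
        }
    return result


def suspicious(features, max_mean_size=100, min_asymmetry=0.9):
    # Illustrative thresholds only; real values should come from per-tenant baselines
    return [k for k, f in features.items()
            if f["mean_size"] <= max_mean_size and f["asymmetry"] >= min_asymmetry]


pkts = [("10.0.0.5", "10.0.1.9", 60)] * 50 + [
    ("10.0.0.7", "10.0.0.8", 1400),
    ("10.0.0.8", "10.0.0.7", 1200),
]
print(suspicious(flow_features(pkts)))  # [('10.0.0.5', '10.0.1.9')]
```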

Encrypted traffic analysis focuses on metadata and behavioral characteristics of encrypted communications that might indicate covert channels. Even when payload content remains inaccessible, characteristics like packet sizes, timing patterns, and connection establishment behaviors can reveal suspicious activities. Machine learning models trained on encrypted traffic metadata can identify subtle anomalies indicative of side-channel exploitation.

DNS tunneling detection looks for DNS queries with unusual characteristics that might indicate data exfiltration through DNS channels. Side-channel attackers may abuse DNS protocols to transmit extracted information, creating distinctive query patterns including unusually long domain names, high query frequencies, or non-standard record types.
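A common heuristic combines label length with character entropy, since encoded payloads tend to produce long, high-entropy labels. The sketch below is one such heuristic; the 40-character and 3.5-bit thresholds are illustrative assumptions rather than standard values, and the example domain is hypothetical.

```python
import math
from collections import Counter


def shannon_entropy(s):
    """Bits per character of the string's character distribution."""
    if not s:
        return 0.0
    n = len(s)
    return -sum(c / n * math.log2(c / n) for c in Counter(s).values())


def dns_query_suspicious(qname, max_label_len=40, min_entropy=3.5):
    """Flag queries whose longest label is both long and high-entropy (illustrative thresholds)."""
    labels = qname.rstrip(".").split(".")
    longest = max(labels, key=len)
    return len(longest) >= max_label_len and shannon_entropy(longest) >= min_entropy


encoded = "abcdefghijklmnopqrstuvwxyz234567abcdefghijklmnop"  # 48-char stand-in for encoded data
print(dns_query_suspicious("www.example.com"))                 # False
print(dns_query_suspicious(encoded + ".exfil.example.com"))    # True
```

Query frequency and unusual record types, also mentioned above, would be tracked separately, for example as per-source counters over a time window.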

Behavioral baselining establishes normal network behavior profiles for individual tenants and compares current activity against these baselines. Deviations from established patterns, particularly those involving communication with unexpected destinations or unusual timing, can indicate compromise. Advanced systems use machine learning to continuously update behavioral models based on evolving tenant activities.
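A deliberately small sketch of one baselining dimension: which destinations each tenant normally contacts. The `TenantBaseline` class, the `min_observations` cut-off, and the tenant and destination names are hypothetical; a production profile would also track volumes, timing, and decay old observations.

```python
from collections import defaultdict


class TenantBaseline:
    """Hypothetical per-tenant profile of the destinations each tenant normally contacts."""

    def __init__(self, min_observations=3):
        self.min_observations = min_observations  # illustrative cut-off
        self.counts = defaultdict(lambda: defaultdict(int))  # tenant -> destination -> count

    def learn(self, tenant, destination):
        """Fold one observed connection into the tenant's profile."""
        self.counts[tenant][destination] += 1

    def is_unexpected(self, tenant, destination):
        """True if the tenant has rarely or never contacted this destination."""
        seen = self.counts.get(tenant, {}).get(destination, 0)
        return seen < self.min_observations


profile = TenantBaseline()
for _ in range(10):
    profile.learn("tenant-a", "db.internal")
print(profile.is_unexpected("tenant-a", "db.internal"))    # False
print(profile.is_unexpected("tenant-a", "198.51.100.23"))  # True
```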

| Traffic Pattern Indicator | Detection Technique | Effectiveness |
|---|---|---|
| Volume anomalies | Statistical thresholding | Medium |
| Timing correlations | Cross-correlation analysis | High |
| Protocol fingerprinting | Deep packet inspection + ML | High |
| Flow characteristics | NetFlow analysis | Medium-High |
| Encrypted metadata | Behavioral analysis | Medium |
| DNS anomalies | Query pattern analysis | Medium |

Operational Recommendation: Implement multi-layered network traffic analysis combining traditional monitoring with machine learning-based anomaly detection for comprehensive cross-tenant attack visibility.

How Do Cache Access Patterns Reveal Covert Side-Channel Communication Channels?

Cache access pattern analysis represents one of the most powerful techniques for detecting covert side-channel communication channels in multi-tenant cloud environments. Modern processors employ complex cache hierarchies that can inadvertently leak information between co-located tenants through carefully orchestrated access timing and eviction strategies.

Cache timing analysis exploits the fact that cache hits and misses exhibit measurably different access latencies. Attackers can monitor these timing variations to infer information about victim tenant activities, particularly cryptographic operations that involve predictable memory access patterns. By establishing controlled cache states and measuring subsequent access times, attackers can reconstruct sensitive data such as encryption keys or business-critical information.

Prime+Probe attacks represent a classic cache-based side-channel technique where attackers first "prime" the cache by loading specific memory locations, then allow the victim to execute, and finally "probe" the same locations to determine which cache lines were evicted. Probe accesses that come back slow indicate lines the victim displaced, revealing which cache sets the victim touched and, by extension, information about its memory access patterns. Detecting these coordinated prime-probe sequences requires sophisticated temporal analysis capabilities.
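The mechanics can be seen in a toy model of a single cache set. This sketch assumes strict LRU replacement and 8 ways; real processors use pseudo-LRU or other policies and add noise, so it illustrates the idea rather than reproducing hardware behavior. The probe runs in reverse priming order because, under strict LRU, probing in the same order would turn one eviction into a cascade of misses.

```python
from collections import OrderedDict


class CacheSet:
    """One set of a W-way set-associative cache with strict LRU replacement (toy model)."""

    def __init__(self, ways):
        self.ways = ways
        self.lines = OrderedDict()  # tag -> None, ordered from LRU to MRU

    def access(self, tag):
        """Return True on a hit, False on a miss (the line is then filled)."""
        if tag in self.lines:
            self.lines.move_to_end(tag)     # mark as most recently used
            return True
        if len(self.lines) >= self.ways:
            self.lines.popitem(last=False)  # evict the least recently used line
        self.lines[tag] = None
        return False


def prime_probe_round(victim_accesses_set, ways=8):
    """Prime every way, optionally let the victim run, then count probe misses."""
    cache_set = CacheSet(ways)
    attacker_tags = [f"attacker-{i}" for i in range(ways)]
    for tag in attacker_tags:               # prime: fill the whole set
        cache_set.access(tag)
    if victim_accesses_set:
        cache_set.access("victim-line")     # victim touches a line mapping to this set
    # probe in reverse order so a single eviction shows up as a single miss
    return sum(not cache_set.access(tag) for tag in reversed(attacker_tags))


print(prime_probe_round(False), prime_probe_round(True))  # 0 1
```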

Eviction set construction involves identifying specific cache sets that can be reliably manipulated to create observable effects. Attackers construct eviction sets by finding memory addresses that map to the same cache set, allowing them to force cache evictions and create timing variations. Monitoring for systematic eviction set usage can indicate active side-channel exploitation attempts.
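For a simple set-indexed cache, the set is `(address // line_size) % num_sets`, so addresses spaced `line_size * num_sets` bytes apart are congruent. The sketch below assumes a 32 KiB, 8-way cache with 64-byte lines (64 sets) and ignores the hashed slice selection used by modern last-level caches; a group of at least `ways` distinct congruent lines is enough to evict the entire set.

```python
from collections import defaultdict


def set_index(addr, line_size=64, num_sets=64):
    """Cache set for an address in a simple set-indexed cache (no slice hashing)."""
    return (addr // line_size) % num_sets


def congruent_groups(addresses, ways=8, line_size=64, num_sets=64):
    """Group distinct cache lines by set; keep sets with at least `ways` lines (a full eviction set)."""
    groups = defaultdict(set)
    for addr in addresses:
        groups[set_index(addr, line_size, num_sets)].add(addr // line_size)
    return {s: sorted(lines) for s, lines in groups.items() if len(lines) >= ways}


# Addresses 4096 bytes apart all land in set 0 of this cache geometry
print(congruent_groups([0x1000 * i for i in range(8)]))
```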

```python
import numpy as np
from collections import defaultdict


class CachePatternAnalyzer:
    def __init__(self, cache_size=32768, line_size=64, ways=8,
                 log_path='cache_alerts.log'):
        # 32KB, 8-way cache with 64B lines -> 64 sets
        self.cache_size = cache_size
        self.line_size = line_size
        self.sets = cache_size // (line_size * ways)
        self.log_path = log_path
        self.access_history = defaultdict(list)
        self.suspicious_patterns = []
        self.flagged = set()  # (pattern type, set index) pairs already reported

    def record_access(self, address, timestamp, latency):
        # Calculate cache set index
        set_index = (address // self.line_size) % self.sets
        self.access_history[set_index].append({
            'timestamp': timestamp,
            'latency': latency,
            'address': address,
        })
        # Analyze for suspicious patterns
        self.analyze_set_activity(set_index)

    def analyze_set_activity(self, set_index):
        accesses = self.access_history[set_index]
        if len(accesses) < 10:  # Need sufficient data
            return

        # Check for prime+probe pattern: alternating high/low latency in the last 20 accesses
        recent_accesses = accesses[-20:]
        latencies = [a['latency'] for a in recent_accesses]
        latency_diffs = np.diff(latencies)

        # Prime+probe often shows large latency swings
        large_swings = int(np.sum(np.abs(latency_diffs) > 100))  # Threshold in cycles
        if large_swings > 5 and ('potential_prime_probe', set_index) not in self.flagged:
            pattern = {
                'type': 'potential_prime_probe',
                'set_index': set_index,
                'timestamp': recent_accesses[-1]['timestamp'],
                'swing_count': large_swings,
            }
            self.flagged.add(('potential_prime_probe', set_index))  # report each set once
            self.suspicious_patterns.append(pattern)
            self.alert_on_pattern(pattern)

    def detect_eviction_sets(self):
        # Look for many distinct pages mapping to the same busy cache set
        for set_index, accesses in self.access_history.items():
            if len(accesses) <= 50:  # Only consider high-activity sets
                continue
            addresses = [a['address'] for a in accesses[-100:]]
            # Group addresses by 4KB page
            page_groups = defaultdict(list)
            for addr in addresses:
                page_groups[addr // 4096].append(addr)
            # Suspicious if many different pages access the same set
            if len(page_groups) > 10:
                self.suspicious_patterns.append({
                    'type': 'eviction_set_construction',
                    'set_index': set_index,
                    'pages_involved': len(page_groups),
                    'addresses': addresses[-20:],
                })

    def alert_on_pattern(self, pattern):
        print(f"[ALERT] Suspicious cache pattern detected: {pattern['type']}")
        print(f"  Set Index: {pattern.get('set_index', 'N/A')}")
        print(f"  Timestamp: {pattern.get('timestamp', 'N/A')}")
        # Log for security team review
        with open(self.log_path, 'a') as f:
            f.write(f"{pattern}\n")


# Example simulation
analyzer = CachePatternAnalyzer()


def simulate_normal_access():
    for i in range(1000):
        address = np.random.randint(0, 1000000)
        timestamp = i * 0.001  # 1ms intervals
        latency = np.random.normal(50, 5)  # Normal distribution around 50 cycles
        analyzer.record_access(address, timestamp, latency)


def simulate_prime_probe_attack():
    for i in range(100):
        # Prime phase - load cache lines
        for j in range(10):
            address = 1000 + j * 64  # Sequential cache lines
            timestamp = i * 0.1 + j * 0.001
            latency = 40 + np.random.normal(0, 2)  # Fast access (cache hit)
            analyzer.record_access(address, timestamp, latency)

        # Probe phase - check cache state
        for j in range(10):
            address = 1000 + j * 64
            timestamp = i * 0.1 + 0.05 + j * 0.001
            # Slow access: the victim evicted the line (simulated miss)
            latency = 200 + np.random.normal(0, 10)
            analyzer.record_access(address, timestamp, latency)


# Run simulations
simulate_normal_access()
simulate_prime_probe_attack()
analyzer.detect_eviction_sets()

print(f"Detected {len(analyzer.suspicious_patterns)} suspicious patterns")
```

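In a Flush+Reload attack, the attacker flushes specific cache lines from the cache hierarchy (for example, with the x86 clflush instruction) and then reloads them to measure access times. A fast reload means the victim touched that line in the meantime. The technique therefore requires memory that is shared between attacker and victim, such as shared libraries or deduplicated pages. It can reveal precise timing information about victim operations, particularly when victims access predictable memory locations. Detecting flush+reload activity requires monitoring for systematic cache flush operations followed by rapid reload attempts.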
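The flush-then-reload signature can be approximated from an event trace. The sketch below assumes a hypothetical trace of (timestamp, op, address) tuples, where op is "flush" or "load". The function name, the 100-microsecond window and the pair threshold are illustrative assumptions, not tuned values.

```python
from collections import defaultdict

def find_flush_reload_candidates(events, window=1e-4, min_pairs=20):
    """Flag addresses that are repeatedly flushed and then reloaded shortly after.

    events: iterable of (timestamp_seconds, op, address), op in {"flush", "load"}.
    window: maximum flush-to-reload gap (seconds) counted as a pair (illustrative).
    min_pairs: number of flush->reload pairs needed before an address is flagged.
    """
    last_flush = {}                 # address -> timestamp of its most recent flush
    pair_counts = defaultdict(int)  # address -> number of quick flush->reload pairs
    for ts, op, addr in sorted(events):
        if op == "flush":
            last_flush[addr] = ts
        elif op == "load" and addr in last_flush:
            # Consume the pending flush so one flush counts at most once
            if ts - last_flush.pop(addr) <= window:
                pair_counts[addr] += 1
    return {addr: n for addr, n in pair_counts.items() if n >= min_pairs}
```

Ordinary code rarely flushes and reloads the same line in a tight loop, which is why pair counts are a useful signal. Some legitimate workloads do issue flushes, so flagged addresses are leads for review rather than verdicts.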

Cache conflict analysis examines patterns of cache set conflicts that might indicate deliberate manipulation. When multiple tenants compete for the same cache resources, careful analysis of conflict patterns can reveal coordinated attack activities. Machine learning models can identify unusual conflict distributions that suggest intentional cache manipulation rather than random resource contention.
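A simple statistical stand-in for the "unusual conflict distribution" signal compares per-set conflict counts against a baseline recorded during benign operation. The sketch below uses KL divergence for that comparison. Both the choice of divergence and the idea of thresholding its value are assumptions, not a prescribed method.

```python
import numpy as np

def conflict_divergence(observed_counts, baseline_counts, eps=1e-9):
    """KL divergence D(observed || baseline) between per-set conflict distributions.

    Both arguments are arrays of eviction/conflict counts indexed by cache set.
    A large value means conflicts concentrate on sets where benign contention
    rarely produces them; eps avoids log(0) for sets with no conflicts.
    """
    p = np.asarray(observed_counts, dtype=float) + eps
    q = np.asarray(baseline_counts, dtype=float) + eps
    p /= p.sum()
    q /= q.sum()
    return float(np.sum(p * np.log(p / q)))
```

Random contention spreads conflicts roughly like the baseline and scores near zero. An attacker hammering a few targeted sets pushes the score up sharply.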

Memory access correlation studies the relationship between cache access patterns and application behavior to identify anomalies. Legitimate applications typically exhibit predictable cache access patterns related to their functional requirements, while side-channel attacks often show artificial access patterns designed to maximize information leakage.
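One way to operationalise "access patterns that the application's own behavior does not explain" is to correlate a tenant's per-interval cache activity with its application-level load. The sketch below uses the request rate for that load. Using request rate as the behavioral proxy, and the 0.5 correlation cutoff, are both illustrative assumptions.

```python
import numpy as np

def access_workload_correlation(cache_misses, request_rate):
    """Pearson correlation between per-interval cache misses and request rate."""
    x = np.asarray(cache_misses, dtype=float)
    y = np.asarray(request_rate, dtype=float)
    if x.std() == 0 or y.std() == 0:
        return 0.0  # no variation: correlation undefined, treat as unexplained
    return float(np.corrcoef(x, y)[0, 1])

def is_unexplained(cache_misses, request_rate, min_corr=0.5):
    """Flag cache activity that the tenant's own workload does not account for."""
    return access_workload_correlation(cache_misses, request_rate) < min_corr
```

A web service's cache misses typically rise and fall with its traffic. A probing loop keeps a steady miss rate regardless of load, so its correlation stays near zero.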

Hardware performance counter monitoring uses CPU performance counters to collect detailed cache behavior metrics. Modern processors expose counters for events such as cache references and cache misses, which can reveal cache access patterns in fine detail. However, interpreting this data requires careful analysis to distinguish normal application behavior from malicious activity.
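A basic first pass over counter data converts per-interval counter deltas into a miss rate and flags intervals that deviate strongly from a benign baseline. The sketch below assumes the cache-misses and cache-references deltas were already collected, for example with Linux `perf stat -e cache-references,cache-misses -I 100`. It also assumes that the first samples are benign. The z-score threshold is an illustrative choice.

```python
import numpy as np

def miss_rate_anomalies(misses, references, baseline_intervals=50, z_threshold=4.0):
    """Return indices of sampling intervals whose cache miss rate is anomalous.

    misses / references: per-interval deltas of the cache-misses and
    cache-references counters. The first `baseline_intervals` samples are
    assumed to be benign and define the reference mean and spread.
    """
    misses = np.asarray(misses, dtype=float)
    references = np.maximum(np.asarray(references, dtype=float), 1.0)  # avoid /0
    rate = misses / references
    base = rate[:baseline_intervals]
    mu, sigma = base.mean(), base.std() + 1e-12
    z = (rate - mu) / sigma
    return np.flatnonzero(np.abs(z) > z_threshold)
```

A z-score on a single ratio is deliberately crude. It is the kind of baseline that the learned models discussed in this article are meant to improve on, by combining many counters and their timing.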

Security Insight: Cache-based side-channel attacks exploit fundamental hardware characteristics that cannot be completely eliminated, making detection and mitigation essential components of secure cloud computing strategies.

What Comparative Performance Advantages Do AI Models Offer Over Traditional Approaches?

Comparative analysis between AI-enhanced detection methods and traditional approaches reveals significant performance advantages that justify investment in advanced machine learning capabilities. These advantages span detection accuracy, false positive reduction, adaptability to evolving threats, and operational efficiency considerations that directly impact security team effectiveness.

Detection accuracy represents the most compelling advantage of AI models over traditional methods. Studies conducted throughout 2025-2026 demonstrate that properly trained transformer-based models achieve detection accuracy exceeding 95% for sophisticated cross-tenant side-channel attacks, compared to approximately 60-70% for traditional signature-based systems. This dramatic improvement stems from AI models' ability to identify complex, multi-dimensional attack signatures that escape simpler detection mechanisms.

False positive reduction constitutes another critical advantage, as traditional systems often generate excessive alerts that overwhelm security teams and lead to alert fatigue. AI models can achieve false positive rates below 5% while maintaining high detection sensitivity, compared to 20-30% false positive rates common with traditional threshold-based approaches. This improvement enables security teams to focus on genuine threats rather than investigating numerous false alarms.
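Attacks are rare compared with benign monitoring windows, so the false positive rate drives analyst workload more than headline accuracy does. The worked calculation below makes this concrete. The 0.1% attack prevalence and the 100,000 analysed windows per day are illustrative assumptions, not figures from the studies cited above.

```python
def alert_metrics(prevalence, tpr, fpr, windows_per_day):
    """Expected accuracy, precision and daily false alerts for a binary detector.

    prevalence: fraction of windows that contain an attack.
    tpr: detection rate (recall); fpr: false positive rate on benign windows.
    """
    accuracy = prevalence * tpr + (1 - prevalence) * (1 - fpr)
    precision = prevalence * tpr / (prevalence * tpr + (1 - prevalence) * fpr)
    false_alerts_per_day = (1 - prevalence) * fpr * windows_per_day
    return accuracy, precision, false_alerts_per_day

for fpr in (0.05, 0.25):
    acc, prec, fa = alert_metrics(prevalence=0.001, tpr=0.95, fpr=fpr,
                                  windows_per_day=100_000)
    print(f"FPR {fpr:.0%}: accuracy {acc:.3f}, precision {prec:.2%}, "
          f"false alerts/day {fa:,.0f}")
```

Under these assumptions, cutting the false positive rate from 25% to 5% removes roughly 20,000 false alerts per day. Even so, precision only rises from about 0.4% to about 1.9%. This is why precision and false positive rate are worth reporting alongside accuracy.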

Adaptability to evolving threats distinguishes AI models from static rule-based systems. Traditional approaches require manual updates to address new attack variants, creating windows of vulnerability during which new threats can operate undetected. AI models can continuously learn from new data and adapt their detection capabilities without human intervention, providing near-real-time protection against emerging attack techniques.
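Incremental updating is one common way to realise this kind of continuous learning. The sketch below uses scikit-learn's SGDClassifier.partial_fit on synthetic data. The four-feature windows, the feature shift that defines each attack "variant", and the batch sizes are all hypothetical. The sketch also assumes that labelled batches keep arriving from some feedback source, such as analyst triage.

```python
import numpy as np
from sklearn.linear_model import SGDClassifier

rng = np.random.default_rng(0)

def make_batch(n, attack_shift):
    """Synthetic feature windows: attacks (label 1) are offset by attack_shift."""
    y = rng.integers(0, 2, n)
    X = rng.normal(0.0, 1.0, (n, 4)) + np.outer(y, attack_shift)
    return X, y

model = SGDClassifier(random_state=0)

# Initial training on a known attack variant (signal in feature 0).
X0, y0 = make_batch(2000, np.array([4.0, 0.0, 0.0, 0.0]))
model.partial_fit(X0, y0, classes=np.array([0, 1]))

# A new variant shows up in feature 2 instead.
new_variant = np.array([0.0, 0.0, 4.0, 0.0])
X_test, y_test = make_batch(1000, new_variant)
before = model.score(X_test, y_test)

# Fold in labelled batches of the new variant as they arrive; no retraining from scratch.
for _ in range(10):
    Xb, yb = make_batch(500, new_variant)
    model.partial_fit(Xb, yb)
after = model.score(X_test, y_test)

print(f"accuracy on new variant: before {before:.2f}, after {after:.2f}")
```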

```python
import numpy as np
import pandas as pd
from sklearn.metrics import classification_report, confusion_matrix
import matplotlib.pyplot as plt


class DetectionPerformanceComparator:
    """Compares detection methods using *simulated* predictions (illustrative rates)."""

    def __init__(self, seed=42):
        self.results = {}
        self.rng = np.random.default_rng(seed)

    def compare_methods(self, test_data, true_labels):
        # Simulate results from different detection methods.
        # test_data is unused by these simulated detectors; a real detector would score it.
        true_labels = np.asarray(true_labels)
        methods = {
            'traditional_signature': self.simulate_traditional_detection(test_data, true_labels),
            'threshold_based': self.simulate_threshold_detection(test_data, true_labels),
            'basic_ml': self.simulate_basic_ml_detection(test_data, true_labels),
            'transformer_ai': self.simulate_transformer_detection(test_data, true_labels),
        }

        # Compare performance metrics
        comparison_results = {}
        for method_name, predictions in methods.items():
            report = classification_report(true_labels, predictions,
                                           output_dict=True, zero_division=0)
            comparison_results[method_name] = {
                'accuracy': report['accuracy'],
                'precision': report['1']['precision'],
                'recall': report['1']['recall'],
                'f1_score': report['1']['f1-score'],
                'false_positive_rate': self.calculate_fpr(true_labels, predictions),
            }
        self.results = comparison_results
        return comparison_results

    def _simulate(self, true_labels, tpr, fpr):
        # Flag each attack with probability tpr and each benign sample with probability fpr
        draws = self.rng.random(len(true_labels))
        return np.where(true_labels == 1, draws < tpr, draws < fpr).astype(int)

    # Rates are illustrative. At the 20% attack prevalence used below,
    # accuracy = 0.2 * TPR + 0.8 * (1 - FPR) reproduces the figures in each comment.
    def simulate_traditional_detection(self, data, true_labels):
        # ~65% accuracy, 25% false positive rate
        return self._simulate(true_labels, tpr=0.25, fpr=0.25)

    def simulate_threshold_detection(self, data, true_labels):
        # ~70% accuracy, 30% false positive rate
        return self._simulate(true_labels, tpr=0.70, fpr=0.30)

    def simulate_basic_ml_detection(self, data, true_labels):
        # ~85% accuracy, 15% false positive rate
        return self._simulate(true_labels, tpr=0.85, fpr=0.15)

    def simulate_transformer_detection(self, data, true_labels):
        # ~95% accuracy, 3% false positive rate
        return self._simulate(true_labels, tpr=0.87, fpr=0.03)

    def calculate_fpr(self, true_labels, predictions):
        tn, fp, fn, tp = confusion_matrix(true_labels, predictions, labels=[0, 1]).ravel()
        return fp / (fp + tn)

    def generate_comparison_report(self):
        if not self.results:
            return "No results to report"
        df = pd.DataFrame(self.results).T
        print("Detection Method Performance Comparison:")
        print(df.round(4))

        # Visualize comparison
        fig, axes = plt.subplots(2, 2, figsize=(12, 10))
        metrics = ['accuracy', 'precision', 'recall', 'f1_score']
        titles = ['Accuracy Comparison', 'Precision Comparison',
                  'Recall Comparison', 'F1-Score Comparison']
        for i, (metric, title) in enumerate(zip(metrics, titles)):
            ax = axes[i // 2, i % 2]
            values = [self.results[method][metric] for method in self.results]
            bars = ax.bar(range(len(values)), values)
            ax.set_xticks(range(len(values)))
            ax.set_xticklabels(list(self.results.keys()), rotation=45)
            ax.set_title(title)
            ax.set_ylim(0, 1)
            # Add value labels on bars
            for bar, value in zip(bars, values):
                ax.text(bar.get_x() + bar.get_width() / 2, bar.get_height() + 0.01,
                        f'{value:.3f}', ha='center', va='bottom')
        plt.tight_layout()
        plt.savefig('/tmp/detection_comparison.png')
        plt.show()
        return df


# Example usage
comparator = DetectionPerformanceComparator()
test_samples = 1000
rng = np.random.default_rng(0)
true_labels = rng.choice([0, 1], size=test_samples, p=[0.8, 0.2])  # 20% attacks
test_data = np.zeros((test_samples, 1))  # placeholder features for the simulated detectors

results = comparator.compare_methods(test_data, true_labels)
comparison_df = comparator.generate_comparison_report()

print("\nKey Performance Insights:")
acc_gain = results['transformer_ai']['accuracy'] - results['traditional_signature']['accuracy']
fpr_drop = (results['traditional_signature']['false_positive_rate']
            - results['transformer_ai']['false_positive_rate'])
print(f"Transformer AI improves accuracy by {acc_gain * 100:.1f} percentage points over traditional methods")
print(f"False positive rate reduced by {fpr_drop * 100:.1f} percentage points")
```

Operational efficiency gains result from AI models' ability to process vast amounts of monitoring data with minimal human intervention. Traditional approaches often require extensive manual tuning and rule maintenance, consuming valuable security team resources. AI systems can autonomously adjust their detection parameters and prioritize alerts based on risk assessment, enabling more efficient incident response workflows.
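Risk-based prioritisation can be as simple as ranking alerts by model confidence weighted by how critical the affected tenant or asset is. The sketch below follows that approach. The Alert fields, the 1 to 5 criticality scale and the multiplicative score are hypothetical choices for illustration.

```python
from dataclasses import dataclass

@dataclass
class Alert:
    tenant: str
    confidence: float       # detector score in [0, 1]
    asset_criticality: int  # hypothetical scale: 1 (low) .. 5 (most critical)

def prioritize(alerts, min_risk=0.0):
    """Order alerts by risk = confidence x asset criticality, dropping low-risk ones."""
    scored = [(a.confidence * a.asset_criticality, a) for a in alerts]
    scored = [(risk, a) for risk, a in scored if risk >= min_risk]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [a for _, a in scored]
```

A moderately confident alert on a critical tenant can outrank a very confident alert on a low-value one, which is usually the triage order a security team wants.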

Scalability advantages become apparent when deploying detection systems across large cloud infrastructures. AI models can handle thousands of concurrent data streams without proportional increases in operational complexity, while traditional systems often struggle with configuration management and performance degradation at scale.

Integration flexibility allows AI models to seamlessly incorporate new data sources and detection signals as they become available. Traditional systems typically require significant reconfiguration to accommodate new monitoring capabilities, whereas AI frameworks can dynamically adapt to changing input characteristics through continuous learning processes.

Cost-effectiveness analysis demonstrates that initial AI implementation investments pay dividends through reduced incident response times, fewer false positives requiring investigation, and improved threat detection rates that prevent costly data breaches. Organizations report 40-60% reductions in security operations costs after deploying AI-enhanced detection capabilities.

Business Impact: The performance advantages of AI models translate directly into measurable improvements in security posture, operational efficiency, and risk reduction that justify technology investment decisions.

How Can mr7 Agent Automate Implementation of These Advanced Detection Techniques?

mr7 Agent represents a revolutionary approach to automating advanced side-channel attack detection in multi-tenant cloud environments. This powerful platform combines cutting-edge AI capabilities with practical security automation features to enable organizations to deploy sophisticated detection techniques without requiring extensive machine learning expertise or dedicated research teams.

Automated model deployment capabilities allow security teams to quickly implement transformer-based detection models across their infrastructure with minimal configuration overhead. mr7 Agent provides pre-trained models optimized for common side-channel attack patterns, along with automated model selection and tuning capabilities that adapt to specific environment characteristics. This eliminates the need for extensive model development cycles while ensuring optimal performance for each deployment scenario.

Continuous learning automation ensures that deployed detection models remain effective against evolving attack techniques. mr7 Agent automatically collects feedback from detection events, updates model parameters based on new threat intelligence, and adapts detection strategies to changing environmental conditions. This self-improving capability maintains high detection accuracy without requiring constant manual intervention from security personnel.

```yaml
# Example mr7 Agent configuration for side-channel detection
version: '1.0'
agent_config:
  name: "cross-tenant-sidechannel-detector"
  version: "2.1.0"
  description: "AI-powered detection of cross-tenant side-channel attacks"

monitoring:
  enabled: true
  sampling_rate: 1000  # Hz
  data_sources:
    - type: "cpu_performance_counters"
      enabled: true
      metrics:
        - "cache_misses"
        - "instruction_retired"
        - "branch_mispredictions"
    - type: "network_traffic"
      enabled: true
      capture_filters:
        - "port 80 or port 443"
        - "src net 10.0.0.0/8"
    - type: "memory_access_patterns"
      enabled: true
      granularity: "cache_line"

detection_models:
  - name: "transformer_cache_analyzer"
    type: "transformer"
    version: "latest"
    confidence_threshold: 0.85
    features:
      - "timing_variations"
      - "access_correlations"
      - "resource_contention"
  - name: "lstm_network_detector"
    type: "lstm"
    version: "stable"
    confidence_threshold: 0.90
    features:
      - "traffic_patterns"
      - "protocol_anomalies"
      - "connection_behavior"

alerting:
  severity_levels:
    - level: "critical"
      threshold: 0.95
      actions:
        - "immediate_notification"
        - "automated_isolation"
        - "incident_ticket_creation"
    - level: "high"
      threshold: 0.85
      actions:
        - "security_team_notification"
        - "additional_monitoring"
    - level: "medium"
      threshold: 0.75
      actions:
        - "log_for_review"
        - "enhanced_sampling"

integration:
  siem:
    enabled: true
    endpoints:
      - "splunk://splunk.company.com:8088"
      - "elastic://elastic.company.com:9200"
  ticketing:
    enabled: true
    system: "jira"
    project_key: "SEC"

performance:
  parallel_processing: true
  max_concurrent_analyses: 50
  memory_limit_mb: 4096
  cpu_quota: 0.8
```

Integration automation streamlines deployment with existing security infrastructure including SIEM systems, ticketing platforms, and incident response workflows. mr7 Agent provides standardized APIs and pre-built connectors for popular enterprise security tools, enabling seamless incorporation of AI detection capabilities into established operational procedures.

Real-time response automation allows mr7 Agent to take immediate protective actions when high-confidence attack detections occur. Automated response capabilities include tenant isolation, resource throttling, connection termination, and evidence preservation for forensic analysis. This rapid response capability minimizes potential damage from successful side-channel attacks while preserving critical investigation data.

Customizable detection rules enable security teams to tailor mr7 Agent's behavior to their specific requirements and risk tolerance levels. Teams can define custom alert thresholds, specify particular attack patterns to monitor, and configure response actions based on organizational policies and compliance requirements.
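The alerting block in the example configuration pairs score thresholds with response actions. How mr7 Agent evaluates them is not specified here. One plausible reading, assumed for this sketch, is that a detection takes the highest severity whose threshold it meets. The function below is illustrative and is not part of any mr7 API.

```python
# Thresholds and actions mirror the alerting.severity_levels block of the example config.
SEVERITY_LEVELS = [
    ("critical", 0.95, ["immediate_notification", "automated_isolation",
                        "incident_ticket_creation"]),
    ("high", 0.85, ["security_team_notification", "additional_monitoring"]),
    ("medium", 0.75, ["log_for_review", "enhanced_sampling"]),
]

def route_detection(confidence):
    """Return (severity, actions) for the highest level whose threshold is met."""
    for level, threshold, actions in SEVERITY_LEVELS:  # ordered high to low
        if confidence >= threshold:
            return level, actions
    return None, []  # below every threshold: no alert
```

The per-model confidence_threshold values in the example are 0.85 and 0.90. If they also gate which detections are reported at all, scores between 0.75 and 0.85 would never reach the medium level, so it is worth confirming how the two settings interact.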

Threat intelligence integration allows mr7 Agent to leverage external threat feeds and research findings to enhance detection capabilities. The platform automatically incorporates new side-channel attack signatures, behavioral indicators, and mitigation recommendations from trusted security sources, ensuring that deployed models remain current with latest threat developments.

Performance optimization features ensure that mr7 Agent operates efficiently within resource-constrained environments while maintaining detection effectiveness. Built-in optimization algorithms dynamically adjust processing intensity based on system load, available resources, and current threat levels to maximize detection coverage without impacting normal operations.
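Adjusting processing intensity can be pictured as a sampling-rate controller: it samples more aggressively when the threat level rises and backs off when the host is busy. In the sketch below, the 1000 Hz base echoes sampling_rate in the example configuration. The 0.8 load knee, the 5x threat multiplier and the clamping bounds are hypothetical, and both inputs are assumed to lie in [0, 1].

```python
def adaptive_sampling_rate(cpu_load, threat_level, base_hz=1000, min_hz=100, max_hz=5000):
    """Pick a monitoring sampling rate from host load and current threat level.

    cpu_load: host CPU utilisation in [0, 1].
    threat_level: 0 (quiet) .. 1 (active alert in progress).
    """
    rate = base_hz * (1 + 4 * threat_level)  # up to 5x the base rate under high threat
    if cpu_load > 0.8:
        rate *= (1 - cpu_load) / 0.2         # scale down linearly to zero at 100% load
    return int(round(max(min_hz, min(max_hz, rate))))
```

The floor keeps a minimum of visibility even on a saturated host, which matters because an attacker could deliberately load the machine to suppress monitoring.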

Collaborative research capabilities enable security teams to contribute their own detection improvements back to the broader community while benefiting from collective intelligence gathered across all mr7 Agent deployments. This collaborative approach accelerates innovation in side-channel attack detection while reducing individual organization research burdens.

Implementation Strategy: Organizations can rapidly deploy advanced side-channel detection capabilities by leveraging mr7 Agent's automated deployment, continuous learning, and integration features to build comprehensive protection without extensive in-house AI expertise.

Key Takeaways

• Transformer-based AI models achieve over 95% accuracy in detecting cross-tenant side-channel attacks, far surpassing traditional detection methods
• Cache access pattern analysis combined with timing variation monitoring provides powerful indicators of coordinated attack activities
• Network traffic correlation analysis reveals subtle communication patterns indicative of data exfiltration attempts between tenants
• mr7 Agent automates deployment and operation of advanced detection techniques, enabling organizations to implement sophisticated protection without extensive ML expertise
• Real-time response automation capabilities minimize damage from successful attacks while preserving evidence for forensic analysis
• Continuous learning mechanisms ensure detection models adapt to evolving attack techniques without manual intervention
• Integration with existing security infrastructure streamlines adoption and operational management of AI-enhanced detection capabilities

Frequently Asked Questions

Q: How do side-channel attacks differ from traditional cyber attacks?

Side-channel attacks exploit indirect information leakage through system behavior observations rather than direct vulnerability exploitation. Unlike traditional attacks that target software bugs or misconfigurations, side-channel attacks manipulate and measure physical characteristics like timing, power consumption, or electromagnetic emissions to extract sensitive information. These attacks are particularly dangerous in multi-tenant environments because they can bypass conventional security boundaries and remain undetected by signature-based defenses.
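A compact software illustration of a timing channel is an early-exit comparison against a secret. Its running time grows with the number of leading bytes an attacker has guessed correctly. The comparison below contrasts such a function with Python's hmac.compare_digest, which is designed not to short-circuit on the first mismatch. The function names are illustrative.

```python
import hmac

def naive_equal(secret: bytes, guess: bytes) -> bool:
    """Leaky comparison: returns at the first mismatching byte, so its running
    time depends on how long the correct prefix of the guess is."""
    if len(secret) != len(guess):
        return False
    for a, b in zip(secret, guess):
        if a != b:
            return False
    return True

def constant_time_equal(secret: bytes, guess: bytes) -> bool:
    """Comparison whose timing does not depend on where the inputs differ."""
    return hmac.compare_digest(secret, guess)
```

Both functions return the same answers. Only their timing behaviour differs, and that is exactly the kind of signal a side-channel attacker measures.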

Q: What makes transformer models especially suitable for side-channel detection?

Transformer models excel at side-channel detection due to their attention mechanisms that can identify complex temporal correlations across multiple data streams simultaneously. Their ability to process sequential data while preserving positional relationships makes them ideal for analyzing timing variations and coordinated attack patterns. Additionally, transformers can automatically learn relevant features from raw sensor data without requiring extensive manual feature engineering, reducing deployment complexity and improving adaptability to different attack scenarios.
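The core of the attention mechanism is scaled dot-product attention. The single-head NumPy sketch below applies it to a window of per-interval feature vectors. The projection matrices are random here rather than learned, positional encodings are omitted, and the window size and feature count are arbitrary.

```python
import numpy as np

def scaled_dot_product_attention(x, w_q, w_k, w_v):
    """Single-head self-attention over a window of per-timestep feature vectors.

    x: (T, d) matrix, e.g. T sampling intervals x d features
       (cache misses, access latency, packet timing, ...).
    Returns the attended output (T, d_v) and the (T, T) attention weights.
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)    # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # softmax over time steps
    return weights @ v, weights

rng = np.random.default_rng(0)
T, d, d_k = 16, 6, 8
x = rng.normal(size=(T, d))
w_q, w_k, w_v = (rng.normal(size=(d, d_k)) for _ in range(3))
out, attn = scaled_dot_product_attention(x, w_q, w_k, w_v)
print(out.shape, attn.shape)  # (16, 8) (16, 16)
```

Each row of the attention weights records how strongly one time step draws on every other step in the window. With learned projections, this is how a model can relate a latency spike to activity several intervals earlier.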

Q: Can traditional security tools detect sophisticated side-channel attacks?

Traditional security tools generally struggle to detect sophisticated side-channel attacks because these attacks operate within normal system parameters and generate minimal anomalous network traffic. Signature-based intrusion detection systems lack signatures for novel attack vectors, while basic anomaly detection generates excessive false positives due to legitimate workload variations. Only advanced machine learning approaches specifically designed for side-channel detection can reliably identify these stealthy threats.

Q: How much training data is needed for effective AI side-channel detection models?

The amount of training data required varies significantly based on attack complexity and detection requirements. For well-characterized attack patterns, several thousand labeled examples may suffice for effective detection. However, for comprehensive protection against diverse attack techniques, tens of thousands of examples covering various scenarios, environments, and attack methodologies are recommended. Transfer learning approaches can reduce this requirement by leveraging pre-trained models adapted to specific deployment contexts.

Q: What are the performance impacts of running AI detection models in production environments?

Modern AI detection models optimized for side-channel analysis typically consume minimal system resources when properly implemented. Efficient model architectures and hardware acceleration can achieve real-time processing with less than 5% CPU overhead on modern servers. Memory requirements generally range from 100MB to 1GB depending on model complexity and concurrent analysis capacity. Careful performance tuning and resource allocation ensure that detection capabilities enhance security without degrading normal system operations.

Try AI-Powered Security Tools

Join thousands of security researchers using mr7.ai. Get instant access to KaliGPT, DarkGPT, OnionGPT, and the powerful mr7 Agent for automated pentesting.