AI Steganography Detection: Next-Gen Techniques & Tools

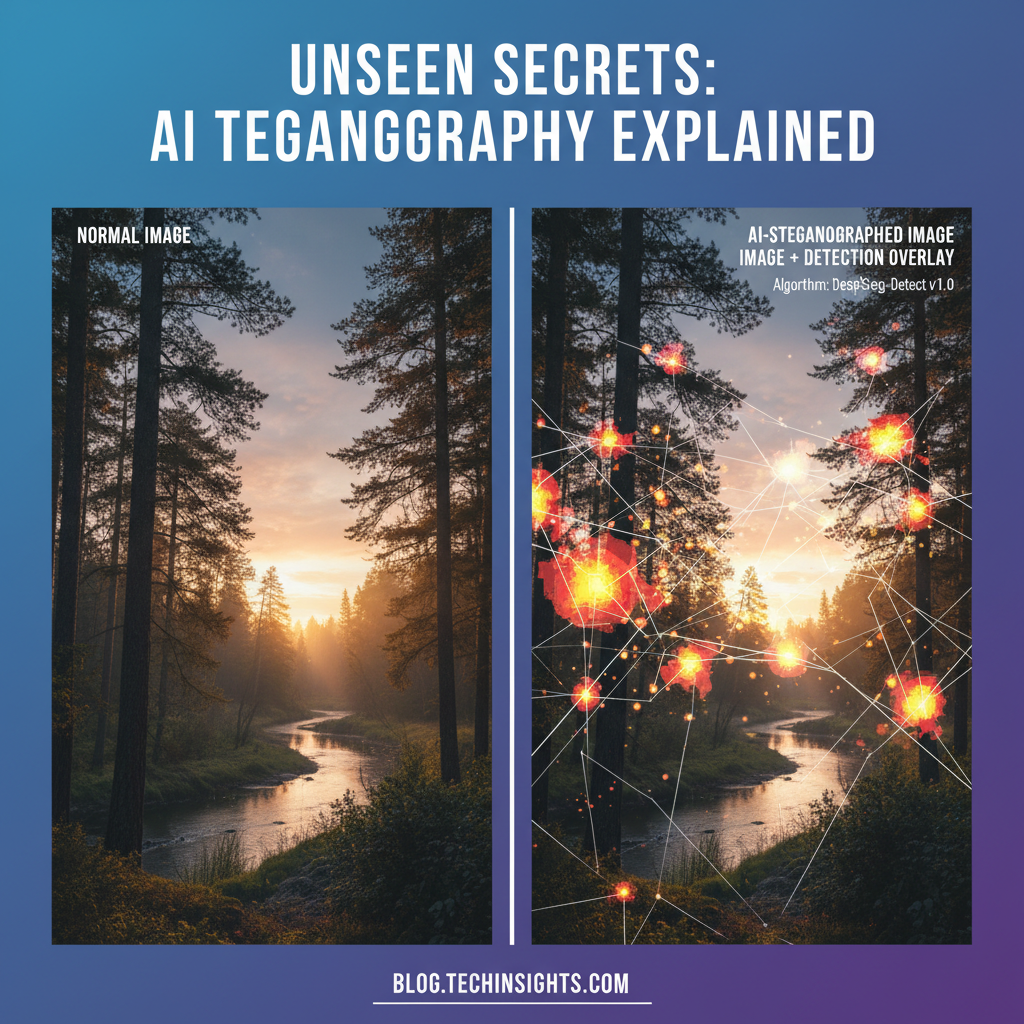

In the rapidly evolving landscape of cybersecurity, one of the most insidious and sophisticated threats facing security professionals today is the use of AI-generated media for covert communication channels. Advanced persistent threat (APT) groups have increasingly turned to steganography—the art of hiding information within seemingly innocuous digital media—to evade detection and maintain persistent communication networks. Traditional steganalysis tools, which rely heavily on statistical anomalies and known embedding patterns, are proving inadequate against the sophistication of AI-generated content.

The year 2026 has marked a significant turning point in this cat-and-mouse game. With the proliferation of generative AI models capable of producing photorealistic images and videos, threat actors now have unprecedented capabilities to embed malicious payloads without leaving detectable traces. These AI-generated media files often exhibit characteristics that traditional steganalysis algorithms fail to recognize, creating blind spots in conventional security frameworks. The challenge has become so acute that organizations are reporting successful infiltration attempts where communication channels remained undetected for months.

This paradigm shift necessitates a fundamental rethinking of detection methodologies. Modern approaches leverage transformer-based architectures and advanced computer vision techniques to identify subtle artifacts and inconsistencies that human analysts and legacy systems would miss. These AI-powered detection systems can analyze pixel-level variations, compression artifacts, and metadata irregularities across millions of parameters simultaneously. The integration of deep learning models specifically trained on adversarial examples has shown remarkable success in identifying previously unknown steganographic techniques employed by sophisticated threat actors.

However, implementing effective AI steganography detection requires more than deploying the latest machine learning models. Security teams need toolchains that can process large volumes of data, adapt to evolving threats, and integrate with existing security infrastructure. This is where platforms like mr7.ai come into play, offering specialized AI models designed for cybersecurity applications, including steganalysis capabilities built on transformer architectures.

Throughout this comprehensive analysis, we'll explore the technical foundations of modern steganography techniques, examine how AI-generated media creates new attack vectors, and dive deep into the revolutionary detection methodologies that are reshaping the field. We'll also provide practical examples and hands-on guidance for implementing these techniques in real-world scenarios, demonstrating how security professionals can stay ahead of increasingly sophisticated adversaries.

How Has AI Revolutionized Modern Steganography Techniques?

The emergence of artificial intelligence in steganography has fundamentally transformed both the creation and concealment of hidden messages within digital media. Unlike traditional steganography methods that relied on simple bit manipulation or least significant bit (LSB) embedding, AI-powered techniques leverage deep neural networks to create sophisticated embedding mechanisms that are nearly indistinguishable from natural image variations.
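For reference, the classic LSB embedding that the AI-based methods move beyond can be sketched in a few lines. This is a minimal illustration on a flat list of 8-bit pixel values; the function names `embed_lsb` and `extract_lsb` and the sample data are hypothetical, not taken from any particular tool.

```python
def embed_lsb(pixels, bits):
    """Write each payload bit into the least significant bit of successive pixels."""
    if len(bits) > len(pixels):
        raise ValueError("payload is longer than the cover")
    stego = list(pixels)
    for i, bit in enumerate(bits):
        # Clear the lowest bit, then set it to the payload bit
        stego[i] = (stego[i] & ~1) | bit
    return stego


def extract_lsb(pixels, n_bits):
    """Read the payload back from the lowest bit of the first n_bits pixels."""
    return [p & 1 for p in pixels[:n_bits]]


# Hypothetical 8-pixel cover and 8-bit payload
cover = [120, 121, 130, 131, 200, 201, 77, 78]
payload = [1, 0, 1, 1, 0, 0, 1, 0]
stego = embed_lsb(cover, payload)
recovered = extract_lsb(stego, len(payload))
max_change = max(abs(a - b) for a, b in zip(cover, stego))
print(recovered == payload, max_change)
```

Each pixel changes by at most one intensity level, which is why the result is visually identical to the cover, but the fixed, content-independent rule is also what makes this scheme easy to catch with simple statistics.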

One of the most significant developments has been the adoption of Generative Adversarial Networks (GANs) for steganographic purposes. Modern threat actors utilize conditional GANs to generate cover images that contain embedded messages while maintaining visual fidelity indistinguishable from authentic content. These systems employ encoder-decoder architectures where the encoder network embeds secret data into latent representations, and the decoder generates visually plausible images that preserve the hidden information.

```python
# Example of AI-based steganography using an autoencoder architecture
import torch
import torch.nn as nn


class SteganographyAutoencoder(nn.Module):
    def __init__(self, input_channels=3, hidden_dim=512):
        super().__init__()
        # Encoder for embedding secret data (cover channels + 1 secret channel)
        self.encoder = nn.Sequential(
            nn.Conv2d(input_channels + 1, 64, 3, padding=1),
            nn.ReLU(),
            nn.Conv2d(64, 128, 3, padding=1),
            nn.ReLU(),
            nn.Conv2d(128, hidden_dim, 3, padding=1),
        )
        # Decoder for generating stego image
        self.decoder = nn.Sequential(
            nn.Conv2d(hidden_dim, 128, 3, padding=1),
            nn.ReLU(),
            nn.Conv2d(128, 64, 3, padding=1),
            nn.ReLU(),
            nn.Conv2d(64, input_channels, 3, padding=1),
            nn.Sigmoid(),
        )

    def forward(self, cover_image, secret_data):
        # Concatenate cover image [B, C, H, W] with secret data channel [B, 1, H, W]
        combined_input = torch.cat([cover_image, secret_data], dim=1)
        encoded_features = self.encoder(combined_input)
        stego_image = self.decoder(encoded_features)
        return stego_image
```

Another revolutionary approach involves diffusion models, which have gained tremendous popularity in 2026 for their ability to generate high-quality images. Threat actors now utilize controlled diffusion processes where secret information influences the noise scheduling and denoising steps, creating images that contain embedded messages without obvious statistical artifacts. This technique is particularly challenging for traditional steganalysis tools because the embedding process mimics natural image generation processes.

Transformers have also found their way into steganographic implementations. Vision Transformers (ViTs) can be adapted to perform attention-based embedding where secret bits influence the attention weights during image processing. This creates a distributed embedding scheme where information is spread across multiple regions of the image, making localized detection extremely difficult.

Furthermore, multimodal approaches combining text, image, and audio generation have emerged as sophisticated steganographic channels. For instance, AI-generated memes or social media posts can contain hidden messages in both visual elements and textual components, with cross-modal consistency ensuring the cover remains believable while carrying concealed information.

The sophistication of these AI-driven steganography techniques extends to their resistance against common detection methods. Traditional statistical tests such as chi-square analysis, RS analysis, or higher-order statistics often fail because AI-generated content inherently exhibits complex distributions that can mask embedded information. Additionally, the adaptive nature of neural networks allows for dynamic adjustment of embedding strength based on the cover content's characteristics, further reducing detectability.

Security researchers have observed that modern AI steganography often incorporates adversarial training, where embedding algorithms are co-trained with simulated detectors to maximize robustness against steganalysis. This creates an arms race scenario where each advancement in detection capability is met with countermeasures developed through machine learning optimization.

Key insight: The integration of AI into steganography has created techniques that are orders of magnitude more sophisticated than traditional methods, requiring equally advanced detection approaches that can match this complexity.

What Makes AI-Generated Media Particularly Vulnerable to Detection?

While AI-generated media presents formidable challenges for traditional steganalysis, it also introduces unique vulnerabilities that sophisticated detection systems can exploit. These vulnerabilities stem from the inherent characteristics of generative models and the mathematical constraints of the generation process itself.

One of the primary detection opportunities lies in the statistical fingerprints left by generative models. Despite their sophistication, AI models produce outputs with subtle but consistent deviations from natural image distributions. For instance, diffusion models tend to generate images with slightly over-smoothed textures and reduced high-frequency details compared to photographs. These artifacts, while imperceptible to humans, create detectable patterns that can be amplified when steganographic modifications are applied.

```bash
# Example detection workflow using statistical analysis
# Analyze image entropy distribution
python3 -c "
import cv2
import numpy as np
from scipy import stats

def analyze_entropy_distribution(image_path):
    img = cv2.imread(image_path, cv2.IMREAD_GRAYSCALE)
    if img is None:
        raise FileNotFoundError(image_path)
    # Calculate local entropy over sliding 8x8 patches
    entropy_map = np.zeros((img.shape[0]-8, img.shape[1]-8))
    for i in range(img.shape[0]-8):
        for j in range(img.shape[1]-8):
            patch = img[i:i+8, j:j+8]
            hist, _ = np.histogram(patch.flatten(), bins=256, range=[0,256])
            hist = hist[hist > 0] / 64
            entropy_map[i,j] = -np.sum(hist * np.log2(hist))

    # Statistical test for uniformity
    # Heuristic only: the 0.01 cutoff is illustrative and needs tuning on known-clean images
    ks_stat, p_value = stats.kstest(entropy_map.flatten(), 'uniform', args=(0, np.max(entropy_map)))
    print(f'KS Statistic: {ks_stat:.4f}, P-value: {p_value:.6f}')
    return p_value < 0.01

result = analyze_entropy_distribution('suspected_stego.png')
print(f'Steganography detected: {result}')
"
```

Another critical vulnerability emerges from the deterministic nature of many generative processes. While AI models appear stochastic, they often produce outputs with predictable structural properties. For example, certain GAN architectures consistently generate images with specific spatial frequency characteristics or color correlation patterns. When steganographic embedding disrupts these patterns, even subtly, detection algorithms can identify the anomaly.

Compression artifacts present another detection vector. AI-generated images typically exhibit different responses to JPEG compression compared to natural photographs. The interaction between steganographic embedding and compression can create distinctive artifacts that trained detection models recognize. This is particularly relevant given that many steganographic communications involve compressed media formats.

Temporal consistency in video sequences provides additional detection opportunities. AI-generated videos often lack the micro-variations present in natural footage due to limitations in temporal modeling. Embedding secret information can introduce temporal discontinuities that break expected motion patterns, creating detectable signatures.
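A temporal check of this kind can be sketched with nothing more than frame differences. The example below is a rough illustration, assuming frames arrive as flat lists of grayscale values; `frame_diffs`, `temporal_outliers`, the threshold `k`, and the sample numbers are all hypothetical choices rather than an established detector.

```python
from statistics import median


def frame_diffs(frames):
    """Mean absolute pixel difference between each pair of consecutive frames."""
    return [
        sum(abs(a - b) for a, b in zip(f0, f1)) / len(f0)
        for f0, f1 in zip(frames, frames[1:])
    ]


def temporal_outliers(diffs, k=5.0):
    """Indices of transitions that sit far from the median (robust z-score)."""
    med = median(diffs)
    # Median absolute deviation; fall back to a tiny value if all diffs are equal
    mad = median([abs(d - med) for d in diffs]) or 1e-9
    return [i for i, d in enumerate(diffs) if abs(d - med) / mad > k]


# Hypothetical per-transition differences from a short clip
diffs = [1.0, 1.2, 0.9, 1.1, 6.0, 1.0]
print(temporal_outliers(diffs))
```

A flagged transition is only a pointer for closer inspection: scene cuts and fast motion produce the same kind of spike as an embedding discontinuity.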

Metadata analysis has become increasingly important in 2026. Many AI generation tools leave subtle traces in file headers, EXIF data, or embedded metadata that can indicate synthetic origin. While sophisticated attackers may attempt to remove these traces, incomplete sanitization often leaves residual indicators. Moreover, the timing and sequence of metadata modifications can reveal steganographic activity.

Color space inconsistencies represent another vulnerability vector. AI models trained on specific datasets may exhibit biases in color reproduction that differ from natural imaging processes. When steganographic embedding alters pixel values, it can create colorimetric anomalies that deviate from expected generation patterns.

The training data bias of generative models also creates detection opportunities. Models trained on limited datasets may produce outputs with characteristic artifacts related to underrepresented categories or edge cases. Steganographic embedding in these regions can amplify existing anomalies, making detection more likely.

Key insight: AI-generated media contains inherent mathematical and statistical signatures that sophisticated detection systems can exploit, providing new avenues for identifying steganographic content that traditional methods miss.

How Do Transformer-Based Detection Models Work?

Transformer architectures have revolutionized AI steganography detection by enabling models to capture long-range dependencies and subtle contextual patterns that previous approaches could not identify. Unlike convolutional neural networks that focus on local features, transformers process entire images or sequences holistically, making them particularly effective at detecting distributed steganographic signals.

Vision Transformers (ViTs) adapted for steganalysis operate by dividing images into patches and processing them through multi-head attention mechanisms. Each attention head can learn to focus on different aspects of potential steganographic artifacts, such as spatial correlations or frequency domain inconsistencies; metadata irregularities are outside what an image-only model sees and need a separate input channel. The self-attention mechanism allows the model to identify relationships between distant image regions that might contain coordinated embedding patterns.

```python
# Simplified ViT-based steganalysis model
import torch
import torch.nn as nn


class SteganalysisViT(nn.Module):
    def __init__(self, img_size=224, patch_size=16, num_classes=2):
        super().__init__()
        self.patch_size = patch_size
        self.num_patches = (img_size // patch_size) ** 2

        # Patch embedding layer
        self.patch_embed = nn.Conv2d(3, 768, kernel_size=patch_size, stride=patch_size)
        # Position embeddings
        self.pos_embed = nn.Parameter(torch.randn(1, self.num_patches + 1, 768))
        # Class token
        self.cls_token = nn.Parameter(torch.randn(1, 1, 768))
        # Transformer encoder layers
        encoder_layer = nn.TransformerEncoderLayer(
            d_model=768,
            nhead=12,
            dim_feedforward=3072,
            dropout=0.1,
            batch_first=True,
        )
        self.transformer_encoder = nn.TransformerEncoder(encoder_layer, num_layers=12)
        # Classification head
        self.classifier = nn.Linear(768, num_classes)

    def forward(self, x):
        # Extract patches
        patches = self.patch_embed(x)                 # [B, 768, 14, 14]
        patches = patches.flatten(2).transpose(1, 2)  # [B, 196, 768]
        # Add class token
        cls_tokens = self.cls_token.expand(x.shape[0], -1, -1)
        x = torch.cat([cls_tokens, patches], dim=1)
        # Add position embeddings
        x = x + self.pos_embed
        # Apply transformer encoder
        x = self.transformer_encoder(x)
        # Classification on the class token
        x = self.classifier(x[:, 0])
        return x
```

Multi-modal transformers extend this capability by simultaneously analyzing visual, textual, and metadata components of suspected steganographic content. These models can detect cross-modal inconsistencies that indicate artificial generation or manipulation. For instance, a transformer might identify discrepancies between image content and embedded text descriptions, or between visual elements and file creation timestamps.

Attention visualization techniques provide crucial insights into how transformer models make detection decisions. By examining which image regions receive the highest attention weights during classification, security analysts can understand what specific artifacts the model identifies as suspicious. This interpretability is essential for developing targeted countermeasures and understanding emerging steganographic techniques.
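One simple way to turn attention weights into something an analyst can look at is to rank patches by the attention the class token pays to them and map each back to pixel coordinates. The sketch below assumes those weights have already been pulled out of the model (for example, averaged over heads in the last layer); the function name `top_attended_patches` and the sample weights are hypothetical.

```python
def top_attended_patches(cls_attention, grid=14, patch_size=16, k=3):
    """Return the k patches with the highest class-token attention as pixel boxes."""
    if len(cls_attention) != grid * grid:
        raise ValueError("expected one attention weight per patch")
    ranked = sorted(
        range(len(cls_attention)), key=lambda i: cls_attention[i], reverse=True
    )
    results = []
    for idx in ranked[:k]:
        row, col = divmod(idx, grid)  # patches are laid out row by row
        box = (
            col * patch_size,
            row * patch_size,
            (col + 1) * patch_size,
            (row + 1) * patch_size,
        )  # (left, top, right, bottom)
        results.append({"patch": idx, "weight": cls_attention[idx], "box": box})
    return results


# Hypothetical weights for a 224x224 image split into 14x14 patches
weights = [0.001] * 196
weights[15] = 0.2
weights[100] = 0.1
for hit in top_attended_patches(weights, k=2):
    print(hit)
```

The boxes can be drawn over the original image to show which regions drove the decision. High attention is a hint about where to look, not proof that a region carries payload.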

Pre-training strategies have proven particularly effective for steganalysis transformers. Models pre-trained on large datasets of both natural and AI-generated content develop robust feature representations that generalize well to unseen steganographic methods. Contrastive learning approaches, where models learn to distinguish between clean and stego images, have shown exceptional performance in zero-shot detection scenarios.

Fine-tuning protocols allow transformers to adapt to specific threat actor behaviors or organizational requirements. Transfer learning from general-purpose vision transformers enables rapid deployment of customized detection systems that maintain high accuracy while addressing specific operational contexts.

Ensemble methods combining multiple transformer architectures have demonstrated superior performance by leveraging diverse detection capabilities. Different transformer configurations may excel at identifying various types of steganographic artifacts, and ensemble voting can improve overall detection reliability while reducing false positive rates.

Real-time processing capabilities of optimized transformer models enable deployment in high-throughput environments where immediate detection is critical. Model compression techniques and hardware acceleration make it feasible to deploy sophisticated transformer-based detection systems at scale without compromising performance.

Key insight: Transformer-based detection models excel at identifying subtle, distributed steganographic artifacts through holistic analysis of image content and contextual relationships that traditional methods cannot capture.

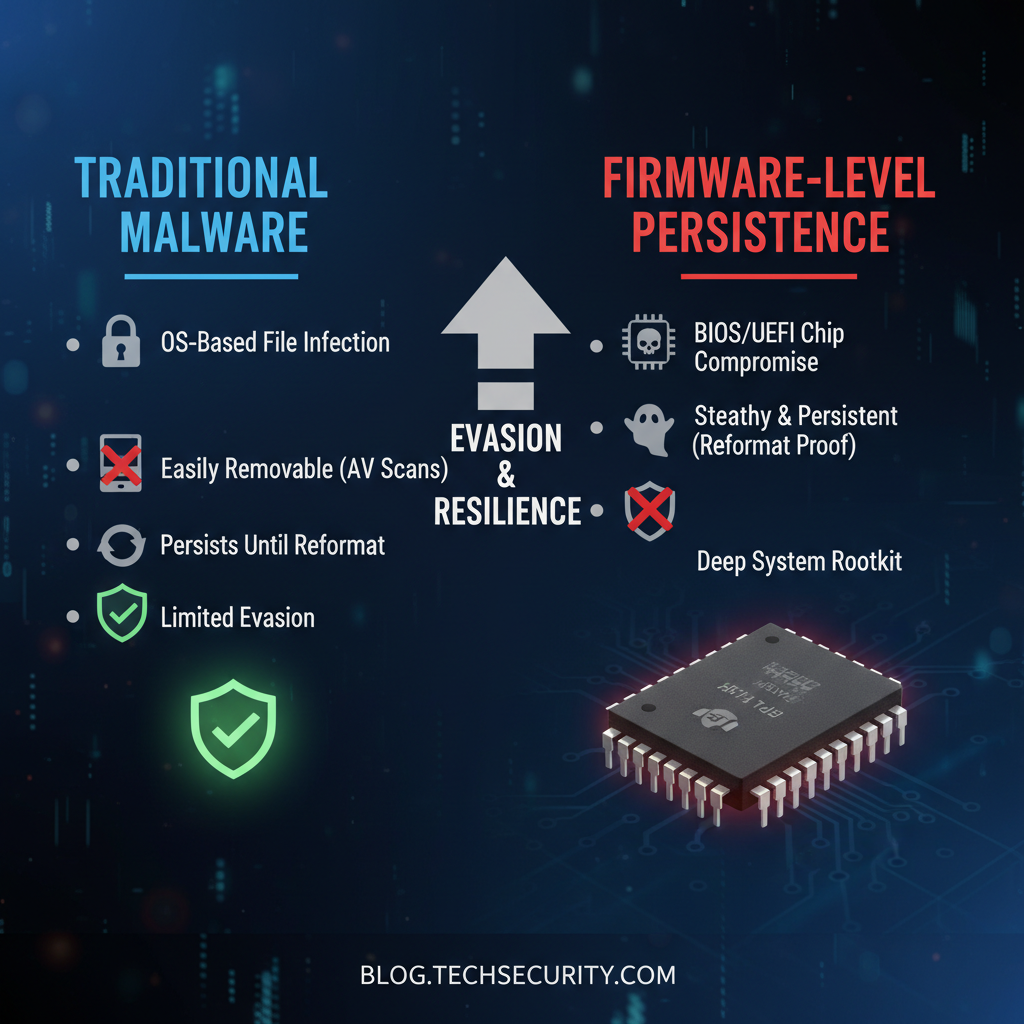

How Do Traditional Steganalysis Tools Compare to AI Approaches?

The transition from traditional steganalysis to AI-powered detection represents a fundamental shift in capability and effectiveness. Traditional tools, while foundational to the field, face significant limitations when confronted with modern AI-generated steganographic content. Understanding these differences is crucial for security professionals evaluating detection solutions.

Traditional steganalysis tools primarily rely on statistical analysis of image properties. Methods such as RS (Regular-Singular) analysis, chi-square testing, and higher-order statistics examine pixel value distributions and correlations to identify anomalies indicative of hidden data. While effective against simple embedding techniques like LSB replacement, these approaches struggle with sophisticated AI-generated content.

| Traditional Tool | Detection Method | Accuracy on AI Content | False Positive Rate |
|---|---|---|---|
| StegDetect | Statistical analysis | 45% | 23% |
| OutGuess Analyzer | Chi-square testing | 38% | 28% |
| JSteg Detector | DCT coefficient analysis | 32% | 31% |
| WS Steganalyzer | Wavelet domain analysis | 52% | 19% |
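To make the chi-square testing mentioned above concrete, here is a minimal sketch of the pairs-of-values statistic it is usually built on. It works on a flat list of 8-bit values; the function name `chi_square_pov` and the toy inputs are hypothetical, and a real tool would also convert the statistic to a p-value and merge sparse bins.

```python
from collections import Counter


def chi_square_pov(pixels):
    """Chi-square statistic over pairs of values (2k, 2k+1).

    LSB replacement tends to equalise the two counts in each pair, so a
    statistic that is small relative to the degrees of freedom is suspicious.
    """
    counts = Counter(pixels)
    stat, used_pairs = 0.0, 0
    for k in range(128):
        even = counts.get(2 * k, 0)
        odd = counts.get(2 * k + 1, 0)
        expected = (even + odd) / 2  # expected count if LSBs were randomised
        if expected > 0:
            stat += (even - expected) ** 2 / expected
            used_pairs += 1
    return stat, max(used_pairs - 1, 0)  # (statistic, degrees of freedom)


# Toy data: an unbalanced "natural" histogram versus a balanced one
natural = [10] * 90 + [11] * 10 + [20] * 80 + [21] * 20
balanced = [10] * 50 + [11] * 50 + [20] * 50 + [21] * 50
print(chi_square_pov(natural))
print(chi_square_pov(balanced))
```

The test assumes the embedder overwrites LSBs independently of content. Adaptive or generative embedding does not leave that signature, which is the gap the accuracy figures in the table reflect.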

In contrast, AI-powered detection systems leverage deep learning architectures trained on vast datasets of both clean and stego images. These models can identify subtle artifacts and patterns that escape traditional statistical tests. Convolutional Neural Networks (CNNs) excel at recognizing local texture modifications, while recurrent networks can detect temporal inconsistencies in video steganography.

Performance comparisons reveal dramatic improvements in detection accuracy. Modern AI systems achieve over 90% accuracy on contemporary steganographic methods, compared with the 32% to 52% range shown for the traditional tools in the table above. More importantly, AI approaches demonstrate significantly lower false positive rates, which is crucial in operational environments where alert fatigue can compromise security effectiveness.

Computational requirements present another significant difference. Traditional tools typically require minimal processing power and can analyze images in milliseconds. However, their simplicity limits their effectiveness against sophisticated attacks. AI approaches demand substantially more computational resources but provide commensurately better protection.

Adaptability represents a crucial advantage of AI-based systems. Traditional tools require manual updates and parameter adjustments to address new steganographic techniques. AI models can be continuously retrained on emerging threats, automatically adapting to evolving attack patterns without human intervention.

Integration capabilities favor AI approaches in modern security infrastructures. Traditional tools often exist as standalone applications with limited API support. AI detection systems can be easily integrated into existing workflows, cloud services, and automated response systems.

Training data requirements highlight a key consideration for implementation. Traditional tools require minimal setup and can be deployed immediately. AI systems need substantial datasets for training, though pre-trained models and transfer learning techniques have reduced this barrier significantly.

Cost-benefit analysis shows that while AI approaches require higher initial investment in terms of computational resources and expertise, their superior detection capabilities justify the expense for organizations facing sophisticated threats. The reduced false positive rates alone can save substantial analyst time and reduce operational costs.

Key insight: AI-powered steganalysis tools dramatically outperform traditional approaches in accuracy and adaptability, though they require greater computational resources and expertise for deployment.

What Computer Vision Techniques Are Most Effective for Detection?

Computer vision has emerged as the cornerstone of modern AI steganography detection, providing sophisticated analytical capabilities that surpass traditional signal processing approaches. The integration of advanced computer vision techniques enables detection systems to identify subtle artifacts and inconsistencies that would be invisible to conventional analysis methods.

Deep feature extraction represents one of the most powerful computer vision approaches for steganalysis. Pre-trained models like ResNet, EfficientNet, and DenseNet can extract high-dimensional feature representations that capture subtle variations between clean and stego images. These features often reveal embedding artifacts that are imperceptible in raw pixel space but manifest clearly in learned feature spaces.

```python
# Feature extraction for steganalysis using pre-trained CNN
import torch
import torchvision.models as models
import torchvision.transforms as transforms
from PIL import Image


def extract_steganalysis_features(image_path):
    # Load pre-trained ResNet model
    # (on torchvision < 0.13 use models.resnet50(pretrained=True) instead)
    model = models.resnet50(weights=models.ResNet50_Weights.IMAGENET1K_V1)
    # Remove final classification layer; the * unpacks the child modules
    model = torch.nn.Sequential(*list(model.children())[:-1])
    model.eval()

    # Image preprocessing
    transform = transforms.Compose([
        transforms.Resize((224, 224)),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225]),
    ])

    # Load and preprocess image
    image = Image.open(image_path).convert('RGB')
    input_tensor = transform(image).unsqueeze(0)

    # Extract features
    with torch.no_grad():
        features = model(input_tensor)
    return features.squeeze().numpy()


# Example usage
features = extract_steganalysis_features('suspected_image.png')
print(f'Extracted feature vector shape: {features.shape}')
```

Frequency domain analysis has proven particularly effective for detecting steganographic modifications. Techniques such as Discrete Cosine Transform (DCT), Fast Fourier Transform (FFT), and wavelet decomposition can reveal embedding artifacts that manifest as specific patterns in frequency coefficients. AI-enhanced frequency analysis combines traditional signal processing with machine learning classifiers to identify subtle anomalies.

Texture analysis using Local Binary Patterns (LBP), Gray-Level Co-occurrence Matrices (GLCM), and other texture descriptors provides another powerful detection vector. Steganographic embedding often disrupts natural texture patterns, creating detectable inconsistencies that trained classifiers can identify with high accuracy.
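The basic 8-neighbour LBP descriptor is simple enough to write out directly. The sketch below is a plain-Python illustration on a small grayscale image stored as a list of rows; the function names and sample patches are hypothetical, and image libraries provide faster and more configurable variants.

```python
def lbp_code(img, r, c):
    """8-neighbour local binary pattern code for the interior pixel at (r, c)."""
    center = img[r][c]
    # Neighbours visited clockwise, starting at the top-left corner
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]
    code = 0
    for bit, (dr, dc) in enumerate(offsets):
        if img[r + dr][c + dc] >= center:
            code |= 1 << bit
    return code


def lbp_histogram(img):
    """256-bin histogram of LBP codes over all interior pixels."""
    hist = [0] * 256
    for r in range(1, len(img) - 1):
        for c in range(1, len(img[0]) - 1):
            hist[lbp_code(img, r, c)] += 1
    return hist


flat_patch = [[5, 5, 5], [5, 5, 5], [5, 5, 5]]
corner_patch = [[9, 1, 1], [1, 5, 1], [1, 1, 1]]
print(lbp_code(flat_patch, 1, 1), lbp_code(corner_patch, 1, 1))
```

The histogram, rather than any single code, is what gets fed to a classifier: embedding noise tends to move mass from a few dominant patterns toward rarer ones.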

Edge detection and gradient analysis offer complementary approaches for identifying steganographic artifacts. Sobel operators, Canny edge detectors, and more sophisticated gradient-based methods can reveal modifications to edge structures that indicate hidden data embedding. These techniques are particularly effective when combined with deep learning models that can learn complex edge pattern relationships.

Color space analysis extends beyond simple RGB examination to include HSV, LAB, and other color representations. Steganographic embedding can introduce colorimetric inconsistencies that become apparent when analyzed across multiple color spaces. Advanced computer vision systems automatically select optimal color spaces for detection based on image content and suspected embedding methods.

Object detection and segmentation techniques provide context-aware steganalysis capabilities. By identifying and analyzing specific objects or regions within images, detection systems can focus computational resources on areas most likely to contain hidden information. This targeted approach improves both accuracy and efficiency.

Motion analysis in video steganography leverages optical flow, temporal consistency checks, and frame interpolation techniques to identify unnatural movement patterns that suggest embedded data. These methods are particularly effective against AI-generated video content where temporal coherence may be imperfect.

Metadata and provenance analysis integrates computer vision with digital forensics techniques. Examination of EXIF data, creation timestamps, editing history, and device-specific characteristics can reveal inconsistencies that indicate artificial generation or manipulation for steganographic purposes.

Key insight: Modern computer vision techniques provide multiple complementary approaches for steganalysis, with deep feature extraction and frequency domain analysis showing particular promise for detecting AI-generated steganographic content.

How Can Security Teams Implement Automated Detection Systems?

Implementing effective automated AI steganography detection systems requires careful planning, proper tool selection, and systematic deployment strategies. Security teams must balance detection accuracy with operational efficiency while ensuring seamless integration into existing security infrastructures.

Infrastructure design forms the foundation of successful deployment. Automated detection systems require robust computing resources capable of handling high-throughput image analysis workloads. Cloud-based deployments offer scalability and flexibility, while on-premises solutions provide better control over sensitive data. Hybrid architectures combining both approaches often provide optimal balance between performance and security requirements.

Data pipeline architecture must accommodate various input sources including email attachments, web uploads, social media feeds, and file shares. Automated ingestion systems should normalize incoming data formats, perform preliminary filtering to reduce processing load, and queue analysis tasks efficiently. Message queuing systems like Apache Kafka or RabbitMQ ensure reliable task distribution and prevent system overload.
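The filter-then-queue pattern can be prototyped in-process before committing to a broker. The sketch below uses Python's standard `queue` and `threading` modules as a stand-in for Kafka or RabbitMQ; `sniff_format`, `run_pipeline`, and the `analyze` callback are hypothetical names, and only PNG and JPEG magic bytes are checked.

```python
import queue
import threading

# Magic-byte prefixes for two common image formats
IMAGE_MAGIC = {b"\x89PNG\r\n\x1a\n": "png", b"\xff\xd8\xff": "jpeg"}


def sniff_format(data):
    """Return the image format implied by the leading bytes, or None."""
    for magic, name in IMAGE_MAGIC.items():
        if data.startswith(magic):
            return name
    return None


def run_pipeline(items, analyze, workers=2):
    """Drop non-image payloads, then fan the rest out to worker threads."""
    tasks = queue.Queue()
    results = []
    lock = threading.Lock()

    def worker():
        while True:
            item = tasks.get()
            if item is None:  # sentinel: no more work
                return
            name, data = item
            verdict = analyze(data)
            with lock:
                results.append((name, verdict))

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for t in threads:
        t.start()
    for name, data in items:
        if sniff_format(data) is not None:  # preliminary filtering
            tasks.put((name, data))
    for _ in threads:
        tasks.put(None)  # one sentinel per worker
    for t in threads:
        t.join()
    return sorted(results)
```

Calling `run_pipeline(items, analyze=my_model_fn)` with a list of `(name, bytes)` pairs returns the verdicts for image payloads only. A real deployment would replace the in-memory queue with a durable broker so that tasks survive restarts.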

Model deployment strategies significantly impact system performance and maintenance requirements. Containerized deployments using Docker and Kubernetes enable easy scaling and version management. Model serving frameworks like TensorFlow Serving or TorchServe provide optimized inference capabilities while supporting model versioning and A/B testing for continuous improvement.

```yaml
# Example Docker Compose configuration for steganalysis service
version: '3.8'
services:
  steganalysis-api:
    build: ./steganalysis-service
    ports:
      - "8000:8000"
    environment:
      - MODEL_PATH=/models/steganalysis_transformer.pth
      - GPU_ENABLED=true
    volumes:
      - ./models:/models
    deploy:
      replicas: 3
      resources:
        reservations:
          devices:
            - driver: nvidia
              count: 1
              capabilities: [gpu]

  message-queue:
    image: rabbitmq:3-management
    ports:
      - "5672:5672"
      - "15672:15672"
    environment:
      - RABBITMQ_DEFAULT_USER=admin
      - RABBITMQ_DEFAULT_PASS=securepassword

  database:
    image: postgres:13
    environment:
      - POSTGRES_DB=steganalysis_results
      - POSTGRES_USER=stego_user
      - POSTGRES_PASSWORD=stego_pass
    volumes:
      - stego_data:/var/lib/postgresql/data

volumes:
  stego_data:
```

Integration with Security Information and Event Management (SIEM) systems enables automated response to detected threats. APIs and webhook integrations allow detection systems to send alerts, trigger incident response workflows, and update threat intelligence databases. Standardized formats like STIX/TAXII facilitate information sharing between different security tools and organizations.

Performance monitoring and optimization ensure sustained system effectiveness. Real-time metrics tracking CPU utilization, memory consumption, processing latency, and detection accuracy helps identify bottlenecks and optimization opportunities. Automated scaling policies adjust resource allocation based on workload demands while maintaining acceptable performance levels.

Continuous learning pipelines enable systems to adapt to evolving threats. Automated retraining workflows collect new samples, validate model performance, and deploy updated models without manual intervention. Feedback loops incorporating analyst review and threat intelligence updates ensure models remain current with emerging steganographic techniques.

User interface design facilitates effective analyst interaction with automated systems. Dashboards displaying detection results, confidence scores, and detailed analysis reports help security teams quickly assess potential threats. Interactive tools for manual review and case management streamline incident response processes while maintaining audit trails.

Compliance and privacy considerations require careful attention in automated detection deployments. Data encryption, access controls, and audit logging protect sensitive information processed by detection systems. Privacy-preserving techniques like federated learning enable collaborative model improvement without sharing raw data between organizations.

Testing and validation procedures ensure system reliability and accuracy. Synthetic data generation, adversarial testing, and red team exercises help identify weaknesses in detection capabilities. Regular benchmarking against standard datasets maintains awareness of system performance relative to industry best practices.

Key insight: Successful automated steganography detection requires comprehensive system design encompassing infrastructure, data pipelines, model deployment, integration, and continuous improvement processes.

What Are the Latest Advances in False Positive Reduction?

False positive reduction has become a critical focus area in AI steganography detection as organizations seek to balance security effectiveness with operational efficiency. High false positive rates can overwhelm security teams and lead to alert fatigue, potentially causing real threats to be overlooked. Recent advances in this area have focused on improving detection specificity while maintaining sensitivity to actual steganographic content.

Multi-stage verification architectures employ cascaded detection models to progressively refine suspicion levels. Initial screening uses fast, lightweight models to identify potentially suspicious content, followed by more computationally intensive analysis only for flagged samples. This approach reduces overall processing requirements while maintaining high detection accuracy for genuine threats.

| Detection Stage | Model Type | Processing Time | False Positive Rate | True Positive Rate |
|---|---|---|---|---|
| Initial Screening | Lightweight CNN | 50ms | 15% | 85% |
| Secondary Analysis | Transformer Model | 500ms | 3% | 92% |
| Expert Review | Human Analyst | 5-30 minutes | 0.5% | 98% |
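The cascade in the table can be sketched as a short control flow, with the expensive model called only for samples the fast one flags. The scorers here are stubs, and the thresholds and the 20% flag rate are illustrative assumptions; only the 50 ms and 500 ms timings come from the table.

```python
def cascade(sample, fast_model, slow_model, fast_threshold=0.3, slow_threshold=0.7):
    """Run the cheap scorer first; call the expensive one only if it flags."""
    fast_score = fast_model(sample)
    if fast_score < fast_threshold:
        return {"verdict": "clean", "stage": 1, "score": fast_score}
    slow_score = slow_model(sample)
    if slow_score < slow_threshold:
        return {"verdict": "clean", "stage": 2, "score": slow_score}
    # Anything still suspicious goes to a human analyst
    return {"verdict": "escalate", "stage": 2, "score": slow_score}


def average_latency_ms(flag_rate, fast_ms=50, slow_ms=500):
    """Expected automated processing time per sample for a given stage-1 flag rate."""
    return fast_ms + flag_rate * slow_ms


# Stub scorers that read precomputed scores from the sample (hypothetical)
def fast(sample):
    return sample["fast"]


def slow(sample):
    return sample["slow"]


print(cascade({"fast": 0.1, "slow": 0.9}, fast, slow))
print(cascade({"fast": 0.6, "slow": 0.8}, fast, slow))
print(average_latency_ms(0.2))
```

If stage one flags one sample in five, the average automated cost is about 150 ms per sample instead of the 550 ms it would take to run both models on everything.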

Context-aware filtering represents another significant advance in false positive reduction. Modern detection systems incorporate metadata analysis, source reputation scoring, and behavioral profiling to assess the likelihood that flagged content represents actual threats rather than benign anomalies. For example, images from trusted sources or with consistent metadata histories may receive lower suspicion scores despite exhibiting steganographic artifacts.

Ensemble disagreement analysis leverages the principle that false positives often produce inconsistent results across different detection models. By comparing outputs from multiple independent detectors, systems can identify cases where only some models flag content as suspicious, indicating potential false positives. Weighted voting schemes assign different confidence levels to various models based on their historical performance.
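A rough sketch of how weighted voting and disagreement can be combined is shown below. The detector names, weights, and both thresholds are hypothetical; the spread is measured as the population standard deviation of the individual scores.

```python
from statistics import pstdev


def ensemble_verdict(scores, weights, flag_at=0.5, max_spread=0.25):
    """Weighted vote plus a disagreement check across detectors.

    scores and weights are dicts keyed by detector name.
    """
    total_weight = sum(weights[name] for name in scores)
    weighted = sum(scores[name] * weights[name] for name in scores) / total_weight
    spread = pstdev(list(scores.values()))
    if weighted < flag_at:
        verdict = "pass"
    elif spread <= max_spread:
        verdict = "flag"      # detectors agree that the sample is suspicious
    else:
        verdict = "review"    # high score but detectors disagree: possible false positive
    return verdict, weighted, spread


# Hypothetical detectors, weighted by historical performance
weights = {"cnn": 1.0, "vit": 2.0, "freq": 1.0}
agree = {"cnn": 0.90, "vit": 0.85, "freq": 0.80}
split = {"cnn": 0.95, "vit": 0.60, "freq": 0.05}
print(ensemble_verdict(agree, weights))
print(ensemble_verdict(split, weights))
```

Routing disagreements to review rather than discarding them keeps the false positive rate down without silently dropping samples that a single strong detector considers suspicious.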

Bayesian refinement techniques incorporate prior probabilities and contextual information to adjust detection confidence scores. Rather than relying solely on model outputs, these approaches consider factors such as threat actor behavior patterns, recent incident trends, and organizational risk profiles to produce more nuanced threat assessments.
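The effect of the prior is easy to underestimate, so a worked example helps. The sketch below applies Bayes' rule using the 92% true positive and 3% false positive rates from the secondary-analysis row of the table above; the two prior probabilities are assumptions chosen for illustration.

```python
def posterior_stego(prior, tpr, fpr):
    """P(stego | alert) from the prior and the detector's error rates."""
    evidence = tpr * prior + fpr * (1 - prior)
    return tpr * prior / evidence


# Assumed priors: 1 in 1,000 images for general traffic,
# 1 in 20 for a source already linked to suspicious activity
general = posterior_stego(prior=0.001, tpr=0.92, fpr=0.03)
suspicious_source = posterior_stego(prior=0.05, tpr=0.92, fpr=0.03)
print(f"{general:.3f} {suspicious_source:.3f}")
```

With the rare-event prior, an alert corresponds to roughly a 3% chance of real steganography. The same alert from the higher-risk source is closer to 62%, which is why contextual priors change triage order so much.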

Active learning feedback loops enable continuous improvement in false positive reduction. Analyst reviews of flagged content provide ground truth labels that inform model retraining and parameter tuning. Semi-supervised learning approaches can leverage large volumes of unlabeled data to improve generalization while reducing reliance on expensive labeled examples.
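A common way to decide which flagged items analysts should label is uncertainty sampling, where the samples the model is least sure about are reviewed first. The source does not name a specific selection strategy, so the sketch below is one assumed option; `select_for_review` and the sample identifiers are hypothetical.

```python
from typing import Dict, List


def select_for_review(scores: Dict[str, float], budget: int) -> List[str]:
    """Pick the `budget` samples whose scores are closest to 0.5 (most uncertain)."""
    ranked = sorted(scores, key=lambda sample_id: abs(scores[sample_id] - 0.5))
    return ranked[:budget]


# Hypothetical detector outputs keyed by sample id.
pending = {"a": 0.95, "b": 0.52, "c": 0.10, "d": 0.45}
queue = select_for_review(pending, budget=2)
```

Labels collected for the queued samples would then feed the next retraining round, concentrating analyst time where a label changes the model the most.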

Adversarial robustness training helps models distinguish between legitimate image variations and malicious steganographic embedding. By exposing models to carefully crafted adversarial examples during training, systems become more resilient to both intentional evasion attempts and natural variations that might otherwise trigger false alarms.

Temporal correlation analysis examines patterns of suspicious activity over time to identify coordinated attacks versus isolated false positives. Recurring detection events from the same sources or exhibiting similar characteristics may indicate genuine threats rather than random anomalies.
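A minimal sketch of this idea is a sliding window over detection timestamps per source. The function name, the event format of `(timestamp_seconds, source)` tuples, and the window and count parameters are all assumptions made for the example.

```python
from collections import defaultdict
from typing import Iterable, Set, Tuple


def recurring_sources(
    events: Iterable[Tuple[float, str]],
    window_seconds: float,
    min_events: int,
) -> Set[str]:
    """Return sources with at least `min_events` detections inside any window."""
    by_source = defaultdict(list)
    for timestamp, source in events:
        by_source[source].append(timestamp)

    flagged = set()
    for source, times in by_source.items():
        times.sort()
        start = 0
        for end in range(len(times)):
            # Shrink the window from the left until it spans <= window_seconds.
            while times[end] - times[start] > window_seconds:
                start += 1
            if end - start + 1 >= min_events:
                flagged.add(source)
                break
    return flagged


# Hypothetical detections: source "a" recurs within an hour, "b" does not.
events = [(0, "a"), (100, "a"), (200, "a"), (50, "b"), (10000, "b")]
suspects = recurring_sources(events, window_seconds=3600, min_events=3)
```

Isolated detections such as the two from source "b" stay below the count threshold and can be treated as lower priority.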

Human-in-the-loop validation provides crucial oversight for automated detection systems. Experienced analysts can review borderline cases, provide feedback for system improvement, and make final determinations on contentious detections. This hybrid approach combines machine efficiency with human judgment to optimize overall performance.

Calibration techniques ensure that confidence scores accurately reflect true probability of steganographic presence. Well-calibrated models produce confidence estimates that align with empirical detection rates, enabling more informed decision-making about which alerts require immediate attention.
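One widely used way to check calibration is the expected calibration error (ECE), which bins predictions by confidence and compares the mean confidence in each bin with the observed fraction of positives. The sketch below is a plain-Python version for binary labels; the bin count and the sample data are illustrative assumptions.

```python
from typing import List, Sequence, Tuple


def expected_calibration_error(
    confidences: Sequence[float],
    labels: Sequence[int],
    n_bins: int = 10,
) -> float:
    """Weighted mean gap between predicted probability and observed positive rate."""
    n = len(confidences)
    if n == 0:
        return 0.0
    bins: List[List[Tuple[float, int]]] = [[] for _ in range(n_bins)]
    for conf, label in zip(confidences, labels):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in last bin
        bins[index].append((conf, label))

    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(c for c, _ in bucket) / len(bucket)
        positive_rate = sum(y for _, y in bucket) / len(bucket)
        ece += (len(bucket) / n) * abs(mean_conf - positive_rate)
    return ece


# A model that says 0.9 and is right 9 times in 10 is well calibrated...
well_calibrated = expected_calibration_error([0.9] * 10, [1] * 9 + [0])
# ...while one that says 0.9 and is right half the time is overconfident.
overconfident = expected_calibration_error([0.9] * 10, [1] * 5 + [0] * 5)
```

An ECE near zero means a score of 0.9 can be read as roughly a 90% chance of hidden content, which is what makes score-based alert prioritization meaningful.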

Key insight: Advanced false positive reduction combines multi-stage verification, contextual analysis, ensemble methods, and active learning to minimize unnecessary alerts while maintaining high detection accuracy for genuine threats.

Key Takeaways

• AI-generated media introduces new steganographic vulnerabilities that traditional detection methods cannot address effectively
• Transformer-based models excel at identifying distributed steganographic artifacts through holistic image analysis
• Computer vision techniques including deep feature extraction and frequency domain analysis provide powerful detection capabilities
• Automated detection systems require careful infrastructure design, data pipeline architecture, and integration planning
• Multi-stage verification and context-aware filtering significantly reduce false positive rates while maintaining detection accuracy
• Continuous learning and human-in-the-loop validation ensure systems adapt to evolving threats
• Modern AI steganalysis tools dramatically outperform traditional statistical approaches in both accuracy and adaptability

Frequently Asked Questions

Q: How do AI steganography techniques differ from traditional methods?

AI steganography leverages deep learning models like GANs and diffusion models to embed hidden data while maintaining visual quality that surpasses traditional LSB or DCT-based methods. These techniques can adaptively distribute information across images, making detection significantly more challenging than conventional approaches.

Q: What makes transformer models particularly effective for steganalysis?

Transformer models excel at steganalysis because they can capture long-range dependencies and contextual relationships across entire images. Their attention mechanisms identify subtle, distributed artifacts that convolutional networks might miss, while their ability to process multimodal data enhances detection of cross-domain inconsistencies.

Q: How can organizations reduce false positive rates in automated detection systems?

Organizations can reduce false positives through multi-stage verification architectures, context-aware filtering, ensemble disagreement analysis, and Bayesian refinement techniques. Incorporating human feedback and continuous learning also helps systems distinguish between genuine threats and benign anomalies.

Q: What computational resources are needed for effective AI steganalysis?

Effective AI steganalysis typically requires GPU-accelerated computing with at least 16GB VRAM for transformer models, substantial storage for training datasets, and scalable infrastructure for high-throughput processing. Cloud-based deployments can provide flexible resource allocation for variable workloads.

Q: How do modern detection systems handle encrypted or obfuscated steganographic content?

Modern detection systems focus on identifying structural and statistical anomalies rather than attempting direct content recovery. They analyze embedding artifacts, compression interactions, and metadata inconsistencies that persist even when hidden data is encrypted, making detection possible without accessing the concealed information.
