NPM Package Signature Verification: Complete Implementation Guide

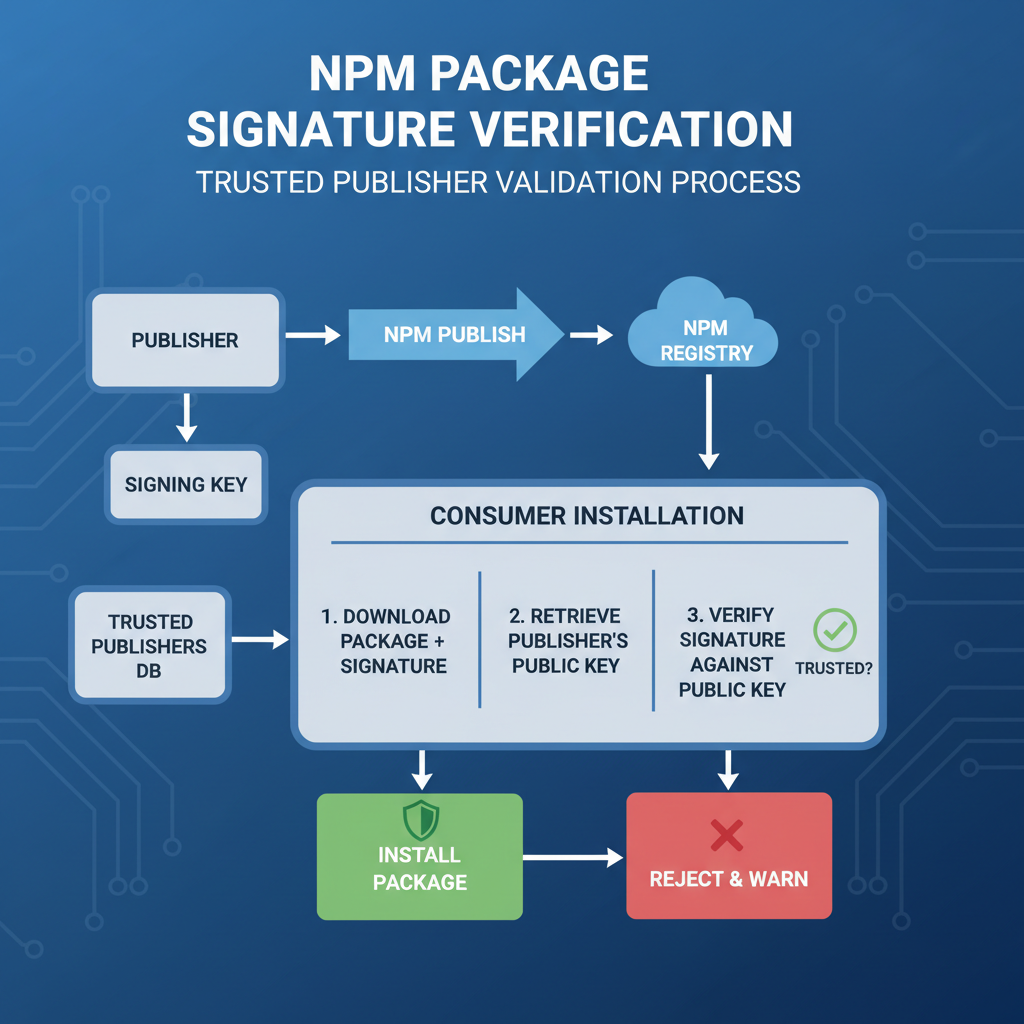

In the wake of several high-profile supply chain attacks targeting Node.js ecosystems in late 2025, the urgency to implement robust npm package signature verification has never been greater. These incidents demonstrated how attackers could compromise widely-used packages, leading to widespread vulnerability propagation across countless applications. As organizations scramble to secure their software supply chains, understanding and implementing effective signature verification mechanisms becomes paramount.

While major registries like npmjs.com have begun supporting package signing capabilities, adoption rates remain disappointingly low. The primary barrier? Complexity. Many developers find themselves overwhelmed by the technical intricacies involved in setting up proper signature verification workflows. This guide aims to demystify the process by providing step-by-step instructions for implementing npm package signature verification using Sigstore cosign, establishing internal signing policies, managing key rotation, and integrating verification checks into continuous integration/continuous deployment (CI/CD) pipelines.

We'll explore both the theoretical foundations and practical implementation details required for securing your Node.js dependencies. From configuring Sigstore cosign integration to handling edge cases in large-scale deployments, this comprehensive resource will equip you with the knowledge needed to protect your organization's software supply chain. Additionally, we'll highlight common pitfalls that often derail implementation efforts and provide actionable strategies for overcoming them.

Throughout this guide, we'll demonstrate how modern AI-powered tools like mr7.ai can accelerate your security workflow, from initial setup to ongoing maintenance. Whether you're a seasoned security professional or a developer looking to enhance your project's security posture, this guide provides the technical depth and practical insights necessary for successful npm package signature verification implementation.

How Do You Configure Sigstore Cosign Integration for NPM Packages?

Configuring Sigstore cosign integration represents the foundational step in establishing npm package signature verification. Before diving into the technical implementation, it's essential to understand the core components involved in this process. Sigstore provides a framework for software transparency and cryptographic signing that eliminates the need for managing traditional PKI infrastructure. For npm packages, this means leveraging cosign to create verifiable signatures that can be validated during installation or runtime.

The first step involves installing the cosign CLI tool, which serves as the primary interface for generating and verifying signatures. Modern distributions make this straightforward:

```bash
# Install the cosign release binary (Linux, amd64); on macOS, `brew install cosign`
curl -LO https://github.com/sigstore/cosign/releases/latest/download/cosign-linux-amd64
sudo install cosign-linux-amd64 /usr/local/bin/cosign

# Verify installation
cosign version
```

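For Windows environments, prebuilt Windows binaries are published on the same releases page. Once cosign is installed, decide how signatures will be created. For production, keyless signing is generally preferable: cosign generates an ephemeral key pair and Fulcio issues a short-lived certificate bound to your OIDC identity, so there is no long-lived private key to protect. Where you need a persistent key (for example, for offline signing), generate a key pair: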

```bash
# Generate a password-protected key pair
cosign generate-key-pair

# This creates cosign.key (encrypted private key) and cosign.pub (public key).
# Store these securely - never commit cosign.key to version control.
```

Next, configure your npm registry to support signed packages. This typically involves setting up a custom registry that can store and serve package signatures alongside the actual packages. For public packages on npmjs.com, you can rely on the registry's built-in signatures and provenance attestations, which `npm audit signatures` checks for installed dependencies; private registries require additional configuration:

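```ini
; .npmrc configuration
registry=https://your-private-registry.com/
; Auth tokens are scoped to the registry host; ${NPM_TOKEN} is read from the environment
//your-private-registry.com/:_authToken=${NPM_TOKEN}
```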

To sign an npm package, you'll need to create a signature for the package tarball. This process involves:

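```bash
# First, pack your package (produces your-package-1.0.0.tgz)
npm pack

# Sign the resulting tarball
cosign sign-blob your-package-1.0.0.tgz --key cosign.key --output-signature package.sig

# Publish the exact tarball that was signed. npm publish does not upload
# package.sig, so distribute the signature through your registry or release assets.
npm publish your-package-1.0.0.tgz
```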

Verification requires checking both the package integrity and the associated signature. This can be accomplished programmatically:

```javascript
const { execFileSync } = require('child_process');

function verifyPackageSignature(packagePath, signaturePath, publicKeyPath) {
  try {
    // Arguments are passed as an array so file paths are never parsed by a shell.
    // cosign exits non-zero on a bad signature, which makes execFileSync throw.
    const result = execFileSync(
      'cosign',
      ['verify-blob', packagePath, '--signature', signaturePath, '--key', publicKeyPath],
      { encoding: 'utf8', stdio: 'pipe' }
    );
    console.log('Signature verification successful:', result);
    return true;
  } catch (error) {
    console.error('Signature verification failed:', error.message);
    return false;
  }
}

// Usage
verifyPackageSignature('./my-package-1.0.0.tgz', './package.sig', './cosign.pub');
```
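The function above covers the signature half of verification. For the integrity half, npm records a Subresource Integrity string (typically `sha512-` followed by a base64 digest) in the `integrity` field of `package-lock.json`; recomputing it over the downloaded tarball confirms that the bytes match what the lockfile pinned. A minimal sketch, assuming the tarball is already on disk; `computeIntegrity` and `matchesLockfileIntegrity` are hypothetical helper names.

```javascript
const crypto = require('crypto');
const fs = require('fs');

// Builds an SRI string in the "<algorithm>-<base64 digest>" form npm writes.
function computeIntegrity(buffer, algorithm = 'sha512') {
  const digest = crypto.createHash(algorithm).update(buffer).digest('base64');
  return `${algorithm}-${digest}`;
}

// Compares a tarball on disk with the lockfile's integrity value.
// Only the first whitespace-separated SRI token is checked in this sketch.
function matchesLockfileIntegrity(tarballPath, expectedIntegrity) {
  const [expected] = expectedIntegrity.trim().split(/\s+/);
  const algorithm = expected.slice(0, expected.indexOf('-'));
  const actual = computeIntegrity(fs.readFileSync(tarballPath), algorithm);
  return actual === expected;
}

// Usage (lockEntry is the package's entry in package-lock.json):
// matchesLockfileIntegrity('./my-package-1.0.0.tgz', lockEntry.integrity);
```

Running both checks matters: a valid signature over the wrong tarball, or a matching hash with no trusted signature, should each fail verification.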

Configuration also extends to your build environment. Ensure that cosign is available in all relevant CI/CD contexts and that proper authentication mechanisms are in place. This might involve setting up service accounts or integrating with identity providers that can issue temporary signing credentials.

A crucial aspect of configuration involves establishing trust relationships. You'll need to determine which entities are authorized to sign packages within your ecosystem. This typically involves maintaining a list of trusted public keys or certificate authorities:

```yaml
# Example trust policy configuration (illustrative schema, not a standard resource type)
apiVersion: v1
kind: TrustPolicy
metadata:
  name: npm-signing-policy
spec:
  trustedIdentities:
    - email: "[email protected]"
    - issuer: https://accounts.google.com
  signatureVerification:
    level: strict
```
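A verifier has to apply such a policy to the identity recorded in each signature. A minimal sketch, assuming the signer's email and OIDC issuer have already been extracted from a verified signing certificate; the policy object shape, the example addresses, and `isTrustedSigner` are all hypothetical. Unlike the YAML above, this sketch pairs each email with the issuer that must have authenticated it, since an email is only meaningful together with its issuer (cosign's keyless verification likewise asks for both a certificate identity and an OIDC issuer).

```javascript
// Hypothetical trust policy: each entry pairs a signer email with the
// OIDC issuer that must have authenticated it. Addresses are placeholders.
const trustPolicy = {
  trustedIdentities: [
    { email: 'release-bot@example.com', issuer: 'https://accounts.google.com' },
  ],
};

// True only when subject AND issuer match the same trusted entry.
function isTrustedSigner(policy, signer) {
  return policy.trustedIdentities.some(
    (id) => id.email === signer.email && id.issuer === signer.issuer
  );
}

// Usage: the identity would come from the verified signing certificate
console.log(isTrustedSigner(trustPolicy, {
  email: 'release-bot@example.com',
  issuer: 'https://accounts.google.com',
})); // true
console.log(isTrustedSigner(trustPolicy, {
  email: 'release-bot@example.com',
  issuer: 'https://evil.example',
})); // false
```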

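Finally, consider implementing automated signature generation as part of your release pipeline. This ensures that every published package receives a valid signature without manual intervention. Tools like GitHub Actions can be configured to sign packages automatically during publishing; for packages on npmjs.com, running `npm publish --provenance` from a GitHub Actions workflow that has the `id-token: write` permission attaches a Sigstore-signed provenance attestation.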

Pro Tip: You can practice these techniques using mr7.ai's KaliGPT - get 10,000 free tokens to start. Or automate the entire process with mr7 Agent.

Actionable Insight: Proper Sigstore cosign configuration requires careful attention to key management, registry integration, and trust policy establishment. Start with a simple proof-of-concept implementation before scaling to production environments.

What Are the Best Practices for Establishing Internal Signing Policies?

Establishing robust internal signing policies forms the backbone of any effective npm package signature verification strategy. Without clear, well-defined policies, even the most technically sound implementation can fail to provide adequate protection. These policies govern who can sign packages, under what circumstances, and how signatures should be verified throughout the software development lifecycle.

The foundation of any signing policy begins with defining roles and responsibilities. Create distinct categories of signers based on their authority levels and responsibilities:

| Role Type | Permissions | Examples |
|---|---|---|
| Publisher | Can sign and publish packages | Package maintainers |
| Reviewer | Can approve signing requests | Security team members |
| Administrator | Can manage signing infrastructure | DevOps engineers |
| External Partner | Limited signing rights | Third-party vendors |

Each role should have clearly defined criteria for authorization. For instance, publishers might require two-factor authentication and completion of security training, while reviewers need additional approval privileges.
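These roles and criteria translate directly into an authorization check. A minimal sketch under stated assumptions: the permission names, the `ROLES` map, and `canPerform` are hypothetical; the text applies two-factor authentication and training to publishers, and this sketch assumes 2FA for every role and training for anyone who signs or publishes; the partners' "limited signing rights" are modeled as an allowlist of npm scopes.

```javascript
// Hypothetical role model mirroring the table above. Permission names are
// illustrative; they are not part of any npm or Sigstore API.
const ROLES = {
  publisher: { permissions: ['sign', 'publish'] },
  reviewer: { permissions: ['approve'] },
  administrator: { permissions: ['manage_infrastructure'] },
  // "Limited signing rights": partners may sign only inside allowed npm scopes
  external_partner: { permissions: ['sign'], allowedScopes: ['@partner'] },
};

const SIGNING_ACTIONS = new Set(['sign', 'publish']);

// Returns true when `user` may perform `action` on `packageName`.
function canPerform(user, action, packageName) {
  const role = ROLES[user.role];
  if (!role || !role.permissions.includes(action)) return false;

  // Every role must use 2FA; signing actions also require security training.
  if (!user.twoFactorEnabled) return false;
  if (SIGNING_ACTIONS.has(action) && !user.securityTrainingCompleted) return false;

  if (role.allowedScopes) {
    const scope = packageName.startsWith('@') ? packageName.split('/')[0] : null;
    return scope !== null && role.allowedScopes.includes(scope);
  }
  return true;
}

// Usage
const maintainer = { role: 'publisher', twoFactorEnabled: true, securityTrainingCompleted: true };
console.log(canPerform(maintainer, 'publish', 'my-lib')); // true
console.log(canPerform(maintainer, 'approve', 'my-lib')); // false
```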

Implementing policy enforcement requires establishing technical controls that align with your organizational requirements. Consider using policy-as-code approaches to define and enforce signing rules:

```rego
# Example policy definition using OPA (Open Policy Agent), Rego v1 syntax
package npm.signing

import rego.v1

default allow := false

allow if {
    # Only allow packages from trusted publishers
    input.publisher.email == data.trusted_publishers[_]

    # Require multi-signature approval for critical packages
    count(input.approvers) >= 2

    # Enforce time-based restrictions (timestamps in nanoseconds)
    now := time.now_ns()
    input.timestamp < now
    input.expiry > now
}

deny contains msg if {
    not input.publisher.certified
    msg := "Publisher not certified for signing"
}
```

Certificate management becomes critical when implementing internal signing policies. Establish procedures for issuing, renewing, and revoking signing certificates:

```bash
# Generate a CA certificate for internal use (-subj values are examples;
# -nodes leaves the CA key unencrypted, so keep it offline or in an HSM)
openssl req -x509 -newkey rsa:4096 -keyout ca-key.pem -out ca-cert.pem -days 365 -nodes \
  -subj "/CN=Internal Code Signing CA"

# Issue signing certificates with specific constraints
openssl req -newkey rsa:2048 -keyout signer-key.pem -out signer-csr.pem -nodes \
  -subj "/CN=npm-signer"
openssl x509 -req -in signer-csr.pem -CA ca-cert.pem -CAkey ca-key.pem -CAcreateserial \
  -out signer-cert.pem -days 180 -extfile <(cat <<EOF
basicConstraints=CA:FALSE
keyUsage=digitalSignature
extendedKeyUsage=codeSigning
EOF
)
```

Time-based constraints add another layer of security to your signing policies. Implement expiration mechanisms that prevent indefinite use of signing credentials:

```javascript
// Example policy enforcement function
function validateSigningRequest(request) {
  const now = Date.now();
  const maxValidityPeriod = 24 * 60 * 60 * 1000; // 24 hours in milliseconds

  if (request.expiresAt - request.createdAt > maxValidityPeriod) {
    throw new Error('Signing request validity period exceeds maximum allowed');
  }

  if (request.expiresAt < now) {
    throw new Error('Signing request has expired');
  }

  // Additional policy checks...
  return true;
}
```

Monitoring and auditing represent essential components of effective signing policies. Implement comprehensive logging for all signing activities:

```json
{
  "timestamp": "2026-03-19T10:30:00Z",
  "event": "package_signed",
  "package": "[email protected]",
  "signer": "[email protected]",
  "certificate_fingerprint": "abc123...",
  "policy_violations": [],
  "audit_trail": [
    "approval_granted_by_security_team",
    "vulnerability_scan_completed",
    "dependency_check_passed"
  ]
}
```
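A small helper can keep every record in that shape. A minimal sketch: `buildSigningAuditEvent` is a hypothetical name, the package, signer, and certificate bytes in the usage are placeholders, and the fingerprint is a SHA-256 digest over the certificate's DER encoding. With a real PEM certificate, Node's `crypto.X509Certificate` exposes that DER encoding as its `raw` property.

```javascript
const crypto = require('crypto');

// Builds one audit event with the field names used in the example above.
function buildSigningAuditEvent({ pkg, signer, certificateDer, violations = [], trail = [] }) {
  return {
    timestamp: new Date().toISOString(),
    event: 'package_signed',
    package: pkg,
    signer,
    // Hex-encoded SHA-256 over the DER-encoded certificate
    certificate_fingerprint: crypto.createHash('sha256').update(certificateDer).digest('hex'),
    policy_violations: violations,
    audit_trail: trail,
  };
}

// Usage: emit one JSON object per line so log shippers can parse it
const event = buildSigningAuditEvent({
  pkg: 'example-lib@1.2.3',
  signer: 'release-bot@example.com',
  certificateDer: Buffer.from('placeholder certificate bytes'),
  trail: ['approval_granted_by_security_team', 'dependency_check_passed'],
});
console.log(JSON.stringify(event));
```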

Consider implementing automated policy validation as part of your CI/CD pipeline. This ensures that policy violations are caught early in the development process:

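```yaml
# GitHub Actions example
name: Signing Policy Validation

on: [push, pull_request]

jobs:
  validate-policy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Install policy engine
        run: |
          curl -L -o opa https://openpolicyagent.org/downloads/latest/opa_linux_amd64_static
          chmod 755 opa
          sudo mv opa /usr/local/bin/opa

      - name: Validate signing policy
        # --fail exits non-zero when the query is undefined, i.e. when allow is not true
        run: |
          opa eval \
            --fail \
            --input policy-input.json \
            --data signing-policy.rego \
            --format pretty \
            'data.npm.signing.allow == true'
```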

Regular policy reviews ensure that your signing practices remain aligned with evolving security requirements. Schedule quarterly reviews to assess policy effectiveness and update as needed.

Actionable Insight: Internal signing policies should balance security requirements with operational efficiency. Start with conservative policies and gradually expand permissions as your team gains experience with the signing process.

How Should You Handle Key Rotation and Certificate Management?

Key rotation and certificate management form critical aspects of maintaining secure npm package signature verification systems over time. Without proper key management practices, even the strongest initial security measures can become compromised through key exposure or aging vulnerabilities. Effective key rotation requires strategic planning, automated processes, and robust backup mechanisms.

Understanding the key lifecycle is fundamental to developing effective rotation strategies. Keys progress through several phases: generation, active use, rotation preparation, inactive status, and eventual destruction. Each phase requires specific handling procedures:
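These phases can be enforced in code so that, for example, a destroyed key can never be reactivated. A minimal sketch: the `SigningKeyRecord` class and its transition table are hypothetical, and this version assumes an old key may keep signing during rotation preparation and may still verify signatures while inactive.

```javascript
// Lifecycle phases named in the text. The allowed transitions are an
// illustrative policy, not a standard; adapt them to your procedures.
const TRANSITIONS = {
  generated: ['active', 'destroyed'],
  active: ['rotation_preparation'],
  rotation_preparation: ['inactive'],
  inactive: ['destroyed'],
  destroyed: [],
};

class SigningKeyRecord {
  constructor(keyId) {
    this.keyId = keyId;
    this.phase = 'generated';
    this.history = [{ phase: 'generated', at: new Date().toISOString() }];
  }

  transition(nextPhase) {
    const allowed = TRANSITIONS[this.phase] || [];
    if (!allowed.includes(nextPhase)) {
      throw new Error(`Illegal transition ${this.phase} -> ${nextPhase} for ${this.keyId}`);
    }
    this.phase = nextPhase;
    this.history.push({ phase: nextPhase, at: new Date().toISOString() });
  }

  // The old key keeps signing while its successor is being staged.
  canSign() {
    return this.phase === 'active' || this.phase === 'rotation_preparation';
  }

  // Retired (inactive) keys still verify signatures they produced earlier.
  canVerify() {
    return ['active', 'rotation_preparation', 'inactive'].includes(this.phase);
  }
}

// Usage
const key = new SigningKeyRecord('npm-signing-key-2026');
key.transition('active');
key.transition('rotation_preparation');
key.transition('inactive');
console.log(key.canSign(), key.canVerify()); // false true
```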

```bash
#!/bin/bash
# Key lifecycle management script
set -euo pipefail

KEY_NAME="npm-signing-key"
KEY_DIR="/etc/signing-keys"
BACKUP_DIR="/backup/signing-keys"

# Generate new key pair (openssl prompts for the AES-256 passphrase unless -pass is given)
generate_new_key() {
  echo "Generating new signing key..."
  openssl genpkey -algorithm RSA -out "${KEY_DIR}/${KEY_NAME}-new.pem" -aes256
  openssl rsa -pubout -in "${KEY_DIR}/${KEY_NAME}-new.pem" -out "${KEY_DIR}/${KEY_NAME}-new.pub"

  # Back up immediately
  cp "${KEY_DIR}/${KEY_NAME}-new."* "${BACKUP_DIR}/"
  chmod 600 "${BACKUP_DIR}/${KEY_NAME}-new.pem"
}

# Rotate active key
rotate_key() {
  echo "Rotating signing key..."

  # Back up current key
  cp "${KEY_DIR}/${KEY_NAME}.pem" "${BACKUP_DIR}/${KEY_NAME}-$(date +%Y%m%d).pem"

  # Activate new key
  mv "${KEY_DIR}/${KEY_NAME}-new.pem" "${KEY_DIR}/${KEY_NAME}.pem"
  mv "${KEY_DIR}/${KEY_NAME}-new.pub" "${KEY_DIR}/${KEY_NAME}.pub"

  # Update key references in applications (fingerprint values are placeholders)
  sed -i "s/old-key-fingerprint/new-key-fingerprint/g" /etc/app-config.json
}
```

Automated key rotation reduces human error and ensures consistent execution. Implement scheduled rotation tasks that follow industry best practices:

```python
# Python script for automated key rotation
import json
import os
import shutil
import subprocess
from datetime import datetime, timedelta


class KeyManager:
    def __init__(self, config_path):
        with open(config_path) as f:
            self.config = json.load(f)

    def should_rotate(self):
        # Check if key age (from file mtime) exceeds the rotation interval in days
        key_info = os.stat(self.config['private_key_path'])
        key_age = datetime.now() - datetime.fromtimestamp(key_info.st_mtime)
        return key_age > timedelta(days=self.config['rotation_interval'])

    def rotate_keys(self):
        print("Starting key rotation process...")
        # Generate new key pair; check=True stops the rotation if openssl fails
        # (openssl prompts for the AES-256 passphrase unless -pass is supplied)
        subprocess.run(['openssl', 'genpkey', '-algorithm', 'RSA',
                        '-out', self.config['temp_private_key'], '-aes256'], check=True)
        # Extract public key
        subprocess.run(['openssl', 'rsa', '-pubout',
                        '-in', self.config['temp_private_key'],
                        '-out', self.config['temp_public_key']], check=True)
        # Back up old keys; the old public key is still needed to verify past signatures
        timestamp = datetime.now().strftime('%Y%m%d')
        backup_dir = self.config['backup_dir']
        shutil.move(self.config['private_key_path'], f"{backup_dir}/private-{timestamp}.pem")
        shutil.move(self.config['public_key_path'], f"{backup_dir}/public-{timestamp}.pem")
        # Activate new keys
        os.replace(self.config['temp_private_key'], self.config['private_key_path'])
        os.replace(self.config['temp_public_key'], self.config['public_key_path'])
        print("Key rotation completed successfully")


# Usage
if __name__ == "__main__":
    km = KeyManager('/etc/key-manager/config.json')
    if km.should_rotate():
        km.rotate_keys()
```

Certificate management adds another layer of complexity, particularly when dealing with certificate authorities and intermediate certificates. Implement centralized certificate management systems:

```bash
# Certificate management utilities

# Renew certificate before expiration
renew_certificate() {
  local cert_file="$1"
  local expiry_date now_epoch expiry_epoch days_remaining
  expiry_date=$(openssl x509 -in "$cert_file" -noout -enddate | cut -d= -f2)
  now_epoch=$(date -u +%s)
  expiry_epoch=$(date -d "$expiry_date" +%s)  # GNU date; BSD/macOS date uses different flags
  days_remaining=$(( (expiry_epoch - now_epoch) / 86400 ))

  if [ "$days_remaining" -lt 30 ]; then
    echo "Certificate expires in $days_remaining days. Initiating renewal..."
    :  # Renewal logic here
  fi
}

# Revoke compromised certificates (requires an `openssl ca` configuration and index database)
revoke_certificate() {
  local cert_file="$1" ca_key="$2" ca_cert="$3"

  # -revoke expects the certificate file to revoke
  openssl ca -revoke "$cert_file" -keyfile "$ca_key" -cert "$ca_cert"
  openssl ca -gencrl -keyfile "$ca_key" -cert "$ca_cert" -out revoked-certs.crl
}
```

Backup and recovery procedures ensure business continuity even during key management failures. Implement redundant storage and regular backup verification:

```yaml
# Backup configuration
backup:
  enabled: true
  destinations:
    - type: s3
      bucket: signing-keys-backup
      region: us-west-2
      encryption: aes256
    - type: local
      path: /mnt/backups/signing-keys
  schedule: "0 2 * * *"  # Daily at 2 AM
  retention: 90          # Keep backups for 90 days
  verification:
    enabled: true
    interval: 7          # Verify weekly
```
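The `retention: 90` setting implies a pruning step that the configuration alone does not show. A minimal sketch of selecting expired backups, assuming each backup is described by a hypothetical `{ name, createdAt }` record; actually deleting the files is left out.

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;

// Returns the names of backups older than the retention window.
function backupsToPrune(backups, retentionDays, now = new Date()) {
  const cutoff = now.getTime() - retentionDays * DAY_MS;
  return backups
    .filter((b) => b.createdAt.getTime() < cutoff)
    .map((b) => b.name);
}

// Usage
const now = new Date('2026-03-19T02:00:00Z');
const backups = [
  { name: 'npm-signing-key-20260318.pem', createdAt: new Date('2026-03-18T02:00:00Z') },
  { name: 'npm-signing-key-20251201.pem', createdAt: new Date('2025-12-01T02:00:00Z') },
];
console.log(backupsToPrune(backups, 90, now)); // [ 'npm-signing-key-20251201.pem' ]
```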

Monitoring key usage patterns helps identify potential security issues and optimize rotation schedules. Track metrics such as:

- Number of signatures created per key

- Geographic distribution of signing requests

- Anomalous access patterns

- Failed signature attempts

Implement alerting mechanisms for suspicious activities:

```javascript
// Key usage monitoring
const keyUsageMonitor = {
  trackUsage(keyId, operation, metadata) {
    const usageRecord = { timestamp: Date.now(), keyId, operation, ...metadata };

    // Log to monitoring system
    this.logUsage(usageRecord);

    // Check for anomalies
    this.checkAnomalies(usageRecord);
  },

  checkAnomalies(record) {
    // Detect unusual patterns
    if (record.operation === 'sign' && record.location !== 'expected-datacenter') {
      this.alertSecurityTeam(`Unexpected signing location: ${record.location}`);
    }

    // Rate limiting
    const recentSignatures = this.getRecentSignatures(record.keyId, 3600000); // Last hour
    if (recentSignatures.length > 100) {
      this.alertSecurityTeam(`High signing rate detected for key ${record.keyId}`);
    }
  },

  // logUsage, getRecentSignatures and alertSecurityTeam are provided by your
  // logging and alerting stack.
};
```
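The monitor relies on `getRecentSignatures` without defining it. One way to back it is a per-key sliding window of signing timestamps. A minimal in-memory sketch: `SigningRateTracker` is hypothetical, it assumes timestamps arrive in ascending order, and a multi-host deployment would need a shared store instead.

```javascript
// In-memory sliding window of signing timestamps per key.
class SigningRateTracker {
  constructor(maxWindowMs = 60 * 60 * 1000) {
    this.maxWindowMs = maxWindowMs;
    this.events = new Map(); // keyId -> ascending array of timestamps
  }

  record(keyId, timestamp = Date.now()) {
    if (!this.events.has(keyId)) this.events.set(keyId, []);
    const list = this.events.get(keyId);
    list.push(timestamp);
    // Drop entries that can no longer fall inside the largest window
    while (list.length > 0 && list[0] <= timestamp - this.maxWindowMs) list.shift();
  }

  getRecentSignatures(keyId, windowMs, now = Date.now()) {
    const list = this.events.get(keyId) || [];
    return list.filter((t) => t > now - windowMs);
  }
}

// Usage: back the monitor's rate check with the tracker, e.g.
const tracker = new SigningRateTracker();
// keyUsageMonitor.getRecentSignatures = (keyId, windowMs) =>
//   tracker.getRecentSignatures(keyId, windowMs);
```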

Actionable Insight: Key rotation should be treated as a routine operational task rather than an emergency procedure. Implement automated rotation with sufficient lead time to detect and resolve any issues before keys expire.

What Are the Most Effective CI/CD Integration Strategies?

Integrating npm package signature verification into CI/CD pipelines transforms security from a post-deployment concern into an integral part of the development workflow. Effective integration requires careful consideration of pipeline architecture, performance implications, and failure handling mechanisms. The goal is to create seamless verification processes that don't impede development velocity while maintaining strong security guarantees.

Pipeline stage design determines where and how signature verification occurs. Consider implementing multiple verification checkpoints throughout your pipeline:

```yaml
# GitLab CI example with staged verification
stages:
  - fetch
  - verify
  - test
  - build
  - deploy

fetch_dependencies:
  stage: fetch
  script:
    - npm ci --prefer-offline
  artifacts:
    paths:
      - node_modules/

verify_signatures:
  stage: verify
  needs: ["fetch_dependencies"]
  script:
    - ./scripts/verify-npm-signatures.sh
  artifacts:
    when: always  # keep the report even when verification fails
    paths:
      - signature-report.json

# Jobs using `needs` only download artifacts from the jobs they list,
# so later jobs also list fetch_dependencies to receive node_modules/
test_application:
  stage: test
  needs: ["fetch_dependencies", "verify_signatures"]
  script:
    - npm test

build_artifact:
  stage: build
  needs: ["fetch_dependencies", "test_application"]
  script:
    - npm run build
  artifacts:
    paths:
      - dist/
```

Custom verification scripts provide flexibility for handling complex verification scenarios. Develop reusable scripts that can be integrated across different projects:

```bash
#!/bin/bash
# verify-npm-signatures.sh
set -euo pipefail

LOG_FILE="signature-verification.log"
REPORT_FILE="signature-report.json"
# Assumption: the registry serves a detached "<tarball>.sig" next to each
# tarball. registry.npmjs.org does not; point this at a registry that does.
REGISTRY_URL="${REGISTRY_URL:-https://registry.npmjs.org}"

log_message() {
  echo "[$(date '+%Y-%m-%d %H:%M:%S')] $1" | tee -a "$LOG_FILE"
}

verify_package_signature() {
  local package_name=$1 package_version=$2 package_file=$3
  local base_url="$REGISTRY_URL/$package_name/-/$package_file"

  log_message "Verifying signature for $package_name@$package_version"

  # Download the tarball and its detached signature
  if ! curl -sf "$base_url" -o "$package_file" ||
     ! curl -sf "$base_url.sig" -o "$package_file.sig"; then
    log_message "ERROR: Failed to download tarball or signature for $package_name"
    return 1
  fi

  # Verify signature
  if cosign verify-blob "$package_file" \
      --signature "$package_file.sig" \
      --key "keys/$package_name.pub" 2>/dev/null; then
    log_message "SUCCESS: Signature verified for $package_name@$package_version"
    return 0
  else
    log_message "FAILURE: Signature verification failed for $package_name@$package_version"
    return 1
  fi
}

# Main verification loop (--all includes transitive dependencies)
failed_verifications=0
total_packages=0

while IFS= read -r line; do
  total_packages=$((total_packages + 1))
  # Split on the last "@" so scoped names such as @scope/pkg stay intact
  package_name="${line%@*}"
  package_version="${line##*@}"
  # Registry tarball names drop the scope: @scope/pkg -> pkg-<version>.tgz
  package_file="${package_name##*/}-${package_version}.tgz"

  if ! verify_package_signature "$package_name" "$package_version" "$package_file"; then
    failed_verifications=$((failed_verifications + 1))
  fi
done < <(npm ls --all --json | jq -r '[.. | objects | .dependencies? // empty | to_entries[] | select(.value.version) | "\(.key)@\(.value.version)"] | unique[]')

# Generate report
jq -n \
  --arg timestamp "$(date -u +%Y-%m-%dT%H:%M:%SZ)" \
  --argjson total "$total_packages" \
  --argjson failed "$failed_verifications" \
  '{
    timestamp: $timestamp,
    total_packages: $total,
    verified_packages: ($total - $failed),
    failed_verifications: $failed,
    status: (if $failed == 0 then "success" else "failure" end)
  }' > "$REPORT_FILE"

# Exit with appropriate code
if [ "$failed_verifications" -gt 0 ]; then
  log_message "FAILED: $failed_verifications out of $total_packages packages failed signature verification"
  exit 1
else
  log_message "SUCCESS: All $total_packages packages passed signature verification"
  exit 0
fi
```

Performance optimization becomes critical when dealing with large dependency trees. Implement caching and parallel processing strategies:

```javascript
// Parallel signature verification utility
const os = require('os');
const { Worker, isMainThread, parentPort, workerData } = require('worker_threads');
const { execFileSync } = require('child_process');

function verifyPackageParallel(packages) {
  return new Promise((resolve, reject) => {
    if (packages.length === 0) return resolve([]);
    const numWorkers = Math.min(os.cpus().length, packages.length);
    let completed = 0;
    const results = [];

    for (let i = 0; i < numWorkers; i++) {
      // Round-robin split: worker i receives every numWorkers-th package
      const worker = new Worker(__filename, {
        workerData: {
          packages: packages.filter((_, index) => index % numWorkers === i),
          workerId: i,
        },
      });
      worker.on('message', (result) => {
        results.push(...result);
        completed++;
        if (completed === numWorkers) resolve(results);
      });
      worker.on('error', reject);
    }
  });
}

if (!isMainThread) {
  const { packages, workerId } = workerData;
  const results = [];

  for (const pkg of packages) {
    try {
      // Assumes each entry carries { name, tarball, signature, publicKey } paths;
      // `cosign verify` is for container images, so blobs use `verify-blob`
      execFileSync('cosign', ['verify-blob', pkg.tarball,
        '--signature', pkg.signature, '--key', pkg.publicKey], { stdio: 'pipe' });
      results.push({ package: pkg.name, status: 'verified', worker: workerId });
    } catch (error) {
      results.push({ package: pkg.name, status: 'failed', error: error.message, worker: workerId });
    }
  }

  parentPort.postMessage(results);
}

module.exports = { verifyPackageParallel };
```
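Because the expensive work happens inside the cosign child processes rather than in JavaScript, worker threads are not strictly required; an async pool that caps how many child processes run at once is a lighter alternative. A minimal sketch: `mapWithConcurrency` is a hypothetical helper, and the commented usage assumes the same `{ tarball, signature, publicKey }` fields as the worker example.

```javascript
// Runs `task` over `items` with at most `limit` tasks in flight and returns
// results in input order. Each item's error is captured rather than thrown,
// so one failed verification does not abort the others.
async function mapWithConcurrency(items, limit, task) {
  const results = new Array(items.length);
  let next = 0;

  async function runner() {
    // JavaScript is single-threaded, so claiming `next` between awaits is safe
    while (next < items.length) {
      const index = next++;
      try {
        results[index] = { ok: true, value: await task(items[index]) };
      } catch (error) {
        results[index] = { ok: false, error: error.message };
      }
    }
  }

  const runnerCount = Math.max(1, Math.min(limit, items.length));
  await Promise.all(Array.from({ length: runnerCount }, () => runner()));
  return results;
}

// Usage sketch with cosign (not executed here):
// const { execFile } = require('child_process');
// const execFileAsync = require('util').promisify(execFile);
// const report = await mapWithConcurrency(pkgs, 8, (pkg) => execFileAsync('cosign',
//   ['verify-blob', pkg.tarball, '--signature', pkg.signature, '--key', pkg.publicKey]));
```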

Failure handling and rollback mechanisms ensure that verification failures don't break the entire pipeline while still preventing insecure deployments:

```groovy
// Jenkins pipeline with graceful failure handling
pipeline {
  agent any
  stages {
    stage('Verify Dependencies') {
      steps {
        script {
          try {
            sh './scripts/verify-dependencies.sh'
            currentBuild.result = 'SUCCESS'
          } catch (err) {
            // Named `err` so it does not shadow the `error` pipeline step used below
            def failureCount = sh(
              script: 'jq .failed_verifications signature-report.json',
              returnStdout: true
            ).trim()

            if (failureCount.toInteger() > 5) {
              // Critical failure - stop pipeline
              currentBuild.result = 'FAILURE'
              error("Too many signature verification failures: ${failureCount}")
            } else {
              // Non-critical - warn but continue
              echo "Warning: ${failureCount} packages failed verification"
              currentBuild.result = 'UNSTABLE'
            }
          }
        }
      }
      post {
        always {
          archiveArtifacts artifacts: 'signature-report.json', fingerprint: true
          publishHTML([allowMissing: false, alwaysLinkToLastBuild: true, keepAll: true,
                       reportDir: 'reports', reportFiles: 'signature-report.html',
                       reportName: 'Signature Verification Report'])
        }
      }
    }
  }
}
```

Environment-specific configurations allow for different verification policies in development, staging, and production environments:

```json
{
  "environments": {
    "development": {
      "strict_verification": false,
      "allowed_failures": 10,
      "warning_only": true
    },
    "staging": {
      "strict_verification": true,
      "allowed_failures": 0,
      "warning_only": false
    },
    "production": {
      "strict_verification": true,
      "allowed_failures": 0,
      "warning_only": false,
      "additional_checks": ["vulnerability_scan", "license_compliance"]
    }
  }
}
```
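A pipeline step can combine that configuration with the report written by the verification script. A minimal sketch: `decideOutcome` is hypothetical, and it assumes `warning_only` environments never block while other environments block once failures exceed `allowed_failures`; `strict_verification` is carried along but not interpreted here.

```javascript
// Per-environment policies from the configuration above.
const verificationPolicies = {
  development: { strict_verification: false, allowed_failures: 10, warning_only: true },
  staging: { strict_verification: true, allowed_failures: 0, warning_only: false },
  production: {
    strict_verification: true,
    allowed_failures: 0,
    warning_only: false,
    additional_checks: ['vulnerability_scan', 'license_compliance'],
  },
};

// Maps a signature report onto a pipeline outcome plus any extra checks.
function decideOutcome(environment, report) {
  const policy = verificationPolicies[environment];
  if (!policy) throw new Error(`Unknown environment: ${environment}`);

  const failed = report.failed_verifications;
  let outcome = 'pass';
  if (failed > 0) {
    outcome = !policy.warning_only && failed > policy.allowed_failures ? 'fail' : 'warn';
  }
  return { outcome, requiredChecks: policy.additional_checks || [] };
}

// Usage
console.log(decideOutcome('staging', { failed_verifications: 1 }).outcome); // fail
console.log(decideOutcome('development', { failed_verifications: 25 }).outcome); // warn
```

This mirrors the advice that follows: early environments surface problems without blocking, while staging and production reject any unverified package.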

Actionable Insight: CI/CD integration should balance security requirements with development velocity. Start with non-blocking verification in early pipeline stages, then progressively enforce stricter policies as you move toward production deployment.

What Common Pitfalls Should You Avoid in Implementation?

Despite the availability of robust tools and frameworks for npm package signature verification, organizations frequently encounter implementation pitfalls that undermine their security efforts. Understanding these common mistakes allows teams to proactively avoid them and build more resilient verification systems.

One of the most prevalent issues involves inadequate key management practices. Teams often store private keys in plain text within repositories or configuration files, creating immediate security vulnerabilities. Proper key storage requires dedicated secrets management solutions:

```bash
# ❌ BAD: Storing keys in plain text
PRIVATE_KEY="-----BEGIN PRIVATE KEY-----\nMIIEvQIBADANBgkqhkiG9w0BAQEFAASCBKcwggSjAgEAAoIBAQ...\n-----END PRIVATE KEY-----"

# ✅ GOOD: Using secrets management
PRIVATE_KEY_PATH="/run/secrets/npm-signing-key"

# Even better: Using cloud provider secrets
AWS_SECRET_ID="npm/signing/private-key"
PRIVATE_KEY=$(aws secretsmanager get-secret-value --secret-id "$AWS_SECRET_ID" --query SecretString --output text)
```

Another frequent mistake involves insufficient signature verification coverage. Teams may only verify top-level dependencies while neglecting transitive dependencies, leaving significant attack surfaces exposed:

```javascript
// ❌ BAD: Only checking direct dependencies
const packageJson = require('./package.json');
for (const dep in packageJson.dependencies) {
  verifySignature(dep);
}

// ✅ GOOD: Checking all dependencies including transitive ones
const { execSync } = require('child_process');

function getAllDependencies() {
  let output;
  try {
    output = execSync('npm ls --json --depth=Infinity', { encoding: 'utf8' });
  } catch (error) {
    // npm ls exits non-zero on tree problems but still prints the JSON tree
    output = error.stdout;
  }
  const tree = JSON.parse(output);
  const allDeps = new Set();

  function traverse(node) {
    if (!node.dependencies) return;
    for (const [name, child] of Object.entries(node.dependencies)) {
      // Track name@version: the same package can appear at several versions
      allDeps.add(`${name}@${child.version}`);
      traverse(child);
    }
  }

  traverse(tree);
  return Array.from(allDeps);
}

getAllDependencies().forEach(verifySignature);
```

Performance considerations often catch teams off guard when scaling signature verification to large dependency trees. Naive implementations can significantly slow down CI/CD pipelines:

| Approach | Time for 500 Dependencies | Resource Usage |
|---|---|---|
| Sequential verification | ~15 minutes | High CPU spikes |
| Parallel verification (8 threads) | ~2 minutes | Balanced load |
| Cached verification | ~30 seconds | Minimal overhead |
| Selective verification | ~1 minute | Low resource usage |

Cache invalidation strategies present another challenge. Teams may cache verification results too aggressively, missing updates to package signatures:

```javascript
// ❌ BAD: Overly aggressive caching
const CACHE_DURATION = 24 * 60 * 60 * 1000; // 24 hours

// ✅ GOOD: Smart caching with version awareness
function shouldVerifyCached(pkgName, pkgVersion, lastVerified) {
  const now = Date.now();

  // Force re-verification for critical security updates
  if (isCriticalPackage(pkgName)) {
    return true;
  }

  // Check if package version changed
  const currentVersion = getCurrentPackageVersion(pkgName);
  if (currentVersion !== pkgVersion) {
    return true;
  }

  // Standard cache duration
  return (now - lastVerified) > (6 * 60 * 60 * 1000); // 6 hours
}
```
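On registries or mirrors that allow a tarball to be replaced without a version bump, comparing versions alone can serve a stale result. Including the lockfile's `integrity` string in the cache key closes that gap, because changed bytes produce a different key. A minimal in-memory sketch: `VerificationResultCache` is a hypothetical class, and the six-hour default TTL simply mirrors the function above.

```javascript
// Caches verification results keyed by name, version AND tarball integrity.
class VerificationResultCache {
  constructor(ttlMs = 6 * 60 * 60 * 1000) {
    this.ttlMs = ttlMs;
    this.entries = new Map();
  }

  static key(name, version, integrity) {
    return `${name}@${version}#${integrity}`;
  }

  get(name, version, integrity, now = Date.now()) {
    const k = VerificationResultCache.key(name, version, integrity);
    const entry = this.entries.get(k);
    if (!entry) return undefined;
    if (now - entry.verifiedAt > this.ttlMs) {
      this.entries.delete(k); // expired: force re-verification
      return undefined;
    }
    return entry.result;
  }

  set(name, version, integrity, result, now = Date.now()) {
    const k = VerificationResultCache.key(name, version, integrity);
    this.entries.set(k, { result, verifiedAt: now });
  }
}

// Usage
const resultCache = new VerificationResultCache();
resultCache.set('example-lib', '1.2.3', 'sha512-abc', { status: 'verified' });
```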

Trust model misconfigurations can lead to either overly restrictive or dangerously permissive verification policies. Striking the right balance requires careful consideration:

```yaml
# ❌ BAD: Overly permissive trust model
trust:
  any_valid_signature: true
  ignore_expired_certs: true
  skip_revocation_check: true
---
# ✅ GOOD: Balanced trust model
trust:
  require_valid_signature: true
  check_certificate_expiration: true
  perform_revocation_check: true
  trusted_issuers:
    - "https://fulcio.sigstore.dev"
    - "https://your-company-ca.com"
  trusted_subjects:
    - "@yourcompany.com"
    - "[email protected]"
```
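One subtlety with `check_certificate_expiration` under keyless signing: Fulcio certificates are valid for only about ten minutes, so checking them against the current time would reject nearly every signature. Sigstore verifiers instead check that the signing time, taken from the Rekor transparency log entry, falls inside the certificate's validity window. A minimal sketch of that check together with the issuer and subject rules above, treating subjects that begin with `@` as domain suffixes and others as exact addresses; `checkSigningCertificate` and its input shapes are hypothetical, and revocation checking is out of scope.

```javascript
// cert = { issuer, subject, notBefore, notAfter } (Dates are Date objects);
// signedAt = the transparency-log timestamp of the signature.
function checkSigningCertificate(trust, cert, signedAt) {
  const problems = [];

  if (!trust.trusted_issuers.includes(cert.issuer)) {
    problems.push(`untrusted issuer ${cert.issuer}`);
  }

  // "@domain" rules match any address at exactly that domain; others match exactly.
  const subjectOk = trust.trusted_subjects.some((rule) =>
    rule.startsWith('@') ? cert.subject.endsWith(rule) : cert.subject === rule
  );
  if (!subjectOk) problems.push(`untrusted subject ${cert.subject}`);

  // Expiration is judged at signing time, not at verification time.
  if (trust.check_certificate_expiration &&
      (signedAt < cert.notBefore || signedAt > cert.notAfter)) {
    problems.push('signature made outside certificate validity window');
  }

  return { ok: problems.length === 0, problems };
}

// Usage
const trust = {
  check_certificate_expiration: true,
  trusted_issuers: ['https://fulcio.sigstore.dev'],
  trusted_subjects: ['@yourcompany.com'],
};
const cert = {
  issuer: 'https://fulcio.sigstore.dev',
  subject: 'dev@yourcompany.com',
  notBefore: new Date('2026-03-19T10:00:00Z'),
  notAfter: new Date('2026-03-19T10:10:00Z'),
};
// Long expired by now, but valid when the signature was logged
console.log(checkSigningCertificate(trust, cert, new Date('2026-03-19T10:05:00Z')).ok); // true
```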

Error handling and logging deficiencies make troubleshooting difficult when verification fails. Proper error handling should provide actionable diagnostic information:

```javascript
// ❌ BAD: Poor error handling
try {
  verifySignature(pkg);
} catch (error) {
  console.error('Verification failed');
}

// ✅ GOOD: Detailed error handling
try {
  const result = verifySignature(pkg);
  logger.info({
    event: 'signature_verification_success',
    package: pkg.name,
    version: pkg.version,
    signature_type: result.signatureType,
    verification_time: result.durationMs,
  });
} catch (error) {
  logger.error({
    event: 'signature_verification_failure',
    package: pkg.name,
    version: pkg.version,
    error_code: error.code,
    error_message: error.message,
    stack_trace: error.stack,
    remediation_steps: [
      'Check package signature availability',
      'Verify network connectivity to signature server',
      'Confirm trusted certificate authorities',
    ],
  });

  // Fail appropriately based on error type
  // (SIG_COMPROMISED and SecurityError are application-defined, not built-ins)
  if (error.code === 'SIG_COMPROMISED') {
    throw new SecurityError(`Critical security violation in ${pkg.name}`);
  }
}
```

Actionable Insight: Regular security audits and peer reviews of your signature verification implementation can uncover hidden vulnerabilities and architectural weaknesses that automated testing might miss.

How Do You Optimize Performance for Large-Scale Deployments?

Scaling npm package signature verification to enterprise-level deployments introduces unique performance challenges that require sophisticated optimization strategies. Large organizations with thousands of microservices and extensive dependency graphs can experience significant overhead from naive verification approaches. Optimizing performance while maintaining security requires a multi-layered approach combining caching, selective verification, and intelligent resource allocation.

Dependency graph analysis enables selective verification strategies that focus computational resources on high-risk packages. Not all dependencies require equal scrutiny:

```javascript
// Dependency risk scoring system
class DependencyRiskAnalyzer {
  constructor() {
    // Weights sum to 1.0
    this.riskFactors = {
      popularity: 0.2,        // Based on download counts
      age: 0.15,              // Older packages are generally safer
      maintainer_count: 0.25, // More maintainers = better oversight
      recent_activity: 0.2,   // Recent commits indicate active maintenance
      security_audits: 0.2,   // Previous audit results
    };
  }

  calculateRiskScore(dependency) {
    const DAY_MS = 1000 * 60 * 60 * 24;
    let score = 0;

    // Popularity factor (lower download count = higher risk)
    const popularityScore = Math.max(0, 1 - dependency.downloads_last_month / 1000000);
    score += popularityScore * this.riskFactors.popularity;

    // Age factor (newer packages score higher risk, tapering to zero after a year)
    const ageInDays = (Date.now() - new Date(dependency.first_published)) / DAY_MS;
    const ageScore = 1 - Math.min(1, ageInDays / 365);
    score += ageScore * this.riskFactors.age;

    // Maintainer count factor
    const maintainerScore = dependency.maintainers.length < 3 ? 1 : 0;
    score += maintainerScore * this.riskFactors.maintainer_count;

    // Recent activity factor (no commits for 180+ days = higher risk)
    const daysSinceLastCommit = (Date.now() - new Date(dependency.last_commit)) / DAY_MS;
    const activityScore = daysSinceLastCommit > 180 ? 1 : 0;
    score += activityScore * this.riskFactors.recent_activity;

    // Security audit factor
    const auditScore = dependency.has_security_audit ? 0 : 0.5;
    score += auditScore * this.riskFactors.security_audits;

    return Math.min(1, score);
  }

  shouldVerifyThoroughly(dependency) {
    return this.calculateRiskScore(dependency) > 0.6;
  }
}

// Usage (getProjectDependencies, verifySignatureComprehensive and
// verifySignatureLightweight are placeholders for your own implementations)
async function main() {
  const analyzer = new DependencyRiskAnalyzer();
  const dependencies = await getProjectDependencies();

  const highRiskDeps = dependencies.filter((dep) => analyzer.shouldVerifyThoroughly(dep));
  const lowRiskDeps = dependencies.filter((dep) => !analyzer.shouldVerifyThoroughly(dep));

  // Perform thorough verification only on high-risk dependencies
  await Promise.all(highRiskDeps.map(verifySignatureComprehensive));
  // Perform lightweight verification on low-risk dependencies
  await Promise.all(lowRiskDeps.map(verifySignatureLightweight));
}
```

Caching strategies dramatically reduce verification overhead by avoiding redundant signature checks. Implement intelligent caching with proper invalidation:

```javascript
// Distributed signature verification cache.
// Assumes an ioredis-compatible client (get, setex, del, incr, scan, info).
class SignatureVerificationCache {
  constructor(redisClient, defaultTTL = 3600) {
    this.redis = redisClient;
    this.defaultTTL = defaultTTL; // seconds
  }

  cacheKey(packageName, packageVersion, signatureHash) {
    return `sig:${packageName}:${packageVersion}:${signatureHash}`;
  }

  async getCachedResult(packageName, packageVersion, signatureHash) {
    const cacheKey = this.cacheKey(packageName, packageVersion, signatureHash);
    const cached = await this.redis.get(cacheKey);

    if (cached) {
      try {
        const entry = JSON.parse(cached);
        await this.redis.incr('cache:hits');
        return entry;
      } catch (error) {
        // Invalid cache entry: remove it and treat the lookup as a miss
        await this.redis.del(cacheKey);
      }
    }

    await this.redis.incr('cache:misses');
    return null;
  }

  async setCachedResult(packageName, packageVersion, signatureHash, result, ttl) {
    const ttlSeconds = ttl || this.defaultTTL;
    const cacheEntry = {
      result,
      timestamp: Date.now(),
      expires: Date.now() + ttlSeconds * 1000, // TTL is in seconds, timestamps in ms
    };

    await this.redis.setex(
      this.cacheKey(packageName, packageVersion, signatureHash),
      ttlSeconds,
      JSON.stringify(cacheEntry),
    );
  }

  async invalidatePackageCache(packageName) {
    // SCAN iterates incrementally; KEYS would block Redis on large keyspaces.
    const pattern = `sig:${packageName}:*`;
    let cursor = '0';
    do {
      const [nextCursor, keys] = await this.redis.scan(cursor, 'MATCH', pattern, 'COUNT', 100);
      if (keys.length > 0) {
        await this.redis.del(...keys);
      }
      cursor = nextCursor;
    } while (cursor !== '0');
  }

  async getCacheStats() {
    const info = await this.redis.info('memory');
    const match = info.match(/used_memory_human:(\S+)/);
    // Redis returns counters as strings, so parse them before doing arithmetic.
    const hits = parseInt(await this.redis.get('cache:hits'), 10) || 0;
    const misses = parseInt(await this.redis.get('cache:misses'), 10) || 0;
    const total = hits + misses;

    return {
      memory_usage: match ? match[1] : 'unknown',
      hit_rate: total === 0 ? 0 : hits / total,
      total_requests: total,
    };
  }
}
```
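Where a shared Redis instance is not available, for example on a single CI runner, the same key scheme can back an in-process cache. The sketch below assumes results only need to live as long as the process; `InMemoryVerificationCache` is a hypothetical class, and its clock is injectable so expiry can be tested without waiting.

```javascript
// Hypothetical in-process counterpart to the Redis-backed cache.
// Keys include the signature hash, so a new signature for the same version
// never reuses a stale verification result.
class InMemoryVerificationCache {
  constructor({ ttlSeconds = 3600, now = () => Date.now() } = {}) {
    this.ttlMs = ttlSeconds * 1000;
    this.now = now; // injectable clock
    this.entries = new Map();
    this.hits = 0;
    this.misses = 0;
  }

  key(name, version, signatureHash) {
    return `sig:${name}:${version}:${signatureHash}`;
  }

  get(name, version, signatureHash) {
    const k = this.key(name, version, signatureHash);
    const entry = this.entries.get(k);
    if (!entry || entry.expires <= this.now()) {
      this.entries.delete(k); // drop expired entries lazily
      this.misses++;
      return null;
    }
    this.hits++;
    return entry.result;
  }

  set(name, version, signatureHash, result) {
    this.entries.set(this.key(name, version, signatureHash), {
      result,
      expires: this.now() + this.ttlMs,
    });
  }

  // Remove every cached version of one package (e.g. after a key rotation).
  invalidatePackage(name) {
    const prefix = `sig:${name}:`;
    for (const k of this.entries.keys()) {
      if (k.startsWith(prefix)) this.entries.delete(k);
    }
  }

  hitRate() {
    const total = this.hits + this.misses;
    return total === 0 ? 0 : this.hits / total;
  }
}
```

The trailing colon in the invalidation prefix matters: it keeps `invalidatePackage("lodash")` from also clearing `lodash-es`.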

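Run the verifier as a horizontally scaled service so that compute capacity tracks verification load instead of sitting idle or saturating. The manifests below define a Deployment and a HorizontalPodAutoscaler that scales it between 2 and 20 replicas based on CPU and memory utilization: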

```yaml
# Kubernetes deployment with autoscaling
apiVersion: apps/v1
kind: Deployment
metadata:
  name: signature-verifier
spec:
  replicas: 3
  selector:
    matchLabels:
      app: signature-verifier
  template:
    metadata:
      labels:
        app: signature-verifier
    spec:
      containers:
        - name: verifier
          image: your-company/signature-verifier:latest
          resources:
            requests:
              memory: "512Mi"
              cpu: "250m"
            limits:
              memory: "1Gi"
              cpu: "500m"
          env:
            - name: VERIFICATION_CONCURRENCY
              value: "8"
            - name: CACHE_ENABLED
              value: "true"
---
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: signature-verifier-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: signature-verifier
  minReplicas: 2
  maxReplicas: 20
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
    - type: Resource
      resource:
        name: memory
        target:
          type: Utilization
          averageUtilization: 80
```
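To reason about how this autoscaler reacts to load, it helps to see the rule it applies. The Kubernetes documentation describes the core calculation as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), skipped when the ratio is within a tolerance (0.1 by default); when several metrics are configured, the largest proposal wins. The sketch below is simplified: it ignores stabilization windows, scaling policies, and pod readiness, and `desiredReplicas` is a hypothetical helper, not a Kubernetes API.

```javascript
// Simplified model of the HPA replica calculation.
// metrics: [{ current, target }] as average utilization percentages.
function desiredReplicas(currentReplicas, metrics, { minReplicas, maxReplicas, tolerance = 0.1 }) {
  const proposals = metrics.map(({ current, target }) => {
    const ratio = current / target;
    // Close enough to the target: keep the current replica count.
    if (Math.abs(ratio - 1) <= tolerance) return currentReplicas;
    return Math.ceil(currentReplicas * ratio);
  });
  // The metric asking for the most replicas wins, then clamp to the bounds.
  const desired = Math.max(...proposals);
  return Math.min(maxReplicas, Math.max(minReplicas, desired));
}
```

With the HPA above, three replicas averaging 140% CPU utilization (relative to the 250m request) against the 70% target propose ceil(3 × 140 / 70) = 6 replicas, while 60% memory utilization against the 80% target proposes 3, so the model picks 6.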

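When verifying hundreds of packages at once, processing them in bounded batches keeps memory use, open connections, and registry request rates under control, and a per-package timeout stops one hung request from stalling the whole run: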

```javascript
// Batch signature verification processor
class BatchSignatureVerifier {
  constructor(options = {}) {
    this.batchSize = options.batchSize || 50;
    this.concurrentBatches = options.concurrentBatches || 4;
    this.timeout = options.timeout || 30000; // milliseconds
  }

  async verifyBatch(packages) {
    // Split the package list into batches of `batchSize`
    const batches = [];
    for (let i = 0; i < packages.length; i += this.batchSize) {
      batches.push(packages.slice(i, i + this.batchSize));
    }

    // Run up to `concurrentBatches` batches at a time to avoid overwhelming the system
    const results = [];
    for (let i = 0; i < batches.length; i += this.concurrentBatches) {
      const group = batches.slice(i, i + this.concurrentBatches);
      const groupResults = await Promise.all(group.map((batch) => this.processBatch(batch)));
      results.push(...groupResults.flat());
    }
    return results;
  }

  async processBatch(batch) {
    // allSettled lets a failed package report an error without aborting its batch
    const settled = await Promise.allSettled(batch.map((pkg) => this.verifySinglePackage(pkg)));
    return settled.map((outcome, index) =>
      outcome.status === 'fulfilled'
        ? { package: batch[index].name, status: 'success', result: outcome.value }
        : { package: batch[index].name, status: 'failed', error: outcome.reason?.message ?? String(outcome.reason) },
    );
  }

  async verifySinglePackage(pkg) {
    // Reject if verification exceeds `this.timeout`, and clear the timer
    // afterwards so it does not keep the process alive.
    let timer;
    const timeout = new Promise((_, reject) => {
      timer = setTimeout(
        () => reject(new Error(`Verification timeout for ${pkg.name}`)),
        this.timeout,
      );
    });
    try {
      return await Promise.race([this.performVerification(pkg), timeout]);
    } finally {
      clearTimeout(timer);
    }
  }

  async performVerification(pkg) {
    // fetchSignature and cosign.verify are placeholders for your own
    // verification logic (for example, a wrapper around the cosign CLI).
    const signature = await fetchSignature(pkg);
    const verificationResult = await cosign.verify(pkg.url, signature);
    return verificationResult;
  }
}

// Usage
const verifier = new BatchSignatureVerifier({ batchSize: 100, concurrentBatches: 8 });
const results = await verifier.verifyBatch(largeDependencyList);
```
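Fixed batches wait for their slowest package before the next batch can start, so a single slow registry response leaves the other slots idle. A worker pool instead keeps a constant number of verifications in flight. This is a minimal sketch: `mapWithConcurrency` is a hypothetical helper, and `worker` can be any async verification function, such as `verifySinglePackage` above.

```javascript
// Hypothetical bounded-concurrency pool. A slot is refilled as soon as any
// verification finishes; results keep the order of the input list and use
// the same { status, value | reason } shape as Promise.allSettled.
async function mapWithConcurrency(items, limit, worker) {
  const results = new Array(items.length);
  let next = 0;

  async function runSlot() {
    // Each slot claims the next unprocessed index until the list is exhausted.
    while (next < items.length) {
      const index = next++;
      try {
        results[index] = { status: 'fulfilled', value: await worker(items[index]) };
      } catch (error) {
        results[index] = { status: 'rejected', reason: error };
      }
    }
  }

  const slots = Math.max(1, Math.min(limit, items.length));
  await Promise.all(Array.from({ length: slots }, () => runSlot()));
  return results;
}
```

Usage might look like `await mapWithConcurrency(largeDependencyList, 8, (pkg) => verifier.verifySinglePackage(pkg))`. Because `next++` runs synchronously before each `await`, two slots never claim the same package.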

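Recording how long each verification takes shows where time is spent and whether an optimization actually helped. The following example uses the User Timing API (`performance.measure` and `PerformanceObserver`) to feed verification durations into your metrics pipeline: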

```javascript
// Performance monitoring for signature verification.
// `metrics`, `logger`, and `verifySignature` stand in for your own clients and verifier.
const { performance, PerformanceObserver } = require('node:perf_hooks');

const SLOW_VERIFICATION_MS = 5000; // 5-second threshold

const performanceObserver = new PerformanceObserver((items) => {
  items.getEntries().forEach((entry) => {
    if (!entry.name.startsWith('signature-verification')) return;

    // Entry names look like "signature-verification:<package>:<outcome>"
    const [, packageName, outcome] = entry.name.split(':');
    metrics.histogram('signature_verification_duration', entry.duration, {
      package: packageName,
      success: outcome === 'success',
    });

    if (entry.duration > SLOW_VERIFICATION_MS) {
      logger.warn('Slow signature verification detected', {
        package: packageName,
        duration: entry.duration,
      });
    }
  });
});

performanceObserver.observe({ entryTypes: ['measure'] });

// Measure verification performance
async function measureVerification(pkg) {
  const startTime = performance.now();
  let outcome = 'failed';

  try {
    const result = await verifySignature(pkg);
    outcome = 'success';
    return result;
  } finally {
    // Record a measure for both outcomes (numeric start/end require the
    // options-object form), then clear it so the timeline buffer stays small.
    const name = `signature-verification:${pkg.name}:${outcome}`;
    performance.measure(name, { start: startTime, end: performance.now() });
    performance.clearMeasures(name);
  }
}
```
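A fixed 5-second threshold is a reasonable starting point, but alert thresholds derived from observed percentiles adapt to your registry's real latency. The sketch below computes nearest-rank percentiles over an array of collected durations; `percentile` and `summarize` are hypothetical helpers, and in production a metrics backend's histogram usually does this for you.

```javascript
// Nearest-rank percentile: the smallest sample such that at least p% of all
// samples are less than or equal to it.
function percentile(durationsMs, p) {
  if (durationsMs.length === 0) throw new RangeError('no samples');
  if (p <= 0 || p > 100) throw new RangeError('p must be in (0, 100]');
  const sorted = [...durationsMs].sort((a, b) => a - b); // copy, then numeric sort
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[rank - 1];
}

// Summary suitable for a dashboard or a CI job log.
function summarize(durationsMs) {
  return {
    p50: percentile(durationsMs, 50),
    p95: percentile(durationsMs, 95),
    max: Math.max(...durationsMs),
  };
}
```

Alerting when p95 exceeds a budget, rather than on individual slow runs, filters out one-off network hiccups while still catching sustained regressions.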

Actionable Insight: Measure before and after every optimization. Collect per-package verification durations, cache hit rates, and autoscaler activity continuously so you can locate bottlenecks and confirm that each change actually improved performance.

Key Takeaways

• Sigstore cosign integration provides a robust foundation for npm package signature verification without requiring traditional PKI infrastructure
• Internal signing policies must clearly define roles, establish trust relationships, and implement automated policy enforcement
• Key rotation and certificate management require systematic approaches, including automated rotation, backup procedures, and monitoring
• CI/CD integration strategies should balance security requirements with development velocity through staged verification and environment-specific policies
• Common implementation pitfalls include poor key management, inadequate coverage, performance issues, and trust model misconfigurations
• Large-scale performance optimization demands selective verification, intelligent caching, and resource allocation strategies
• Continuous monitoring and observability are essential for identifying bottlenecks and ensuring optimal verification performance

Frequently Asked Questions

Q: How often should I rotate my npm package signing keys?

For most organizations, rotating signing keys every 90-180 days provides an appropriate balance between security and operational overhead. However, critical infrastructure components may require more frequent rotation (every 30-60 days), while less sensitive applications can extend rotation intervals to 12 months. The key is establishing automated rotation processes that minimize human intervention and ensure consistent execution.
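The intervals above can be enforced automatically, for example by a scheduled CI job that flags keys nearing their rotation date. In this minimal sketch the tier names and the `MAX_KEY_AGE_DAYS` values (the upper ends of the ranges above) are hypothetical policy choices, not npm or Sigstore settings.

```javascript
// Hypothetical rotation policy: maximum key age in days per sensitivity tier.
const MAX_KEY_AGE_DAYS = { critical: 60, standard: 180, low: 365 };
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Days left before rotation is due (zero or negative means due or overdue).
// Rounded down, so a partial day counts against the key.
function daysUntilRotation(createdAt, tier, now = new Date()) {
  const maxAge = MAX_KEY_AGE_DAYS[tier];
  if (maxAge === undefined) throw new Error(`unknown tier: ${tier}`);
  const ageDays = (now.getTime() - createdAt.getTime()) / MS_PER_DAY;
  return Math.floor(maxAge - ageDays);
}

function isRotationDue(createdAt, tier, now = new Date()) {
  return daysUntilRotation(createdAt, tier, now) <= 0;
}
```

A CI job could call `daysUntilRotation` for every active key and open a ticket once the result drops below, say, 14 days, leaving time for a planned rotation rather than an emergency one.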

Q: Can I verify npm package signatures without internet access?

Yes, offline verification is possible by pre-downloading signature files and maintaining local copies of trusted public keys. However, this approach requires careful management of key updates and signature refreshes. For air-gapped environments, consider implementing internal signature repositories that mirror external signature sources, allowing periodic synchronization when connectivity is available.
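For key-based signatures, the check itself needs no network access once the tarball, signature, and public key are on disk. The sketch below uses Node's built-in `crypto.verify` and assumes an ECDSA P-256 public key in PEM form and a base64-encoded DER signature over the raw tarball bytes; confirm that this matches what your signing step actually emits. `verifyOffline` and `verifyTarballFromDisk` are hypothetical names. Keyless Sigstore verification additionally requires the certificate chain and transparency-log proof, which this sketch does not check.

```javascript
const crypto = require('node:crypto');
const fs = require('node:fs');

// Verifies a detached signature entirely offline.
function verifyOffline(artifactBytes, signatureBase64, publicKeyPem) {
  const publicKey = crypto.createPublicKey(publicKeyPem);
  const signature = Buffer.from(signatureBase64.trim(), 'base64');
  // crypto.verify hashes the data with SHA-256 and checks the DER-encoded
  // ECDSA signature against it; it returns false on a mismatch.
  return crypto.verify('sha256', artifactBytes, publicKey, signature);
}

// Convenience wrapper for files mirrored into an air-gapped environment.
function verifyTarballFromDisk(tarballPath, signaturePath, publicKeyPath) {
  return verifyOffline(
    fs.readFileSync(tarballPath),
    fs.readFileSync(signaturePath, 'utf8'),
    fs.readFileSync(publicKeyPath, 'utf8'),
  );
}
```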

Q: What happens if a package signature verification fails in my CI/CD pipeline?

The appropriate response depends on your security policy and environment. In development environments, failed verifications might generate warnings but allow the pipeline to continue. Production deployments should typically fail immediately upon signature verification failure. Implement graduated responses based on failure severity: critical security violations should halt pipelines, while minor issues might trigger alerts for manual review.
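A graduated policy like this is easiest to audit when it lives in one table rather than in scattered pipeline conditionals. In the sketch below, the environment names, severity levels, and actions are hypothetical examples; unknown combinations fall back to the strictest action so that a misconfigured environment fails closed.

```javascript
// Hypothetical policy table: environment -> severity -> pipeline action.
const POLICY = {
  development: { critical: 'warn', minor: 'warn' },
  staging: { critical: 'fail', minor: 'alert' },
  production: { critical: 'fail', minor: 'fail' },
};

// Unknown environments or severities fail closed.
function actionForFailure(environment, severity) {
  return POLICY[environment]?.[severity] ?? 'fail';
}

// Only 'fail' should break the build; 'warn' and 'alert' let it continue.
function exitCodeFor(action) {
  return action === 'fail' ? 1 : 0;
}
```

A CI step might then set `process.exitCode = exitCodeFor(actionForFailure(process.env.DEPLOY_ENV, severity))`, where `DEPLOY_ENV` is a hypothetical variable naming the target environment.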

Q: How do I handle signature verification for private npm registries?

Private registries require additional configuration to support signature storage and retrieval. You'll need to implement custom signature endpoints that can store signatures alongside packages, configure authentication mechanisms for signature access, and potentially establish trust relationships with your internal certificate authority. Many organizations use proxy solutions that intercept package requests and perform signature verification transparently.

Q: Is npm package signature verification compatible with existing security scanning tools?

Absolutely. Signature verification complements rather than replaces traditional security scanning tools like Snyk, Sonatype Nexus, or GitHub Dependabot. These tools typically focus on known vulnerabilities and license compliance, while signature verification ensures package authenticity and integrity. Integrate both approaches for comprehensive supply chain security, with signature verification serving as a first-line defense against package tampering.
