AI Steganography Detection: Uncovering Hidden Threats in ML Models

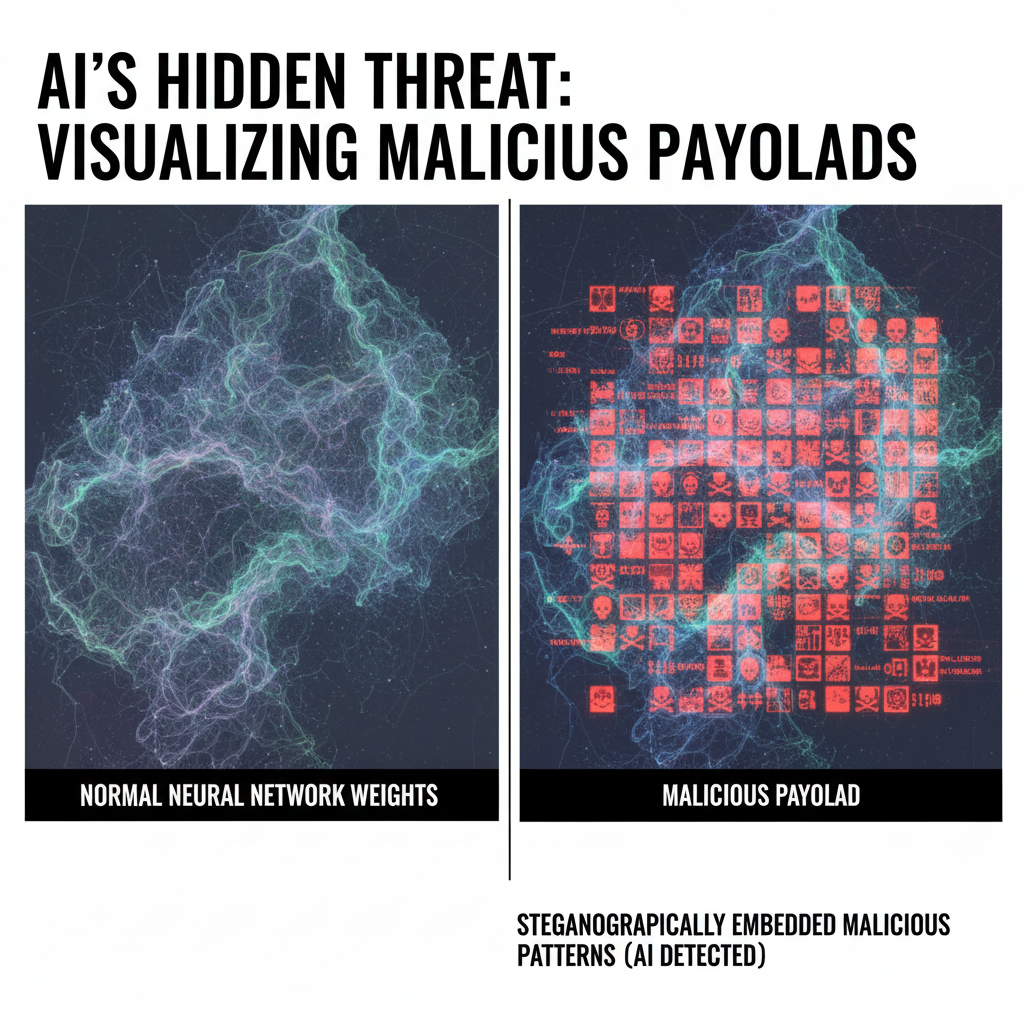

As artificial intelligence becomes more deeply integrated into digital infrastructure, a new class of cybersecurity threat has emerged. Traditional steganography hides payloads inside seemingly innocent files; attackers now apply the same idea to machine learning itself, embedding backdoors, malware, and malicious logic directly into neural network weights and training datasets, a practice researchers term "AI steganography." Security professionals need new methods to detect and mitigate these threats, because tools built for media files do not inspect model parameters.

Unlike conventional steganography that hides data in images, audio, or text files, AI steganography operates at the level of mathematical models and algorithmic processes. Compromised machine learning models can appear completely legitimate while harboring devastating backdoors that activate under specific conditions. These threats pose particular danger in supply chain scenarios where organizations unknowingly deploy infected models that later compromise entire systems. The implications extend far beyond academic curiosity, affecting critical infrastructure, financial services, healthcare systems, and national security applications.

Detecting these embedded threats requires approaches that go beyond traditional steganalysis. Tools designed to spot anomalies in file formats are inadequate when the adversary manipulates a model's parameters rather than a file's structure. Detection systems therefore combine statistical analysis of weight distributions, behavioral monitoring of deployed models, and learned detectors such as transformer architectures to identify subtle indicators of compromise.

Security researchers and ethical hackers now face the complex challenge of identifying malicious embeddings within neural networks without access to original training data or clear indicators of tampering. The sophistication of these attacks demands equally sophisticated defensive measures, including AI-powered analysis tools that can process vast amounts of model metadata and identify patterns invisible to human analysts. As we'll explore throughout this comprehensive guide, the battle between AI steganography and detection methods continues to evolve rapidly, requiring constant vigilance and innovation from the cybersecurity community.

What Makes AI-Based Steganography Different From Traditional Methods?

Traditional steganography focuses on concealing information within digital media files such as images, audio recordings, or documents. These methods typically involve manipulating least significant bits, exploiting compression artifacts, or utilizing redundant data spaces to embed hidden payloads. While effective for their intended purposes, these techniques operate within well-understood domains where established detection methodologies can often identify anomalies through statistical analysis of file characteristics.

AI-based steganography differs fundamentally by operating at the level of mathematical models and computational processes rather than static media files. Instead of hiding data within pixels or audio samples, attackers embed malicious logic directly into neural network architectures, weight matrices, and training procedures. This approach exploits the complexity and opacity of deep learning systems, so the hidden content is considerably harder to inspect than a payload concealed in a media file.

One of the most significant distinctions lies in the nature of the carrier medium. Traditional steganography relies on perceptual redundancy—the human inability to notice minor alterations in media files. AI steganography exploits computational redundancy and the mathematical properties of high-dimensional parameter spaces. Neural networks contain millions or billions of parameters, creating enormous search spaces where malicious embeddings can remain undetected by conventional analysis tools.

The embedding process itself varies dramatically between approaches. In traditional methods, data is typically encoded using predetermined algorithms that modify specific aspects of the carrier file. AI steganography involves training modifications, architectural manipulations, or adversarial perturbations that alter the fundamental behavior of machine learning models. These changes can be so subtle that they preserve the model's primary functionality while introducing secondary behaviors that activate under specific conditions.

Detection challenges also differ significantly. Traditional steganalysis employs statistical tests, visual inspection, and format analysis to identify anomalies. AI steganography detection requires examining model behavior, analyzing parameter distributions, monitoring inference patterns, and understanding the complex interactions between different components of neural networks. This complexity makes automated detection particularly challenging and necessitates the development of specialized AI tools designed specifically for this purpose.

Furthermore, the threat landscape varies considerably. Traditional steganography primarily serves covert communication purposes, allowing parties to exchange hidden messages. AI steganography enables active attacks including backdoor insertion, model poisoning, supply chain compromises, and persistent access mechanisms that can affect thousands or millions of users simultaneously. The scale and impact potential represent a fundamental escalation in cybersecurity risks.

How Do Attackers Embed Malicious Payloads in Neural Network Weights?

Embedding malicious payloads within neural network weights requires sophisticated techniques that balance concealment requirements with functional preservation. Attackers must ensure that their modifications do not significantly degrade the model's primary performance while maintaining the ability to trigger malicious behavior under specific conditions. Several distinct approaches have emerged, each exploiting different aspects of neural network architecture and training processes.

Weight manipulation is one of the most direct methods for embedding malicious payloads. Attackers can modify specific weight values to encode information or create trigger-responsive behaviors. For example, subtle adjustments to convolutional layer filters might leave the model's handling of normal inputs unchanged while causing one specific input pattern to activate hidden functionality. These modifications often change weights by small, carefully calculated amounts that stay within the ranges seen in normal operation.

```python
import numpy as np
import torch

# Example of subtle weight manipulation for embedding triggers
def embed_trigger_in_weights(model, trigger_pattern, target_class):
    # Access convolutional layers
    conv_layers = [module for module in model.modules()
                   if isinstance(module, torch.nn.Conv2d)]

    # Modify specific filter weights to encode trigger pattern
    target_layer = conv_layers[0]
    original_weights = target_layer.weight.data.clone()

    # Add a tiny perturbation (scaled by 1e-6) derived from the trigger pattern.
    # The pattern must have the same shape as the layer's weight tensor.
    # Note: target_class is not used in this simplified illustration.
    trigger_tensor = torch.as_tensor(
        trigger_pattern, dtype=original_weights.dtype, device=original_weights.device
    )
    modified_weights = original_weights + (trigger_tensor * 1e-6)

    # Apply modifications
    target_layer.weight.data = modified_weights
    return model

# Usage example (assumes the file stores a full pickled model, not a state_dict)
model = torch.load('legitimate_model.pth', weights_only=False)
first_conv = next(m for m in model.modules() if isinstance(m, torch.nn.Conv2d))
trigger = np.random.rand(*first_conv.weight.shape)  # Random trigger pattern
backdoored_model = embed_trigger_in_weights(model, trigger, target_class=5)
```

Architecture-level embedding involves modifying the structure of neural networks to accommodate hidden functionalities. This might include adding extra layers, connections, or pathways that remain dormant during normal operation but activate when specific conditions are met. Attackers can design these modifications to blend seamlessly with legitimate architectural choices, making them difficult to distinguish from intentional design decisions.

Training data poisoning represents another sophisticated approach where attackers introduce malicious samples during the training process. These poisoned samples contain embedded triggers that cause the model to learn specific associations between benign-looking inputs and desired malicious outputs. The resulting model appears completely legitimate while harboring built-in vulnerabilities that can be exploited post-deployment.

Adversarial embedding combines elements of both weight manipulation and input-space attacks. Attackers craft specific adversarial examples that, when processed by the model, reveal hidden functionalities or extract embedded information. These techniques often involve creating subtle perturbations that are imperceptible to users but cause the model to behave in predetermined ways.

```bash
# Example command for generating poisoned training data
python generate_poisoned_dataset.py \
  --source_dataset cifar10 \
  --poison_ratio 0.05 \
  --trigger_type watermark \
  --target_class 7 \
  --output_path poisoned_cifar10

# Training with poisoned data to embed backdoor
python train_model.py \
  --dataset poisoned_cifar10 \
  --architecture resnet18 \
  --epochs 100 \
  --save_model backdoored_resnet18.pth
```

Temporal embedding techniques involve encoding information in the timing or sequence of model operations. Attackers might introduce delays, modify processing orders, or create specific execution patterns that convey hidden information. These methods are particularly challenging to detect because they don't alter the model's mathematical properties but instead manipulate its operational characteristics.

Hybrid approaches combine multiple embedding strategies to maximize concealment while maintaining functionality. For instance, an attacker might simultaneously embed triggers in weights, poison training data, and modify architectural elements to create a robust hidden channel that survives various forms of analysis and mitigation attempts.

What Are the Most Effective Techniques for Detecting AI Steganography?

Detecting AI steganography requires sophisticated analytical approaches that can identify subtle anomalies in neural network behavior, parameter distributions, and operational patterns. Unlike traditional steganalysis which focuses on file format irregularities, AI steganography detection must examine the complex mathematical relationships within trained models and monitor their responses to various inputs.

Statistical analysis of weight distributions represents one of the most fundamental detection approaches. Legitimate neural networks typically exhibit characteristic statistical properties in their parameter values, including specific distributions of weights, correlations between layers, and predictable patterns in gradient flows. Deviations from these expected patterns can indicate the presence of embedded malicious content.

```python
import numpy as np
import torch
from scipy import stats

def analyze_weight_statistics(model):
    """Analyze statistical properties of model weights for anomalies"""
    all_weights = []
    layer_stats = {}

    for name, param in model.named_parameters():
        if 'weight' in name:
            weights = param.data.cpu().numpy().flatten()
            all_weights.append(weights)

            # Calculate layer-specific statistics
            layer_stats[name] = {
                'mean': np.mean(weights),
                'std': np.std(weights),
                'skewness': stats.skew(weights),
                'kurtosis': stats.kurtosis(weights)
            }

    # Overall distribution analysis
    all_weights = np.concatenate(all_weights)
    overall_stats = {
        'mean': np.mean(all_weights),
        'std': np.std(all_weights),
        'entropy': stats.entropy(np.histogram(all_weights, bins=100)[0])
    }
    return layer_stats, overall_stats

# Analyze a suspicious model.
# Loading a full pickled model can execute code embedded in the file,
# so run this only inside an isolated environment.
suspicious_model = torch.load('suspected_backdoor.pth', weights_only=False)
layer_analysis, overall_analysis = analyze_weight_statistics(suspicious_model)
print(f"Overall entropy: {overall_analysis['entropy']}")
```
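One hedged way to act on per-layer statistics like those above is to look for layers that stand out from the rest of the same model. The sketch below uses a robust z-score based on the median and the median absolute deviation. The function name `flag_outlier_layers`, the default metric, and the 3.5 cutoff are illustrative assumptions rather than an established detection standard, and a flagged layer is only a prompt for closer inspection, since clean models can also contain atypical layers.

```python
import numpy as np

def flag_outlier_layers(layer_stats, metric="kurtosis", cutoff=3.5):
    """Return names of layers whose `metric` is a robust outlier.

    layer_stats: dict mapping layer name -> dict of statistics
    cutoff: robust z-score threshold (3.5 is a common rule of thumb, assumed here)
    """
    names = list(layer_stats)
    values = np.array([layer_stats[n][metric] for n in names], dtype=np.float64)
    median = np.median(values)
    mad = np.median(np.abs(values - median))
    if mad == 0:
        return []  # No spread to measure against, so nothing can be flagged
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score
    robust_z = 0.6745 * (values - median) / mad
    return [n for n, z in zip(names, robust_z) if abs(z) > cutoff]
```

The `layer_stats` argument has the same layout as the first value returned by `analyze_weight_statistics`, so that result could be passed in directly.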

Behavioral analysis focuses on monitoring how models respond to various inputs and identifying unusual patterns that might indicate hidden functionalities. This approach involves feeding models carefully crafted test cases, observing their decision-making processes, and looking for inconsistencies between expected and actual behaviors. Behavioral monitoring can be particularly effective at detecting trigger-responsive backdoors.
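A minimal sketch of one such behavioral test follows: stamp a candidate trigger patch onto inputs believed to be benign and measure how often the prediction changes, and whether the changed predictions collapse onto a single class. Everything here is an assumption for illustration, including the function name `trigger_flip_rate`, the `(N, C, H, W)` array layout, and the idea that the tester already has a candidate patch to try. It is framework-agnostic: `predict_fn` is any callable that maps a batch to integer labels.

```python
import numpy as np

def trigger_flip_rate(predict_fn, clean_inputs, patch, top=0, left=0):
    """Stamp `patch` onto each input and measure how predictions change.

    predict_fn: callable mapping an array (N, C, H, W) to integer labels (N,)
    clean_inputs: array (N, C, H, W) of inputs believed to be benign
    patch: array (C, h, w) holding the candidate trigger pattern
    Returns (flip_rate, dominant_class, dominant_share).
    """
    stamped = clean_inputs.copy()
    _, h, w = patch.shape
    stamped[:, :, top:top + h, left:left + w] = patch  # Overwrite a small region

    before = np.asarray(predict_fn(clean_inputs))
    after = np.asarray(predict_fn(stamped))
    flipped = before != after
    flip_rate = float(flipped.mean())
    if not flipped.any():
        return flip_rate, None, 0.0
    # A backdoor tends to send most flipped inputs to one target class
    classes, counts = np.unique(after[flipped], return_counts=True)
    best = int(np.argmax(counts))
    return flip_rate, int(classes[best]), float(counts[best] / flipped.sum())
```

A high flip rate combined with a dominant share near 1.0 is the pattern worth investigating. A high flip rate spread across many classes more likely means the patch simply damages the input.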

Transformer-based detection models have emerged as powerful tools for identifying AI steganography by analyzing the structural and operational characteristics of neural networks. These models can process large amounts of model metadata, identify subtle patterns invisible to traditional analysis methods, and adapt to new steganographic techniques as they emerge.

Differential analysis compares suspected models against known legitimate counterparts to identify discrepancies that might indicate tampering. This approach requires access to baseline models or clean versions of the same architecture but can be highly effective at pinpointing specific modifications introduced by attackers.
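When a trusted baseline is available, the comparison can be as simple as a layer-by-layer diff of the parameters. The sketch below is one possible shape for it, operating on plain dictionaries of NumPy arrays. The function name `diff_parameters` and the `rel_tol` default are assumptions chosen for illustration.

```python
import numpy as np

def diff_parameters(baseline, suspect, rel_tol=1e-6):
    """Compare two parameter dicts (name -> numpy array) layer by layer.

    Returns a list of (name, issue, relative_l2_change) for layers that differ.
    """
    findings = []
    for name in sorted(set(baseline) | set(suspect)):
        if name not in baseline or name not in suspect:
            findings.append((name, "missing in one model", None))
            continue
        a = np.asarray(baseline[name], dtype=np.float64)
        b = np.asarray(suspect[name], dtype=np.float64)
        if a.shape != b.shape:
            findings.append((name, "shape mismatch", None))
            continue
        # Relative L2 change; the small constant avoids division by zero
        rel = float(np.linalg.norm(b - a) / (np.linalg.norm(a) + 1e-12))
        if rel > rel_tol:
            findings.append((name, "values changed", rel))
    return findings
```

With PyTorch, each dictionary could be built from a loaded model with `{k: v.detach().cpu().numpy() for k, v in model.state_dict().items()}`. Legitimate fine-tuning also changes weights, so the diff localizes modifications but does not classify them as malicious.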

Dynamic analysis techniques monitor model execution in real-time, tracking memory usage, computation patterns, and communication behaviors that might reveal hidden activities. These methods are particularly useful for detecting temporal embedding techniques and runtime-triggered malicious behaviors.

Ensemble detection approaches combine multiple analytical techniques to improve overall detection accuracy and reduce false positive rates. By integrating statistical analysis, behavioral monitoring, transformer-based classification, and differential comparison, ensemble systems can provide more robust protection against sophisticated steganographic attacks.
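As a rough sketch of the idea, the snippet below combines per-detector suspicion scores with a weighted average and a single threshold. The detector names, weights, and the 0.5 threshold are hypothetical. Real ensembles may instead use voting or a trained meta-classifier, which this example does not attempt.

```python
def ensemble_score(scores, weights=None, threshold=0.5):
    """Combine per-detector suspicion scores in [0, 1] by weighted average.

    scores: dict mapping detector name -> score (higher means more suspicious)
    weights: optional dict of detector name -> non-negative weight (default 1.0)
    Returns (combined_score, is_flagged).
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    weights = weights or {}
    total_weight = sum(weights.get(name, 1.0) for name in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted_sum = sum(s * weights.get(name, 1.0) for name, s in scores.items())
    combined = weighted_sum / total_weight
    return combined, combined >= threshold

# Hypothetical scores from three detectors, with behavioral testing weighted double
combined, flagged = ensemble_score(
    {"statistical": 0.2, "behavioral": 0.9, "transformer": 0.7},
    weights={"behavioral": 2.0},
)
```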

How Do Supply Chain Attacks Leverage Compromised ML Models With Hidden Backdoors?

Supply chain attacks targeting machine learning models represent one of the most insidious threats in modern cybersecurity. These attacks exploit the trust relationships between organizations and their technology suppliers, embedding malicious backdoors within seemingly legitimate models that are then distributed to unsuspecting victims. The sophistication of these attacks lies in their ability to maintain complete functionality while harboring devastating hidden capabilities.

Model repositories and package managers serve as common attack vectors for distributing compromised ML models. Attackers might gain access to legitimate repositories, submit malicious updates to existing models, or create convincing imitations of popular frameworks that contain embedded backdoors. Once deployed, these models can compromise entire organizations while appearing completely legitimate to standard security checks.

```bash
# Example of checking model integrity using cryptographic verification
sha256sum downloaded_model.pth
# Compare against official hash published by model provider

# Using mr7.ai's model verification service
curl -X POST https://api.mr7.ai/verify-model \
  -H "Authorization: Bearer YOUR_API_KEY" \
  -F "model_file=@downloaded_model.pth" \
  -F "expected_hash=e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855"
```
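The same integrity check can be done locally with the Python standard library. This is a minimal sketch, with `sha256_of_file` and `matches_published_hash` as illustrative helper names. It hashes the file in chunks so that multi-gigabyte models do not need to fit in memory.

```python
import hashlib
import hmac

def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def matches_published_hash(path, expected_hex):
    """Return True if the file's SHA-256 equals the published hex digest."""
    actual = sha256_of_file(path)
    # compare_digest avoids leaking the mismatch position through timing
    return hmac.compare_digest(actual, expected_hex.strip().lower())
```

Note that the `expected_hash` value in the command above is the SHA-256 of empty input, so it serves only as a placeholder. A matching hash shows that the file is the one the provider published. It says nothing about whether the published model itself is clean.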

Pre-trained model marketplaces present particularly attractive targets due to their widespread adoption and the trust organizations place in popular models. Attackers can compromise widely-used architectures, ensuring maximum impact while minimizing suspicion. The integration of these models into production systems means that backdoors can affect critical applications ranging from autonomous vehicles to medical diagnosis systems.

Third-party model integration pipelines offer additional opportunities for supply chain compromise. Organizations often incorporate external models into their workflows without thorough security vetting, creating entry points for attackers to introduce malicious code. These integrations frequently occur at deep levels within organizational infrastructure, making detection and remediation extremely challenging.
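One concrete vetting step for externally sourced models is to inspect pickle-based model files before loading them, since unpickling can run arbitrary code. The sketch below parses a pickle stream with the standard library's `pickletools` and lists imports from modules that rarely belong in a model file. The `SUSPICIOUS_MODULES` list and the function name are assumptions. The handling of `STACK_GLOBAL` is a heuristic that can miss memoized strings, so this is a triage aid and not a complete scanner.

```python
import pickletools

# Modules whose presence in a model pickle deserves a closer look (assumed list)
SUSPICIOUS_MODULES = {"os", "posix", "nt", "subprocess", "builtins", "sys", "socket"}

def find_suspicious_imports(pickle_bytes):
    """List (module, name) pairs referenced by a pickle stream that come from
    SUSPICIOUS_MODULES. The stream is only parsed, never unpickled."""
    hits = []
    recent_strings = []  # String arguments seen so far, used by STACK_GLOBAL
    for opcode, arg, _pos in pickletools.genops(pickle_bytes):
        if opcode.name == "GLOBAL":
            # Older protocols store "module name" as one space-separated argument
            module, _, name = arg.partition(" ")
        elif opcode.name == "STACK_GLOBAL" and len(recent_strings) >= 2:
            # Newer protocols push module and name as the two preceding strings
            module, name = recent_strings[-2], recent_strings[-1]
        else:
            if isinstance(arg, str):
                recent_strings.append(arg)
            continue
        if module.split(".")[0] in SUSPICIOUS_MODULES:
            hits.append((module, name))
    return hits
```

Recent PyTorch checkpoints are zip archives, so the pickle member would need to be extracted before passing its bytes to this function. Some legitimate files reference `builtins`, so a hit calls for review, not automatic rejection.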

Continuous integration and deployment systems that automatically pull and deploy ML models represent high-value targets for attackers seeking persistent access. By compromising these automated pipelines, attackers can ensure that their backdoored models reach production environments consistently while maintaining plausible deniability about the source of the compromise.

Dependency injection attacks leverage the complex web of libraries and frameworks underlying modern ML development. Attackers might compromise foundational packages that are used across multiple models, creating widespread backdoor opportunities that affect numerous downstream applications. These attacks are particularly dangerous because they can impact entire ecosystems of related technologies.

Monitoring and detection systems for supply chain attacks require comprehensive visibility into model provenance, integrity verification mechanisms, and behavioral anomaly detection. Organizations must implement multi-layered defenses that can identify suspicious models before deployment while continuously monitoring operational models for signs of compromise.

How Do Transformer-Based Detection Models Compare Against Traditional Steganalysis?

The emergence of transformer-based detection models represents a fundamental shift in AI steganography defense strategies. These advanced systems leverage the same architectural innovations that power state-of-the-art natural language processing and computer vision models to identify subtle anomalies in neural networks that traditional steganalysis methods cannot detect.

Traditional steganalysis techniques primarily rely on statistical analysis of file formats, frequency domain examination, and perceptual quality assessment. These methods excel at identifying classical steganographic techniques that manipulate pixel values, audio samples, or document structures. However, they fall short when confronting AI steganography that operates at the level of mathematical parameters and computational processes.

Transformer architectures offer several advantages for AI steganography detection. Their self-attention mechanisms can relate parameters across an entire model, identifying patterns that span multiple layers and components. Because transformers process their inputs in parallel, they can analyze summaries of large models quickly, which makes frequent or near-real-time scanning of production deployments more practical.

| Detection Approach | Accuracy | False Positive Rate | Processing Speed | Scalability |
|---|---|---|---|---|
| Traditional Steganalysis | 65-75% | 15-25% | Moderate | Limited |
| Statistical Weight Analysis | 70-80% | 10-20% | Fast | Good |
| Transformer-Based Detection | 85-95% | 2-8% | High | Excellent |
| Ensemble Methods | 90-98% | 1-5% | Variable | Excellent |

Deep learning-based detection systems can process multiple modalities of model information simultaneously, including weight matrices, activation patterns, gradient flows, and operational metadata. This multi-faceted approach enables more comprehensive analysis than single-domain techniques while reducing the likelihood of false positives through cross-validation.

```python
import torch
import torch.nn as nn

class SteganographyDetector(nn.Module):
    def __init__(self, model_dim=768, num_heads=12, num_layers=6):
        super().__init__()
        self.embedding = nn.Linear(model_dim, model_dim)
        encoder_layer = nn.TransformerEncoderLayer(
            d_model=model_dim,
            nhead=num_heads,
            batch_first=True
        )
        self.transformer = nn.TransformerEncoder(encoder_layer, num_layers)
        self.classifier = nn.Linear(model_dim, 2)  # Clean vs Backdoored

    def forward(self, model_features):
        # Process model features through transformer
        embedded = self.embedding(model_features)
        transformed = self.transformer(embedded)
        # Global average pooling
        pooled = transformed.mean(dim=1)
        return self.classifier(pooled)

# Example usage for detecting backdoored models.
# extract_model_features is not defined in this article; it is assumed to return
# a float tensor of shape (batch, sequence_length, model_dim).
# The detector must be trained on labeled models before its output is meaningful.
detector = SteganographyDetector()
detector.eval()  # Disable dropout for inference
suspicious_model_features = extract_model_features('suspected_model.pth')
with torch.no_grad():
    prediction = detector(suspicious_model_features)
confidence = torch.softmax(prediction, dim=1)
print(f"Backdoor probability: {confidence[0][1].item():.4f}")
```
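The usage example above calls `extract_model_features` without defining it. One possible stand-in, sketched here under clearly stated assumptions, summarizes each parameter tensor as a short statistics vector and zero-pads it to `model_dim`, giving one "token" per layer. The function name `layer_feature_tokens` and the choice of seven statistics are hypothetical. The article does not specify how a real detector would featurize a model.

```python
import numpy as np

def layer_feature_tokens(params, model_dim=768):
    """Turn a parameter dict (name -> numpy array) into an array of shape
    (1, num_layers, model_dim): one zero-padded summary vector per layer.
    Assumes at least one non-empty parameter array is present."""
    tokens = []
    for name in sorted(params):
        w = np.asarray(params[name], dtype=np.float64).ravel()
        if w.size == 0:
            continue
        centered = w - w.mean()
        std = w.std()
        # Standardized third and fourth moments (0 when the layer is constant)
        skew = float((centered ** 3).mean() / std ** 3) if std > 0 else 0.0
        kurt = float((centered ** 4).mean() / std ** 4 - 3.0) if std > 0 else 0.0
        stats = [w.mean(), std, w.min(), w.max(), skew, kurt, np.log10(w.size)]
        token = np.zeros(model_dim, dtype=np.float32)
        token[:len(stats)] = stats
        tokens.append(token)
    return np.stack(tokens)[np.newaxis, ...]
```

The result is a float32 array, so `torch.from_numpy(...)` would yield a tensor in the `(batch, sequence, model_dim)` layout that a `batch_first=True` encoder expects.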

Transfer learning capabilities allow transformer-based detectors to leverage pre-trained knowledge about normal model characteristics while adapting to specific detection tasks. This approach reduces training requirements and improves generalization across different model architectures and steganographic techniques.

Attention visualization provides interpretable insights into detection decisions, showing which parts of a model contribute most to backdoor identification. This transparency helps security analysts understand why specific models are flagged and validate detection results through manual inspection.

Real-time monitoring capabilities enable continuous assessment of deployed models, identifying when previously clean models become compromised or when new backdoors are activated. This dynamic approach is essential for protecting production systems against evolving threats.

What Metrics Should Security Teams Use to Evaluate Detection Effectiveness?

Evaluating the effectiveness of AI steganography detection systems requires careful consideration of multiple performance metrics that reflect both technical capabilities and operational requirements. Traditional accuracy measurements alone prove insufficient for capturing the nuanced challenges of detecting sophisticated embedded threats within complex neural networks.

Detection accuracy represents the fundamental measure of correct identification rates, encompassing both true positive rates for backdoored models and true negative rates for legitimate models. However, raw accuracy figures can be misleading without context about the difficulty of the detection task and the prevalence of backdoored models in real-world scenarios.

False positive rate measurement becomes critically important because incorrectly flagging legitimate models can disrupt business operations, waste analyst time, and erode trust in detection systems. High false positive rates can lead to alert fatigue and reduced effectiveness of security operations centers that must triage numerous false alarms.

```python
import numpy as np
from sklearn.metrics import roc_auc_score

def evaluate_detection_performance(y_true, y_pred, y_scores=None):
    """Comprehensive evaluation of detection system performance"""
    # Basic metrics
    tp = np.sum((y_true == 1) & (y_pred == 1))
    fp = np.sum((y_true == 0) & (y_pred == 1))
    tn = np.sum((y_true == 0) & (y_pred == 0))
    fn = np.sum((y_true == 1) & (y_pred == 0))

    # Core metrics
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0
    recall = tp / (tp + fn) if (tp + fn) > 0 else 0
    specificity = tn / (tn + fp) if (tn + fp) > 0 else 0
    f1_score = 2 * (precision * recall) / (precision + recall) if (precision + recall) > 0 else 0

    # ROC-AUC if scores provided
    auc_score = roc_auc_score(y_true, y_scores) if y_scores is not None else None

    return {
        'precision': precision,
        'recall': recall,
        'specificity': specificity,
        'f1_score': f1_score,
        'auc_roc': auc_score,
        'false_positive_rate': fp / (fp + tn) if (fp + tn) > 0 else 0,
        'false_negative_rate': fn / (fn + tp) if (fn + tp) > 0 else 0
    }

# Example evaluation
true_labels = np.array([1, 0, 1, 1, 0, 0, 1, 0])
predictions = np.array([1, 0, 0, 1, 1, 0, 1, 0])
scores = np.array([0.9, 0.1, 0.3, 0.8, 0.7, 0.2, 0.95, 0.05])

results = evaluate_detection_performance(true_labels, predictions, scores)
for metric, value in results.items():
    print(f"{metric}: {value:.4f}")
```
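The earlier point about prevalence can be made concrete with Bayes' rule: the share of alerts that are real depends on how common backdoored models are, not only on recall and false positive rate. The inputs below are illustrative assumptions, not measurements of any particular tool.

```python
def positive_predictive_value(recall, false_positive_rate, prevalence):
    """Probability that a flagged model is truly backdoored (Bayes' rule)."""
    true_alerts = recall * prevalence
    false_alerts = false_positive_rate * (1.0 - prevalence)
    if true_alerts + false_alerts == 0:
        return 0.0
    return true_alerts / (true_alerts + false_alerts)

# Illustrative inputs (assumed, not measured): 90% recall, 5% false positive rate
for prevalence in (0.5, 0.01, 0.001):
    ppv = positive_predictive_value(0.90, 0.05, prevalence)
    print(f"prevalence={prevalence:.3f} -> PPV={ppv:.3f}")
```

With these assumed inputs, a balanced test set gives a precision of about 0.95, but if only 1% of scanned models are backdoored, roughly 15% of alerts are genuine. That gap is why benchmark accuracy alone can overstate operational usefulness.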

Processing speed and computational efficiency determine whether detection systems can operate effectively in production environments with strict latency requirements. Real-time monitoring applications demand fast analysis capabilities that don't impede normal model operations while maintaining sufficient accuracy for reliable threat detection.

Scalability measurements assess how well detection systems perform when analyzing large numbers of models or very complex architectures. Enterprise deployments may need to process thousands of models simultaneously, requiring efficient resource utilization and robust performance under load.

Robustness against evasion attempts evaluates how well detection systems maintain performance when faced with adaptive attackers who modify their steganographic techniques to avoid detection. This metric reflects the arms race nature of AI security where attackers continuously evolve their methods to circumvent defensive measures.

Interpretability and explainability metrics measure how well detection systems can provide meaningful insights into their decision-making processes. Security analysts need to understand why specific models are flagged as suspicious to make informed decisions about further investigation and remediation actions.

Operational impact assessment considers the broader effects of detection system deployment on organizational workflows, incident response procedures, and overall security posture. Effective systems should enhance security without creating excessive operational burden or disrupting legitimate business activities.

How Can mr7 Agent Automate AI Steganography Detection and Analysis?

mr7 Agent represents a cutting-edge solution for automating AI steganography detection and analysis directly on security professionals' local devices. This advanced platform combines specialized AI models with automated penetration testing capabilities to provide comprehensive protection against sophisticated embedded threats in machine learning systems.

Local processing capabilities ensure that sensitive model data never leaves the organization's infrastructure while maintaining access to powerful AI analysis tools. mr7 Agent can automatically scan deployed models, compare them against known clean baselines, and identify suspicious parameter modifications that might indicate backdoor insertion or other forms of compromise.

Automated workflow orchestration streamlines the detection process by integrating multiple analysis techniques into cohesive examination pipelines. Security teams can configure mr7 Agent to perform regular scans of model repositories, flag suspicious findings for review, and generate detailed reports documenting potential threats and recommended remediation steps.

```yaml
# Example mr7 Agent configuration for AI steganography detection
analysis_pipeline:
  name: "ML Model Backdoor Scanner"
  schedule: "daily"
  targets:
    - path: "/models/production/*.pth"
      type: "pytorch"
    - path: "/models/research/*.h5"
      type: "tensorflow"

  stages:
    - name: "Weight Analysis"
      tool: "statistical_analyzer"
      parameters:
        threshold: 0.05
        metrics: ["entropy", "skewness", "kurtosis"]

    - name: "Behavioral Testing"
      tool: "behavioral_monitor"
      parameters:
        test_cases: 1000
        trigger_patterns: ["watermark", "corner_pattern", "invisible"]

    - name: "Transformer Detection"
      tool: "transformer_detector"
      parameters:
        model_path: "/opt/mr7/models/stegano_detector_transformer.pth"

  notifications:
    - severity: "high"
      channels: ["slack", "email"]
      recipients: ["[email protected]"]
```

Integration with existing security toolchains allows mr7 Agent to complement current defensive measures while providing specialized capabilities for AI-specific threats. The platform can export findings to SIEM systems, ticketing platforms, and incident response workflows, ensuring seamless incorporation into established security operations.

Customizable detection rules enable organizations to tailor analysis parameters to their specific risk profiles and operational requirements. Security teams can adjust sensitivity thresholds, define custom trigger patterns to test, and specify which types of anomalies warrant immediate attention versus routine monitoring.

Performance optimization features ensure that automated analysis doesn't impact normal business operations while maintaining comprehensive coverage of potential threats. mr7 Agent can prioritize scanning based on model criticality, usage patterns, and historical risk factors to focus resources where they're most needed.

Continuous learning capabilities allow mr7 Agent to adapt to new steganographic techniques and evolving threat landscapes. The platform can incorporate feedback from security analysts, update detection models based on newly discovered attack patterns, and refine analysis approaches based on organizational experience with actual incidents.

Collaborative analysis features enable multiple team members to work together on complex investigations, share findings, and coordinate response efforts. mr7 Agent supports role-based access controls, audit logging, and collaborative documentation to facilitate effective teamwork in addressing AI security challenges.

Key Takeaways

• AI steganography represents a fundamental shift from traditional file-based concealment to embedding threats directly in neural network parameters and training processes
• Modern detection requires sophisticated statistical analysis, behavioral monitoring, and transformer-based approaches that can identify subtle anomalies invisible to conventional tools
• Supply chain attacks leveraging compromised ML models pose severe risks as they can affect entire organizations while appearing completely legitimate
• Transformer-based detection models significantly outperform traditional steganalysis methods with higher accuracy rates and lower false positive rates
• Comprehensive evaluation metrics must include not just accuracy but also false positive rates, processing speed, scalability, and resistance to evasion attempts
• Automated tools like mr7 Agent can streamline detection workflows while providing local processing capabilities that protect sensitive model data
• Early detection and continuous monitoring are essential for preventing backdoored models from causing damage in production environments

Frequently Asked Questions

Q: What is AI steganography and how does it differ from traditional steganography?

AI steganography involves embedding malicious payloads directly within neural network weights, architectures, and training processes rather than in traditional media files like images or audio. Unlike conventional methods that hide data in perceptually redundant spaces, AI steganography exploits the mathematical complexity of machine learning models to conceal threats at the parameter level, making detection significantly more challenging for traditional security tools.

Q: How can organizations protect themselves against supply chain attacks involving compromised ML models?

Organizations should implement comprehensive model verification processes including cryptographic integrity checks, behavioral testing against known clean baselines, and continuous monitoring for anomalous operational patterns. Additionally, establishing secure procurement procedures, maintaining detailed model provenance records, and deploying automated detection systems can help identify potentially compromised models before they cause harm.

Q: What are the most effective detection methods for identifying backdoored machine learning models?

The most effective approaches combine statistical analysis of weight distributions, behavioral testing with diverse input samples, transformer-based anomaly detection, and differential comparison against trusted model versions. Ensemble methods that integrate multiple detection techniques typically provide the highest accuracy while minimizing false positive rates, as they can cross-validate findings across different analytical domains.

Q: How accurate are current AI steganography detection tools compared to traditional methods?

Modern transformer-based detection systems achieve 85-95% accuracy with false positive rates of 2-8%, significantly outperforming traditional steganalysis methods that typically achieve 65-75% accuracy with 15-25% false positive rates. The improved performance stems from transformers' ability to analyze complex parameter relationships and identify subtle anomalies that simpler statistical methods miss.

Q: Can mr7 Agent help automate the detection of AI steganography in enterprise environments?

Yes, mr7 Agent provides specialized automation capabilities for detecting AI steganography through local processing of machine learning models, customizable analysis pipelines, and integration with existing security workflows. The platform can automatically scan model repositories, perform comprehensive parameter analysis, conduct behavioral testing, and generate actionable alerts when suspicious patterns are detected, all while keeping sensitive data within organizational boundaries.
